Pdf A Vector Quantized Masked Autoencoder For Audiovisual Speech

In this paper, we propose the VQ-MAE-AV model, a vector-quantized masked autoencoder (MAE) specifically designed for self-supervised representation learning of audiovisual speech. The paper, titled "A Vector Quantized Masked Autoencoder for Audiovisual Speech Emotion Recognition," is by Samir Sadok and two other authors.
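
"Vector quantized" means that continuous features are replaced by discrete tokens, each one an index into a codebook. The text above does not say how VQ-MAE-AV builds its tokens. The sketch below therefore assumes the simplest form of vector quantization: a nearest-neighbour lookup under squared Euclidean distance. The helper `quantize` and the toy codebook are hypothetical and only illustrate the idea.

```python
import math
from typing import List, Sequence


def quantize(features: Sequence[Sequence[float]],
             codebook: Sequence[Sequence[float]]) -> List[int]:
    """Map each feature vector to the index of its nearest codebook entry.

    Hypothetical helper: squared Euclidean distance, ties go to the lowest index.
    """
    tokens = []
    for x in features:
        best_idx, best_dist = 0, math.inf
        for k, c in enumerate(codebook):
            dist = sum((xi - ci) ** 2 for xi, ci in zip(x, c))
            if dist < best_dist:  # strict "<" keeps the lowest index on ties
                best_idx, best_dist = k, dist
        tokens.append(best_idx)
    return tokens


# Toy 2-D codebook with three entries (illustrative values only).
codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 2.0]]
frames = [[0.1, -0.2], [0.9, 1.2], [-0.8, 1.7]]
print(quantize(frames, codebook))  # [0, 1, 2]
```

The resulting integer sequence is what a masked autoencoder would operate on in place of raw feature vectors.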

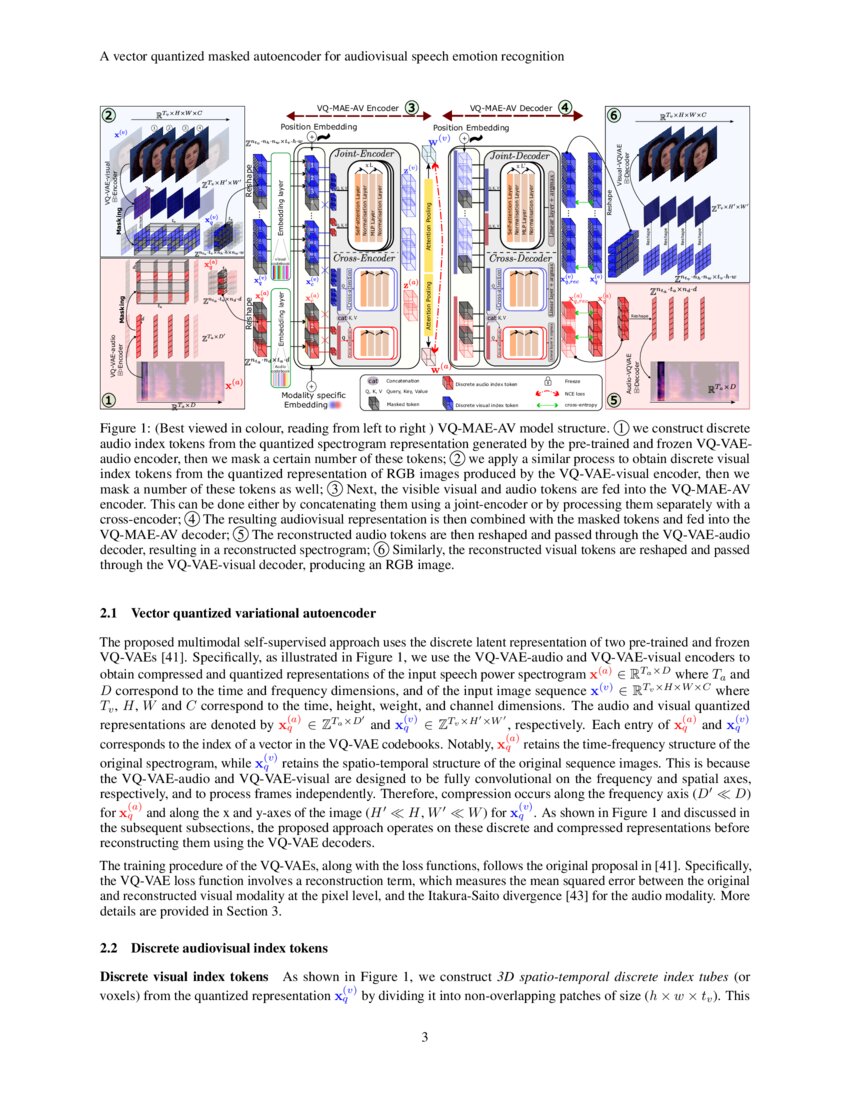

Figure 1 from A Vector Quantized Masked Autoencoder for Audiovisual Speech Emotion Recognition

Self-supervised learning approaches such as masked autoencoders (MAEs) have gained popularity because they can learn speech representations without labels. We propose the VQ-MAE-AV model, a vector-quantized (VQ) masked autoencoder designed for audiovisual (AV) speech representation learning and applied to emotion recognition. It is a self-supervised multimodal model that uses masked autoencoding to learn representations of audiovisual speech from unlabeled data. During self-supervised pre-training, VQ-MAE-AV is trained on a large-scale unlabeled dataset of audiovisual speech. The training task is to reconstruct randomly masked audiovisual speech tokens, and it is combined with a contrastive learning strategy.
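
The pre-training recipe above has two ingredients: random masking of the token sequence, and a contrastive objective. The sketch below is a minimal version that makes several assumptions:

- masking is uniform at random with a hypothetical ratio;
- masked positions receive a hypothetical reserved id `MASK_ID`;
- the contrastive term is an InfoNCE-style loss over cosine similarities between paired audio and visual embeddings.

The text says only "a contrastive learning strategy," so InfoNCE is a common substitute here, not necessarily the authors' loss. The sketch also does not show how the two objectives are weighted or combined.

```python
import math
import random
from typing import List, Sequence, Tuple

MASK_ID = -1  # hypothetical reserved id for masked positions


def mask_tokens(tokens: Sequence[int], ratio: float,
                rng: random.Random) -> Tuple[List[int], List[int]]:
    """Replace a random subset of positions with MASK_ID.

    Returns the masked sequence and the sorted masked positions, i.e. the
    targets a decoder would be asked to reconstruct.
    """
    n_mask = int(round(ratio * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    for p in positions:
        masked[p] = MASK_ID
    return masked, positions


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def info_nce(audio: Sequence[Sequence[float]],
             visual: Sequence[Sequence[float]],
             temperature: float = 0.1) -> float:
    """InfoNCE-style loss: audio[i] should match visual[i], not visual[j != i]."""
    loss = 0.0
    for i, a in enumerate(audio):
        logits = [cosine(a, v) / temperature for v in visual]
        m = max(logits)  # subtract the max for numerical stability
        log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
        loss += log_sum - logits[i]  # negative log-softmax of the true pair
    return loss / len(audio)


rng = random.Random(0)
original = [5, 3, 8, 1, 9, 2, 7, 4]
masked, targets = mask_tokens(original, ratio=0.5, rng=rng)
aligned = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.9, 0.1], [0.1, 0.9]])
shuffled = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.1, 0.9], [0.9, 0.1]])
print(aligned < shuffled)  # True
```

Correctly paired audio and visual embeddings give a much lower loss than mismatched ones. That gap is the signal a contrastive term adds on top of masked-token reconstruction.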

A Vector Quantized Masked Autoencoder for Audiovisual Speech Emotion Recognition

This paper proposes the VQ-MAE-AV model, a vector-quantized masked autoencoder (MAE) designed for self-supervised representation learning of audiovisual speech and applied to speech emotion recognition (SER).

A Vector Quantized Masked Autoencoder for Speech Emotion Recognition (PDF)

In this paper, we propose the vector quantized masked autoencoder for speech (VQ-MAE-S), a self-supervised model that is fine-tuned to recognize emotions from speech signals.
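
Fine-tuning for emotion recognition typically adds a small classifier on top of the pre-trained encoder. The text does not describe the classifier, so the sketch below makes these assumptions:

- the frame-level encoder outputs are mean-pooled into a single utterance vector;
- that vector feeds a linear softmax head trained with cross-entropy by plain SGD;
- the label set `EMOTIONS` is hypothetical;
- `encoder_frames` is a stand-in for real encoder outputs.

```python
import math
from typing import List, Sequence

EMOTIONS = ["neutral", "happy", "sad", "angry"]  # hypothetical label set


def mean_pool(frames: Sequence[Sequence[float]]) -> List[float]:
    """Average frame-level encoder outputs into one utterance-level vector."""
    dim = len(frames[0])
    return [sum(f[d] for f in frames) / len(frames) for d in range(dim)]


def softmax(z: Sequence[float]) -> List[float]:
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


class LinearHead:
    """Linear classifier trained with cross-entropy on pooled features."""

    def __init__(self, dim: int, n_classes: int):
        self.w = [[0.0] * dim for _ in range(n_classes)]
        self.b = [0.0] * n_classes

    def probs(self, x: Sequence[float]) -> List[float]:
        logits = [sum(wi * xi for wi, xi in zip(row, x)) + bk
                  for row, bk in zip(self.w, self.b)]
        return softmax(logits)

    def step(self, x: Sequence[float], label: int, lr: float) -> float:
        """One SGD step; returns the cross-entropy loss before the update."""
        p = self.probs(x)
        loss = -math.log(p[label])
        for k in range(len(self.w)):
            grad = p[k] - (1.0 if k == label else 0.0)  # dLoss/dlogit_k
            self.w[k] = [wi - lr * grad * xi for wi, xi in zip(self.w[k], x)]
            self.b[k] -= lr * grad
        return loss


# Stand-in for encoder outputs of one utterance (3 frames, 2 dims).
encoder_frames = [[0.2, 1.0], [0.4, 0.8], [0.0, 1.2]]
x = mean_pool(encoder_frames)
head = LinearHead(dim=2, n_classes=len(EMOTIONS))
first = head.step(x, label=EMOTIONS.index("happy"), lr=0.5)
second = head.step(x, label=EMOTIONS.index("happy"), lr=0.5)
print(round(first, 4), second < first)  # 1.3863 True
```

With zero-initialized weights, the first loss equals ln 4 (uniform over four classes), and one gradient step lowers it. Whether the encoder itself is frozen or also updated during fine-tuning is left open here.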

Figure 2 from A Vector Quantized Masked Autoencoder for Audiovisual Speech Emotion Recognition
