CVPR 2024: Codebook Transfer with Part of Speech for Vector-Quantized Image Modeling

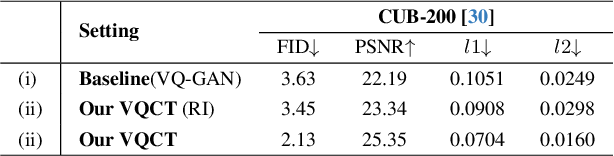

BEiT v2: Masked Image Modeling with Vector-Quantized Visual Tokenizers

To this end, we propose a novel codebook transfer framework with part of speech, called VQCT, which aims to transfer a well-trained codebook from pretrained language models to vector-quantized image modeling (VQIM) for robust codebook learning. In Table 1, we report the details of each codebook (the number of codes and the number of trainable parameters) for VQ-VAE, VQ-GAN, Gumbel-VQ, CVQ, and our VQCT.
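For readers new to the term, the role of a codebook in vector quantization can be illustrated with a minimal sketch. It assumes plain nearest-neighbor lookup under squared Euclidean distance, which is the usual VQ-VAE-style formulation and not necessarily the exact procedure used by VQCT; the function name `quantize` and the toy arrays are illustrative only.

```python
import numpy as np

def quantize(features, codebook):
    """Map each feature vector to its nearest code (squared Euclidean distance)."""
    # Pairwise squared distances, shape (num_features, num_codes).
    diff = features[:, None, :] - codebook[None, :, :]
    dist2 = (diff ** 2).sum(axis=-1)
    indices = dist2.argmin(axis=1)      # discrete token for each feature
    return indices, codebook[indices]   # tokens and their code vectors

# Toy data (hypothetical): 3 codes and 3 encoder features, both of dimension 2.
codebook = np.array([[0.0, 0.0], [1.0, 1.0], [4.0, 0.0]])
features = np.array([[0.1, -0.2], [0.9, 1.2], [3.5, 0.4]])

indices, quantized = quantize(features, codebook)
print(indices)  # [0 1 2]
```

Each image feature is replaced by the index of its closest code, so the quality of the codebook directly bounds the quality of the discrete representation.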

Codebook Transfer with Part of Speech for Vector-Quantized Image Modeling

The codebook information already learned by pretrained language models is rarely exploited in VQIM. This paper therefore proposes VQCT, a codebook transfer framework for vector-quantized image modeling: a pretrained codebook and part-of-speech knowledge are introduced as priors, and a graph convolution codebook transfer network is designed to generate the codebook from them.

A related study proposes a two-stage framework consisting of a residual-quantized VAE (RQ-VAE) and an RQ-Transformer, which effectively generates high-resolution images and outperforms existing autoregressive (AR) models on various benchmarks of unconditional and conditional image generation.
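The idea of generating a codebook from a pretrained one with a graph convolution, mentioned above for VQCT, can be sketched roughly as follows. This is not the authors' implementation: the similarity-threshold graph, the single linear layer, the array sizes, and the names `normalized_adjacency` and `transfer_codebook` are all assumptions made for illustration, and the part-of-speech prior is left out entirely.

```python
import numpy as np

def normalized_adjacency(embeddings, threshold=0.5):
    """Build a symmetric, degree-normalized graph from cosine similarity."""
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    sim = unit @ unit.T
    adj = (sim >= threshold).astype(float)
    adj = np.maximum(adj, adj.T)        # enforce exact symmetry
    np.fill_diagonal(adj, 1.0)          # self-loops keep every degree >= 1
    d_inv_sqrt = 1.0 / np.sqrt(adj.sum(axis=1))
    return adj * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def transfer_codebook(pretrained, weight, adj_norm):
    """One graph-convolution step: mix neighboring codes, then project."""
    return adj_norm @ pretrained @ weight

rng = np.random.default_rng(0)
pretrained = rng.normal(size=(50, 8))    # hypothetical frozen word embeddings
weight = rng.normal(size=(8, 4)) * 0.1   # hypothetical trainable projection

adj_norm = normalized_adjacency(pretrained)
codebook = transfer_codebook(pretrained, weight, adj_norm)
print(codebook.shape)  # (50, 4)
```

In this sketch only `weight` would be trained, so the number of trainable values (32) is independent of the number of codes (50), and every code is updated through the shared projection rather than individually.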

This is a video for the CVPR 2024 paper "Codebook Transfer with Part of Speech for Vector-Quantized Image Modeling".

A related line of work on word embeddings proposes two model architectures for computing continuous vector representations of words from very large data sets; the quality of these representations is measured in a word similarity task.

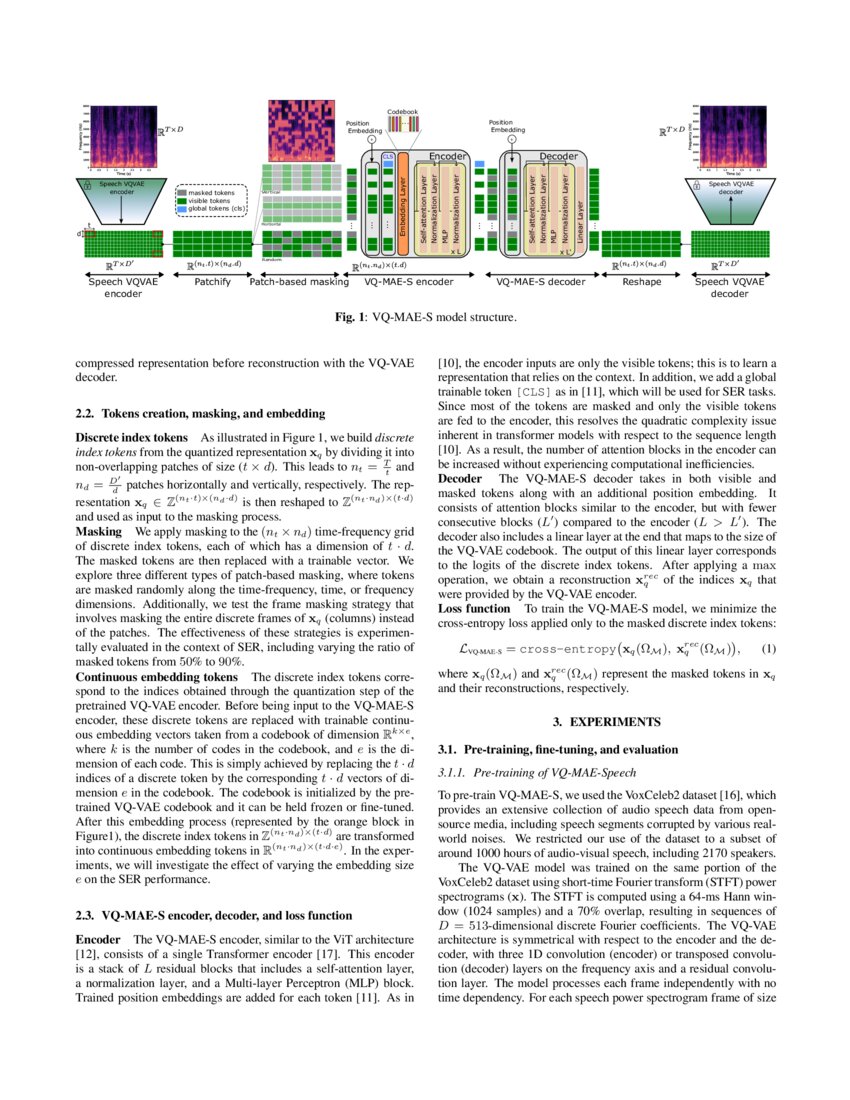


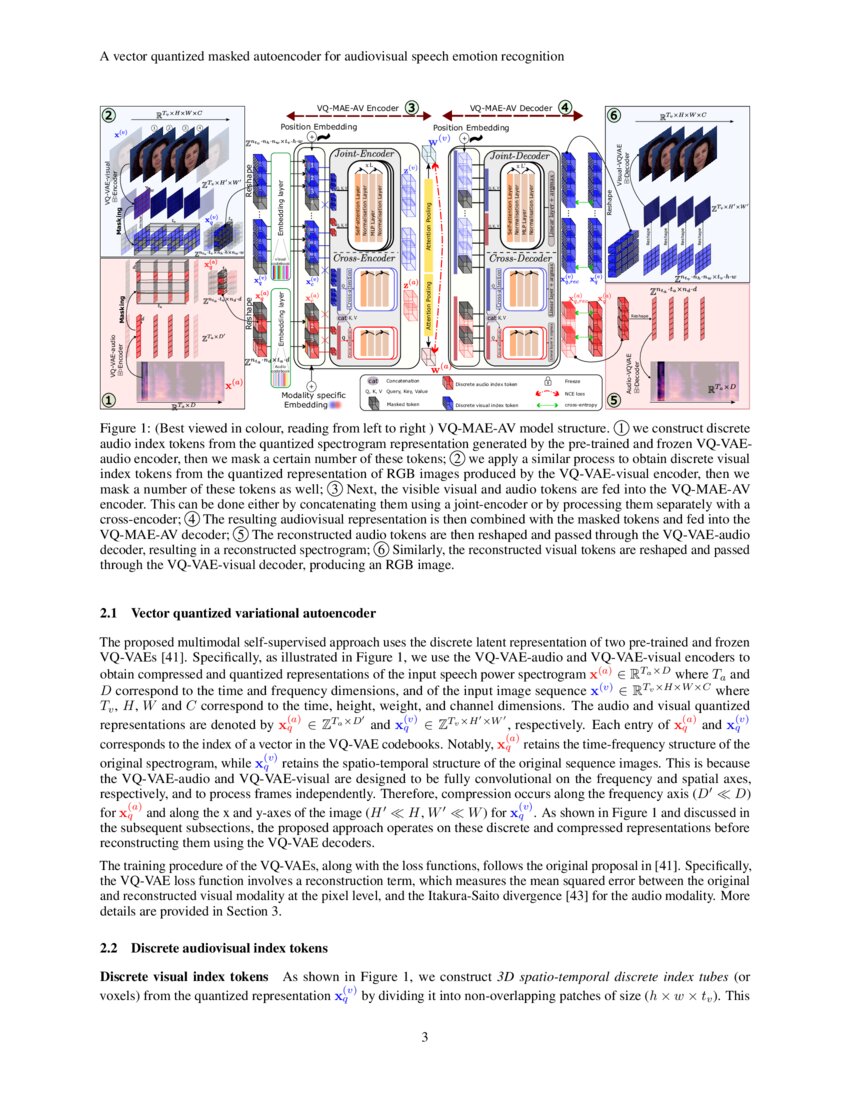

