Masked Autoencoders (MAE) Paper Explained

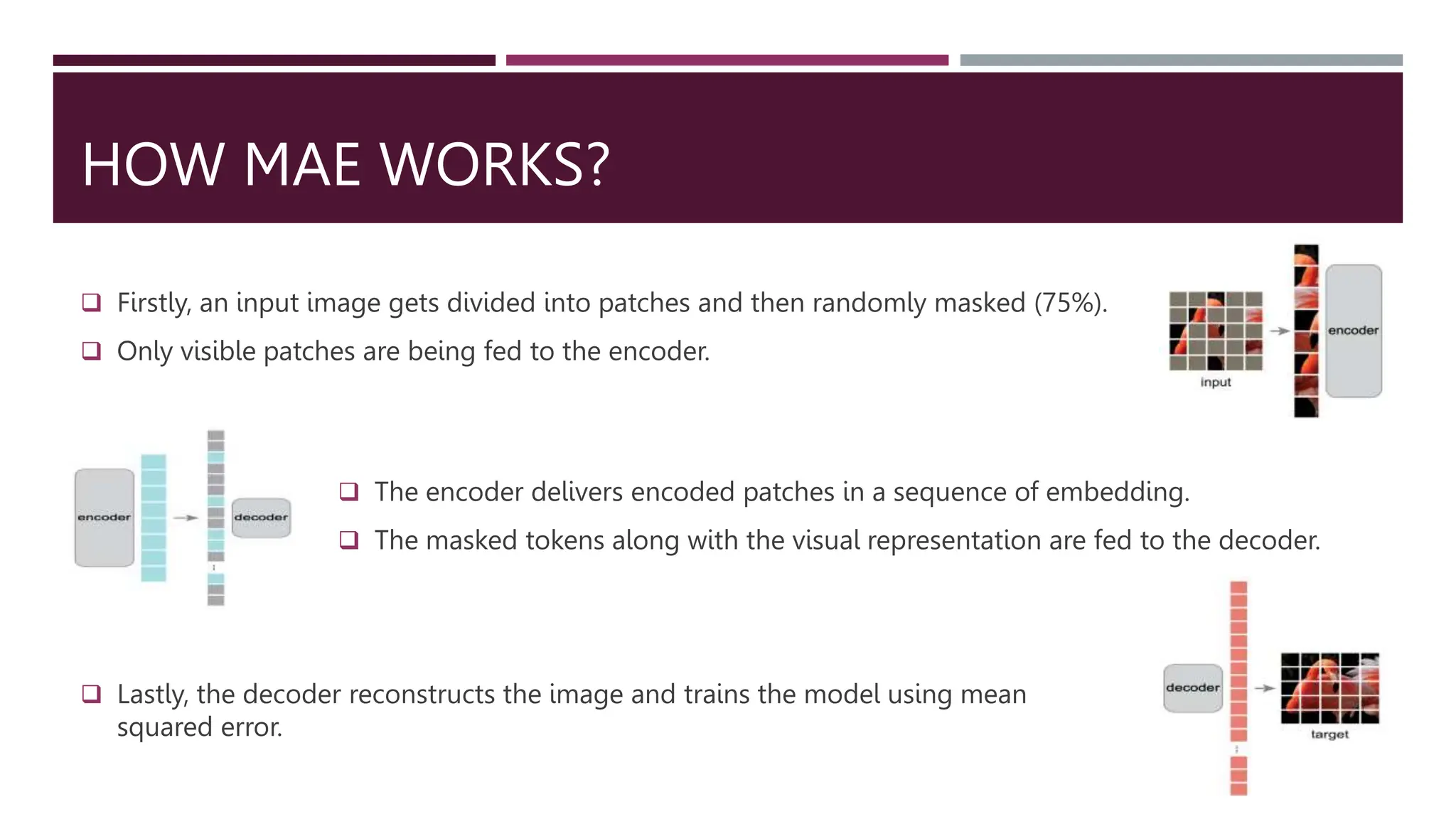

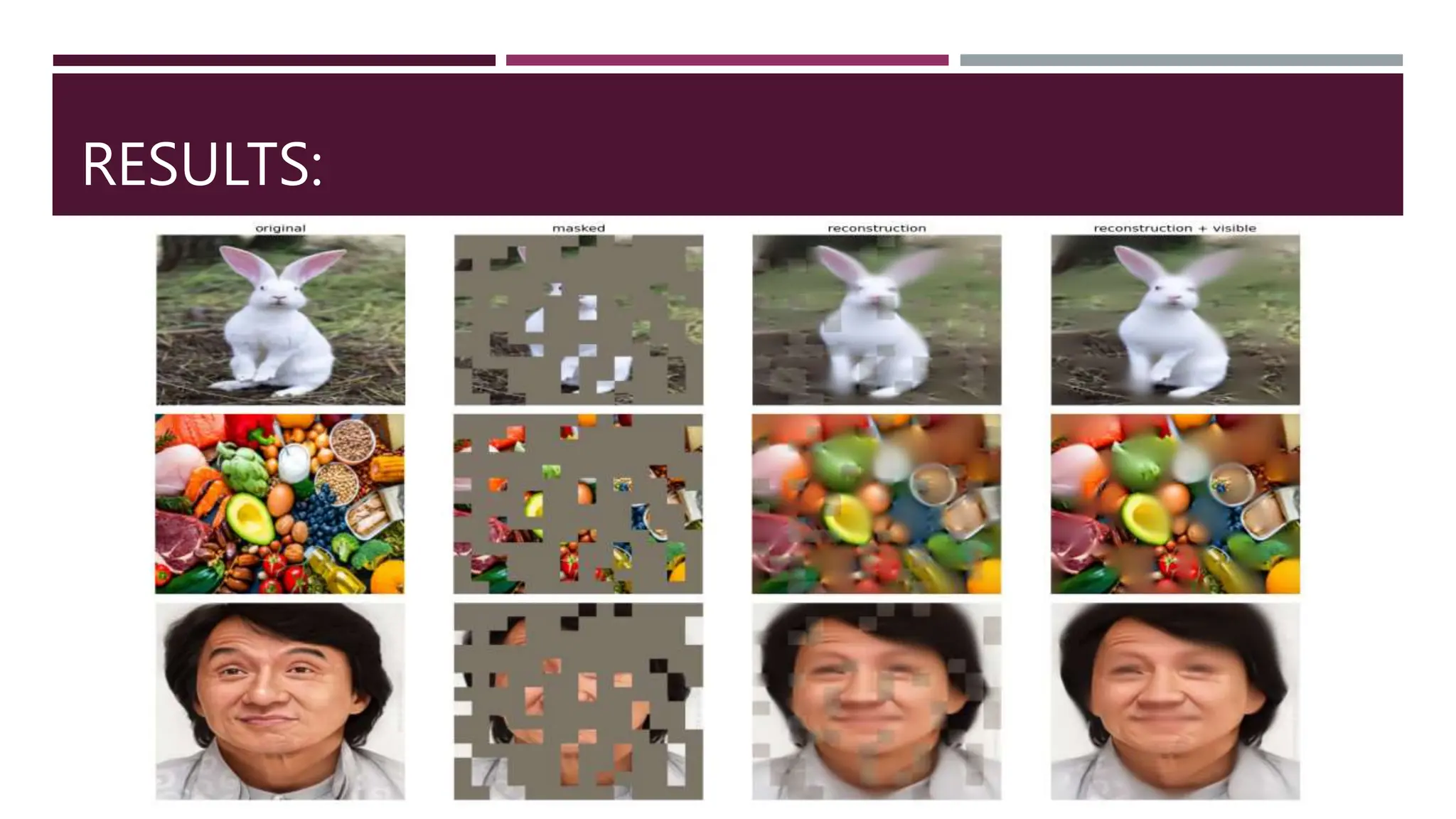

Masked Autoencoder (MAE; He et al., 2022). This paper shows that masked autoencoders (MAE) are scalable self-supervised learners for computer vision. The MAE approach is simple: random patches of the input image are masked, and the missing pixels are reconstructed. It is based on two core designs: an asymmetric encoder-decoder architecture, in which the encoder operates only on the visible patches and a lightweight decoder reconstructs the image from the latent representation and mask tokens, and a high masking ratio (for example 75%), which makes reconstruction a nontrivial self-supervised task.
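The random masking step described above can be sketched in a few lines. This is a minimal illustration rather than the authors' implementation: the function name `random_masking`, the 75% default ratio, and the 196-patch example (a 224x224 image cut into 16x16 patches) are assumptions chosen for the example, and a real training pipeline would typically do this per batch with a tensor library.

```python
import random


def random_masking(num_patches, mask_ratio=0.75, seed=None):
    """Split patch indices into visible and masked sets by uniform random sampling.

    Hypothetical helper for illustration; not taken from the MAE codebase.
    """
    if not 0.0 <= mask_ratio < 1.0:
        raise ValueError("mask_ratio must be in [0, 1)")
    rng = random.Random(seed)
    indices = list(range(num_patches))
    rng.shuffle(indices)  # a random permutation of the patch indices
    num_keep = int(num_patches * (1.0 - mask_ratio))
    visible = sorted(indices[:num_keep])  # patches the encoder would see
    masked = sorted(indices[num_keep:])   # patches to be reconstructed
    return visible, masked


# Assumed setup: 224x224 image, 16x16 patches, giving a 14x14 grid of 196 patches.
visible, masked = random_masking(num_patches=196, mask_ratio=0.75, seed=0)
print(len(visible), len(masked))  # 49 147
```

With a 75% ratio the encoder only processes a quarter of the patches, which is where much of the compute saving in an asymmetric design would come from.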

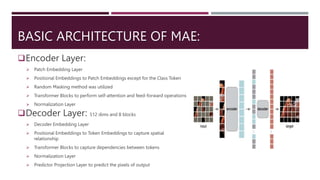

Assignment on Masked Autoencoders (MAE), PPTX. In this article, you have learned about masked autoencoders (MAE), a paper that leverages transformers and autoencoders for self-supervised pre-training and adds another simple but effective concept to the self-supervised pre-training toolbox. MAE masks random patches from the input image and reconstructs the missing patches in pixel space, using an asymmetric encoder-decoder design. Authored by Swapnil Singh, it provides an in-depth look at how MAEs predict masked parts of images to capture essential visual representations, inspired by techniques like BERT in NLP.
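Reconstruction in pixel space can be made concrete with a small sketch of the loss. The helpers `patchify` and `masked_mse` are hypothetical names, and the grayscale list-of-rows image format is an assumption made to keep the example dependency-free. Restricting the mean squared error to the masked patches follows the description in the MAE paper; the model producing the predictions is left out entirely.

```python
def patchify(image, patch):
    """Cut an H x W grayscale image (list of rows) into flattened patches, row-major."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image size must be divisible by the patch size")
    patches = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            patches.append([image[r][c]
                            for r in range(top, top + patch)
                            for c in range(left, left + patch)])
    return patches


def masked_mse(pred, target, masked):
    """Mean squared error over the pixels of the masked patches only."""
    if not masked:
        raise ValueError("at least one masked patch is required")
    total, count = 0.0, 0
    for i in masked:
        for p, t in zip(pred[i], target[i]):
            total += (p - t) ** 2
            count += 1
    return total / count


# Toy 4x4 image split into four 2x2 patches; pixel value = row * 4 + column.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
target = patchify(image, patch=2)
pred = [[0.0] * 4 for _ in target]  # stand-in for a decoder's output
print(masked_mse(pred, target, masked=[1]))  # 24.5
```

Visible patches contribute nothing to this loss, so the model is scored only on content it never saw.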

Unveiling the operation of the masked autoencoder (MAE), which fundamentally learns pattern-based patch-level clustering, we expedite MAE's learning of patch clustering by incorporating informed masks derived from the model itself.

The paper which I am explaining in this article deals with distilling knowledge directly from pre-trained models (here, masked autoencoders) into student models.

Despite the emergence of intriguing empirical observations on MAE, a theoretically principled understanding is still lacking. In this work, we formally characterize and justify existing empirical insights and provide theoretical guarantees for MAE.

