14 VQ-VAE: Vector Quantized Variational Autoencoder

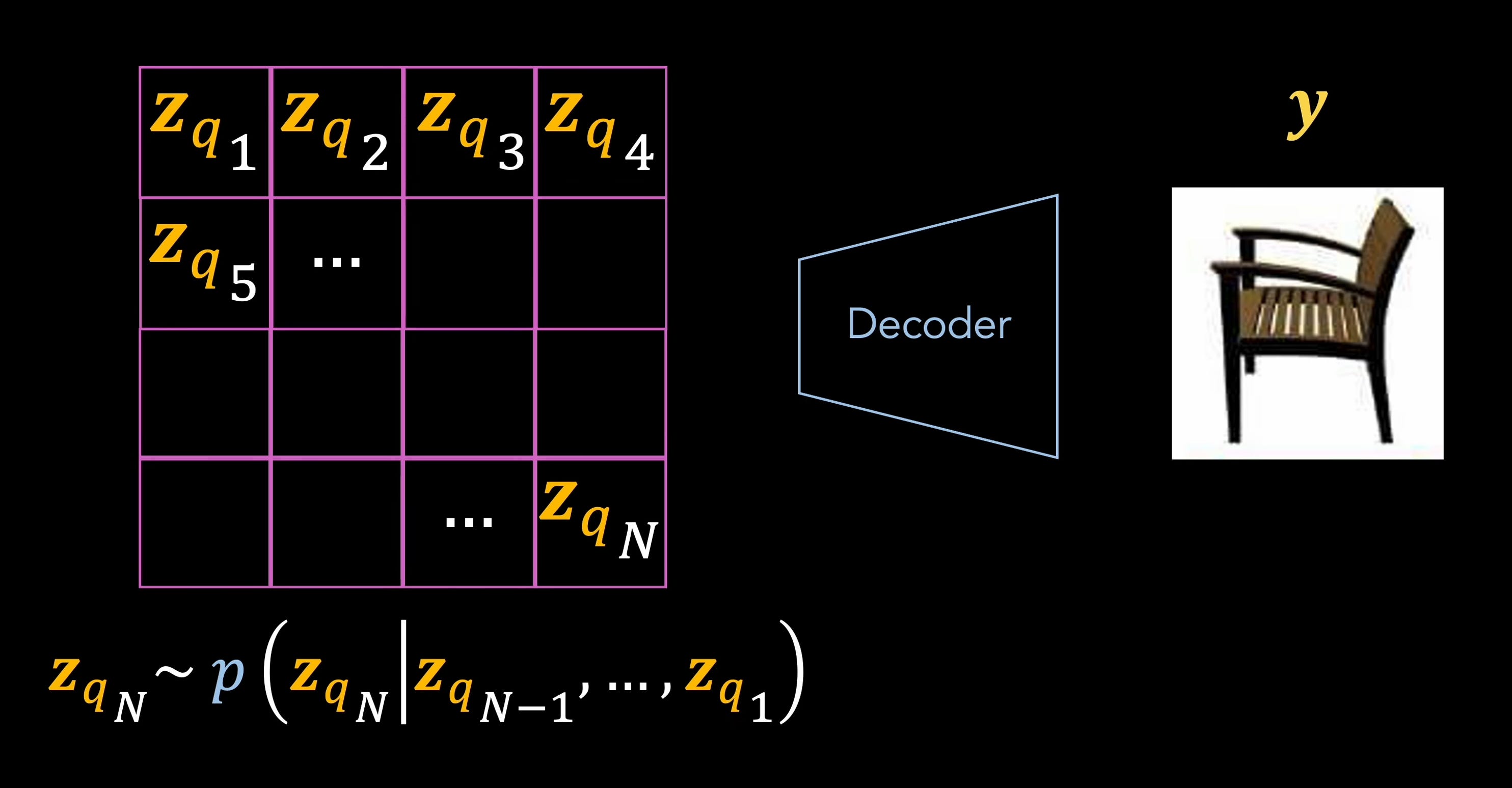

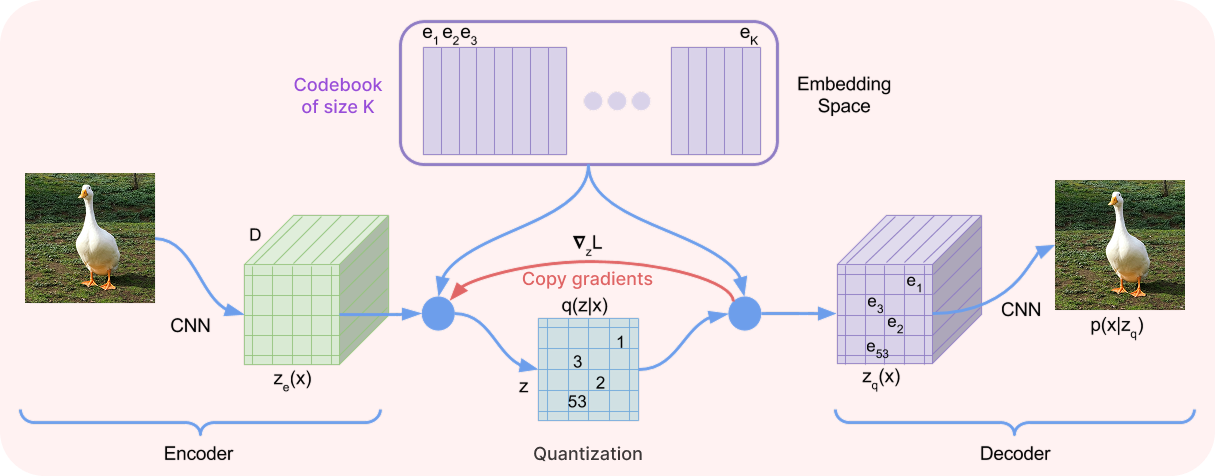

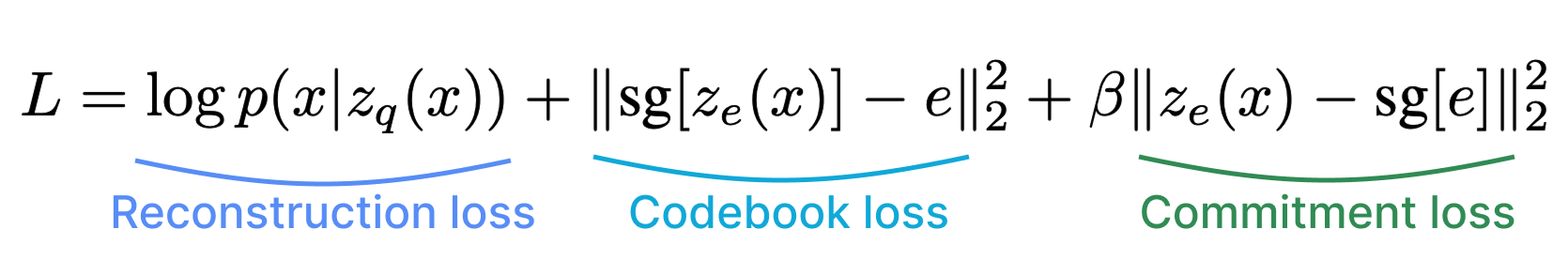

The VQ-VAE takes the classic autoencoder framework and pushes it into the territory of discrete latent spaces. The non-differentiable quantization step poses a challenge, but it is addressed by copying the gradients from the decoder input straight to the encoder output and by a well-designed loss function.

In this example, we develop a Vector Quantized Variational Autoencoder (VQ-VAE), train it for image reconstruction, and sample from the codebook for generation.
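To make the gradient copying and the loss concrete, here is one common way to write them, following the formulation in the original VQ-VAE paper (arXiv:1711.00937). The symbols are assumptions that the text above does not define: $z_e(x)$ is the encoder output, $e$ is the nearest codebook vector, $\mathrm{sg}[\cdot]$ is the stop-gradient operator, $\beta$ is the commitment weight, and $\mathcal{L}_{\text{rec}}$ is whatever reconstruction loss is chosen (for example, MSE between input and reconstruction).

$$
z_q(x) = z_e(x) + \mathrm{sg}\big[\, e - z_e(x) \,\big]
$$

$$
\mathcal{L} = \mathcal{L}_{\text{rec}}\big(x, \hat{x}\big) + \big\lVert \mathrm{sg}[z_e(x)] - e \big\rVert_2^2 + \beta \, \big\lVert z_e(x) - \mathrm{sg}[e] \big\rVert_2^2
$$

In the forward pass $z_q(x)$ equals $e$, while in the backward pass the gradient with respect to $z_q(x)$ flows unchanged into $z_e(x)$. The second term moves the codebook vectors toward the encoder outputs, and the third term keeps the encoder committed to the codes it selects.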

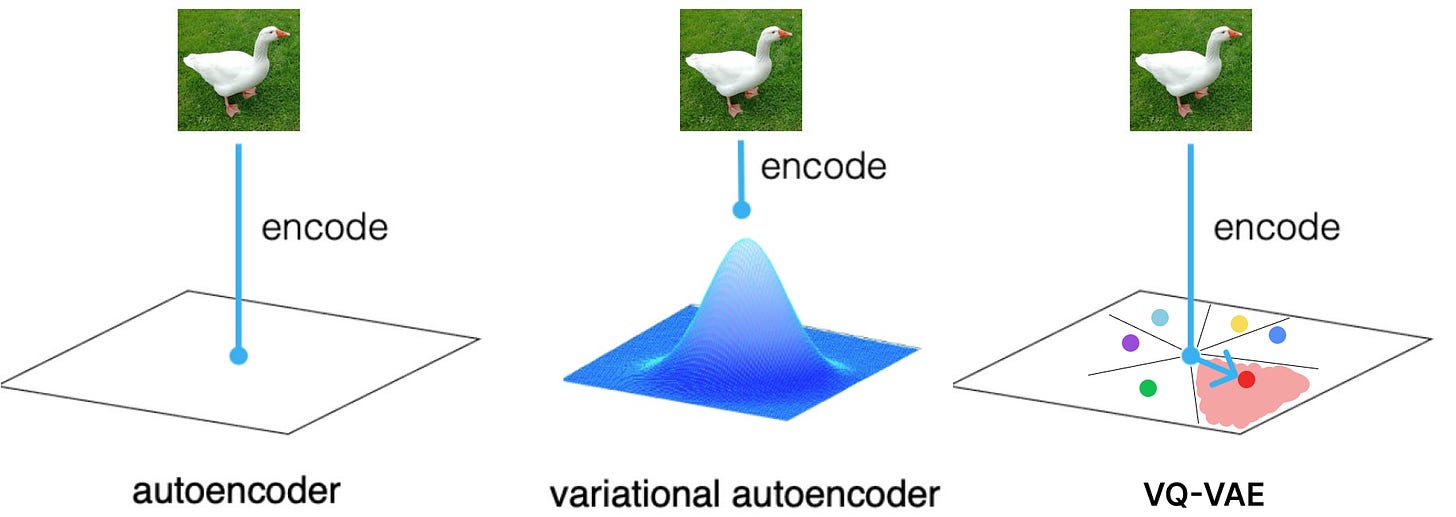

This is a PyTorch implementation of the Vector Quantized Variational Autoencoder (arxiv.org/abs/1711.00937). The author's original implementation is in TensorFlow and comes with an example you can run in a Jupyter notebook.

The paper summarizes the approach as follows: "Our model, the Vector Quantised Variational Autoencoder (VQ-VAE), differs from VAEs in two key ways: the encoder network outputs discrete, rather than continuous, codes; and the prior is learnt rather than static."

VQ-VAEs learn discrete latent representations via vector quantization. Instead of using an embedding loss, we can set each embedding vector to the moving average of the encoder outputs $q_{\boldsymbol{\phi}}(\boldsymbol{z}|\boldsymbol{x})$ that are closest to that embedding vector.
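The moving-average alternative to the embedding loss can be sketched as below. This is a minimal NumPy illustration rather than the code of any implementation mentioned here. The function name `ema_codebook_update`, the `decay` and `eps` parameters, and the Laplace smoothing of the cluster sizes are assumptions chosen for the example.

```python
import numpy as np

def ema_codebook_update(codebook, cluster_size, ema_sum, z_e, decay=0.99, eps=1e-5):
    """One exponential-moving-average update of the codebook (illustrative sketch).

    codebook:     (K, D) current embedding vectors
    cluster_size: (K,)   running count of encoder outputs assigned to each code
    ema_sum:      (K, D) running sum of encoder outputs assigned to each code
    z_e:          (N, D) batch of encoder outputs
    """
    num_codes = codebook.shape[0]

    # Assign every encoder output to its closest embedding vector.
    sq_dist = ((z_e[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)  # (N, K)
    indices = sq_dist.argmin(axis=1)                                        # (N,)
    one_hot = np.eye(num_codes)[indices]                                    # (N, K)

    # Per-code statistics for this batch.
    batch_count = one_hot.sum(axis=0)   # (K,)
    batch_sum = one_hot.T @ z_e         # (K, D)

    # Moving averages of the counts and of the summed encoder outputs.
    cluster_size = decay * cluster_size + (1.0 - decay) * batch_count
    ema_sum = decay * ema_sum + (1.0 - decay) * batch_sum

    # Laplace smoothing (an assumption here) avoids dividing by zero
    # for codes that currently receive no assignments.
    total = cluster_size.sum()
    smoothed = (cluster_size + eps) / (total + num_codes * eps) * total

    new_codebook = ema_sum / smoothed[:, None]
    return new_codebook, cluster_size, ema_sum
```

With this update the codebook receives no gradient at all. Only the encoder and decoder are trained by backpropagation, and each code drifts toward the mean of the encoder outputs assigned to it.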

At the heart of vector quantization lies the distance computation between the encoded vectors and the codebook embeddings. To compute the distance, we use the mean squared error (MSE).

In subjective evaluations, a deep AR model (DAR) outperformed an RNN. Here, we propose a Vector Quantized Variational Autoencoder (VQ-VAE) neural F0 model that is both more efficient and more interpretable than the DAR.

This notebook provides a minimalistic but effective implementation of VQ-VAE, explaining all of its components and the usefulness of the method. The main idea: VQ-VAE learns a ...

VQ-VAEs are the foundation for many advanced generative models. This post therefore shows how to implement a simple VQ-VAE and train it on MNIST. I end by exploring the influence of the codebook size on the performance of the VQ-VAE.
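The distance computation between encoded vectors and codebook embeddings mentioned above can be illustrated with a short NumPy sketch. The function name `quantize` and the toy codebook are hypothetical and exist only for this example. In a real model the encoded vectors would come from the encoder, flattened to shape `(N, D)`.

```python
import numpy as np

def quantize(z_e, codebook):
    """Map each encoded vector to its nearest codebook embedding.

    z_e:      (N, D) encoded vectors
    codebook: (K, D) embedding vectors
    Returns the quantized vectors (N, D), the chosen indices (N,),
    and the full MSE distance matrix (N, K).
    """
    # Broadcast to (N, K, D), then average the squared differences over D.
    diff = z_e[:, None, :] - codebook[None, :, :]
    mse = (diff ** 2).mean(axis=-1)

    # The code with the smallest MSE wins for each encoded vector.
    indices = mse.argmin(axis=1)
    z_q = codebook[indices]
    return z_q, indices, mse

# Toy data (hypothetical): three 2-D codes and three encoded vectors.
codebook = np.array([[0.0, 0.0], [1.0, 1.0], [-1.0, 2.0]])
z_e = np.array([[0.9, 1.2], [-0.8, 1.7], [0.1, -0.2]])

z_q, indices, mse = quantize(z_e, codebook)
print(indices)  # [1 2 0]
```

Because the mean and the sum of squared differences differ only by the constant factor `D`, both select the same nearest code. The choice between them affects the scale of the distances, not the assignment.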
