Masked Autoencoders That Listen

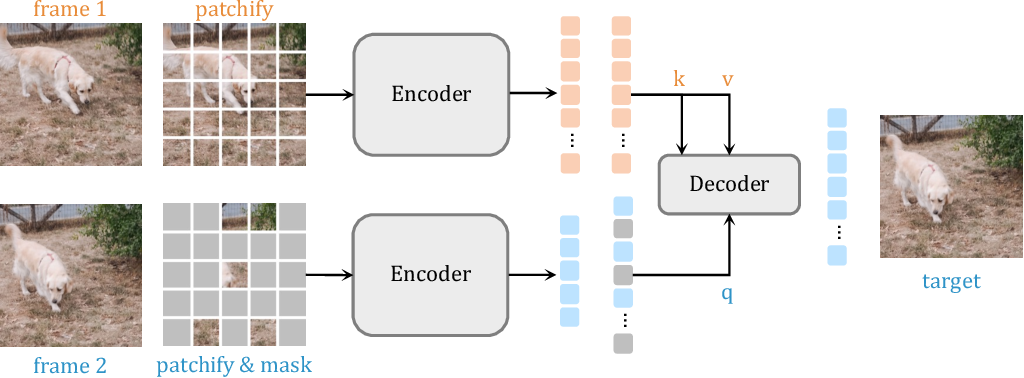

Following the Transformer encoder-decoder design in MAE, Audio-MAE first encodes audio spectrogram patches with a high masking ratio, feeding only the non-masked tokens through the encoder layers. Audio-MAE, a simple extension of image-based masked autoencoders to self-supervised representation learning from audio spectrograms, sets new state-of-the-art performance on six audio and speech classification tasks, outperforming other recent models that use external supervised pre-training.
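The masking step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the helper names `patchify` and `random_mask`, the 8x8 toy spectrogram, the 2x2 patch size, and the 0.75 masking ratio are all assumptions chosen for readability.

```python
import random


def patchify(spec, patch):
    """Split a 2-D spectrogram (rows = time, columns = frequency)
    into a list of flattened, non-overlapping patch x patch blocks."""
    n_time, n_freq = len(spec), len(spec[0])
    if n_time % patch or n_freq % patch:
        raise ValueError("spectrogram size must be divisible by the patch size")
    patches = []
    for t0 in range(0, n_time, patch):
        for f0 in range(0, n_freq, patch):
            patches.append(
                [spec[t][f]
                 for t in range(t0, t0 + patch)
                 for f in range(f0, f0 + patch)]
            )
    return patches


def random_mask(num_patches, mask_ratio, rng):
    """Pick which patch indices stay visible and which are masked."""
    num_masked = round(num_patches * mask_ratio)
    order = list(range(num_patches))
    rng.shuffle(order)
    masked = sorted(order[:num_masked])
    keep = sorted(order[num_masked:])
    return keep, masked


# Toy 8x8 "spectrogram" with distinct values (illustrative only).
spec = [[t * 8 + f for f in range(8)] for t in range(8)]
patches = patchify(spec, patch=2)  # 16 patches of 4 values each

rng = random.Random(0)
keep, masked = random_mask(len(patches), mask_ratio=0.75, rng=rng)

# Only the visible patches would be passed to the encoder.
visible_tokens = [patches[i] for i in keep]
```

With a high masking ratio the encoder sees only a small fraction of the patches (here 4 of 16), which is why dropping masked tokens before the encoder reduces its workload.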

Audio-MAE (Masked Autoencoders that Listen) extends the successful masked autoencoder framework from computer vision to audio understanding.

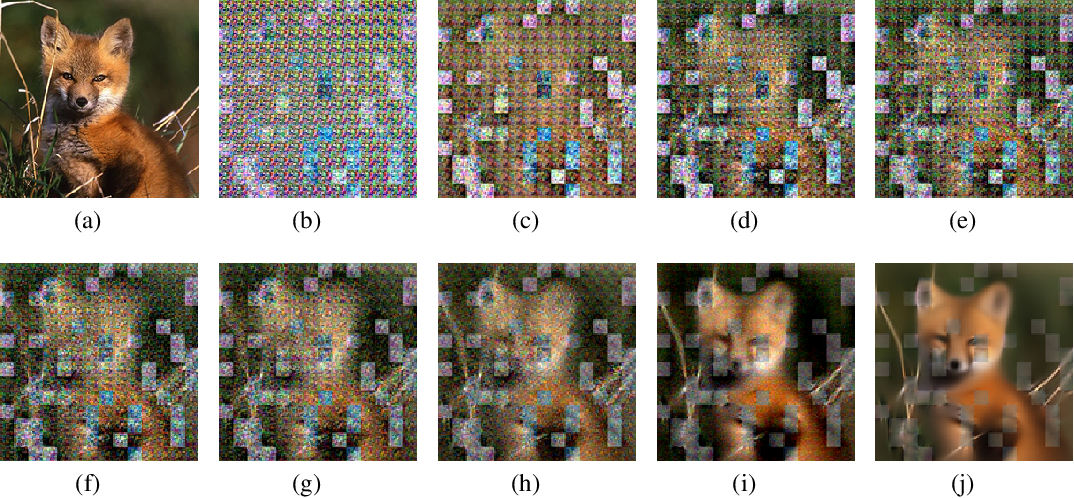

This paper adapts masked autoencoders to audio spectrograms, achieving state-of-the-art audio classification without external supervised pre-training. Audio-MAE is a Transformer-based model that encodes and decodes audio spectrogram patches with a high masking ratio. It learns to reconstruct the input spectrogram, and the encoder is then fine-tuned for audio and speech classification tasks. By combining masking, encoding, and reconstruction, masked autoencoders learn to restore missing or corrupted parts of the input data.
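The reconstruction objective can be illustrated with a small, hedged sketch. It assumes a mean squared error computed only over the masked patches, which follows the usual MAE convention; the text above does not spell out the loss, so treat this choice and the function name `masked_mse` as assumptions.

```python
def masked_mse(target_patches, predicted_patches, masked_idx):
    """Mean squared error between target and predicted patches,
    averaged over the values of the masked patches only (assumed objective)."""
    if not masked_idx:
        raise ValueError("at least one masked patch is required")
    total, count = 0.0, 0
    for i in masked_idx:
        for t, p in zip(target_patches[i], predicted_patches[i]):
            total += (t - p) ** 2
            count += 1
    return total / count


# Three toy patches of two values each; patches 1 and 2 are masked.
target = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
predicted = [[1.0, 2.0], [0.0, 0.0], [5.0, 8.0]]

loss = masked_mse(target, predicted, masked_idx=[1, 2])
```

Restricting the loss to masked patches means the model is scored on what it had to infer rather than on patches it could copy from its input.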

Paper Insights: Masked Autoencoders That Listen, by Shanmuka Sadhu

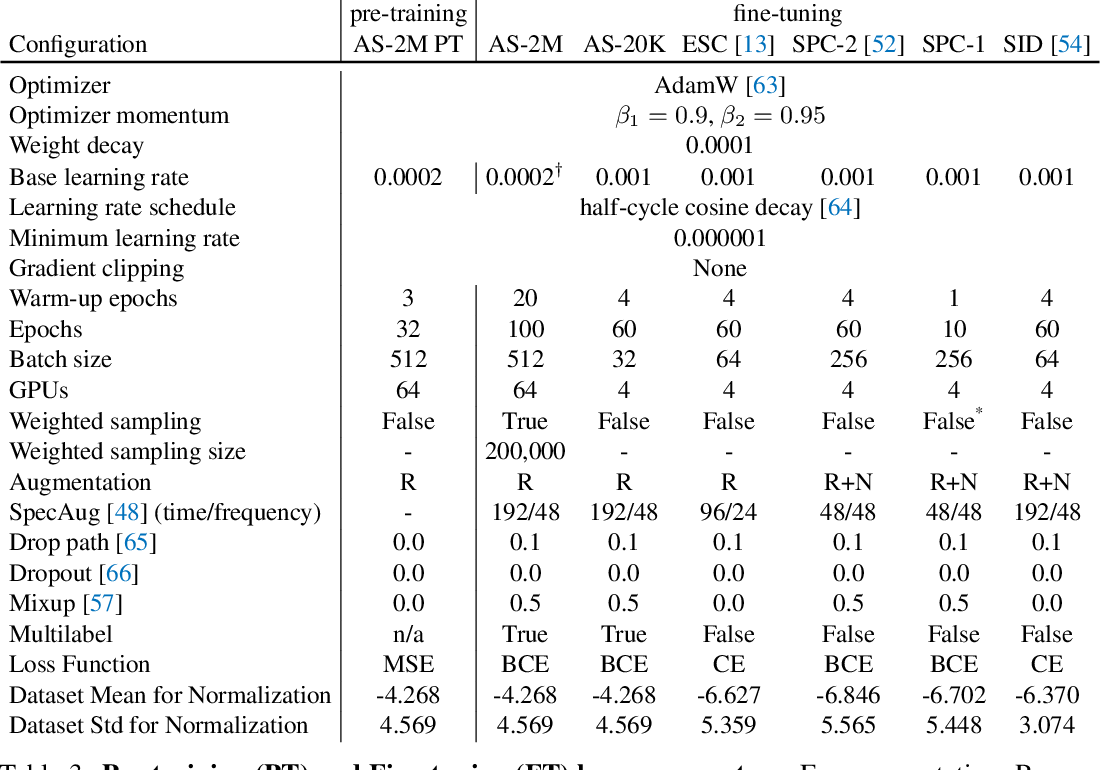

Table 3 from "Masked Autoencoders That Listen" (Semantic Scholar)
