TileKernels: DeepSeek's Internal GPU Kernels for MoE Routing and FP4 Quantization, Written in TileLang

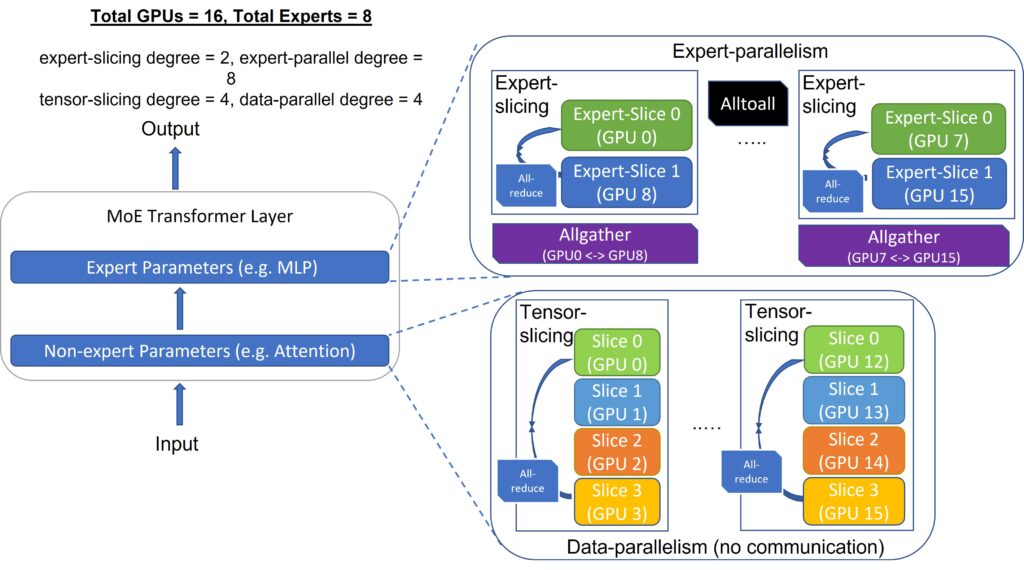

TileLang is a domain-specific language for expressing high-performance GPU kernels in Python, featuring easy migration, agile development, and automatic optimization. Most kernels in this project approach the limit of hardware performance in terms of compute intensity and memory bandwidth.

The MoE routing kernels provide the computational foundation for token-to-expert assignment in mixture-of-experts (MoE) models. This module handles the selection of experts (top-k), group-based scoring, load-balancing auxiliary loss computation, and the construction of the index mappings required for efficient expert dispatch.
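The routing steps listed above (top-k selection, auxiliary loss, dispatch index mapping) can be sketched in plain Python. This is only a reference-style illustration, not the TileKernels implementation: the function names `softmax` and `route_tokens` are hypothetical, group-based scoring is omitted, and the auxiliary loss assumes the common "fraction of assignments times mean router probability" form, which the text above does not confirm.

```python
import math

def softmax(xs):
    # Numerically stable softmax over one token's router logits.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_tokens(logits, top_k):
    """Hypothetical reference router.

    logits: list of per-token lists of router logits (one value per expert).
    Returns (topk_ids, topk_weights, aux_loss, expert_to_tokens).
    """
    num_tokens = len(logits)
    num_experts = len(logits[0])
    probs = [softmax(row) for row in logits]

    topk_ids, topk_weights = [], []
    for p in probs:
        # Top-k selection: highest probability first, ties broken by expert id.
        ids = sorted(range(num_experts), key=lambda e: (-p[e], e))[:top_k]
        norm = sum(p[e] for e in ids)
        topk_ids.append(ids)
        # Renormalize the selected probabilities so they sum to 1 per token.
        topk_weights.append([p[e] / norm for e in ids])

    # Index mapping for dispatch: which tokens each expert must process.
    expert_to_tokens = [[] for _ in range(num_experts)]
    for t, ids in enumerate(topk_ids):
        for e in ids:
            expert_to_tokens[e].append(t)

    # Assumed auxiliary loss: E * sum_e (assignment fraction_e * mean prob_e).
    frac = [len(toks) / (num_tokens * top_k) for toks in expert_to_tokens]
    mean_prob = [sum(p[e] for p in probs) / num_tokens for e in range(num_experts)]
    aux_loss = num_experts * sum(f * m for f, m in zip(frac, mean_prob))
    return topk_ids, topk_weights, aux_loss, expert_to_tokens

ids, weights, aux, mapping = route_tokens(
    [[2.0, 1.0, 0.0, -1.0], [0.0, 0.0, 3.0, 1.0]], top_k=2
)
```

A fused GPU kernel would compute these quantities in a single pass over the logits. The sketch separates them only to make each output visible.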

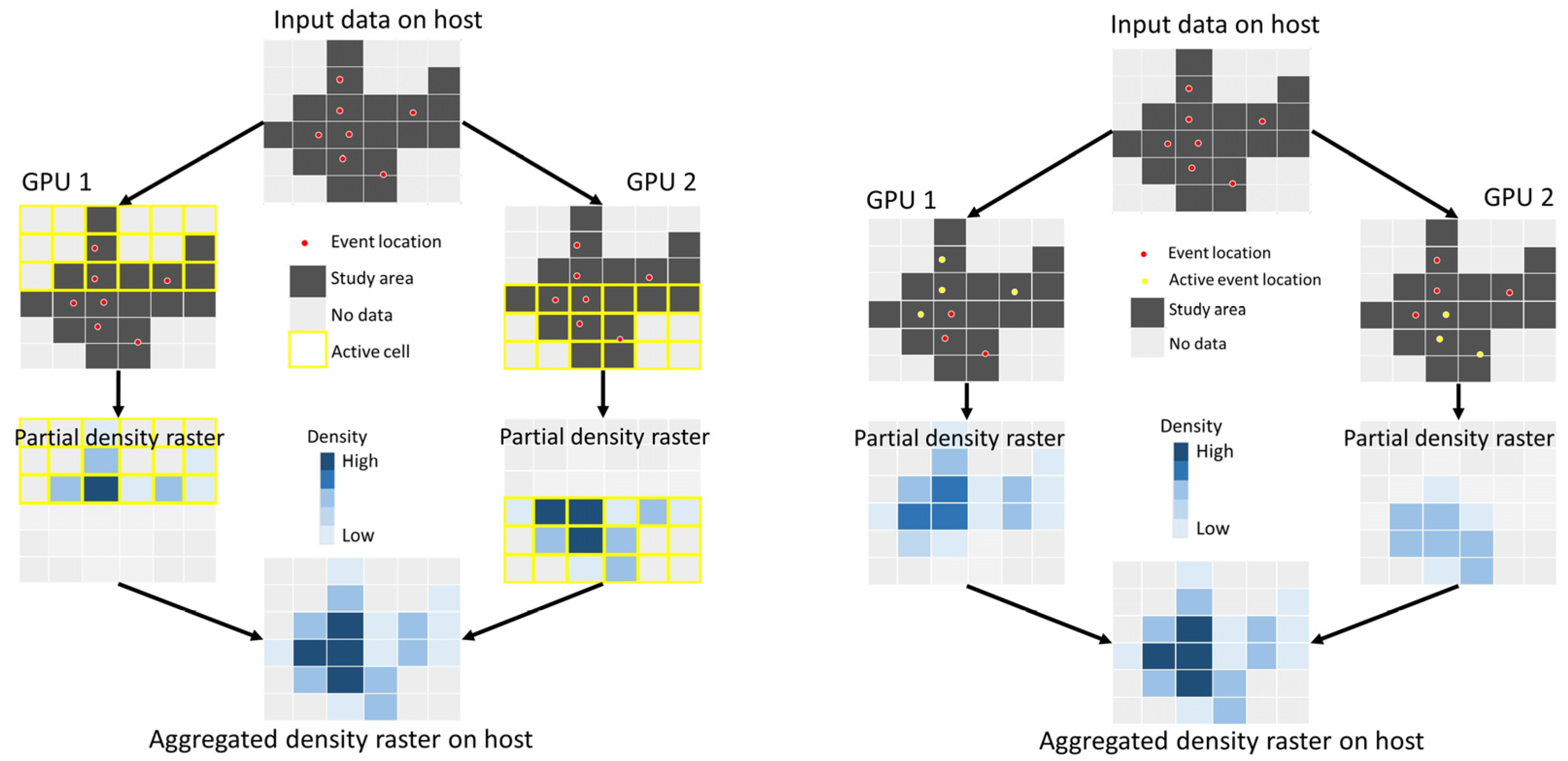

DeepSeek launched TileKernels on Friday, April 24, 2026. It is an open-source library written in Python that achieves GPU performance close to theoretical hardware limits. The project uses the TileLang domain-specific language to optimize critical paths for large language model training and inference without using CUDA C++. Alongside the new repository, DeepSeek updated the DeepEP repository, bringing DeepEP V2 online, less than a week after it quietly updated Mega MoE and the FP4 Indexer.

The release open-sources the GPU kernels underneath DeepSeek's models. TileKernels is written entirely in TileLang and bypasses standard libraries to extract maximum floating-point throughput directly from NVIDIA Hopper and Blackwell architectures. It covers optimized mixture-of-experts routing, FP8 and FP4 per-channel quantization, and specialized Engram gating: the exact kernels DeepSeek runs internally.
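To make the idea of per-channel FP4 quantization concrete, here is a minimal sketch in plain Python. It assumes the common E2M1 FP4 format, whose representable magnitudes are 0, 0.5, 1, 1.5, 2, 3, 4 and 6. The text above does not state which FP4 encoding or scaling scheme TileKernels uses, so the grid, the max-based scale, and the function names are all illustrative assumptions.

```python
import math

# Representable magnitudes of the E2M1 FP4 format (assumed format).
FP4_E2M1_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]

def quantize_channel_fp4(values):
    """Quantize one channel with a single shared scale.

    Returns (quantized, scale), where each quantized entry is a value
    from the signed E2M1 grid and value ~= quantized * scale.
    """
    amax = max(abs(v) for v in values)
    # Map the largest magnitude in the channel onto the largest grid value.
    scale = amax / 6.0 if amax > 0 else 1.0
    quantized = []
    for v in values:
        # Nearest grid magnitude; ties resolve to the smaller magnitude here.
        mag = min(FP4_E2M1_GRID, key=lambda g: abs(abs(v) / scale - g))
        quantized.append(math.copysign(mag, v))
    return quantized, scale

def dequantize_channel(quantized, scale):
    # Recover approximate real values from grid values and the channel scale.
    return [q * scale for q in quantized]

def quantize_per_channel(channels):
    # "Per-channel" means every channel gets its own independent scale.
    return [quantize_channel_fp4(ch) for ch in channels]

q, s = quantize_channel_fp4([12.0, -6.0, 3.0, 0.0])
restored = dequantize_channel(q, s)
```

Keeping one scale per channel limits the damage an outlier can do: a large value in one channel does not reduce the resolution available to the others.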

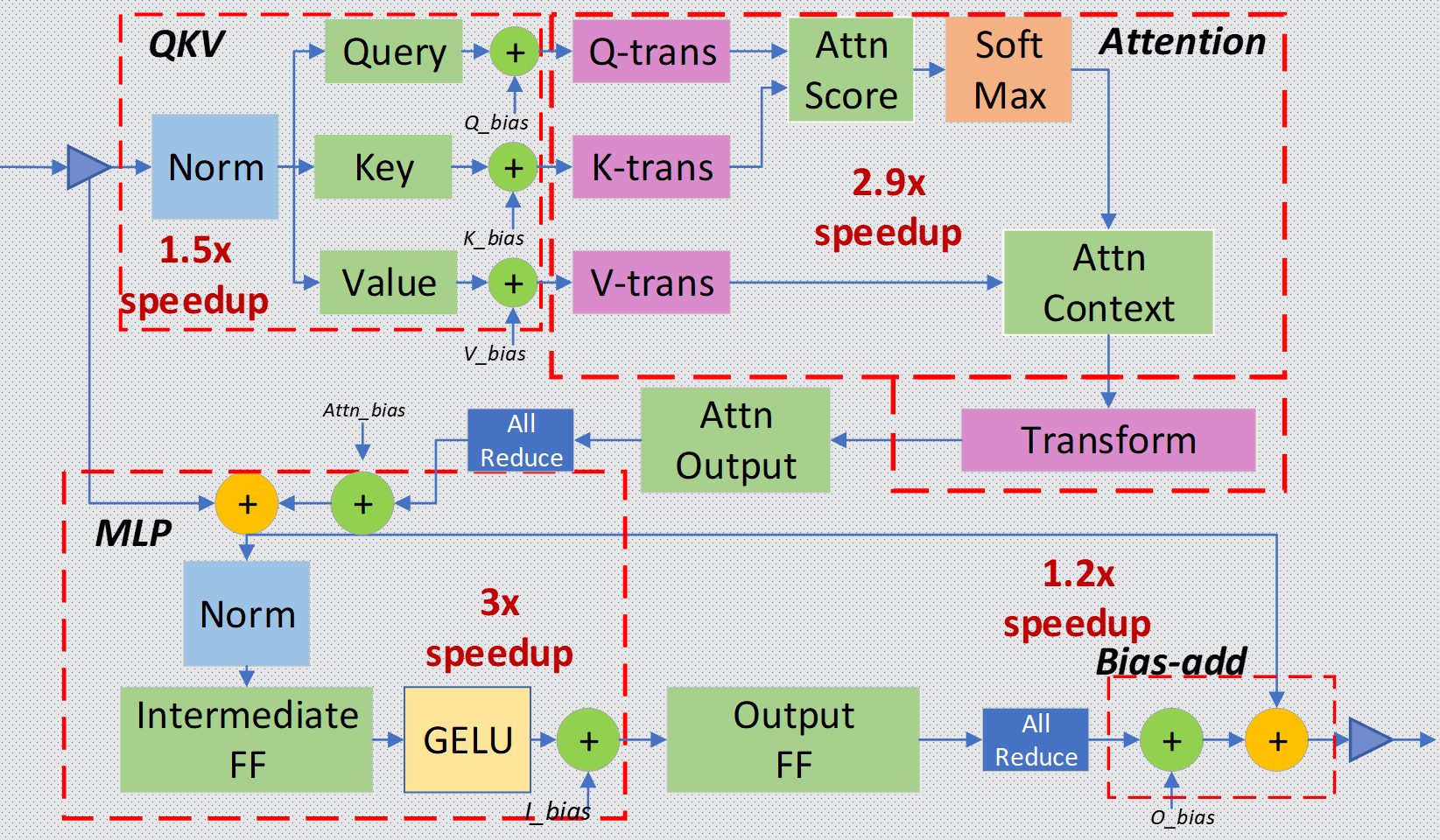

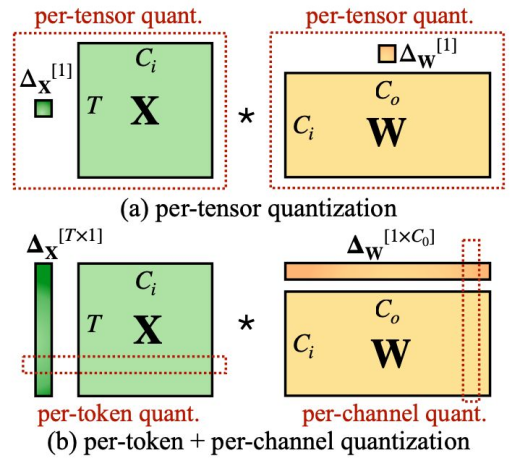

TileKernels provides near-hardware-limit GPU kernels for MoE routing, quantization, transpose, and gating in LLM training and inference.

Separately, NVIDIA has developed optimization strategies for DeepSeek-R1 throughput-oriented scenarios (TPS/GPU) within TensorRT-LLM on its Blackwell B200 GPUs.

In the architecture diagram, the lightning indexer sits at the bottom right. It takes the input hidden states and produces the compressed representations $\{q^{a}_{t,i}\}$, $\{k^{r}_{t}\}$, and $\{w^{i}_{t,j}\}$. These FP8-quantized index vectors feed into the top-k selector.
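The hand-off from index vectors to a top-k selector can be sketched as follows. The text above does not say how candidates are scored, so this example assumes one plausible rule: a per-head weighted sum of ReLU-gated query-key dot products. The names `index_scores` and `select_top_k` are hypothetical, and FP8 quantization is left out to keep the arithmetic readable.

```python
def index_scores(queries, weights, keys):
    """Score every candidate key for one query token.

    queries: one query vector per indexer head.
    weights: one scalar weight per indexer head.
    keys:    one key vector per candidate token.
    Assumed rule: score = sum over heads of weight * max(query . key, 0).
    """
    scores = []
    for k in keys:
        s = 0.0
        for q, w in zip(queries, weights):
            dot = sum(a * b for a, b in zip(q, k))
            s += w * max(dot, 0.0)  # ReLU gate on the dot product
        scores.append(s)
    return scores

def select_top_k(scores, k):
    # Pick the k highest-scoring candidates (ties broken by position),
    # then return their positions in ascending order.
    order = sorted(range(len(scores)), key=lambda i: (-scores[i], i))
    return sorted(order[:k])

scores = index_scores(
    queries=[[1.0, 0.0], [0.0, 1.0]],
    weights=[0.5, 2.0],
    keys=[[1.0, 1.0], [2.0, -1.0], [-1.0, 3.0], [0.0, 0.0]],
)
selected = select_top_k(scores, k=2)
```

Only the selected positions would then take part in the more expensive attention computation, which is the reason for keeping the scoring step cheap.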

