Accelerating MoE Model Inference with Locality-Aware Kernel Design

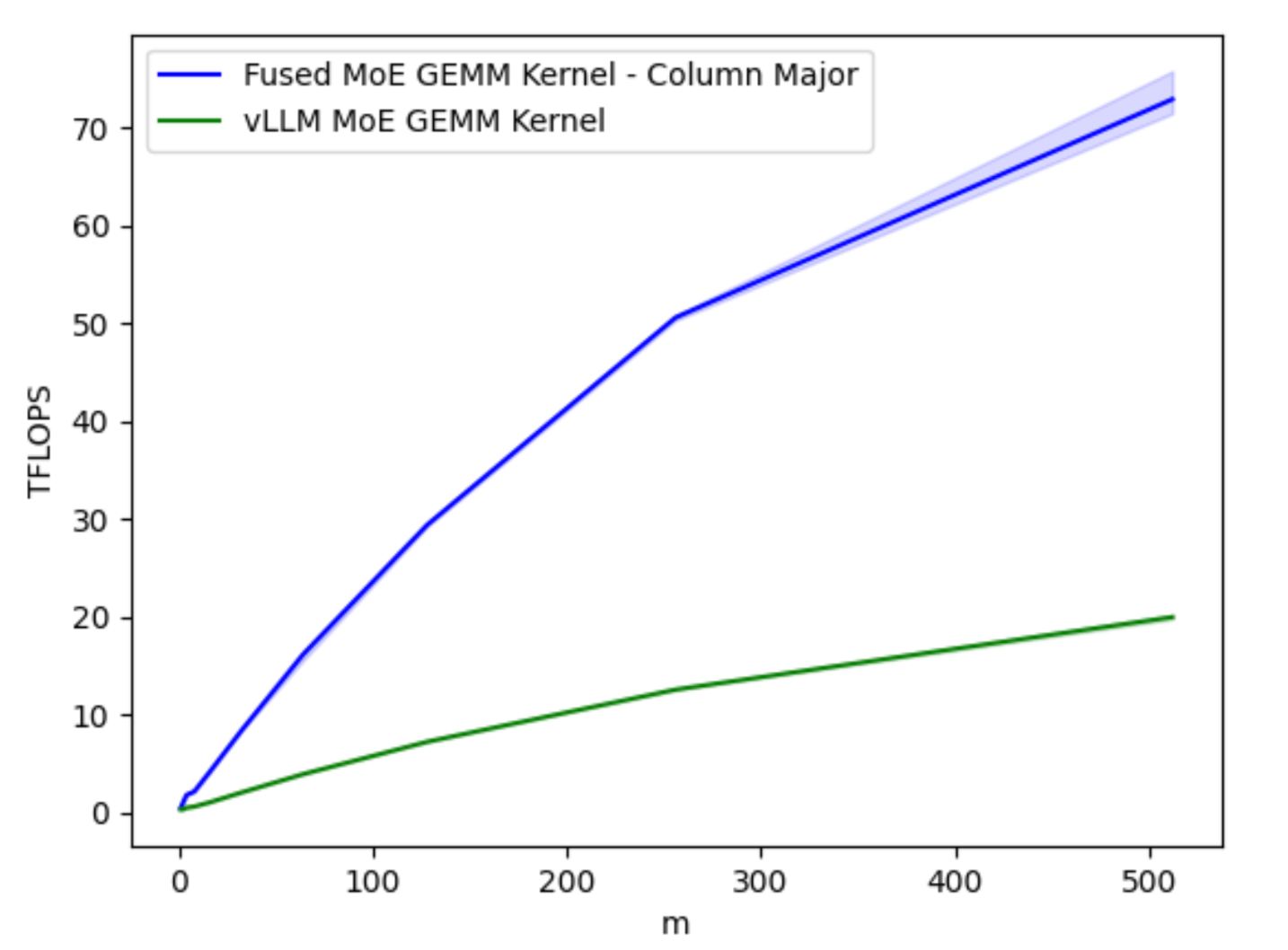

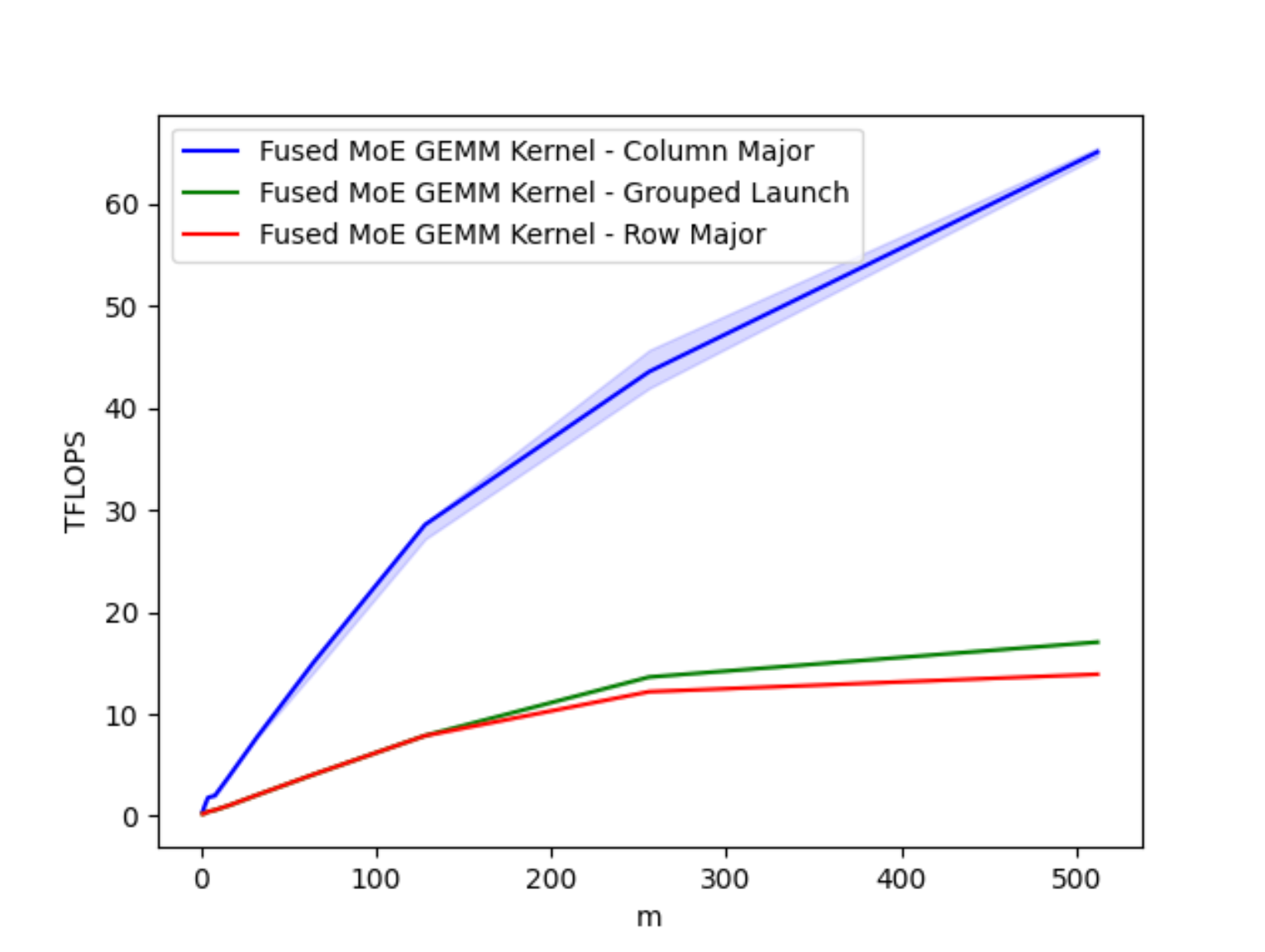

In this post, we provide methods to efficiently parallelize the MoE computation during inference, specifically during autoregression (the decoding stage). We show that by implementing column-major scheduling to improve data locality, we can accelerate the core Triton GEMM (general matrix-matrix multiply) kernel for MoEs (mixture of experts) by up to 4x on A100 and up to 4.4x on H100 NVIDIA GPUs.
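To make the scheduling idea concrete, here is a minimal pure-Python sketch of how a linear program id could be mapped to an output tile under row-major and column-major ordering. It is not the Triton kernel itself. The function names, the grid sizes, and the assumption that the locality gain comes from consecutive blocks sharing the same weight column tile are illustrative choices, not details taken from the post.

```python
def row_major(pid, grid_m, grid_n):
    """Consecutive program ids walk across the N dimension first."""
    return pid // grid_n, pid % grid_n


def column_major(pid, grid_m, grid_n):
    """Consecutive program ids walk down the M dimension first."""
    return pid % grid_m, pid // grid_m


def distinct_weight_tiles(schedule, grid_m, grid_n, wave):
    """Count the weight column tiles touched by the first `wave` program ids.

    Assumption: blocks with neighbouring ids run at about the same time,
    so fewer distinct column tiles means more reuse of cached weights.
    """
    return len({schedule(pid, grid_m, grid_n)[1] for pid in range(wave)})


# Hypothetical tile grid: 4 tiles along M (tokens), 8 tiles along N (weights).
GRID_M, GRID_N, WAVE = 4, 8, 4

row_tiles = distinct_weight_tiles(row_major, GRID_M, GRID_N, WAVE)
col_tiles = distinct_weight_tiles(column_major, GRID_M, GRID_N, WAVE)
print(f"row-major: {row_tiles} weight tiles, column-major: {col_tiles}")
```

Both mappings visit every output tile exactly once; only the order differs. In this toy setup the first four blocks touch four different weight column tiles under row-major ordering but a single one under column-major ordering, which is the kind of reuse a locality-aware schedule aims for.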

Triton kernel supporting and accelerating MoE inference (Mixtral). This kernel was contributed by IBM Research and requires vLLM to be installed to run. It is part of the meta-pytorch applied-ai repository on GitHub (applied AI experiments and examples for PyTorch).

In response, we propose a memory-efficient algorithm to compute the forward and backward passes of MoEs with minimal activation caching for the backward pass. We also design GPU kernels that overlap memory I/O with computation, benefiting all MoE architectures.

With the prevailing mixture-of-experts (MoE) architecture pushing the performance of large language models (LLMs) to new limits, fine-tuning MoE models presents ...

Experiments across multiple MoE models and multi-node GPU clusters show that Grace MoE achieves up to 3.79× end-to-end inference speedup over state-of-the-art systems such as DeepSpeed, Tutel, MegaBlocks, and C2R, without compromising accuracy.

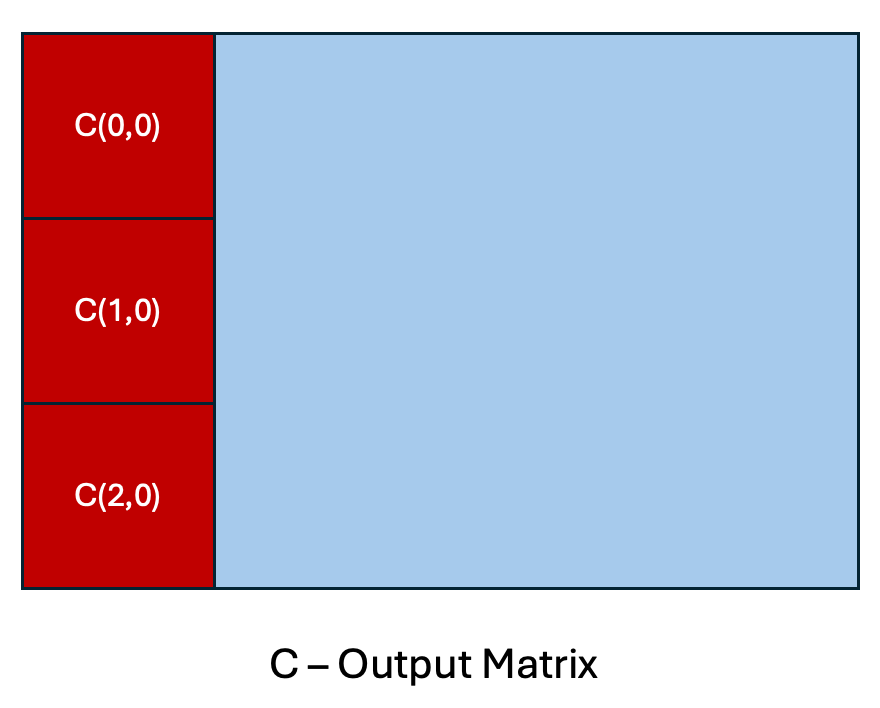

Check out several different work decomposition and scheduling algorithms for MoE GEMMs and how at the hardware ...

We need to revisit our infrastructure design blueprint to embrace this MoE model trend, and SonicMoE is one of our answers: an activation-memory-efficient and I/O-aware algorithm design.

In simple terms, applying data parallelism to large MoE models when working with small fine-tuning datasets leads to unnecessary model replication, which wastes significant computational resources, especially for end users.
