GPU MODE Lecture 7: Advanced Quantization (Christian Mills)

Lecture #7 discusses GPU quantization techniques in PyTorch, focusing on performance optimizations using Triton and CUDA kernels for dynamic and weight-only quantization, including challenges and future directions. Slides: dropbox scl fi hzfx1l267m8gwyhcjvfk4 quantization cuda vs triton.pdf?rlkey=s4j64ivi2kpp2l0uq8xjdwbab&dl=0.
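To make "weight-only quantization" concrete, here is a minimal framework-free sketch, not the lecture's Triton or CUDA kernels. It assumes symmetric per-row int8 quantization. The function names `quantize_rows_int8` and `weight_only_matvec` are hypothetical, chosen only for illustration. The weights are stored as 8-bit integers plus one float scale per row, while the activations stay in floating point.

```python
def quantize_rows_int8(weight):
    """Symmetric per-row int8 quantization (assumed scheme, for illustration).

    Returns the integer rows and one float scale per row.
    """
    q_rows, scales = [], []
    for row in weight:
        max_abs = max(abs(v) for v in row)
        # Map the largest magnitude in the row to 127; guard all-zero rows.
        scale = max_abs / 127.0 if max_abs > 0 else 1.0
        q_rows.append([max(-127, min(127, round(v / scale))) for v in row])
        scales.append(scale)
    return q_rows, scales


def weight_only_matvec(q_rows, scales, x):
    """Multiply int8 weights by float activations, rescaling each output row."""
    return [s * sum(q * xi for q, xi in zip(row, x))
            for row, s in zip(q_rows, scales)]


weight = [[0.5, -1.0, 0.25], [2.0, 0.1, -0.7]]
x = [1.0, 2.0, 3.0]

q_rows, scales = quantize_rows_int8(weight)
approx = weight_only_matvec(q_rows, scales, x)
exact = [sum(w * xi for w, xi in zip(row, x)) for row in weight]
```

Here `approx` should track `exact` to within the rounding error of the int8 grid. The saving in this scheme comes from storing and loading smaller weights, since the arithmetic itself still runs in floating point.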

Material for the GPU MODE lectures is available on GitHub. The document serves as a navigational entry point for the complete lecture series, which spans fundamental GPU programming concepts to advanced optimization techniques across multiple hardware platforms. A video of the lecture, [GPU MODE] Lecture 7: Advanced Quantization, is posted by the user 半夜汽笛. Related videos: [GPU MODE] Lecture 6: Optimizing Optimizers; [GPU MODE] Lecture 11: Sparsity; [GPU MODE] Lecture 4: Compute and Memory Basics.

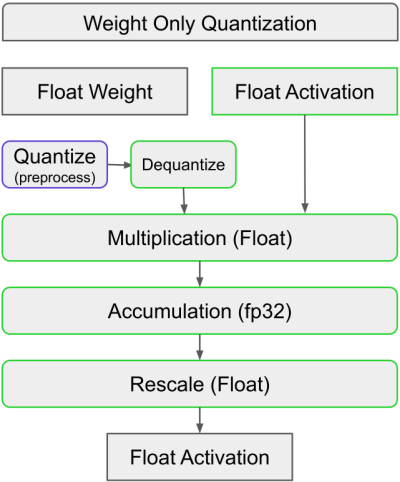

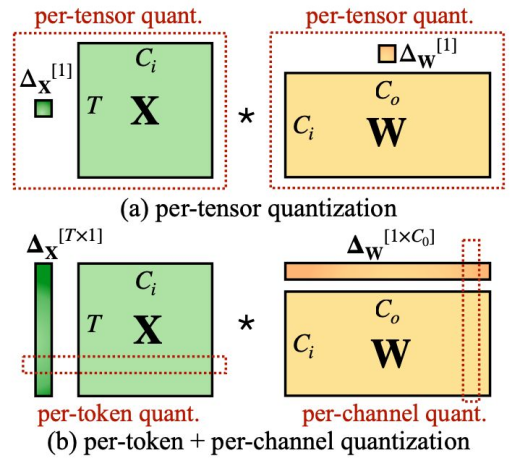

In-depth exploration of modern GPU optimization techniques, including fused kernels, quantization, and attention mechanisms. Practical examples and code for integrating GPU acceleration into Python frameworks like PyTorch.

If it is possible to run a quantized model on CUDA with a different framework such as TensorFlow, I would love to know. This is the code to prep my quantized model (using post-training quantization). I believe it's only possible to use the CPU for quantization, though. I have also tried to load the model as a PyTorch nn.Module instead of a TorchScript module, but it seems the model architecture is changed.

Quantization is great for compute-bound inference problems, as it allows us to utilize lower-precision ALUs.
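The point about lower-precision ALUs can be illustrated with a small sketch of dynamic quantization, assuming symmetric int8 scales for both weights and activations. It is plain Python rather than a GPU kernel, and the helper names `quantize_int8` and `dynamic_int8_matvec` are hypothetical. The activation scale is computed at runtime from the input, the inner product runs entirely on integers, and a single float rescale is applied per output.

```python
def quantize_int8(values):
    """Symmetric int8 quantization of one vector (assumed scheme)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    return [max(-127, min(127, round(v / scale))) for v in values], scale


def dynamic_int8_matvec(q_weight, w_scales, x):
    """Quantize activations on the fly, accumulate in integers, then rescale."""
    q_x, x_scale = quantize_int8(x)  # scale depends on the actual input
    out = []
    for row, w_scale in zip(q_weight, w_scales):
        # Integer-only multiply-accumulate: the part that would map to
        # low-precision integer units on real hardware.
        acc = sum(qw * qx for qw, qx in zip(row, q_x))
        out.append(acc * w_scale * x_scale)
    return out


weight = [[0.5, -1.0, 0.25], [2.0, 0.1, -0.7]]
x = [1.0, 2.0, 3.0]

# Weights are quantized once, ahead of time, one scale per row.
quantized = [quantize_int8(row) for row in weight]
q_weight = [q for q, _ in quantized]
w_scales = [s for _, s in quantized]

approx = dynamic_int8_matvec(q_weight, w_scales, x)
exact = [sum(w * xi for w, xi in zip(row, x)) for row in weight]
```

In this sketch only the final rescale touches floating point, which is why the approach suits compute-bound workloads. The cost is the extra runtime pass over the activations to find their scale.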
