Lecture 73: ScaleML Series, Quantization in Large Models

Quantization for Large Language Models (LLMs): Reduce AI Model Sizes

The GPU MODE x ScaleML speaker series is a 5-day online event hosted on the GPU MODE channel, where … Each day consists of about 2 hours of talks and discussions, each covering a different component of the evolving transformer stack, from quirks in the attention mechanism and positional encodings to … Full schedule: scale-ml.org/bootcamp.

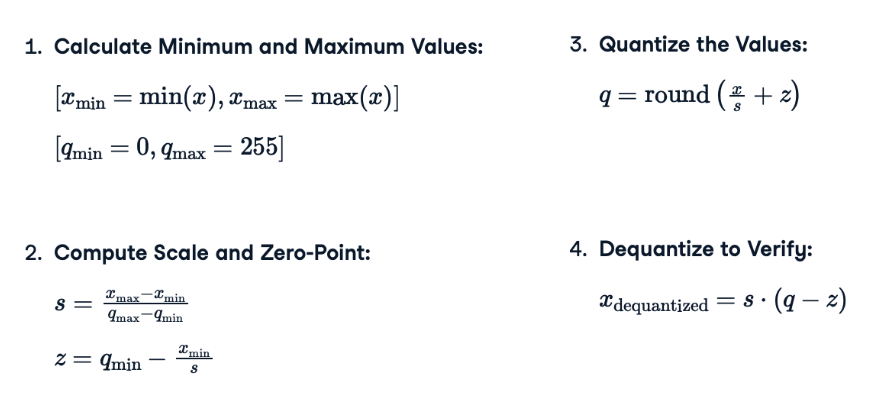

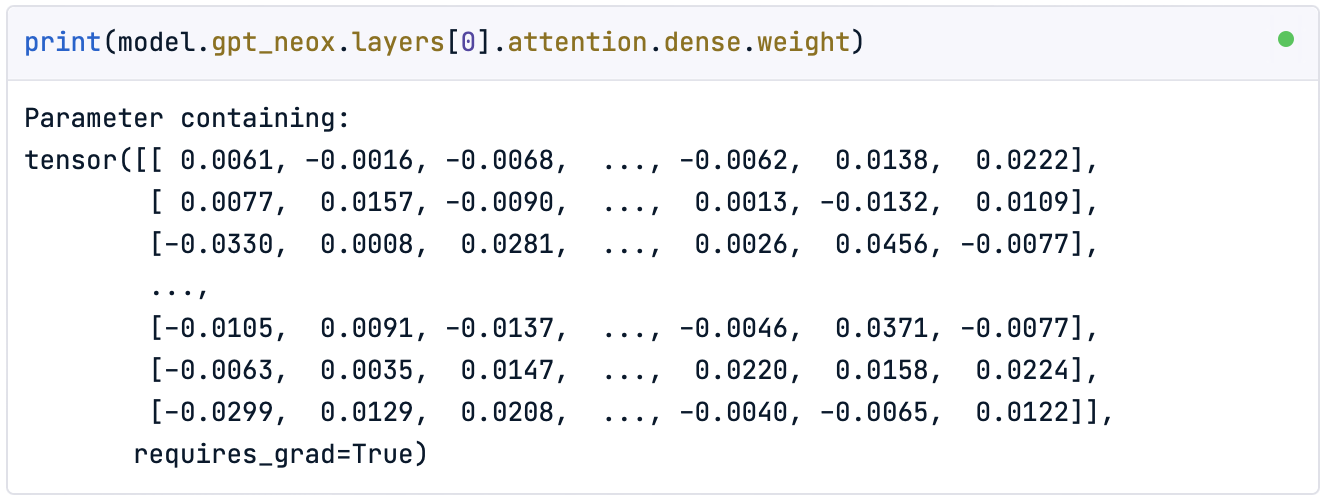

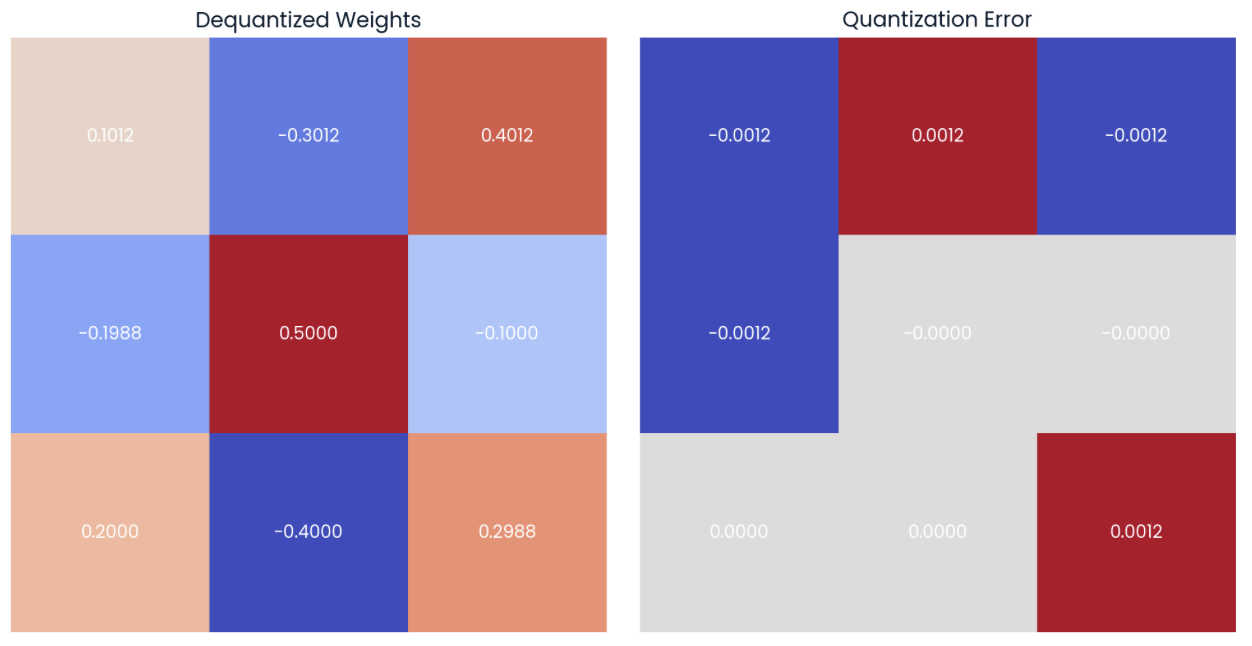

Day 3: Quantization in Large Models, by Chris De Sa.

We are a cross-lab MIT AI graduate student collective focusing on algorithms that learn and scale. The group is open to all with an academic email; if you don't have one but are still interested, send us an email or message us via Twitter. We currently host biweekly seminars and will add hands-on sessions and research socials in the future.

We begin by exploring the mathematical theory of quantization, followed by a review of common quantization methods and how they are implemented. We then examine several prominent quantization methods applied to LLMs, detailing their algorithms and performance outcomes.
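As a concrete reference point for the theory, here is a minimal sketch of one of the simplest schemes, symmetric round-to-nearest ("absmax") quantization. This is not presented as the method from the talk. For `b` bits, choose a scale `s = max|w_i| / (2^(b-1) - 1)`, store the integer code `q_i = round(w_i / s)`, and reconstruct `w_i ≈ s * q_i`. Because the scale is set by the largest weight, no code is clipped, so each reconstruction error is at most `s / 2`. The function names `quantize_absmax`, `dequantize`, `quantize_groupwise`, and `max_abs_error` are hypothetical, and the toy weights are made up. The group-wise variant gives each block of `group_size` weights its own scale, which limits how much a single large outlier inflates the step size for its neighbours.

```python
from typing import List, Tuple


def quantize_absmax(weights: List[float], bits: int = 8) -> Tuple[List[int], float]:
    """Symmetric round-to-nearest quantization with one absmax-derived scale."""
    qmax = 2 ** (bits - 1) - 1  # largest positive integer code, e.g. 127 for 8 bits
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0  # guard against an all-zero input
    # Round to the nearest integer code and clip to the symmetric range [-qmax, qmax].
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Map integer codes back to approximate real-valued weights."""
    return [c * scale for c in codes]


def quantize_groupwise(weights: List[float], group_size: int, bits: int = 4) -> List[float]:
    """Quantize each contiguous block of `group_size` weights with its own scale,
    then return the dequantized reconstruction."""
    recon: List[float] = []
    for start in range(0, len(weights), group_size):
        codes, scale = quantize_absmax(weights[start:start + group_size], bits)
        recon.extend(dequantize(codes, scale))
    return recon


def max_abs_error(a: List[float], b: List[float]) -> float:
    """Largest elementwise reconstruction error."""
    return max(abs(x - y) for x, y in zip(a, b))


if __name__ == "__main__":
    # Toy weights with one large outlier (5.0); values are illustrative only.
    w = [0.01, -0.02, 0.015, 0.03, 5.0, -0.01, 0.02, 0.0]

    codes, scale = quantize_absmax(w, bits=4)
    per_tensor = dequantize(codes, scale)
    per_group = quantize_groupwise(w, group_size=4, bits=4)

    print("per-tensor codes:", codes)
    print("per-tensor max error:", max_abs_error(w, per_tensor))
    print("per-group  max error:", max_abs_error(w, per_group))
```

With a single per-tensor scale at 4 bits, the outlier sets the step size so large that every small weight rounds to zero. Per-group scales keep most of them. Production LLM quantizers layer considerably more machinery on top of this basic idea, and this sketch does not attempt that.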

Quantization can reduce the size of large language models enough for efficient AI deployment on everyday devices. Techniques like quantization and distillation have been developed to shrink model sizes. By letting LLMs operate with fewer bits per parameter (for example, 8- or 4-bit integers instead of 16-bit floats), quantization not only compresses the model but also reduces its computational burden. This makes these models more accessible and more feasible to deploy across diverse applications.
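To make "fewer bits per parameter" concrete, this sketch estimates weight storage alone at several bit widths. It assumes a hypothetical 7-billion-parameter model. For the 4-bit case, it also assumes one 16-bit scale per group of 128 weights with no zero-point. Both numbers are illustrative choices, not figures from the talk. The estimate ignores activations, the KV cache, and any layers left unquantized. The helper names `effective_bits` and `weight_memory_gib` are hypothetical.

```python
def effective_bits(bits: int, group_size: int = 0, scale_bits: int = 16) -> float:
    """Bits stored per weight, counting one shared scale per group (no zero-point).

    group_size=0 means no per-group scale overhead is counted.
    """
    if group_size <= 0:
        return float(bits)
    return bits + scale_bits / group_size


def weight_memory_gib(num_params: float, bits_per_param: float) -> float:
    """Weight storage in GiB: num_params * bits / 8 bytes, divided by 2**30."""
    return num_params * bits_per_param / 8 / 2**30


if __name__ == "__main__":
    num_params = 7e9  # hypothetical model size, for illustration only
    formats = {
        "fp16": effective_bits(16),
        "int8": effective_bits(8),
        "int4, g=128": effective_bits(4, group_size=128),
    }
    for name, bpp in formats.items():
        gib = weight_memory_gib(num_params, bpp)
        print(f"{name:>12}: {bpp:6.3f} bits/param -> {gib:6.2f} GiB")
```

Going from 16 to about 4 bits per weight cuts weight storage by close to 4x. In this accounting, the per-group scales add 16 / 128 = 0.125 bits per weight, so the ratio is 16 / 4.125 rather than exactly 4.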