Using Multiple Cores and GPUs in Native Code

Using Multiple Cores and GPUs in Native Code (Microsoft Research). Ever wonder how to get full use of all the cores in your machines? How about using your GPU for more than just painting the screen? This talk covers the various libraries available to make parallel programming in C++ easier, exploring what is available in PPL, C++ AMP, and CUDA.
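
PPL and C++ AMP are Microsoft-specific libraries. The sketch below therefore uses only standard C++ (`std::thread`) to show the basic pattern such libraries wrap: split the data into one chunk per core, process the chunks concurrently, then combine the partial results. The name `parallel_sum` is hypothetical and is not part of PPL's API. PPL's own algorithms, such as `concurrency::parallel_for`, handle the chunking and scheduling for you.

```cpp
#include <algorithm>
#include <cstddef>
#include <numeric>
#include <thread>
#include <vector>

// Hypothetical helper: sum `data` by splitting it into contiguous chunks,
// one per worker thread. Each thread writes only its own slot in `partial`,
// so no locking is needed.
long long parallel_sum(const std::vector<long long>& data, unsigned num_threads) {
    if (num_threads == 0) num_threads = 1;
    const std::size_t n = data.size();
    const std::size_t chunk = (n + num_threads - 1) / num_threads;  // ceiling division
    std::vector<long long> partial(num_threads, 0);
    std::vector<std::thread> workers;
    workers.reserve(num_threads);

    for (unsigned t = 0; t < num_threads; ++t) {
        const std::size_t begin = std::min(n, t * chunk);
        const std::size_t end = std::min(n, begin + chunk);
        workers.emplace_back([&data, &partial, t, begin, end] {
            partial[t] = std::accumulate(data.begin() + begin, data.begin() + end, 0LL);
        });
    }
    for (auto& w : workers) w.join();  // wait for every chunk to finish
    return std::accumulate(partial.begin(), partial.end(), 0LL);
}

// Convenience overload: use as many threads as the hardware reports.
long long parallel_sum(const std::vector<long long>& data) {
    const unsigned hw = std::thread::hardware_concurrency();  // may be 0 if unknown
    return parallel_sum(data, hw == 0 ? 1 : hw);
}
```

For example, `parallel_sum(std::vector<long long>{1, 2, 3, 4, 5}, 2)` returns 15. For very small inputs the cost of creating threads outweighs any speedup; the pattern pays off on large arrays.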

Requesting GPUs: MSU HPCC User Documentation

Aparapi allows developers to write native Java code that can be executed directly on a graphics card (GPU) by converting Java bytecode to an OpenCL kernel dynamically at runtime. A multicore Java application can likewise leverage native code for optimized performance. For CUDA specifically, the CUDA Programming Guide is the official, comprehensive resource on the CUDA programming model and on how to write code that executes on the GPU using the CUDA platform.

For builds, a network of PCs can give 2 to 10x compile speedups, depending on how optimized your code already is. Before resorting to networked builds, however, the fastest multi-core CPU and high-speed SSD available for your desktop can deliver gains with less hassle.
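
The CUDA programming model launches a kernel over a grid of thread blocks, and in a one-dimensional launch each thread typically derives the element it owns from `blockIdx.x * blockDim.x + threadIdx.x` (Aparapi kernels similarly query a per-work-item global ID). The plain C++ sketch below only emulates that index mapping on the CPU, running every "thread" sequentially. It is not CUDA code, and `Dim`, `saxpy_kernel`, and `saxpy` are hypothetical names chosen for illustration.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for CUDA's built-in index/dimension types (x only).
struct Dim {
    unsigned x;
};

// One "thread" of a SAXPY kernel: y[i] = a * x[i] + y[i].
// The index formula is the usual 1-D CUDA one; the bounds check guards
// the surplus threads in the last, partially filled block.
void saxpy_kernel(Dim blockIdx, Dim blockDim, Dim threadIdx,
                  float a, const std::vector<float>& x, std::vector<float>& y) {
    const std::size_t i =
        static_cast<std::size_t>(blockIdx.x) * blockDim.x + threadIdx.x;
    if (i < x.size()) {
        y[i] = a * x[i] + y[i];
    }
}

// Emulates a launch like saxpy_kernel<<<blocks, threads_per_block>>>(...)
// by running every (block, thread) pair sequentially on the CPU.
// Assumes y.size() == x.size() and threads_per_block > 0.
std::vector<float> saxpy(float a, const std::vector<float>& x,
                         std::vector<float> y, unsigned threads_per_block) {
    const std::size_t n = x.size();
    const std::size_t blocks = (n + threads_per_block - 1) / threads_per_block;  // round up
    for (std::size_t b = 0; b < blocks; ++b)
        for (unsigned t = 0; t < threads_per_block; ++t)
            saxpy_kernel(Dim{static_cast<unsigned>(b)}, Dim{threads_per_block},
                         Dim{t}, a, x, y);
    return y;
}
```

On a real GPU the (block, thread) pairs run concurrently rather than in nested loops, but each one computes the same index and touches exactly one element.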

GPU Acceleration: GPUs Have Thousands of Cores to Process Parallel

SCALE is a CUDA-compatible programming toolkit for ahead-of-time compilation of CUDA source code on AMD GPUs, with the aim of expanding support to other GPUs in the future. In TensorFlow, the simplest way to run on multiple GPUs, on one or many machines, is to use distribution strategies; TensorFlow's GPU guide is intended for users who have tried these approaches and found that they need fine-grained control over how TensorFlow uses the GPU.

Compute-heavy Java tasks can be offloaded to the GPU using JNI and CUDA, with reported performance improvements of 10 to 100 times in secure, data-parallel workloads. Likewise, by implementing its core processing algorithms in native code, an app can use the full capabilities of the CPU, resulting in faster image manipulation and seamless real-time processing.
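
Conceptually, synchronous data-parallel training (what TensorFlow's `tf.distribute.MirroredStrategy` does across the GPUs of one machine) gives each device a shard of the batch, computes local gradients, and combines them with an all-reduce. The C++ sketch below simulates that idea, with threads standing in for GPUs and a hypothetical one-parameter model `y_hat = w * x` under squared-error loss; it is not TensorFlow's API. Because the local gradient sums are added before dividing by the global batch size, the result matches the single-device gradient up to floating-point rounding.

```cpp
#include <cstddef>
#include <thread>
#include <vector>

// Gradient of (w * x - y)^2 with respect to w, summed (not averaged)
// over samples [begin, end) of the batch.
double grad_sum(double w, const std::vector<double>& xs, const std::vector<double>& ys,
                std::size_t begin, std::size_t end) {
    double g = 0.0;
    for (std::size_t i = begin; i < end; ++i)
        g += 2.0 * (w * xs[i] - ys[i]) * xs[i];
    return g;
}

// Simulated synchronous data parallelism: each "device" (a thread here) gets a
// contiguous shard of the batch and computes its local gradient sum; the sums
// are then combined (the all-reduce step) and divided by the global batch size.
// Assumes xs.size() == ys.size().
double data_parallel_gradient(double w, const std::vector<double>& xs,
                              const std::vector<double>& ys, unsigned num_devices) {
    if (num_devices == 0) num_devices = 1;
    const std::size_t n = xs.size();
    if (n == 0) return 0.0;
    std::vector<double> local(num_devices, 0.0);
    std::vector<std::thread> devices;
    for (unsigned d = 0; d < num_devices; ++d) {
        const std::size_t begin = n * d / num_devices;
        const std::size_t end = n * (d + 1) / num_devices;
        devices.emplace_back([&, d, begin, end] {
            local[d] = grad_sum(w, xs, ys, begin, end);
        });
    }
    for (auto& t : devices) t.join();
    double total = 0.0;
    for (double g : local) total += g;      // "all-reduce": sum the local gradients
    return total / static_cast<double>(n);  // average over the global batch
}
```

For example, with `xs = {1, 2, 3, 4}`, `ys = {2, 4, 6, 8}` and `w = 1`, the gradient is -15 whether it is computed on 1, 2, or 3 simulated devices.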

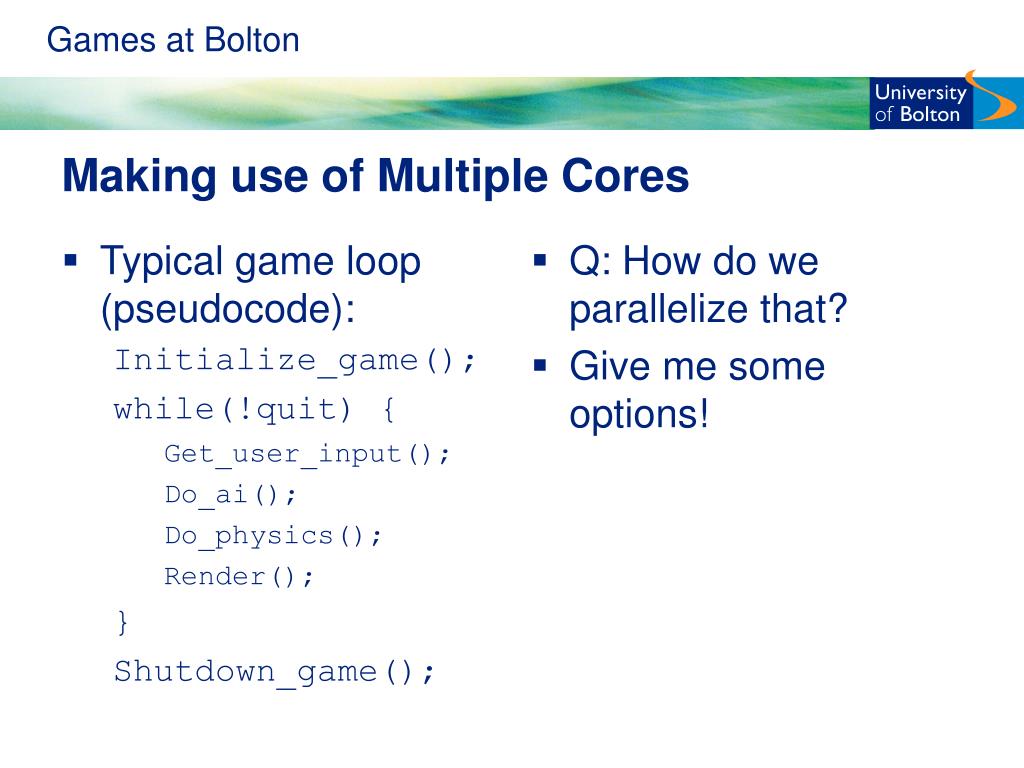
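
As a small example of moving an image-processing step into multithreaded native code, the sketch below converts an interleaved 8-bit RGB buffer to grayscale and splits the rows across threads. The function `to_grayscale` and the integer weights 77/150/29 (an approximation of the common 0.299/0.587/0.114 luma coefficients, scaled by 256) are assumptions for illustration, not code from any of the sources above.

```cpp
#include <cstddef>
#include <cstdint>
#include <thread>
#include <vector>

// Hypothetical helper: convert an interleaved 8-bit RGB image
// (width * height * 3 bytes) to 8-bit grayscale, splitting rows across threads.
// Weights 77/150/29 sum to 256, so the weighted sum is divided by 256 via >> 8.
std::vector<std::uint8_t> to_grayscale(const std::vector<std::uint8_t>& rgb,
                                       std::size_t width, std::size_t height,
                                       unsigned num_threads) {
    std::vector<std::uint8_t> gray(width * height);
    if (num_threads == 0) num_threads = 1;

    // Each call converts rows [row_begin, row_end); ranges never overlap.
    auto convert_rows = [&](std::size_t row_begin, std::size_t row_end) {
        for (std::size_t r = row_begin; r < row_end; ++r) {
            for (std::size_t c = 0; c < width; ++c) {
                const std::size_t p = r * width + c;
                const unsigned v = 77u * rgb[3 * p] + 150u * rgb[3 * p + 1] + 29u * rgb[3 * p + 2];
                gray[p] = static_cast<std::uint8_t>(v >> 8);
            }
        }
    };

    std::vector<std::thread> workers;
    for (unsigned t = 0; t < num_threads; ++t) {
        const std::size_t begin = height * t / num_threads;
        const std::size_t end = height * (t + 1) / num_threads;
        workers.emplace_back(convert_rows, begin, end);
    }
    for (auto& w : workers) w.join();
    return gray;
}
```

Each thread writes a disjoint range of rows in the output, so no synchronization is needed beyond the final `join`.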

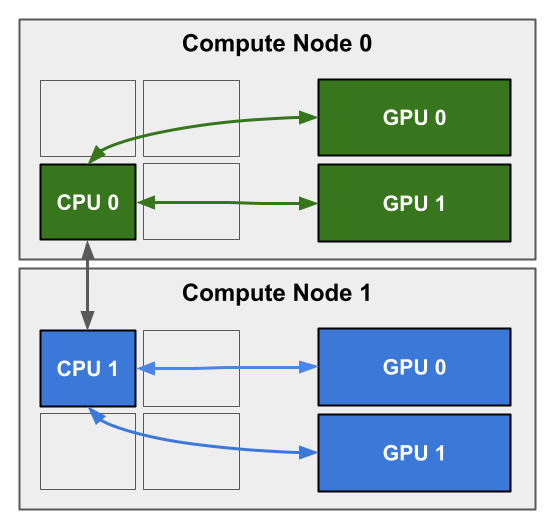
