Path Forward Beyond Simulators: Fast and Accurate GPU Execution Time Prediction for DNN Workloads

After identifying the relationships among directly known information (e.g., network structure, hardware theoretical computing capabilities), we discuss how to build a simple yet accurate performance model for DNN execution time. Today, DNNs' high computational complexity and sub-optimal device utilization present a major roadblock to democratizing DNNs. To reduce the execution time and…
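As an illustration of what "directly known information" can look like, the sketch below derives an operation count for a single 2D convolution layer from its shape and divides it by a device's theoretical peak throughput to get an idealized lower bound on execution time. This is not the paper's model: the `Conv2dShape` type, the function names, and the 10 TFLOP/s peak figure are hypothetical, and the count uses the standard two-operations-per-multiply-accumulate convention while ignoring bias, padding effects, and memory traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Conv2dShape:
    """Shape of one convolution layer (hypothetical helper type)."""
    batch: int
    in_channels: int
    out_channels: int
    kernel_h: int
    kernel_w: int
    out_h: int
    out_w: int


def conv2d_flops(s: Conv2dShape) -> int:
    """Floating-point operations, counting each multiply-accumulate as 2."""
    macs = (
        s.batch * s.out_channels * s.out_h * s.out_w
        * s.in_channels * s.kernel_h * s.kernel_w
    )
    return 2 * macs


def ideal_time_ms(flops: int, peak_tflops: float) -> float:
    """Idealized time if the device ran at its theoretical peak throughput."""
    if peak_tflops <= 0:
        raise ValueError("peak_tflops must be positive")
    return flops / (peak_tflops * 1e12) * 1e3


if __name__ == "__main__":
    # Assumed example layer: 3x3 convolution, 64 -> 64 channels, 56x56 output.
    layer = Conv2dShape(batch=1, in_channels=64, out_channels=64,
                        kernel_h=3, kernel_w=3, out_h=56, out_w=56)
    flops = conv2d_flops(layer)
    # 10 TFLOP/s is an assumed peak, not a figure for any specific GPU.
    print(flops, ideal_time_ms(flops, peak_tflops=10.0))
```

Measured kernel times normally sit above this bound because of the sub-optimal device utilization mentioned above, which is why a fitted model is needed rather than the theoretical peak alone.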

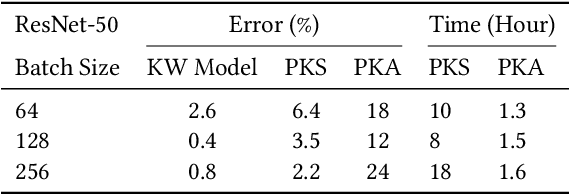

Guided by our observations, we develop a fast, linear-regression-based DNN execution time predictor. Our evaluation using various image classification models suggests our method can predict… This is the artifact for the paper "Path Forward Beyond Simulators: Fast and Accurate GPU Execution Time Prediction for DNN Workloads", to appear in MICRO 2023. Citation: Path Forward Beyond Simulators: Fast and Accurate GPU Execution Time Prediction for DNN Workloads, in the Proceedings of the International Symposium on Microarchitecture (MICRO), Toronto, Canada, October 2023.

Our observations on the dataset demonstrate prevalent linear relationships between GPU kernel execution times, operation counts, and input/output parameters of DNN layers. To break the GPU memory wall for scaling deep learning workloads, a variety of architecture and system techniques have been proposed recently. Their typical approaches include memory extension with flash memory and direct storage access.
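The linear relationship between kernel execution time and operation count can be illustrated with an ordinary least-squares fit. The sketch below is a simplified, single-feature stand-in, not the paper's predictor: the function names are hypothetical, the measurements are synthetic values made up for the example, and summing per-layer predictions to estimate a whole network is an assumption of this sketch.

```python
from typing import Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Closed-form least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two (x, y) pairs of equal length")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict_layer_ms(model: Tuple[float, float], gflops: float) -> float:
    """Predicted time for one layer given its operation count in GFLOPs."""
    slope, intercept = model
    return slope * gflops + intercept


def predict_network_ms(model: Tuple[float, float],
                       layer_gflops: Sequence[float]) -> float:
    """Assumption: network time is the sum of per-layer predictions."""
    return sum(predict_layer_ms(model, g) for g in layer_gflops)


if __name__ == "__main__":
    # Synthetic (not measured) data: operation counts in GFLOPs and
    # kernel times in milliseconds.
    gflops = [0.1, 0.2, 0.4, 0.8, 1.6]
    times_ms = [0.015, 0.020, 0.030, 0.050, 0.090]
    model = fit_line(gflops, times_ms)
    print(model, predict_network_ms(model, [0.3, 0.5]))
```

In this formulation the slope plays the role of time per unit of work and the intercept absorbs fixed per-kernel overhead. Extending the fit to several features, such as the input/output parameters mentioned above, would call for a multivariate least-squares solver.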


