Language-agnostic BERT Sentence Embedding (PDF)

The paper "Language-agnostic BERT Sentence Embedding", by Fangxiaoyu Feng and four other authors, adapts multilingual BERT to produce language-agnostic sentence embeddings for 109 languages.

The paper appeared in the Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 878–891, Dublin, Ireland.

A related line of work offers an easy and efficient way to extend existing sentence embedding models to new languages. The original (monolingual) model generates sentence embeddings for the source-language sentences, and a new system is then trained on translated sentences to mimic the original model, so that each translated sentence is mapped to the same location in the vector space as its original.

LaBSE itself is a language-agnostic BERT sentence embedding model supporting 109 languages. It outperforms the previous state of the art on various bi-text retrieval and mining tasks while also covering more languages.
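
With language-agnostic embeddings, bi-text retrieval reduces to nearest-neighbour search: each source sentence is paired with the target sentence whose embedding has the highest cosine similarity. The sketch below assumes the embeddings have already been computed by some encoder; the toy vectors and the function names `cosine` and `retrieve_translations` are hypothetical stand-ins, not part of the paper or any library. It uses plain cosine nearest neighbour; mining setups often refine this raw score (for example with margin-based scoring), which is omitted here.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def retrieve_translations(sources: List[Vector], targets: List[Vector]) -> List[int]:
    """For each source embedding, return the index of the most similar target embedding."""
    matches = []
    for s in sources:
        scores = [cosine(s, t) for t in targets]
        # argmax over all candidate target sentences
        matches.append(max(range(len(targets)), key=scores.__getitem__))
    return matches


# Hypothetical toy embeddings; a real pipeline would get these from the encoder.
english = [[0.9, 0.1, 0.0], [0.0, 0.8, 0.6]]
german = [[0.1, 0.7, 0.7], [0.95, 0.05, 0.1]]

print(retrieve_translations(english, german))  # → [1, 0]
```

Because the embedding space is shared across languages, the same function works for any language pair without language-specific logic.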

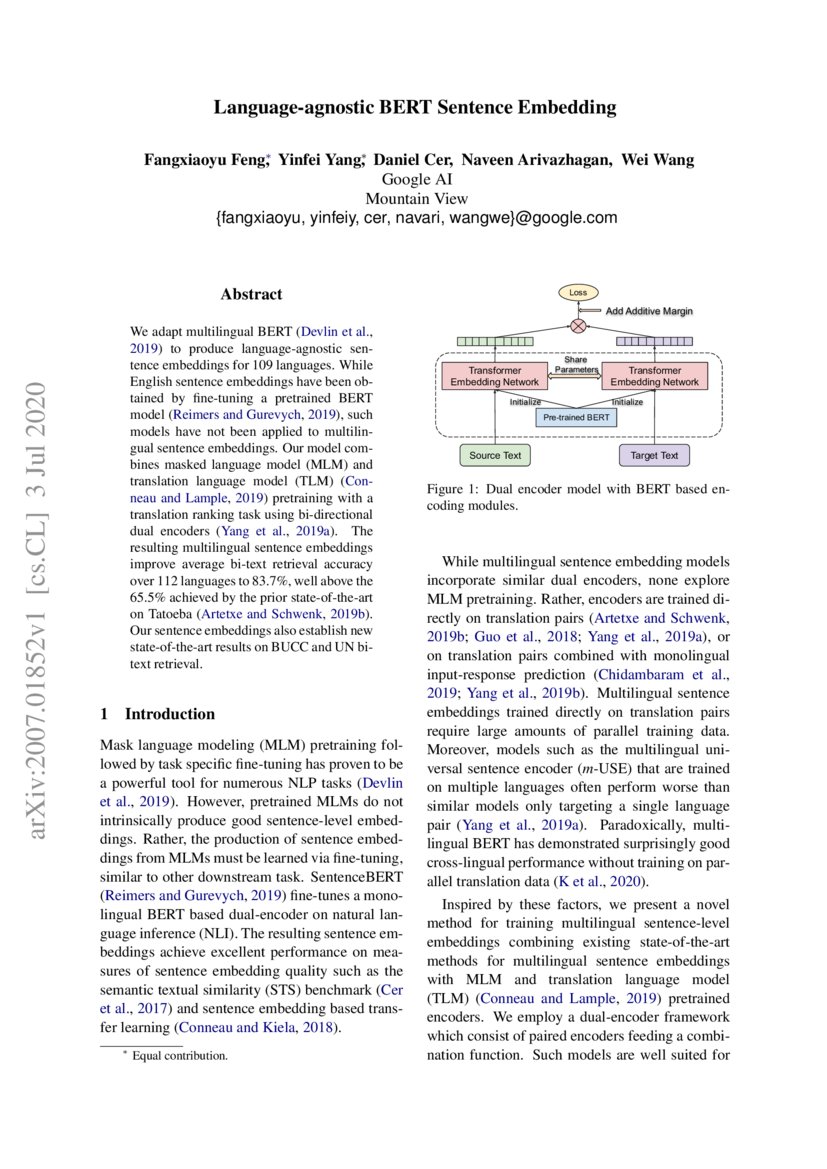

In "Language-agnostic BERT Sentence Embedding", the authors present a multilingual BERT embedding model, called LaBSE, that produces language-agnostic cross-lingual sentence embeddings for 109 languages. The paper's contributions include a publicly released multilingual sentence embedding model spanning 109 languages, along with thorough experiments and ablation studies on the impact of pre-training, negative sampling strategies, vocabulary choice, data quality, and data quantity.

The paper notes that while BERT is an effective method for learning monolingual sentence embeddings for semantic similarity and embedding-based transfer learning (Reimers and Gurevych, 2019), BERT-based cross-lingual sentence embeddings had yet to be explored. LaBSE learns language-agnostic sentence embeddings with BERT: it uses a dual-encoder architecture with pre-trained BERT models as the sentence encoders, instead of training the encoders from scratch.
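
A dual encoder of this kind is commonly trained with an in-batch translation ranking objective: each source sentence must rank its own translation above every other target in the batch, so the other targets act as negatives. The sketch below is one such objective, assuming an additive margin on the positive pair and a loss summed over both translation directions. It operates on precomputed embedding vectors rather than real BERT outputs, and the function names, the margin of 0.3 and the scale of 1.0 are illustrative assumptions, not code or settings taken from the paper.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def normalize(v: Vector) -> List[float]:
    """Scale a vector to unit L2 norm so dot products become cosine similarities."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


def ranking_loss(src: List[Vector], tgt: List[Vector],
                 margin: float = 0.3, scale: float = 1.0) -> float:
    """In-batch translation ranking loss with an additive margin.

    Row i of src and tgt form a translation pair; every other target in the
    batch serves as a negative for source i.
    """
    s = [normalize(v) for v in src]
    t = [normalize(v) for v in tgt]
    n = len(s)
    total = 0.0
    for i in range(n):
        logits = []
        for j in range(n):
            sim = sum(a * b for a, b in zip(s[i], t[j]))
            if i == j:
                sim -= margin  # additive margin applied to the positive pair only
            logits.append(scale * sim)
        # softmax cross-entropy with the positive at index i (stable log-sum-exp)
        m = max(logits)
        log_z = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_z - logits[i]
    return total / n


def bidirectional_loss(src: List[Vector], tgt: List[Vector],
                       margin: float = 0.3, scale: float = 1.0) -> float:
    """Sum of the source->target and target->source ranking losses."""
    return ranking_loss(src, tgt, margin, scale) + ranking_loss(tgt, src, margin, scale)


# Toy batch: correctly aligned pairs should score a lower loss than swapped ones.
aligned_src = [[1.0, 0.0], [0.0, 1.0]]
aligned_tgt = [[0.9, 0.1], [0.1, 0.9]]
swapped_tgt = [[0.1, 0.9], [0.9, 0.1]]
print(bidirectional_loss(aligned_src, aligned_tgt)
      < bidirectional_loss(aligned_src, swapped_tgt))  # True
```

Subtracting the margin only from the positive pair encourages each translation to beat the in-batch negatives by at least that amount, and summing both directions trains the encoder symmetrically for source-to-target and target-to-source retrieval.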

