Language-Agnostic BERT Sentence Embedding

We show that introducing a pre-trained multilingual language model dramatically reduces the amount of parallel training data required to achieve good performance by 80%. In "Language-agnostic BERT Sentence Embedding", we present a multilingual BERT embedding model, called LaBSE, that produces language-agnostic cross-lingual sentence embeddings for 109 languages.

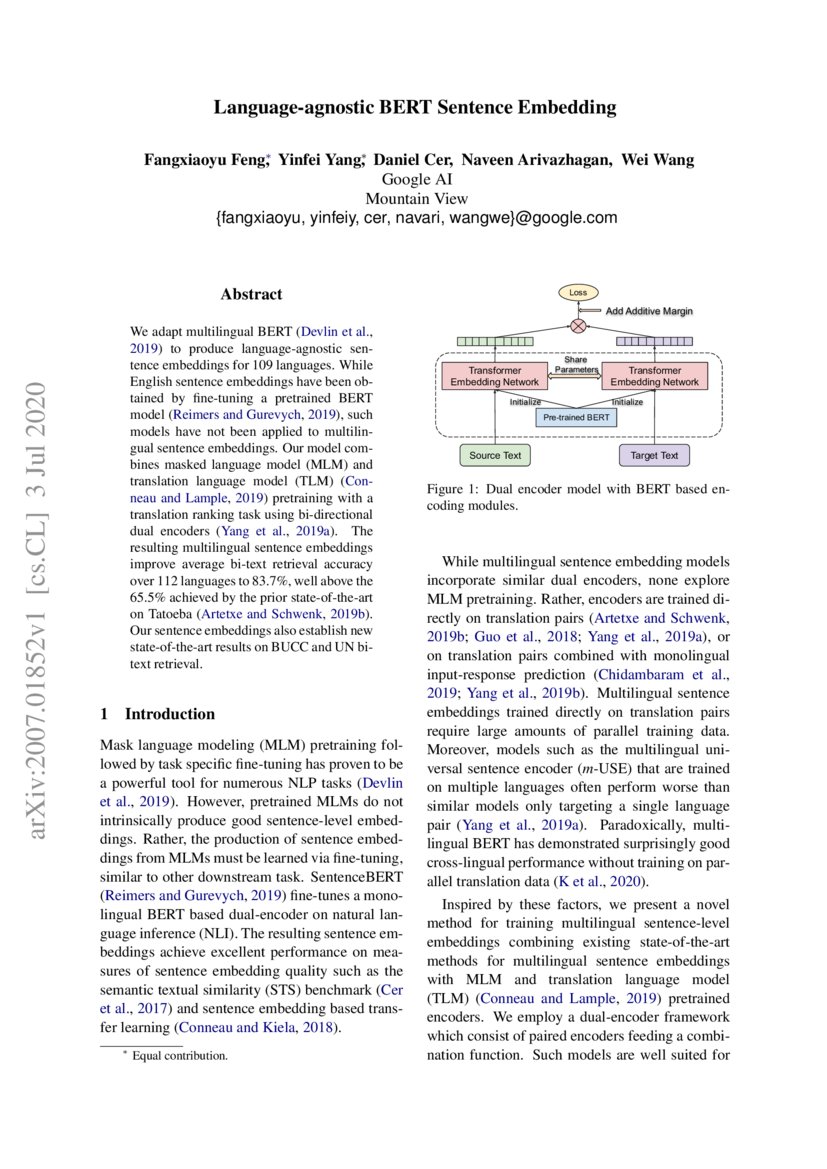

The Language-agnostic BERT Sentence Encoder (LaBSE) adapts multilingual BERT to produce language-agnostic sentence embeddings for 109 languages. Its pre-training process combines masked language modeling with translation language modeling. A related method offers an easy and efficient way to extend existing sentence embedding models to new languages: the original (monolingual) model generates sentence embeddings for the source language, and a new system is then trained on translated sentences to mimic the original model. While BERT is an effective method for learning monolingual sentence embeddings for semantic similarity and embedding-based transfer learning (Reimers and Gurevych, 2019), BERT-based cross-lingual sentence embeddings have yet to be explored.
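The mimicry idea described above can be sketched as a simple regression objective. The block below is a minimal illustration, not the training code of any released model: it assumes a mean-squared-error loss, and the names `mse`, `distillation_loss`, `teacher`, and `student` are hypothetical. The encoders are passed in as plain functions that map a sentence to a vector.

```python
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Encoder = Callable[[str], Vector]


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean squared error between two vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def distillation_loss(
    teacher: Encoder,
    student: Encoder,
    pairs: Sequence[Tuple[str, str]],
) -> float:
    """Average loss over (source sentence, translation) pairs.

    Assumption: the student is pushed to reproduce the teacher's
    embedding of the source sentence for BOTH the source sentence
    and its translation, so translations land close to each other.
    """
    if not pairs:
        raise ValueError("need at least one sentence pair")
    total = 0.0
    for source, translation in pairs:
        target = teacher(source)  # the teacher only ever sees the source language
        total += mse(target, student(source))
        total += mse(target, student(translation))
    return total / len(pairs)
```

In a real setup the student's parameters would be updated by gradient descent on this loss; the sketch only shows what quantity is being minimized.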

This paper presents a language-agnostic BERT sentence embedding (LaBSE) model supporting 109 languages. The model achieves state-of-the-art performance on various bi-text retrieval/mining tasks compared to the previous state of the art, while also providing increased language coverage. The contributions include a publicly released multilingual sentence embedding model, and thorough experiments and ablation studies to understand the impact of pre-training, negative sampling strategies, vocabulary choice, data quality, and data quantity.
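To make the bi-text retrieval task concrete, the sketch below matches each source sentence to its nearest target sentence in embedding space. It assumes cosine similarity as the score and uses hand-written toy vectors in place of real model outputs; `l2_normalize` and `retrieve` are hypothetical helper names.

```python
import math
from typing import List, Sequence


def l2_normalize(v: Sequence[float]) -> List[float]:
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def retrieve(
    source_embs: Sequence[Sequence[float]],
    target_embs: Sequence[Sequence[float]],
) -> List[int]:
    """For each source embedding, return the index of the most similar target."""
    targets = [l2_normalize(t) for t in target_embs]
    matches = []
    for s in source_embs:
        s_unit = l2_normalize(s)
        scores = [sum(a * b for a, b in zip(s_unit, t)) for t in targets]
        matches.append(max(range(len(scores)), key=scores.__getitem__))
    return matches


# Toy example: two "source language" vectors and two "target language" vectors.
source = [[1.0, 0.1], [0.0, 2.0]]
target = [[0.1, 3.0], [2.0, 0.0]]
print(retrieve(source, target))  # prints [1, 0]
```

With a language-agnostic encoder, a sentence and its translation should receive nearby embeddings, so this nearest-neighbor lookup recovers translation pairs.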

