Language Agnostic Multilingual Sentence Embedding Models For Semantic

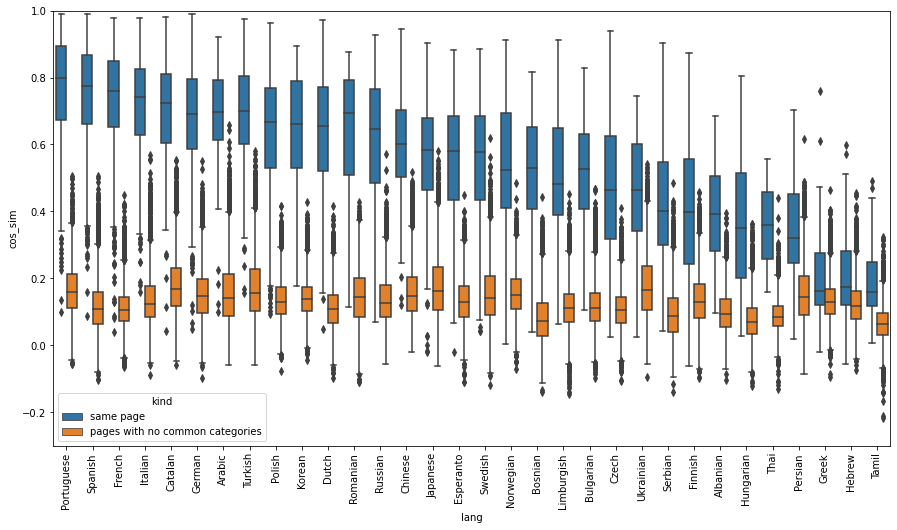

Sentence embeddings let us compare the semantics of sentences numerically, which is now essential for tasks such as semantic textual similarity, semantic search, and sentence clustering. We show that introducing a pre-trained multilingual language model reduces the amount of parallel training data required to achieve good performance by 80%.
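Comparing sentences "numerically" usually means measuring how closely their embedding vectors point in the same direction. The following is a minimal sketch of cosine similarity in plain Python. The four-dimensional vectors and the example sentences are made up for illustration; real models produce embeddings with hundreds of dimensions.

```python
import math


def cosine_similarity(u, v):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_u * norm_v)


# Hypothetical embeddings, hand-picked for illustration only.
emb_en = [0.9, 0.1, 0.3, 0.0]     # "The cat sleeps."
emb_de = [0.8, 0.2, 0.4, 0.1]     # "Die Katze schläft."
emb_other = [0.0, 0.9, 0.0, 0.4]  # an unrelated sentence

sim_translation = cosine_similarity(emb_en, emb_de)
sim_unrelated = cosine_similarity(emb_en, emb_other)
print(sim_translation > sim_unrelated)
```

In a language-agnostic embedding space, a sentence and its translation should score close to 1, while unrelated sentences should score much lower.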

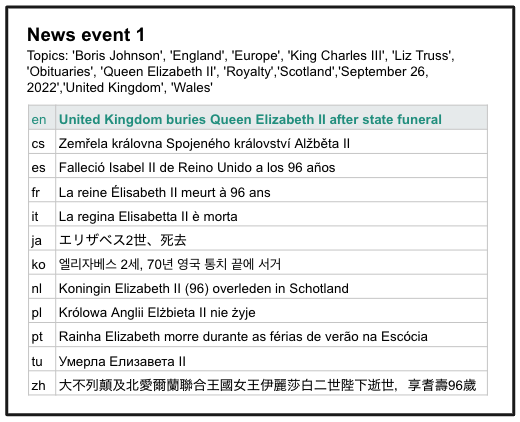

In “Language-agnostic BERT Sentence Embedding”, we present a multilingual BERT embedding model, called LaBSE, that produces language-agnostic cross-lingual sentence embeddings for 109 languages. LaBSE is Google's open-source multilingual sentence embedding model for semantic search and cross-lingual retrieval. It is a multilingual dual-encoder model that produces language-independent, semantically meaningful sentence representations for over 100 languages.
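To make the cross-lingual retrieval use case concrete, here is a rough sketch of semantic search over a small multilingual corpus. It is not LaBSE's own implementation: the function `semantic_search`, the stand-in encoder `toy_encode`, and the three-dimensional vectors are all hypothetical, chosen so the example runs without downloading a model. Any encoder that maps a list of strings to a list of vectors could be plugged in.

```python
import math


def _normalize(vec):
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def semantic_search(query, corpus, encode, top_k=3):
    """Rank corpus sentences by cosine similarity to the query.

    `encode` is any callable mapping a list of strings to a list of vectors.
    Returns up to `top_k` (score, sentence) pairs, best match first.
    """
    query_vec = _normalize(encode([query])[0])
    corpus_vecs = [_normalize(v) for v in encode(corpus)]
    scored = [
        (sum(q * c for q, c in zip(query_vec, vec)), sentence)
        for sentence, vec in zip(corpus, corpus_vecs)
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Stand-in encoder with hand-picked vectors (hypothetical, illustration only).
_TOY_VECTORS = {
    "Where is the train station?": [0.8, 0.2, 0.1],
    "¿Dónde está la estación de tren?": [0.9, 0.1, 0.2],
    "Ich mag Kaffee am Morgen.": [0.1, 0.9, 0.1],
    "Le musée ouvre à neuf heures.": [0.2, 0.1, 0.9],
}


def toy_encode(sentences):
    return [_TOY_VECTORS[s] for s in sentences]


corpus = [
    "¿Dónde está la estación de tren?",
    "Ich mag Kaffee am Morgen.",
    "Le musée ouvre à neuf heures.",
]
results = semantic_search("Where is the train station?", corpus, toy_encode, top_k=2)
for score, sentence in results:
    print(f"{score:.3f}  {sentence}")
```

With the sentence-transformers package, one might pass the `encode` method of a `SentenceTransformer` loaded from the `sentence-transformers/LaBSE` checkpoint in place of `toy_encode`; that pairing is an assumption here, not something the source specifies.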

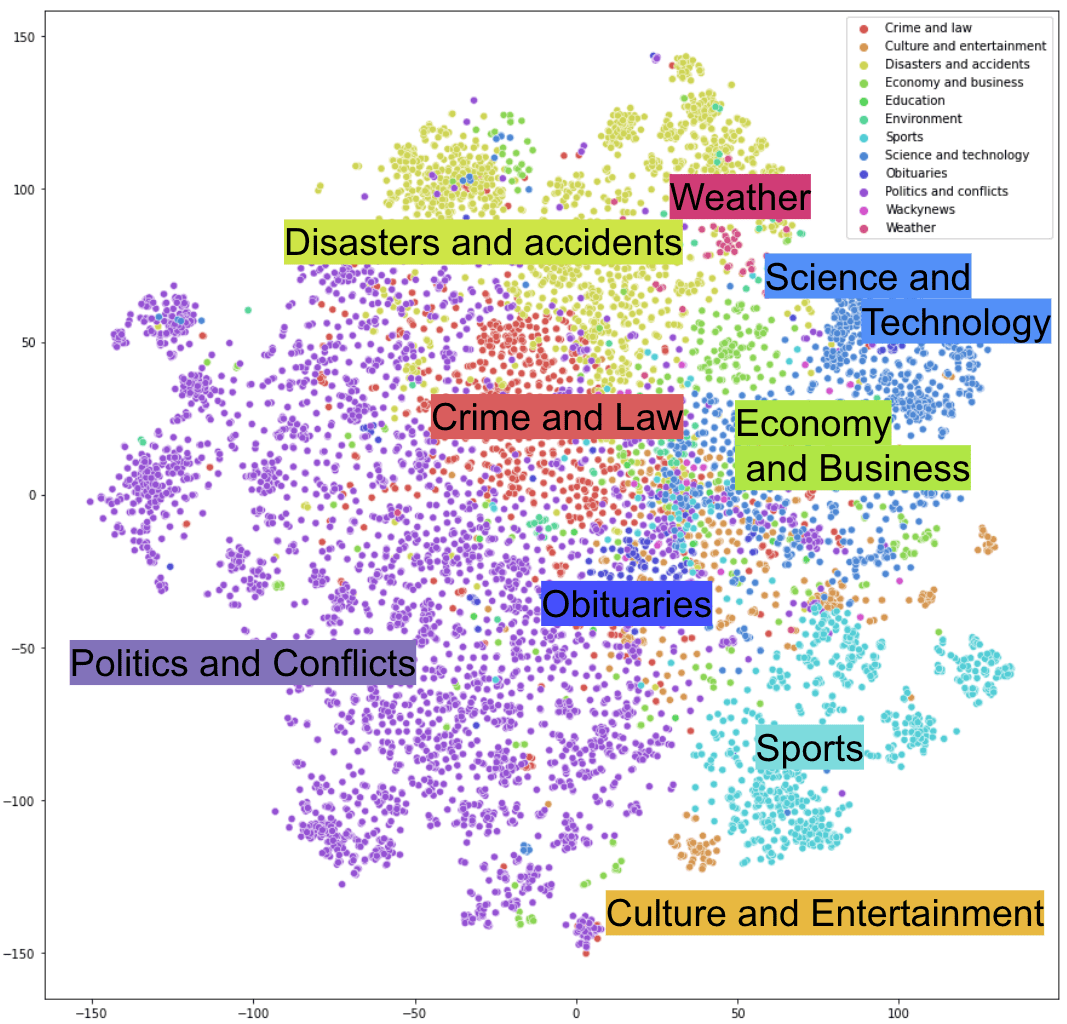

An easy and efficient way to extend existing sentence embedding models to new languages is to use the original (monolingual) model to generate sentence embeddings for the source language and then train a new system on translated sentences to mimic the original model.

While BERT is an effective method for learning monolingual sentence embeddings for semantic similarity and embedding-based transfer learning (Reimers and Gurevych, 2019), BERT-based cross-lingual sentence embeddings have yet to be explored.

LASER emphasizes language-agnostic sentence embeddings, while transformer-based models provide contextualized representations. The choice between the two depends on the specific…

Our goal is to transfer knowledge learned from multilingual STS training data on some language pairs to other language pairs, thereby unlocking new value for low-resource languages that do not have human STS annotations. Our model achieves this goal through a two-stage training pipeline.
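The idea of training a new system to mimic the original model on translated sentences can be written as a simple objective: the student's embedding of a source sentence, and its embedding of the translation, should both land near the teacher's embedding of the source sentence. The sketch below assumes mean squared error as the distance and sums the two terms with equal weight; the function names and the toy vectors are hypothetical, and the text above does not specify the exact loss.

```python
def mse(u, v):
    """Mean squared error between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum((a - b) ** 2 for a, b in zip(u, v)) / len(u)


def distillation_loss(teacher_src, student_src, student_tgt):
    """Loss for one (source, translation) sentence pair.

    teacher_src: teacher embedding of the source sentence (the fixed target)
    student_src: student embedding of the same source sentence
    student_tgt: student embedding of the translated sentence
    """
    return mse(teacher_src, student_src) + mse(teacher_src, student_tgt)


def batch_distillation_loss(batch):
    """Average the pair loss over (teacher_src, student_src, student_tgt) triples."""
    if not batch:
        raise ValueError("batch must not be empty")
    return sum(distillation_loss(*triple) for triple in batch) / len(batch)


# Hypothetical two-dimensional embeddings, for illustration only.
perfect = distillation_loss([1.0, 2.0], [1.0, 2.0], [1.0, 2.0])
imperfect = distillation_loss([0.0, 0.0], [1.0, 1.0], [0.0, 2.0])
print(perfect, imperfect)
```

Minimizing a loss of this shape pulls translations toward the same point in the embedding space as their source sentences, which is what makes the resulting student embeddings comparable across languages.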
