Multilingual Embeddings

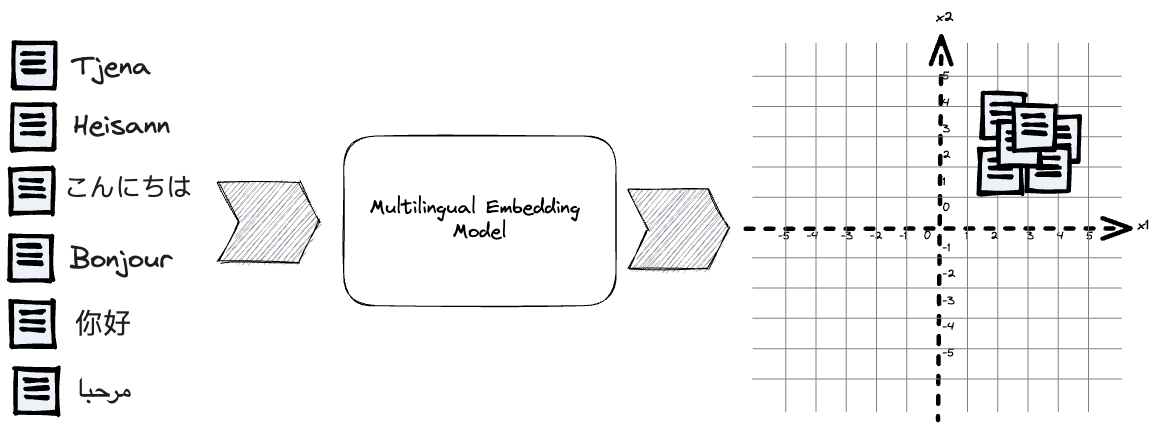

Simplify Search With Multilingual Embedding Models (Vespa Blog)

A multilingual embedding model handles multiple languages in the same vector space. When used for retrieval, the model must find the correct document among tens of thousands of reviews in the same language that often discuss similar products and topics.

Multilingual embeddings are continuous vector representations that map words from multiple languages into a shared space, so that semantically similar expressions lie close together. They rely on methods such as dictionary-based linear alignment, geometric manifold rotations, and unsupervised adversarial training to integrate language-specific features. Evaluations on intrinsic (similarity.
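As a rough illustration of the dictionary-based linear alignment mentioned above, the sketch below solves the orthogonal Procrustes problem with NumPy: given embeddings for word pairs from a bilingual dictionary, it finds the rotation that maps one language's vectors onto the other's. The function name `procrustes_align` and the toy data are assumptions made for this example; the source does not say which alignment variant any particular model uses.

```python
import numpy as np


def procrustes_align(src: np.ndarray, tgt: np.ndarray) -> np.ndarray:
    """Return the orthogonal matrix W minimizing ||src @ W - tgt||_F.

    Row i of `src` and row i of `tgt` are the embeddings of one
    dictionary pair (a word and its translation).
    """
    u, _, vt = np.linalg.svd(src.T @ tgt)
    return u @ vt


# Toy data (hypothetical): the "target language" space is simply a
# rotated copy of the "source language" space.
rng = np.random.default_rng(0)
src_vecs = rng.normal(size=(50, 4))
true_rotation, _ = np.linalg.qr(rng.normal(size=(4, 4)))
tgt_vecs = src_vecs @ true_rotation

w = procrustes_align(src_vecs, tgt_vecs)
aligned = src_vecs @ w  # source vectors mapped into the target space
```

Real embedding spaces are not exact rotations of one another, so in practice the aligned vectors only approximate their translations and the fit is judged on held-out dictionary pairs.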

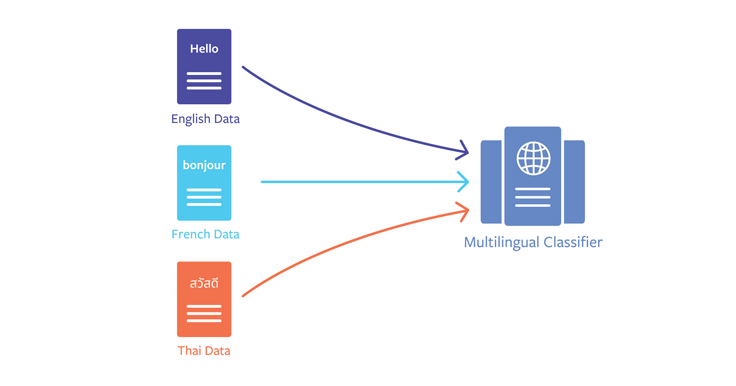

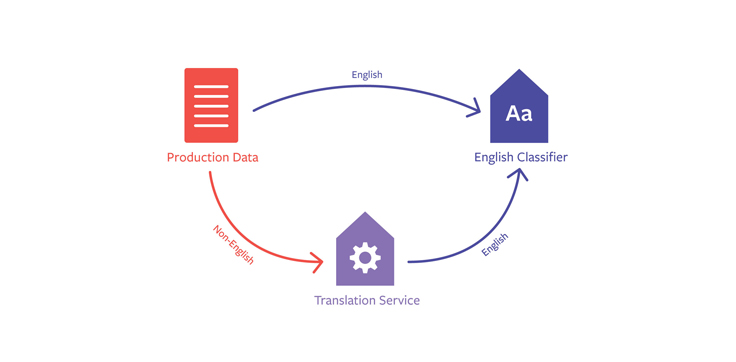

Under the Hood: Multilingual Embeddings (Engineering at Meta)

The best multilingual embedding model: Most embedding models were built for English and then internationalized as an afterthought. They were trained primarily on English text, fine-tuned on some multilingual data, and shipped with claims of multilingual support that hold up reasonably well for Western European languages and collapse under the weight of anything more demanding. zembed 1 by.

The main aim of learning cross-lingual embeddings is to preserve bilingual or multilingual translation equivalence in the learned shared space. The resulting shared space supplements the knowledge spaces of limited languages.

Sentence Transformers documentation: Sentence Transformers (a.k.a. SBERT) is the go-to Python module for using and training state-of-the-art embedding and reranker models. It can be used to compute embeddings from text, images, audio, or video using Sentence Transformer models, to calculate similarity scores using Cross Encoder (a.k.a. reranker) models, or to generate.

Microsoft recently launched Harrier OSS v1, a breakthrough in open-source multilingual embedding models designed to solve the "silent failure" of semantic search. These embeddings function as high-dimensional coordinate mappings that determine whether a search engine accurately recognizes user intent or simply scans for surface-level keywords. By utilizing vector space similarity metrics.
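To make the idea of a vector space similarity metric concrete, here is a minimal sketch of cosine-similarity ranking with NumPy. The three-dimensional vectors are hand-written stand-ins, not output from any real model, and `cosine_scores` is a hypothetical helper; in practice the vectors would come from an embedding model.

```python
import numpy as np


def cosine_scores(query: np.ndarray, docs: np.ndarray) -> np.ndarray:
    """Cosine similarity between one query vector and each document row."""
    query = query / np.linalg.norm(query)
    docs = docs / np.linalg.norm(docs, axis=1, keepdims=True)
    return docs @ query


# Hypothetical embeddings: two documents about a cat on a sofa (Spanish
# and French) and one unrelated English document.
doc_texts = ["gato en el sofá", "stock market report", "chat sur le canapé"]
doc_vecs = np.array([
    [0.9, 0.1, 0.0],
    [0.0, 0.2, 0.9],
    [0.8, 0.3, 0.1],
])
query_vec = np.array([1.0, 0.2, 0.0])  # stands in for "cat on the couch"

scores = cosine_scores(query_vec, doc_vecs)
ranking = [doc_texts[i] for i in np.argsort(-scores)]  # best match first
```

With these toy vectors, both cat documents rank above the unrelated one even though neither shares a keyword with an English query, which is the behavior a shared multilingual space is meant to provide.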

In this blog post, we summarize the history of embeddings research, detail the training regime of a modern embeddings model, present a new multilingual embedding benchmark, and investigate whether it is possible to fine-tune multilingual capability into a pretrained monolingual model.

"Gemini Embedding 2 is the foundation for Sparkonomony's creative economic equality engine. Its native multimodality slashes our latency by up to 70% by removing LLM inference and nearly doubles semantic similarity scores for text-image and text-video pairs, leaping from 0.4 to 0.8."

The training procedure adheres to the English E5 model recipe, involving contrastive pre-training on 1 billion multilingual text pairs, followed by fine-tuning on a combination of labeled datasets.

The data source is based on the European AI Act, and the models cover some of the latest OpenAI and open-source embedding models (as of 02/2024) for dealing with multilingual data.
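The contrastive pre-training on text pairs mentioned above can be sketched with an InfoNCE-style loss that uses in-batch negatives: each query should score its own paired passage higher than every other passage in the batch. This is one common form of contrastive objective, shown here as an assumption; the text does not spell out the exact loss, and the function name, temperature value, and random "embeddings" are illustrative only.

```python
import numpy as np


def info_nce_loss(queries: np.ndarray, passages: np.ndarray,
                  temperature: float = 0.05) -> float:
    """Mean cross-entropy where row i of `queries` should match row i of
    `passages`; all other rows in the batch act as negatives."""
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    p = passages / np.linalg.norm(passages, axis=1, keepdims=True)
    logits = (q @ p.T) / temperature
    # Subtract the row-wise max for numerical stability before the softmax.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))


# Toy batch (hypothetical): matched queries are near-copies of their
# passages; reversing the batch order pairs every query with a wrong passage.
rng = np.random.default_rng(0)
passages = rng.normal(size=(8, 16))
matched_queries = passages + 0.01 * rng.normal(size=(8, 16))
shuffled_queries = matched_queries[::-1]

loss_matched = info_nce_loss(matched_queries, passages)
loss_shuffled = info_nce_loss(shuffled_queries, passages)
```

The loss is low when paired texts already sit close together and high when they do not, so minimizing it over translation or paraphrase pairs pulls equivalent texts from different languages toward the same region of the space.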
