Building Multimodal Embeddings: A Step-by-Step Guide

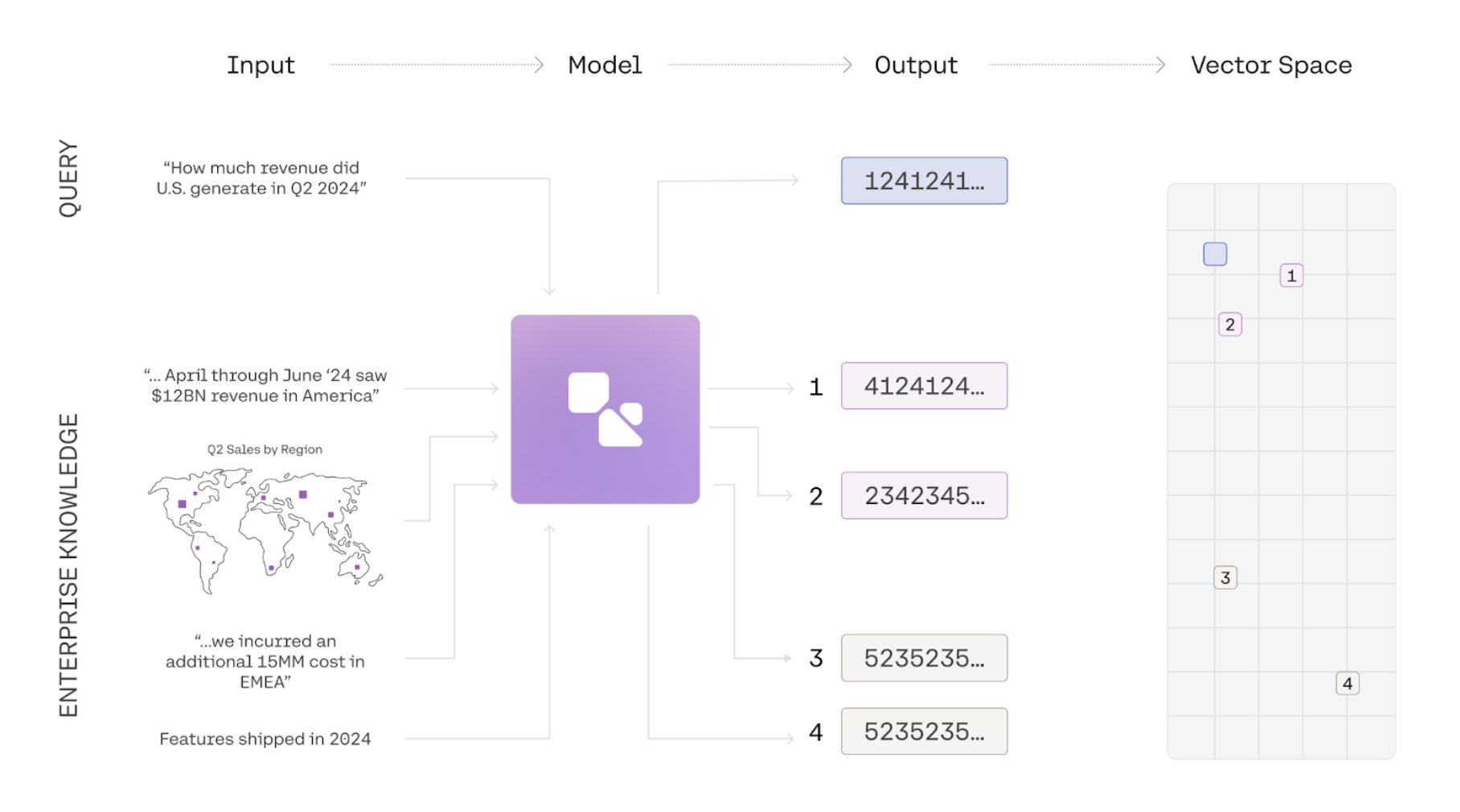

Multimodal Embeddings: Unifying Visual and Text Data (Cohere Blog)

Multimodal embeddings are transforming how machines understand and process data across text, images, audio, and more. By aligning these diverse data types in a shared vector space, these models enable applications such as content retrieval, creative generation, and advanced reasoning tasks.

A step-by-step guide to building a unified search system for text, images, and audio: learn to select models, build vector indexes, and create a cross-modal query API.
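To make the "shared space" idea concrete, here is a minimal sketch of a cross-modal query over a brute-force in-memory index. It assumes that some embedding model has already mapped every item, whatever its modality, to a vector of the same dimension. The `TinyVectorIndex` class, the item names, and the 3-dimensional toy vectors are all hypothetical stand-ins, not the output or API of any particular model or vector database.

```python
import numpy as np


def normalize(vectors: np.ndarray) -> np.ndarray:
    """Scale each row to unit length so a dot product equals cosine similarity."""
    norms = np.linalg.norm(vectors, axis=1, keepdims=True)
    return vectors / np.clip(norms, 1e-12, None)


class TinyVectorIndex:
    """Brute-force index over embeddings from any modality (hypothetical helper)."""

    def __init__(self, dim: int):
        self.dim = dim
        self.vectors = np.empty((0, dim), dtype=np.float32)
        self.items = []  # one (modality, item_id) pair per stored row

    def add(self, modality: str, item_id: str, vector) -> None:
        row = normalize(np.asarray(vector, dtype=np.float32).reshape(1, self.dim))
        self.vectors = np.vstack([self.vectors, row])
        self.items.append((modality, item_id))

    def search(self, query_vector, k: int = 3):
        """Return the k most similar items as (modality, item_id, score) tuples."""
        query = normalize(
            np.asarray(query_vector, dtype=np.float32).reshape(1, self.dim)
        )[0]
        scores = self.vectors @ query      # cosine similarity against every row
        top = np.argsort(-scores)[:k]      # indices of the highest scores first
        return [(self.items[i][0], self.items[i][1], float(scores[i])) for i in top]


# Toy vectors stand in for real embeddings of an image, an audio clip, and a text file.
index = TinyVectorIndex(dim=3)
index.add("image", "dog.jpg", [0.9, 0.1, 0.0])
index.add("audio", "bark.wav", [0.8, 0.3, 0.1])
index.add("text", "tax-form.txt", [0.0, 0.1, 0.9])

# A query vector, imagined here as the embedding of a text query about dogs.
results = index.search([1.0, 0.2, 0.0], k=2)
for modality, item_id, score in results:
    print(f"{modality:5s} {item_id:14s} {score:.3f}")
```

Because every vector lives in the same space, one query can return an image and an audio clip side by side. A production system would typically replace the brute-force scan with an approximate nearest-neighbor index.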

Unlocking the Power of Multimodal Embeddings (Cohere)

Multimodal embeddings allow AI systems to search and reason across text, images, audio, and video in their native formats. This blog covers the key intuitions behind how this all works and walks through three practical implementations using Weaviate and Gemini.

A step-by-step guide to building a unified search pipeline using Gemini Embedding 2 to index and query text, images, audio, video, and PDFs in one vector store.

Learn how to extract, chunk, index, and search multimodal content using an indexer and skills.

In this post, I demonstrated how to build a powerful multimodal search engine using Amazon Titan embeddings and LangChain in a Jupyter notebook environment. You explored key components like generating embeddings, text segmentation, vector storage, and image search capabilities.
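The chunking (text segmentation) step mentioned above can be sketched as a simple word-based splitter with overlap. This is only an illustration under assumed settings: the `chunk_text` function and its `chunk_size` and `overlap` parameters are hypothetical, and the tools named in the snippets ship their own splitters with different options.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into word-based chunks, repeating `overlap` words between neighbors."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


# Ten placeholder words, split into windows of 4 words that share 1 word.
sample = " ".join(f"w{i}" for i in range(10))
chunks = chunk_text(sample, chunk_size=4, overlap=1)
for chunk in chunks:
    print(chunk)
```

Overlap keeps a sentence that straddles a boundary retrievable from either neighboring chunk, at the cost of embedding some words twice.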

The History of Embeddings: Multimodal Embeddings

This example demonstrates how to create text-to-image embeddings using the DiffusionDB dataset and the Vertex AI multimodal embeddings model. The embeddings are uploaded to the Vertex AI Vector Search service, a high-scale, low-latency solution for finding similar vectors in a large corpus.

Here’s a step-by-step guide to setting up multimodal search in OpenSearch.

1. Create and deploy your multimodal embedding model. First, set up your multimodal embedding model. For this tutorial, we’ll use the Titan Multimodal Embeddings model. A connector facilitates communication between OpenSearch and your external ML model.

In this comprehensive guide, we’ll explore Qwen3 multimodal embeddings, understand the mathematics behind them, build practical applications, and prepare you for both technical interviews and real-world implementations.

A step-by-step guide to building a real image matching and classification project using Google's new Gemini Embedding 2.
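One common way to classify with embeddings, shown here as a hedged sketch rather than the method used in any of the guides above, is to compare an image's embedding with the embedding of each candidate label and pick the closest. The `classify` helper, the label names, and the 2-dimensional toy vectors are hypothetical; real vectors would come from a multimodal embedding model.

```python
import numpy as np


def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    """Cosine of the angle between two non-zero vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def classify(image_vector, label_vectors: dict) -> tuple:
    """Return (best_label, score) by cosine similarity to each label embedding."""
    image = np.asarray(image_vector, dtype=float)
    scores = {
        label: cosine_similarity(image, np.asarray(vector, dtype=float))
        for label, vector in label_vectors.items()
    }
    best = max(scores, key=scores.get)
    return best, scores[best]


# Toy stand-ins: embeddings of the label texts "cat" and "dog", and of one image.
label_vectors = {"cat": [0.9, 0.1], "dog": [0.1, 0.9]}
label, score = classify([0.2, 0.8], label_vectors)
print(label, round(score, 3))
```

The same comparison also serves image matching: replace the label vectors with embeddings of reference images and return the nearest one.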

