Inducing Language-Agnostic Multilingual Representations

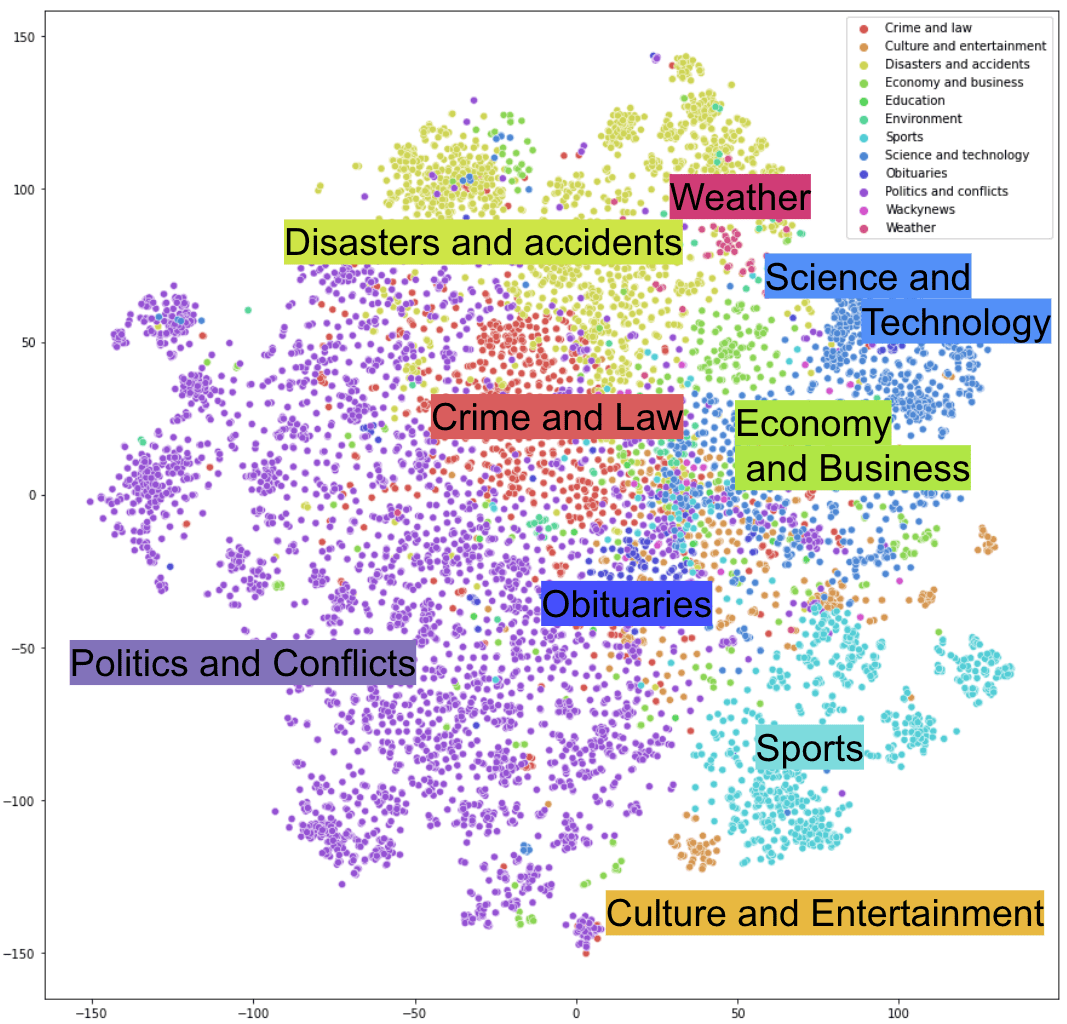

Inducing Language-Agnostic Multilingual Representations (Underline)

Cross-lingual representations have the potential to make NLP techniques available to the vast majority of languages in the world. However, they currently require large pretraining corpora or access to typologically similar languages. Our experiments demonstrate the language-agnostic behavior of our multilingual representations, which points to the potential for zero-shot cross-lingual transfer to distant and low-resource languages.

Towards Language-Agnostic Universal Representations (DeepAI)

Pre-trained multilingual representations promise to make the current best NLP models available even for low-resource languages, and more broadly to make cross-lingual systems available to the vast majority of languages in the world. However, they currently require large pretraining corpora or assume access to typologically similar languages. We comparatively evaluate three approaches that address these challenges by removing language-specific information from multilingual representations, thereby learning language-agnostic representations.
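
The passage does not spell out the three approaches. As a hedged illustration of what "removing language-specific information" can mean in practice, the sketch below standardizes the embeddings of each language separately, assuming that part of the language identity signal lives in per-language means and variances. It uses NumPy; the function name `standardize_per_language` and its `eps` parameter are hypothetical, and this is not presented as the implementation used in the work described.

```python
import numpy as np


def standardize_per_language(embeddings, languages, eps=1e-8):
    """Give each language's embeddings zero mean and unit variance per dimension.

    Hypothetical helper for illustration only.
    embeddings: (n, d) array-like of sentence or token vectors.
    languages:  length-n sequence with the language code of each row.
    eps:        small constant that avoids division by zero.
    """
    x = np.asarray(embeddings, dtype=float)
    langs = np.asarray(languages)
    out = np.empty_like(x)
    for lang in np.unique(langs):
        rows = langs == lang
        block = x[rows]
        # Subtract this language's own mean and divide by its own spread,
        # so per-language offsets and scales no longer identify the language.
        out[rows] = (block - block.mean(axis=0)) / (block.std(axis=0) + eps)
    return out
```

Because every language ends up with the same per-dimension mean and variance, those statistics alone can no longer tell languages apart, while the relative positions of vectors within each language are kept up to a per-dimension rescaling.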

Language-Agnostic Multilingual Information Retrieval with Contrastive

Language-agnostic models provide a versatile way to convert linguistic units from different languages into a shared vector representation space. The relevant work on multilingual sentence embeddings has reportedly reached low error rates in cross-lingual similarity search tasks. As internet user communities and the information on the web grow exponentially, meaningful search in a multilingual setting is required. It is crucial to develop a simple-to-use, granular, and adaptable knowledge representation system that could constitute the core of the Semantic Web.

In this work, we provide a novel view of projecting away language-specific factors from a multilingual embedding space. Specifically, we discover that there exists a low-rank subspace that primarily encodes information irrelevant to semantics (e.g., syntactic information).
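
The low-rank-subspace idea and the cross-lingual similarity search mentioned above can be illustrated together. This minimal NumPy sketch assumes that the language-specific subspace can be estimated from the differences between per-language mean embeddings via a singular value decomposition; the helpers `language_subspace`, `project_out` and `nearest_neighbors` and the `rank` parameter are hypothetical, and the procedure is an illustration in the spirit of the text rather than the exact method of the quoted work.

```python
import numpy as np


def language_subspace(embeddings, languages, rank):
    """Estimate an orthonormal (rank, d) basis for language-identity directions.

    Hypothetical helper. The per-language mean vectors are stacked and
    centered, so only the differences between languages remain; their top
    right singular vectors span the estimated subspace. `rank` should not
    exceed the number of languages minus one, since the centered means span
    at most that many directions.
    """
    x = np.asarray(embeddings, dtype=float)
    langs = np.asarray(languages)
    means = np.stack([x[langs == lang].mean(axis=0) for lang in np.unique(langs)])
    means -= means.mean(axis=0)
    _, _, vt = np.linalg.svd(means, full_matrices=False)
    return vt[:rank]


def project_out(embeddings, basis):
    """Remove from every row its component inside span(basis)."""
    x = np.asarray(embeddings, dtype=float)
    return x - (x @ basis.T) @ basis


def nearest_neighbors(queries, candidates):
    """Return, for each query row, the index of the most cosine-similar candidate."""
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    c = candidates / np.linalg.norm(candidates, axis=1, keepdims=True)
    return np.argmax(q @ c.T, axis=1)
```

Centering the language means before the SVD keeps only the directions along which languages differ, so with L languages at most L-1 directions carry information. After `project_out`, cross-lingual retrieval reduces to plain cosine nearest-neighbor search in the shared space, for example `nearest_neighbors(project_out(X_en, B), project_out(X_de, B))` with `B = language_subspace(X, langs, rank=1)`.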

A Language-Agnostic Multilingual Streaming On-Device ASR System (DeepAI)

Language-Agnostic Multilingual Sentence Embedding Models for Semantic
