What Is Mixture Of Experts

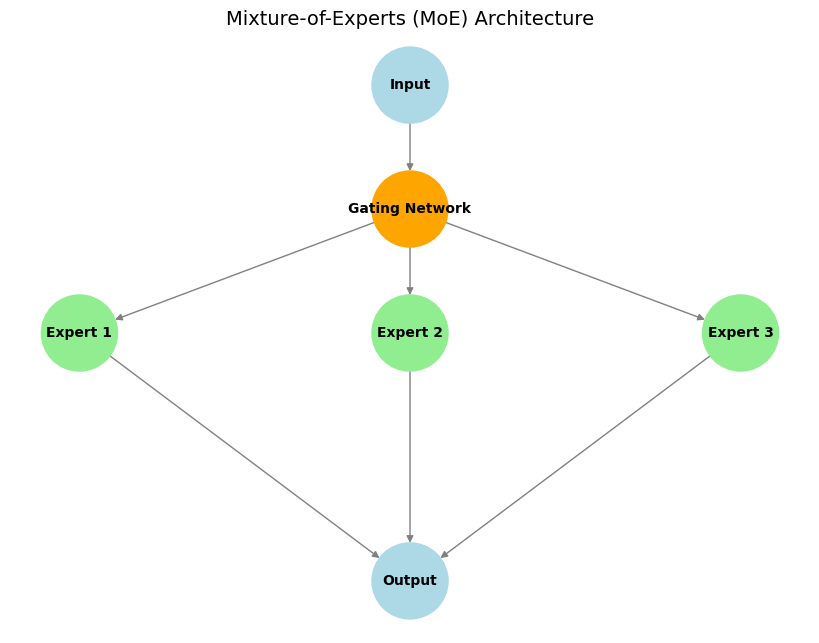

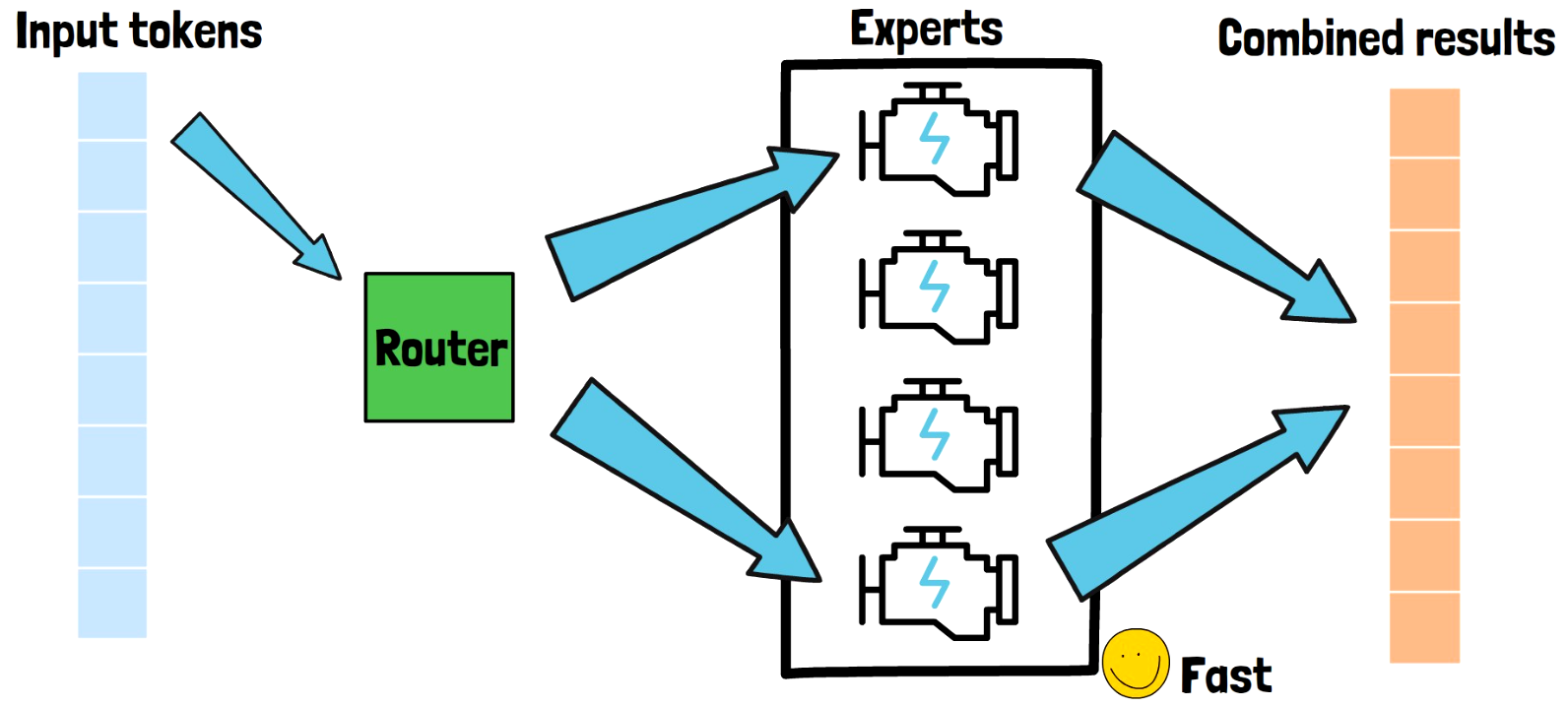

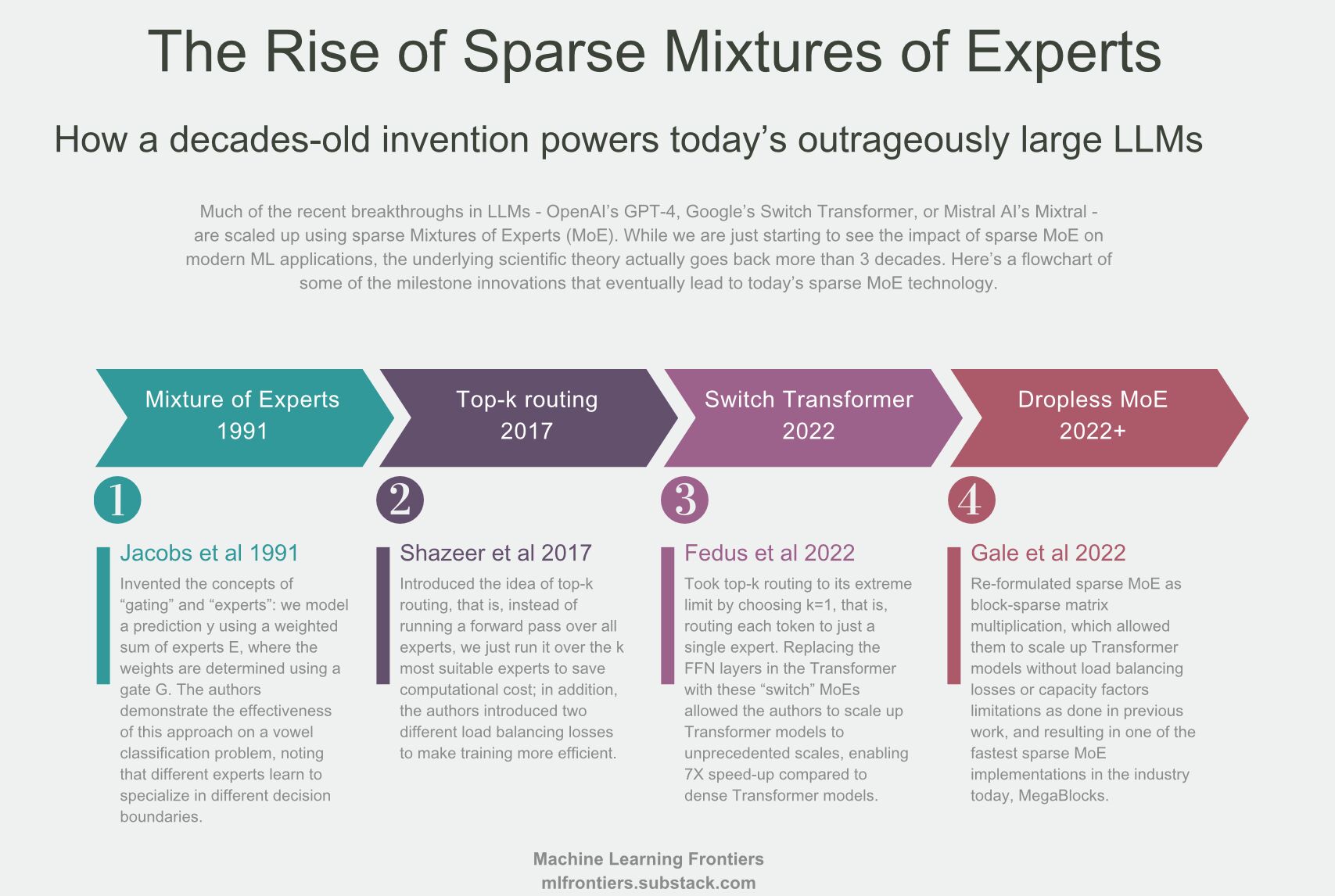

What is mixture of experts? Mixture of experts (MoE) is a machine learning approach that divides an artificial intelligence (AI) model into separate sub-networks (or “experts”), each specializing in a subset of the input data, to jointly perform a task. In the context of transformer models, an MoE consists of two main elements. First, sparse MoE layers are used instead of dense feed-forward network (FFN) layers; each MoE layer has a certain number of experts (e.g. 8), where each expert is a neural network. Second, a gating network (or router) decides which experts process a given input.
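One common way to write the output of a sparse MoE layer is sketched below. The symbols are assumptions introduced here for illustration, not notation from the text above: $E_i$ is the $i$-th expert network, $g_i(x)$ is the weight a gating function assigns to that expert for input $x$, $N$ is the number of experts (e.g. 8), and $\mathcal{T}(x)$ is the set of $k$ experts selected for $x$.

$$
y = \sum_{i \in \mathcal{T}(x)} g_i(x)\, E_i(x), \qquad |\mathcal{T}(x)| = k \le N, \qquad \sum_{i \in \mathcal{T}(x)} g_i(x) = 1
$$

With $k = N$ every expert runs on every input and the layer is dense. With $k$ much smaller than $N$, only a few experts are evaluated per input, which is what makes the layer sparse.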

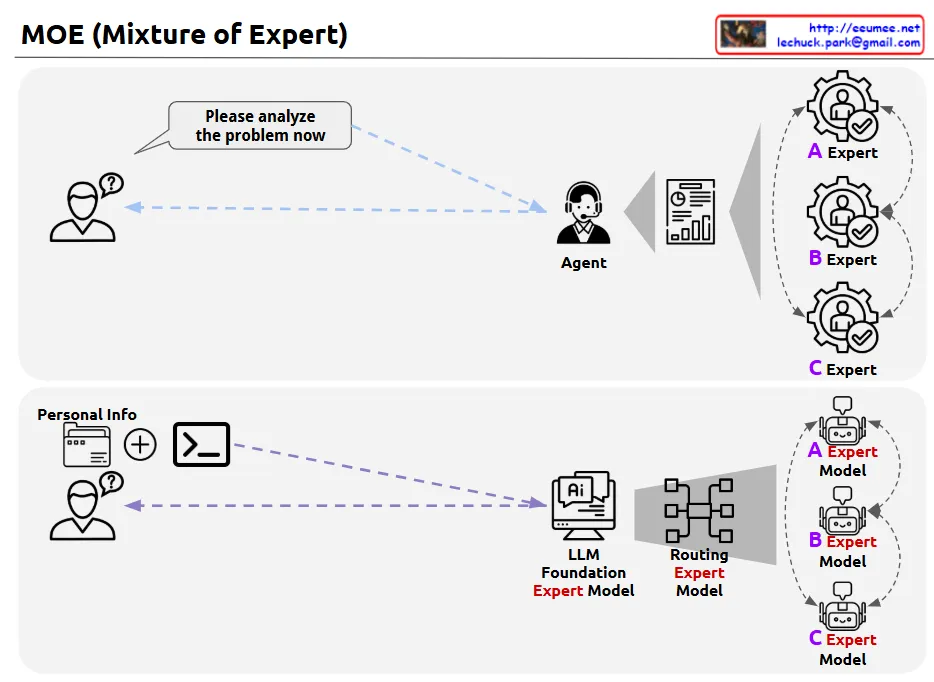

Mixture of experts (MoE) is a machine learning technique where multiple expert networks (learners) are used to divide a problem space into homogeneous regions [1]. Imagine an AI model as a team of specialists, each with their own unique expertise. An MoE model operates on this principle by dividing a complex task among smaller, specialized networks known as “experts.” Dividing tasks among multiple specialized sub-models in this way is intended to improve model performance.

More generally, an MoE model is any machine learning model composed of multiple smaller specialized models (experts) and a gating or routing network that selects which expert is used for any given input. Instead of using one big network for every input, the model has many smaller expert networks and activates only a few of them for each input. This design aims to improve efficiency and scalability by dynamically selecting experts to handle different parts of an input. The MoE model [8], [9] builds on ensemble learning, among other methods: it brings together multiple specialized sub-models (i.e., “expert networks”) to collaboratively handle complex tasks.
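The routing idea described above (a gating network scores the experts and only a few of them run for each input) can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a reference implementation: the names `make_expert`, `moe_forward`, `gate_weights`, and `top_k` are hypothetical, each expert is reduced to a single random linear map (real experts are usually feed-forward networks), and the gate is an untrained linear scorer followed by a softmax over the selected experts.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(dim, rng):
    """Hypothetical expert: one random linear map from dim to dim."""
    weights = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]

    def expert(x):
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

    return expert


def moe_forward(x, experts, gate_weights, top_k=2):
    """Route x to the top_k highest-scoring experts and mix their outputs."""
    # Gate: one linear score per expert (assumed, untrained weights).
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    # Keep only the indices of the top_k experts; the rest are never evaluated.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Renormalize the gate scores over the chosen experts only.
    probs = softmax([scores[i] for i in chosen])
    output = [0.0] * len(x)
    for p, i in zip(probs, chosen):
        y = experts[i](x)
        output = [o + p * yi for o, yi in zip(output, y)]
    return output, chosen


rng = random.Random(0)
dim, num_experts = 4, 8
experts = [make_expert(dim, rng) for _ in range(num_experts)]
gate_weights = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(num_experts)]

output, chosen = moe_forward([1.0, 0.5, -0.3, 2.0], experts, gate_weights, top_k=2)
print("experts used:", chosen)
print("output:", output)
```

Only 2 of the 8 experts are evaluated for this input, so the per-input compute stays close to that of two small networks even though the layer holds the parameters of eight. In a trained model the gate weights are learned jointly with the experts.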

