What Is Mixture of Experts (MoE)? How It Works and Use Cases - Zilliz Learn

Simply put, mixture of experts (MoE) is a neural network architecture designed to improve model efficiency and scalability by dynamically selecting specialized sub-models, or "experts," to handle different parts of an input. This article explores the MoE technique as an approach for scaling neural networks to handle complex tasks and diverse data.

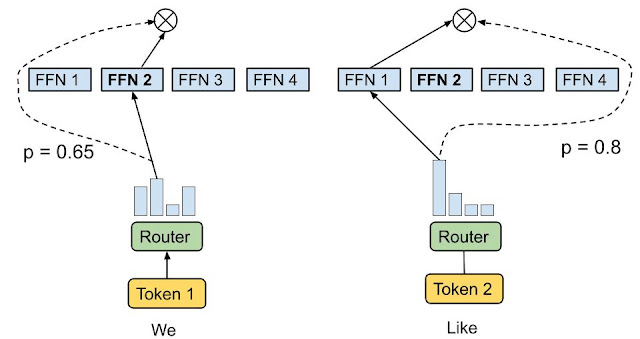

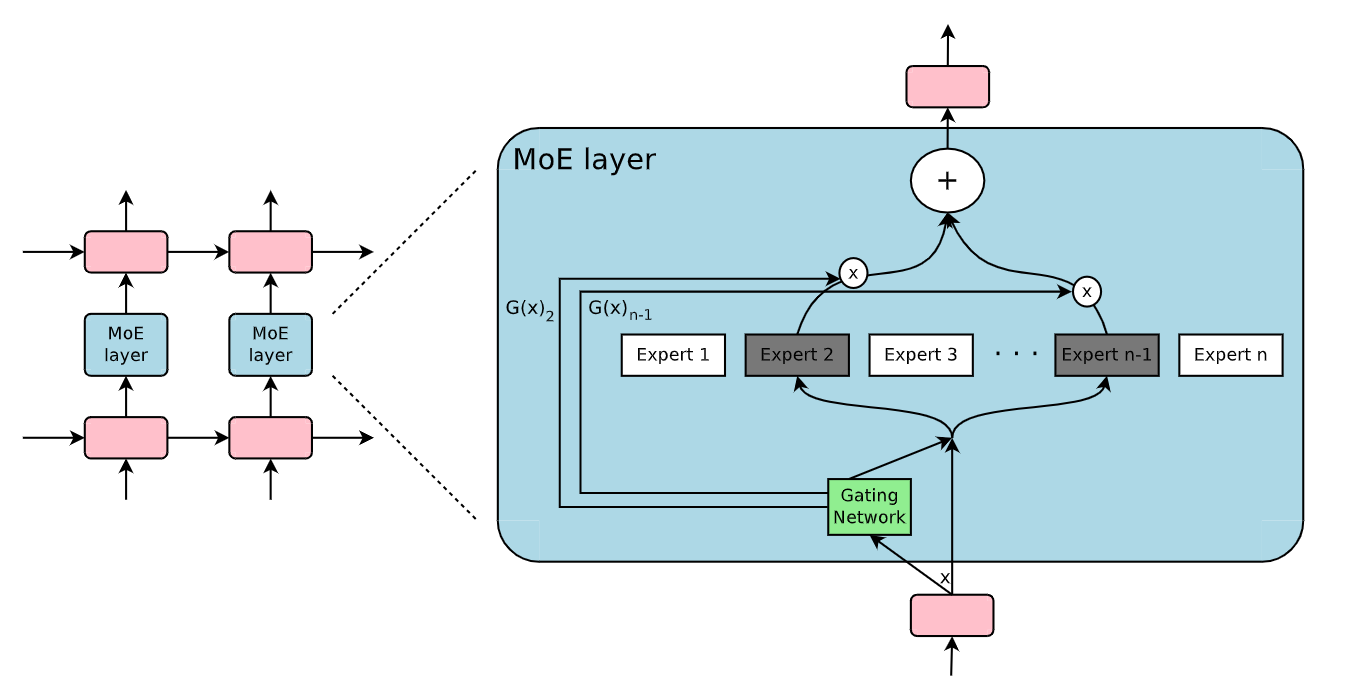

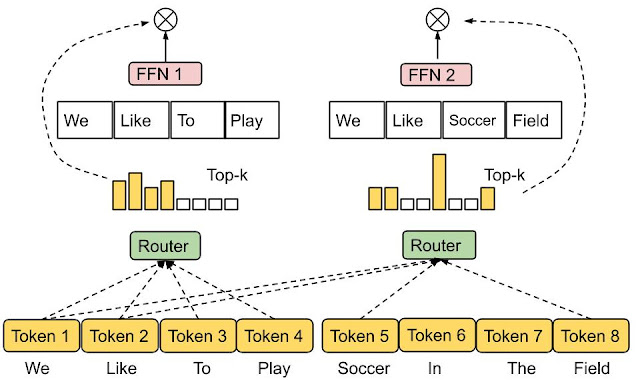

What is mixture of experts (MoE) in simple terms? MoE is a model architecture that splits the model into multiple specialized sub-networks called experts, then uses a router to activate only a few of them for each input token. Put differently, it is a machine learning approach in which each expert specializes in a subset of the input data and the experts jointly perform a task.

In the context of transformer models, an MoE consists of two main elements: sparse MoE layers, which are used instead of dense feed-forward network (FFN) layers, and the router that decides which experts each token is sent to. An MoE layer has a certain number of "experts" (e.g. 8), where each expert is a neural network.

In this article, we'll explore how this architecture works, the role of sparsity, routing strategies, and its real-world application in the Mixtral model. We'll also discuss the challenges these systems face and the solutions developed to address them.
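The routing step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation of any particular model: the `make_expert` and `moe_layer` helpers, the single-matrix "experts," the random weights, and the choice to apply softmax only over the selected experts' logits are all assumptions made for the example.

```python
import math
import random

def softmax(xs):
    # Numerically stable softmax over a list of floats.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def make_expert(dim, rng):
    # Hypothetical expert: one random linear map standing in for an FFN.
    w = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]
    def expert(x):
        return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in w]
    return expert

def moe_layer(token, router_weights, experts, top_k=2):
    # Router: one logit per expert (dot product of a router row with the token).
    logits = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    # Keep only the top_k highest-scoring experts for this token.
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    # Normalize gate weights over the chosen experts only (an assumed design choice).
    gates = softmax([logits[i] for i in chosen])
    output = [0.0] * len(token)
    for gate, idx in zip(gates, chosen):
        expert_out = experts[idx](token)  # unchosen experts are never evaluated
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output, chosen, gates

rng = random.Random(0)
dim, num_experts = 4, 8
experts = [make_expert(dim, rng) for _ in range(num_experts)]
router_weights = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(num_experts)]
token = [0.5, -1.0, 0.25, 2.0]

output, chosen, gates = moe_layer(token, router_weights, experts, top_k=2)
print("experts used:", chosen, "gate weights:", [round(g, 3) for g in gates])
```

Only two of the eight experts run for this token, and their outputs are combined using the gate weights. A real MoE layer would do this in batched tensor operations and learn the router jointly with the experts.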

Mixture of experts (MoE) is an AI model architecture that uses multiple specialized submodels, or "experts," to handle tasks more efficiently than a single monolithic model. MoEs enable conditional computation, allowing only a subset of the model to be active at a time, which is what makes them scalable and efficient to run. Real-world implementations include DeepSeek-V3, Llama 4, and Mixtral, among other frontier MoE systems as of 2026.
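To make conditional computation concrete, the following sketch counts how many feed-forward parameters exist in one MoE layer versus how many are used for a single token. The `moe_ffn_params` helper and the layer sizes are hypothetical values chosen for illustration, and the count assumes each expert is a two-matrix FFN with biases ignored.

```python
def moe_ffn_params(d_model, d_hidden, num_experts, top_k):
    # Assumed expert shape: an up projection and a down projection, no biases.
    per_expert = 2 * d_model * d_hidden
    # The router is a single linear map from the token to one logit per expert.
    router = d_model * num_experts
    total = num_experts * per_expert + router   # parameters stored
    active = top_k * per_expert + router        # parameters used per token
    return total, active

# Hypothetical sizes, not taken from any specific model.
total, active = moe_ffn_params(d_model=1024, d_hidden=4096, num_experts=8, top_k=2)
print(f"total: {total:,}  active per token: {active:,}  ratio: {active / total:.2%}")
```

With 8 experts and 2 active per token, roughly a quarter of the layer's parameters take part in each forward pass, while the full set still has to be held in memory.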


What Is Mixture of Experts (MoE)? By Zilliz, Oct 2024, Medium.
