Mixture of Experts (MoE)

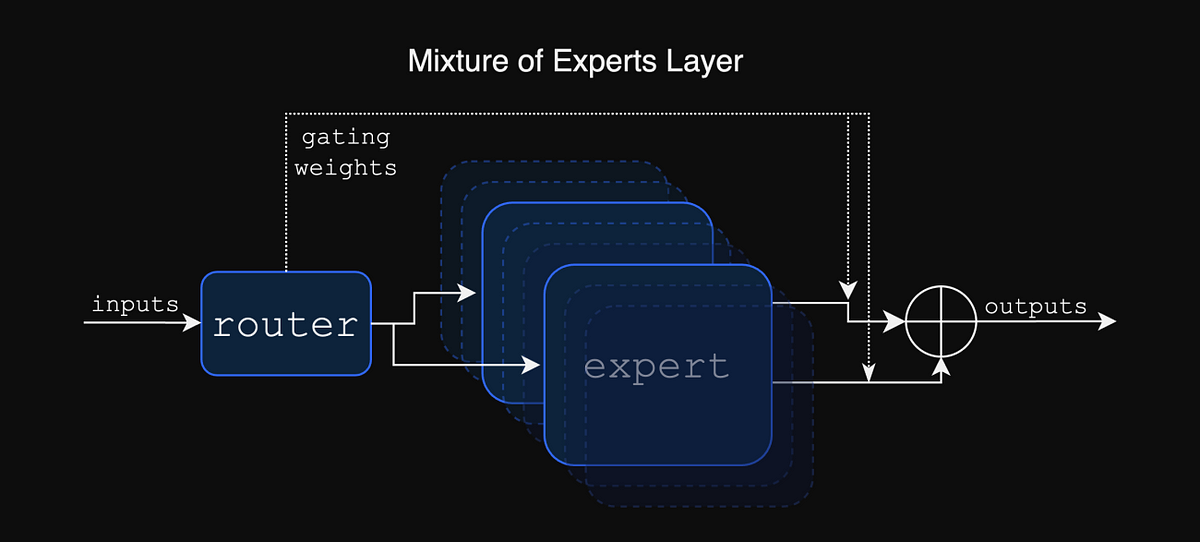

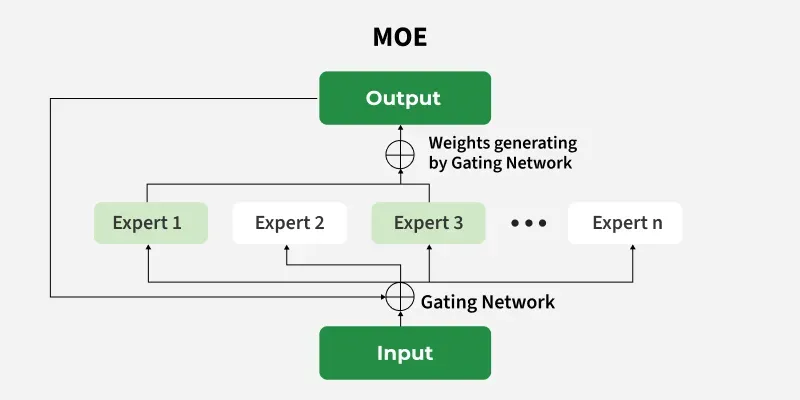

Mixture of experts (MoE) is a machine learning technique in which multiple expert networks (learners) are used to divide a problem space into homogeneous regions [1]. MoE is motivated by model scale, one of the most important axes for better model quality: given a fixed computing budget, training a larger model for fewer steps is better than training a smaller model for more steps.

Understanding the Mixture of Experts (MoE) Architecture

What is mixture of experts? Mixture of experts (MoE) is a machine learning approach that divides an artificial intelligence (AI) model into separate sub-networks (or “experts”), each specializing in a subset of the input data, to jointly perform a task. An MoE has two main components, the experts and the router, as applied in typical LLM-based architectures. Surveys of MoE in big data processing cover its principles, techniques, and applications, with the aim of providing theoretical and practical references for use in real scenarios.
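The interplay between the router and the experts can be illustrated with a small forward pass. The sketch below is not taken from any particular model: it assumes linear experts, a linear router, and top-k routing with softmax renormalisation over the chosen experts, which is one common design among several. The names `moe_forward`, `router_weights`, and `expert_weights` are hypothetical, and the random weights stand in for trained parameters.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def make_matrix(rows, cols, rng):
    """Random small weights; a stand-in for trained parameters (assumption)."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def moe_forward(x, router_weights, expert_weights, top_k=2):
    """Route one input vector through its top_k experts.

    Returns the combined output and the indices of the chosen experts.
    """
    # Router: one score (logit) per expert.
    logits = matvec(router_weights, x)
    # Keep only the top_k highest-scoring experts.
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:top_k]
    # Renormalise the gate weights over the chosen experts only.
    gates = softmax([logits[i] for i in chosen])
    # Weighted sum of the chosen experts' outputs; the other experts are never run.
    output = [0.0] * len(expert_weights[0])
    for gate, idx in zip(gates, chosen):
        expert_out = matvec(expert_weights[idx], x)
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output, chosen


rng = random.Random(0)
DIM, NUM_EXPERTS = 4, 3
router_weights = make_matrix(NUM_EXPERTS, DIM, rng)
expert_weights = [make_matrix(DIM, DIM, rng) for _ in range(NUM_EXPERTS)]

x = [0.5, -1.0, 0.25, 2.0]
y, chosen = moe_forward(x, router_weights, expert_weights, top_k=2)
print("chosen experts:", chosen)
print("output:", y)
```

Because only `top_k` of the experts run for each input, the compute per input grows with `top_k` rather than with the total number of experts, which is what lets the parameter count grow without a matching growth in per-input cost.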

Based on the different generation patterns of the data, MoE divides the dataset into multiple parts and trains an independent model for each part. These models are called “experts”, and each of them is adept at handling a specific type of data. As an AI model architecture, MoE uses multiple specialized submodels to handle tasks more efficiently than a single, monolithic model. Imagine an AI model as a team of specialists, each with their own expertise: an MoE model operates on this principle by dividing a complex task among smaller, specialized networks. Real-world implementations include DeepSeek-V3, Llama 4, Mixtral, and other frontier MoE systems as of 2026.
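The idea of dividing a dataset into parts and training one model per part can be shown on a toy regression problem. This is a deliberately simplified sketch: the gate here is a fixed, hand-written threshold (the hypothetical `gate` function), whereas a real MoE typically learns its gate jointly with the experts. The data, the two regimes, and the helper `fit_line` are all assumptions made for illustration.

```python
def fit_line(points):
    """Ordinary least-squares fit of y = a*x + b to a list of (x, y) pairs."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in points)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    a = cov_xy / var_x
    return a, mean_y - a * mean_x


def gate(x):
    """Hypothetical hard gate: expert 0 for x < 0, expert 1 otherwise."""
    return 0 if x < 0 else 1


# Toy data from two regimes: y = -2x for x < 0 and y = 3x + 1 for x >= 0.
data = [(x / 10, -2 * (x / 10)) for x in range(-20, 0)]
data += [(x / 10, 3 * (x / 10) + 1) for x in range(0, 20)]

# Divide the dataset by gate decision and fit one expert per part.
parts = {0: [], 1: []}
for x, y in data:
    parts[gate(x)].append((x, y))
experts = {k: fit_line(pts) for k, pts in parts.items()}


def predict(x):
    """Send x to the expert its gate selects and apply that expert's line."""
    a, b = experts[gate(x)]
    return a * x + b


print("expert parameters (slope, intercept):", experts)
print("predictions:", predict(-1.5), predict(1.5))
```

Each expert recovers the slope and intercept of its own regime, while a single line fitted to all the data would match neither regime well.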


