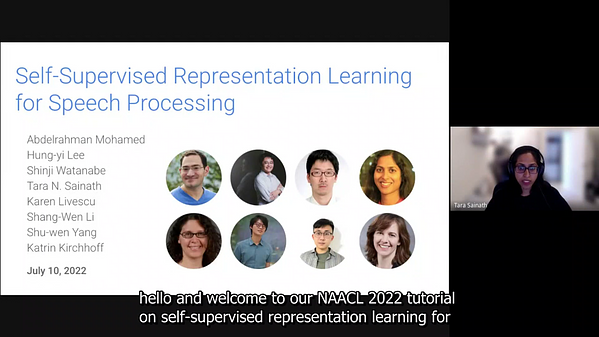

Self Supervised Representation Learning For Speech Processing Underline

Since many current methods focus solely on automatic speech recognition as a downstream task, we review recent efforts on benchmarking learned representations to extend the application beyond speech recognition. This review presents approaches for self-supervised speech representation learning and their connection to other research areas.

Revisiting Self Supervised Visual Representation Learning Deepai

In this thesis, we explore the use of self-supervised learning, a learning paradigm where the learning target is generated from the input itself, for leveraging such easily scalable resources to improve the performance of spoken language technology. Since spoken utterances contain much richer information than the corresponding text transcriptions (e.g., speaker identity, style, emotion, surrounding noise, and communication channel noise), it is important to learn representations that disentangle these factors of variation. Self-supervised representation learning (SSL) utilizes proxy supervised learning tasks, for example, distinguishing parts of the input signal from distractors, or generating masked input segments conditioned on the unmasked ones, to obtain training data from unlabeled corpora. SSL has emerged as a promising paradigm for learning flexible speech representations from unlabeled data. By designing pretext tasks that exploit statistical regularities, SSL models can capture useful representations that are transferable to downstream tasks.
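The first pretext task mentioned above, distinguishing the true part of the input signal from distractors, can be sketched as a contrastive loss. The following is a minimal illustration using an InfoNCE-style objective, not the method of any specific work quoted here; the function names `cosine` and `contrastive_loss`, the `temperature` value, and the toy four-dimensional vectors are all assumptions made for the example.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-8)

def contrastive_loss(context, target, distractors, temperature=0.1):
    """InfoNCE-style loss: negative log-probability of the true target
    among the candidate set {target} + distractors."""
    candidates = [target] + list(distractors)
    logits = [cosine(context, c) / temperature for c in candidates]
    # Log-sum-exp with max subtraction for numerical stability.
    m = max(logits)
    log_denominator = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_denominator - logits[0]

# Toy data (hypothetical): the target and distractors are basis vectors.
target = [1.0, 0.0, 0.0, 0.0]
distractors = [[0.0, 1.0, 0.0, 0.0],
               [0.0, 0.0, 1.0, 0.0],
               [0.0, 0.0, 0.0, 1.0]]

aligned_context = [0.9, 0.1, 0.0, 0.0]     # points mostly at the target
misaligned_context = [0.1, 0.9, 0.0, 0.0]  # points mostly at a distractor

loss_aligned = contrastive_loss(aligned_context, target, distractors)
loss_misaligned = contrastive_loss(misaligned_context, target, distractors)
```

In this toy setup, a context vector that points at the true target yields a loss close to zero, while one that points at a distractor yields a much larger loss. In an actual model the context and target vectors would come from learned encoders, and minimizing this loss would be what drives the representation learning.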

Exploring The Integration Of Speech Separation And Recognition With

We propose an efficient self-supervised model to learn speech representations. Instead of focusing on the model performance in downstream tasks, the proposed model focuses primarily on computational costs, limiting the resources available for pretraining. We set a pretraining limit based on cramming (Geiping ...).

Abstract: Self-supervised learning has achieved remarkable success for learning speech representations from unlabeled data. The masking strategy plays an important role in the self-supervised learning algorithm.

To address the limitations of existing models in content-centric tasks, we propose a novel self-supervised contrastive representation learning (SSCRL) framework for disentangling content-specific features from speech signals.

Self-supervised representation learning methods promise a single universal model that would benefit a wide variety of tasks and domains. Such methods have shown success in natural language processing and computer vision domains, achieving new levels of performance while reducing the number of labels required for many downstream scenarios.
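To make the role of the masking strategy more concrete, here is a minimal sketch of one possible strategy: random span masking over a sequence of frames, with a reconstruction error computed only on the masked positions. It is an illustration under stated assumptions, not the strategy of any work quoted here; the names `sample_span_mask` and `masked_mse`, the `mask_prob` and `span_length` defaults, and the scalar toy "frames" are all hypothetical.

```python
import random

def sample_span_mask(num_frames, mask_prob=0.1, span_length=5, seed=0):
    """Return a list of booleans, True where a frame is masked."""
    rng = random.Random(seed)
    mask = [False] * num_frames
    for start in range(num_frames):
        # Each frame independently starts a masked span with probability mask_prob.
        if rng.random() < mask_prob:
            for i in range(start, min(start + span_length, num_frames)):
                mask[i] = True
    return mask

def masked_mse(predicted, original, mask):
    """Mean squared error computed over masked frames only."""
    errors = [(p - o) ** 2 for p, o, m in zip(predicted, original, mask) if m]
    return sum(errors) / len(errors) if errors else 0.0

# Toy sequence of scalar "frames" (real features would be vectors).
frames = [0.1 * t for t in range(50)]
mask = sample_span_mask(len(frames))

# Zero out the masked frames; a model would see this corrupted input
# and be trained to predict the original values at the masked positions.
corrupted = [0.0 if m else x for x, m in zip(frames, mask)]

# Baseline: a trivial "model" that just echoes the corrupted input.
loss = masked_mse(corrupted, frames, mask)
```

Varying `mask_prob` and `span_length` changes how much of the input is hidden and how long the hidden stretches are. Those two properties control how hard the prediction task is.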
