Self-Supervised Representation Learning

Self-supervised representation learning methods aim to provide powerful deep feature learning without requiring large annotated datasets, thus alleviating the annotation bottleneck that is one of the main barriers to the practical deployment of deep learning today. Supervised learning treats human-provided labels as the source of the training signal. Self-supervised learning starts from the opposite stance: most of the structure of the world is already in the image. Spatial layout, object co-occurrence, multi-view consistency, and predictable continuations are all "free" learning signals; we just have to design a task that forces the network to extract them.
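As a minimal sketch of such a task, the snippet below builds training pairs for rotation prediction, one well-known pretext task that exploits spatial layout. It is an illustration rather than a method described in this article: the function name `make_rotation_batch`, the square image shape, and the random stand-in data are all assumptions made for the example.

```python
import numpy as np

def make_rotation_batch(images, rng):
    """Turn unlabeled images into (rotated_image, rotation_label) pairs.

    images: array of shape (N, H, W) with H == W, so rotation keeps the shape.
    Returns the rotated images and integer labels in {0, 1, 2, 3}, where
    label k means the image was rotated counterclockwise by k * 90 degrees.
    """
    labels = rng.integers(0, 4, size=len(images))
    rotated = np.stack([np.rot90(img, k=int(k)) for img, k in zip(images, labels)])
    return rotated, labels

rng = np.random.default_rng(0)
unlabeled = rng.random((8, 32, 32))   # stand-in for real unlabeled images (assumption)
x, y = make_rotation_batch(unlabeled, rng)
```

The labels `y` cost nothing to produce, yet a network can only predict them by picking up on object orientation and layout, which is the kind of structure the paragraph above refers to.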

Representation learning is the cornerstone of self-supervised learning's success. It is the process by which models learn to encode input data into meaningful, lower-dimensional representations that capture essential features and patterns. In the self-supervised setting, algorithms learn these representations from the data itself, without external supervision, and the resulting features improve downstream classification or analysis tasks. One example from the literature is a self-supervised framework for multimodal criminology that learns effective features automatically from unlabelled video, text, and graph datasets and uses them for crime analysis tasks, including anomaly detection, crime report classification, and high-risk node prediction. Contrastive Predictive Coding (CPC; van den Oord et al., 2018) is an influential self-supervised representation learning technique that is applicable to a wide variety of input modalities, such as text, speech, and images.
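CPC is trained with the InfoNCE loss, which asks the model to pick the true target out of a set of negatives. The following is a minimal NumPy sketch of that loss, assuming the predictions and targets have already been computed and that the other rows of the batch serve as negatives. The function name `info_nce` and the random data are illustrative assumptions, not the reference implementation.

```python
import numpy as np

def info_nce(pred, target):
    """InfoNCE loss for a batch of N (prediction, target) pairs.

    pred:   (N, D) predictions made from the context.
    target: (N, D) encodings of the true inputs to be predicted.
    Row i of `target` is the positive for row i of `pred`; the other
    N - 1 rows act as negatives.
    """
    logits = pred @ target.T                               # (N, N) similarity scores
    logits = logits - logits.max(axis=1, keepdims=True)    # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_prob))                     # cross-entropy on the diagonal

rng = np.random.default_rng(0)
z = rng.normal(size=(16, 8))
aligned_loss = info_nce(5.0 * z, z)             # predictions point at their own targets
shuffled_loss = info_nce(5.0 * z[::-1], z)      # predictions point at the wrong targets
uniform_loss = info_nce(np.zeros((16, 8)), z)   # uninformative predictions
```

With uninformative predictions every candidate is equally likely, so the loss equals log N (here log 16). Predictions that match their own targets score lower than predictions that match the wrong ones.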

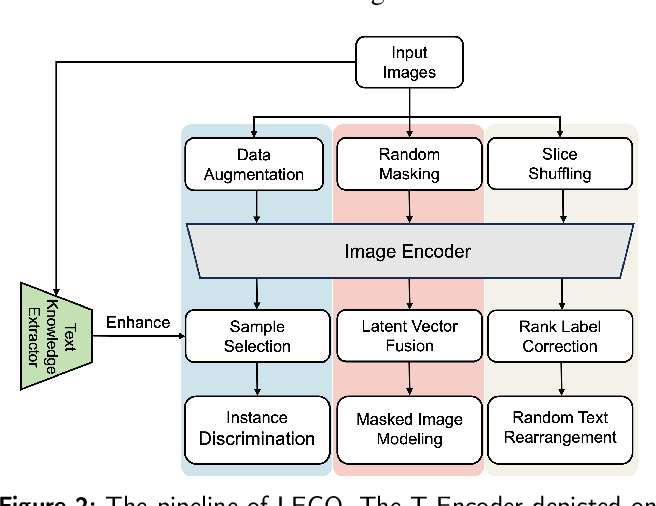

This article focuses on self-supervised algorithms and applications that learn general-purpose features, or representations, that can be reused to improve learning in downstream tasks. Applications extend beyond vision: one study investigates applying state-of-the-art self-supervised learning methods to bulk gene expression data for phenotype prediction. Many self-supervised learning (SSL) methods harness semantic invariance, using data augmentation strategies to produce similar representations for different deformations of the same input.
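The invariance idea can be sketched as a loss that rewards similar embeddings for two augmented views of the same input. Everything specific here is an assumption chosen for illustration: the augmentation (Gaussian noise plus random feature masking), the untrained linear encoder `W`, and the helper names are hypothetical and not taken from any particular method.

```python
import numpy as np

def augment(x, rng, noise_std=0.1, drop_prob=0.2):
    """Hypothetical augmentation: additive Gaussian noise plus random feature masking."""
    noisy = x + rng.normal(scale=noise_std, size=x.shape)
    keep = rng.random(x.shape) >= drop_prob
    return noisy * keep

def cosine_rows(a, b, eps=1e-8):
    """Row-wise cosine similarity between two (N, D) arrays."""
    a = a / (np.linalg.norm(a, axis=1, keepdims=True) + eps)
    b = b / (np.linalg.norm(b, axis=1, keepdims=True) + eps)
    return np.sum(a * b, axis=1)

def invariance_loss(encode, x, rng):
    """Negative mean cosine similarity between embeddings of two views of each input."""
    view_a, view_b = augment(x, rng), augment(x, rng)
    return -np.mean(cosine_rows(encode(view_a), encode(view_b)))

rng = np.random.default_rng(0)
x = rng.normal(size=(32, 64))                  # stand-in for unlabeled inputs
W = rng.normal(size=(64, 16)) / np.sqrt(64)    # placeholder linear encoder, not trained
loss = invariance_loss(lambda v: v @ W, x, rng)
```

Minimizing this term alone has a trivial solution, since an encoder that maps every input to the same vector also scores perfectly. Practical methods therefore combine it with negatives or another mechanism that prevents collapse.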

