Self-Supervised Speech Representation Learning

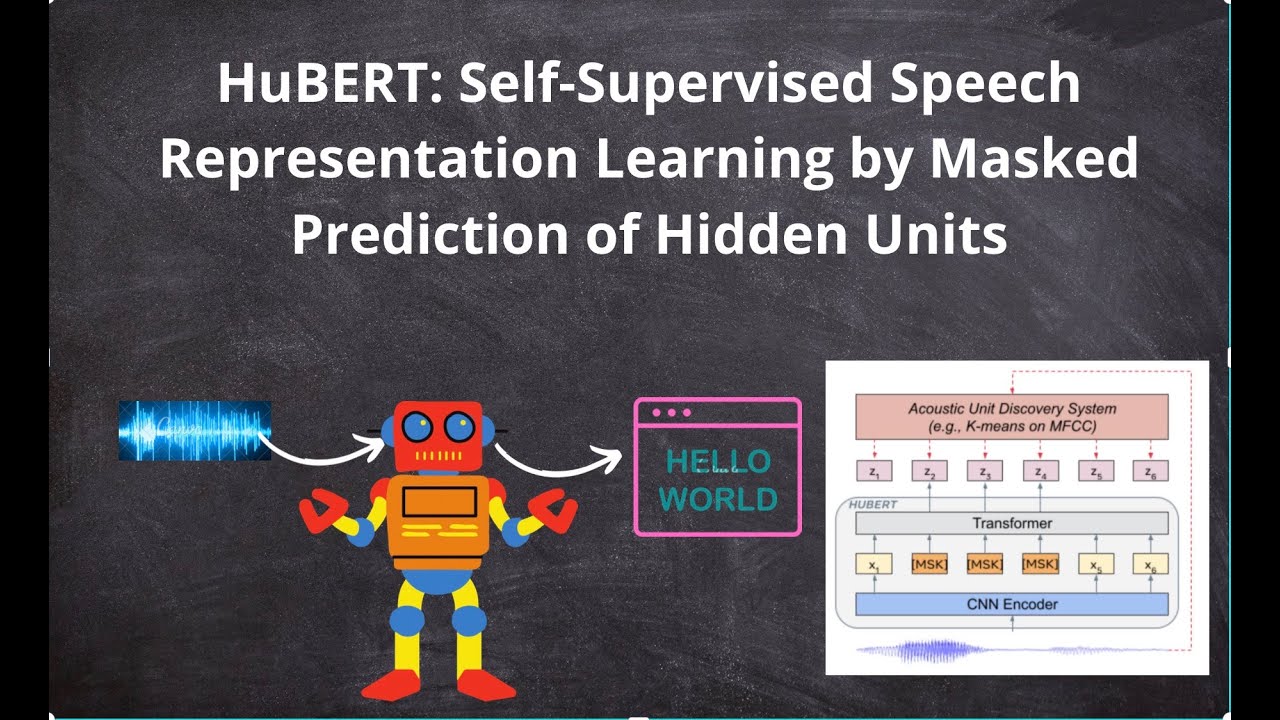

Although self-supervised speech representation is still a nascent research area, it is closely related to acoustic word embedding and learning with zero lexical resources, both of which have seen active research for many years. This review presents approaches for self-supervised speech representation learning and their connection to other research areas.

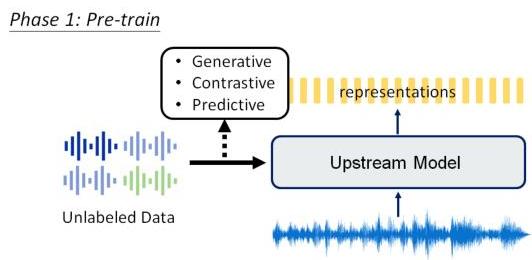

We highly recommend considering the newly released official repo of S3PRL-VC, which is developed and actively maintained by Wen-Chin Huang. The standalone repo contains many more recipes for the VC experiments. In S3PRL we only include the any-to-one recipe for reproducing the SUPERB results.

HuBERT: Self-Supervised Speech Representation Learning by Masked Prediction of Hidden Units. Published in: IEEE/ACM Transactions on Audio, Speech, and Language Processing (Volume: 29).

Self-supervised learning (SSL) has emerged as a promising paradigm for learning flexible speech representations from unlabeled data. By designing pretext tasks that exploit statistical regularities, SSL models can capture useful representations that are transferable to downstream tasks.

Since spoken utterances contain much richer information than the corresponding text transcriptions (e.g., speaker identity, style, emotion, surrounding noise, and communication channel noise), it is important to learn representations that disentangle these factors of variation.

Self-supervised learning enables the training of large neural models without the need for large, labeled datasets. It has been generating breakthroughs in several fields, including computer vision, natural language processing, biology, and speech.

Self-supervised representation learning (SSL) utilizes proxy supervised learning tasks, for example, distinguishing parts of the input signal from distractors, or generating masked input segments conditioned on the unmasked ones, to obtain training data from unlabeled corpora.

Self-supervised representation learning methods promise a single universal model that would benefit a wide variety of tasks and domains. Such methods have shown success in natural language processing and computer vision domains, achieving new levels of performance while reducing the number of labels required for many downstream scenarios.

A related review provides a comprehensive survey of audio-visual self-supervised learning, a promising alternative that uses vast amounts of unlabeled data. It holds the potential to reshape areas like computer vision and speech recognition.
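The two proxy tasks named above, distinguishing the true signal segment from distractors and generating masked segments from the unmasked ones, can be illustrated with a small sketch. This is not the loss of any particular model: the function names, the cosine-similarity scoring, the temperature value, and the mean-squared-error objective are all assumptions chosen for illustration, and plain Python lists stand in for real feature frames.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def contrastive_loss(context, target, distractors, temperature=0.1):
    """Illustrative InfoNCE-style loss.

    Scores the true target and each distractor against the context vector,
    then returns the negative log-probability assigned to the true target.
    """
    candidates = [target] + distractors
    logits = [cosine(context, c) / temperature for c in candidates]
    max_logit = max(logits)  # subtract the max for numerical stability
    log_norm = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
    return log_norm - logits[0]


def sample_span_mask(num_frames, span=3, num_spans=2, seed=0):
    """Pick random contiguous spans of frames to hide (True = masked)."""
    rng = random.Random(seed)
    mask = [False] * num_frames
    for _ in range(num_spans):
        start = rng.randrange(0, num_frames - span + 1)
        for t in range(start, start + span):
            mask[t] = True
    return mask


def masked_reconstruction_loss(frames, predictions, mask):
    """Mean squared error computed only over the masked frames."""
    errors = [
        sum((p - x) ** 2 for p, x in zip(pred, frame)) / len(frame)
        for frame, pred, m in zip(frames, predictions, mask)
        if m
    ]
    return sum(errors) / len(errors)


if __name__ == "__main__":
    # A context that matches the true target gets a lower loss than one
    # that matches a distractor instead.
    print(contrastive_loss([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0]]))
    print(contrastive_loss([1.0, 0.0], [0.0, 1.0], [[1.0, 0.0]]))
    print(sample_span_mask(10))
```

In both cases the training signal comes from the unlabeled input itself: the contrastive loss needs only a true segment and some distractors drawn from the same audio, and the reconstruction loss needs only the original frames at the masked positions.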
