12. Self-supervised learning (Introduction to Speech Processing)

Self-supervised learning (SSL) refers to a family of artificial neural network models that learn useful signal representations from data without any supporting information, such as task-specific data labels. In this thesis, we explore the use of self-supervised learning, a learning paradigm where the learning target is generated from the input itself, for leveraging such easily scalable resources to improve the performance of spoken language technology.
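To make the idea of a learning target "generated from the input itself" concrete, here is a minimal sketch of one common pretext task, masked prediction. It is an illustration under stated assumptions rather than the method of any particular model: the function name `make_masked_example`, the parameters `mask_len` and `mask_value`, and the use of plain Python lists as "frames" are all hypothetical.

```python
import random


def make_masked_example(frames, mask_len=3, mask_value=0.0, rng=None):
    """Build a training example from unlabeled frames only.

    A contiguous span of frames is hidden in the input, and the hidden
    frames themselves become the target. No external label is needed.
    (Hypothetical helper for illustration.)
    """
    rng = rng or random.Random()
    if not 0 < mask_len <= len(frames):
        raise ValueError("mask_len must be between 1 and len(frames)")

    # Pick where the masked span starts, so that it fits inside the sequence.
    start = rng.randrange(len(frames) - mask_len + 1)
    positions = list(range(start, start + mask_len))

    # Model input: a copy of the frames with the span overwritten.
    corrupted = list(frames)
    for i in positions:
        corrupted[i] = mask_value

    # Learning target: the original values at the masked positions.
    target = [frames[i] for i in positions]
    return corrupted, target, positions


# Toy "audio features": one scalar per frame.
frames = [0.1, 0.4, -0.2, 0.7, 0.3, -0.5, 0.9, 0.2]
corrupted, target, positions = make_masked_example(frames, rng=random.Random(0))
```

A model trained on such pairs would receive `corrupted` and be asked to reconstruct `target` at `positions`; real systems typically operate on learned feature vectors rather than scalars.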

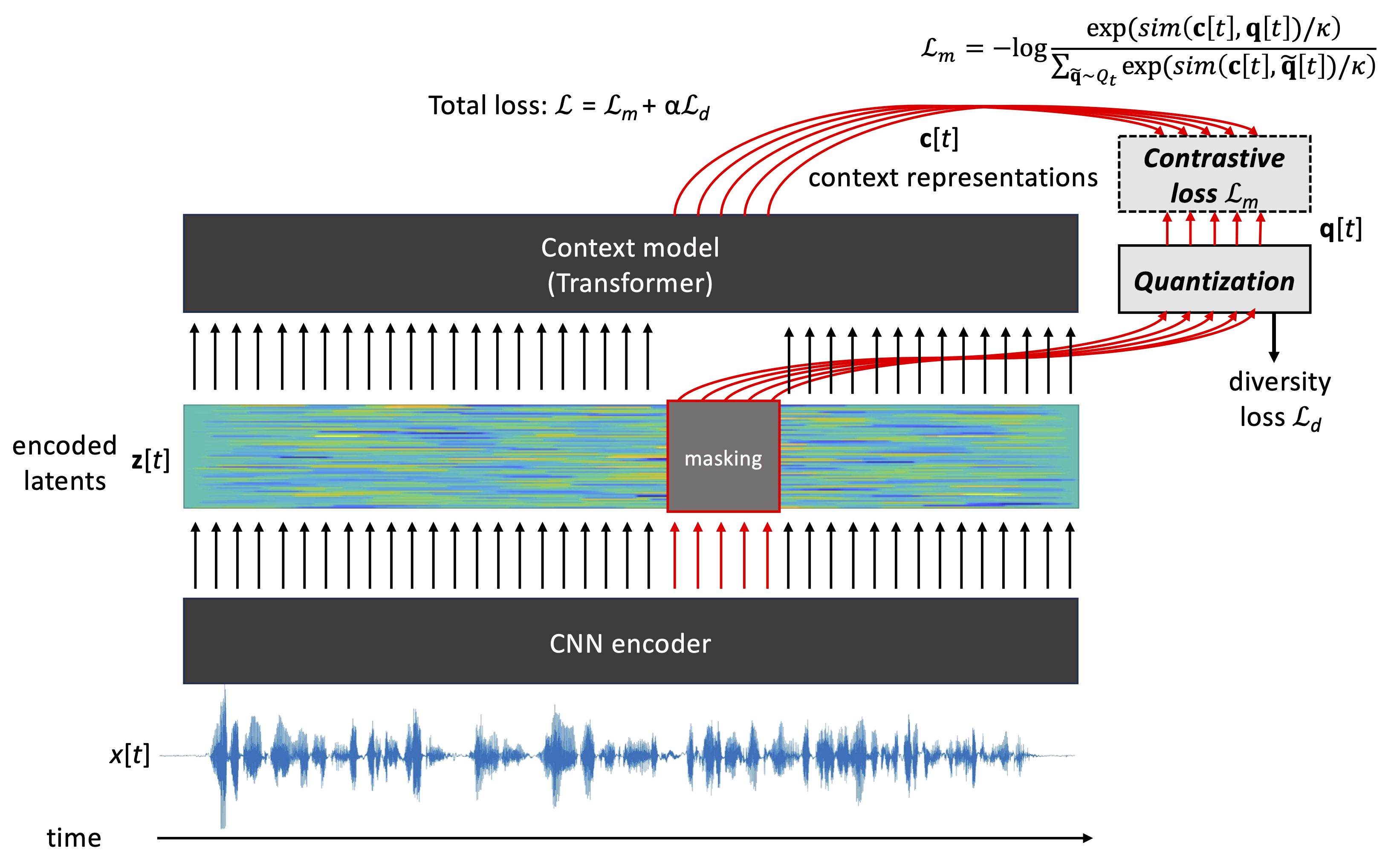

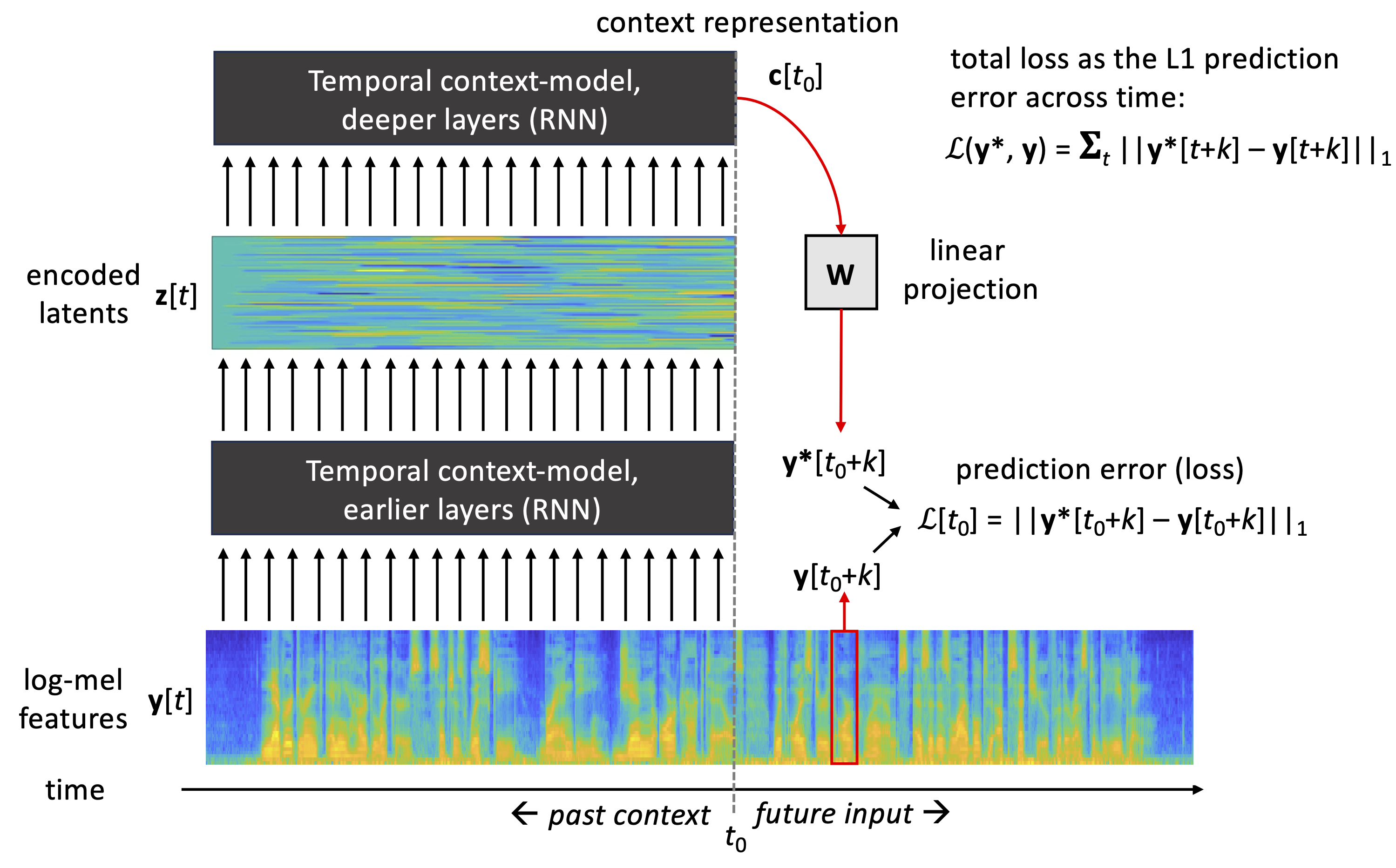

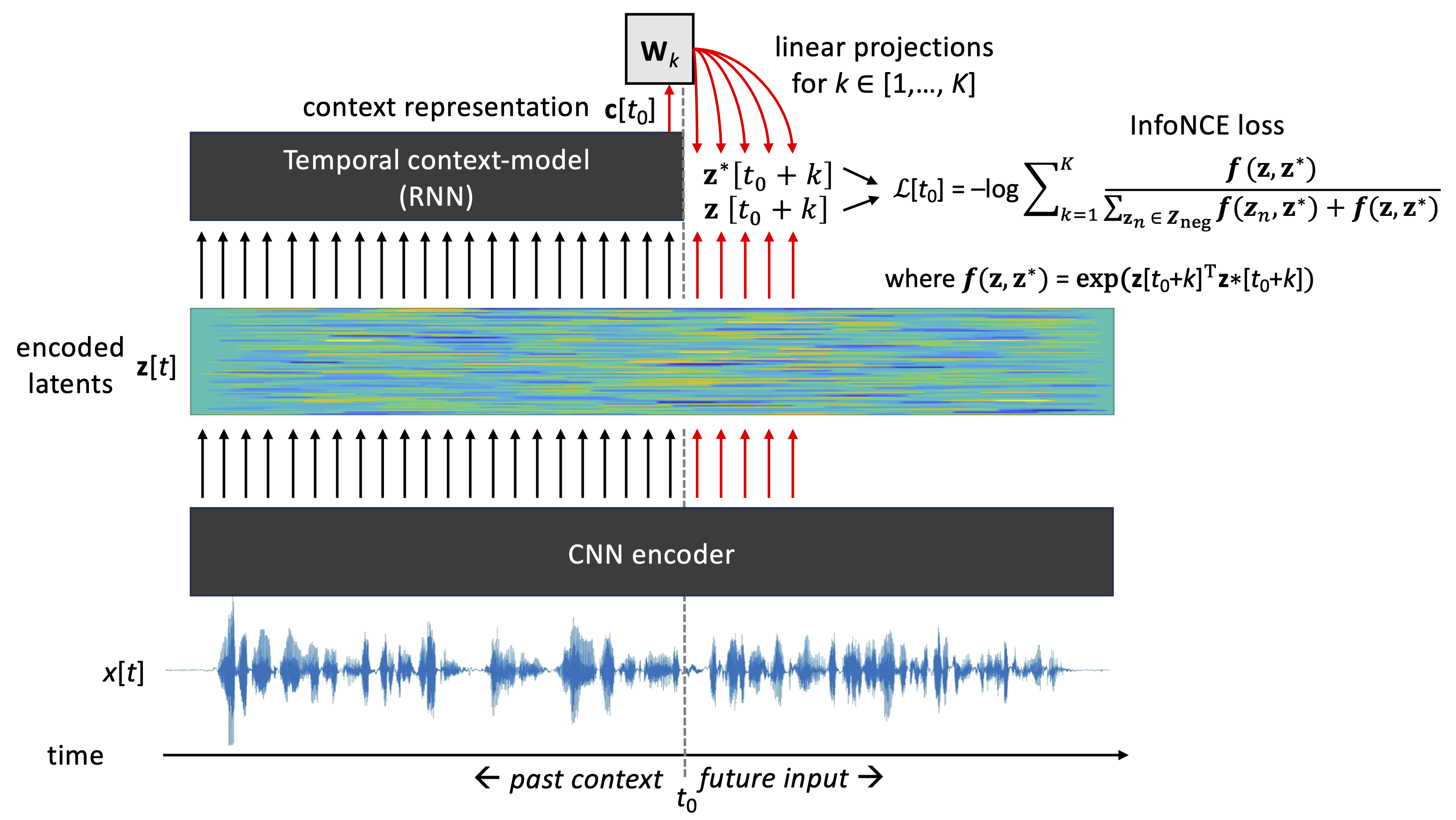

Although supervised deep learning has revolutionized speech and audio processing, it has necessitated the building of specialist models for individual tasks and application scenarios. Self-supervised learning (SSL) models have demonstrated state-of-the-art (SOTA) performance for speech processing tasks [1, 2, 3, 4]. SSL models are pre-trained on unlabeled data to learn hidden features of the input audio. SSL has emerged as a promising paradigm for learning flexible speech representations from unlabeled data. By designing pretext tasks that exploit statistical regularities, SSL models can capture useful representations that are transferable to downstream tasks. Training proceeds by sampling random chunks from large and diverse unlabeled training data (containing speech from a very large variety of speakers): first, choose a random chunk c (the anchor).
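The anchor-sampling step described above can be sketched as follows. The text does not say how the remaining steps of the procedure use the anchor, so this sketch covers only the choice of the random chunk c. The function name `sample_anchor`, the `chunk_len` parameter, and the representation of the corpus as a list of sample lists are assumptions made for illustration.

```python
import random


def sample_anchor(corpus, chunk_len, rng=None):
    """Choose a random fixed-length chunk c (the anchor) from unlabeled data.

    `corpus` is assumed to be a list of utterances, each a list of samples
    or feature frames. Returns (utterance_index, start, chunk).
    (Hypothetical helper for illustration.)
    """
    rng = rng or random.Random()

    # Only utterances at least as long as the chunk can supply an anchor.
    eligible = [i for i, utt in enumerate(corpus) if len(utt) >= chunk_len]
    if not eligible:
        raise ValueError("no utterance is long enough for chunk_len")

    # Assumption: sample uniformly over utterances, then uniformly over
    # start offsets. This is not uniform over all possible chunks, because
    # short utterances are over-represented relative to their duration.
    utt_index = rng.choice(eligible)
    utt = corpus[utt_index]
    start = rng.randrange(len(utt) - chunk_len + 1)
    return utt_index, start, utt[start:start + chunk_len]


# Toy corpus: three "utterances" of different lengths.
corpus = [list(range(10)), list(range(100, 105)), list(range(200, 230))]
utt_index, start, anchor = sample_anchor(corpus, chunk_len=8, rng=random.Random(1))
```

Drawing from many utterances is what exposes the model to the large variety of speakers mentioned in the text; a duration-weighted choice of utterance would be an equally reasonable design.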

With this modularization, we have achieved close integration with the general speech processing toolkit ESPnet, enabling the use of SSL models for a broader range of speech processing tasks and corpora to achieve state-of-the-art (SOTA) results (kudos to the ESPnet team). The specialist models required by supervised deep learning are also difficult to build for dialects and languages for which only limited labeled data is available. Adapting a self-supervised model for a task takes trial and error: which model to use, how to fine-tune it, what kind of linguistic information is encoded in each model and in each layer, how linguistic information is distributed across time, and how the pretext task affects what is learned.

This is an open access, Creative Commons book on speech processing (2nd edition), intended as pedagogical material for engineering students and hosted by Aalto University.
