RDMA Read Errors Without UCX_MEMTYPE_CACHE=n (Issue #7575)

After the whole-alloc and memtype cache changes from #7128, we should no longer need to set UCX_MEMTYPE_CACHE=n. In most cases that works fine, but for some Dask workflows we see RDMA read errors when we don't set UCX_MEMTYPE_CACHE.

UCX_MEMTYPE_CACHE can be set to n and the resulting configuration checked afterwards. When configuring UCX-Py programmatically, the UCX_ prefix is not used. For novice users we recommend using the UCX-Py defaults; see the next section for details.
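The relationship between prefix-less option names and the `UCX_`-prefixed environment variables can be sketched in plain Python. This is a minimal illustration, not UCX-Py code: `ucx_env` is a hypothetical helper introduced here, and it only builds an environment dictionary that could be handed to a child process.

```python
import os


def ucx_env(options, base=None):
    """Return a copy of `base` (default: os.environ) with UCX_* variables set.

    `options` uses prefix-less names, e.g. {"MEMTYPE_CACHE": "n"}.
    Hypothetical helper for illustration only; not part of UCX or UCX-Py.
    """
    env = dict(os.environ if base is None else base)
    for name, value in options.items():
        # The environment variable carries the UCX_ prefix.
        env[f"UCX_{name}"] = str(value)
    return env


# Prefix-less form, as used for programmatic configuration.
options = {"MEMTYPE_CACHE": "n"}

# Environment form, as seen by the UCX library in a launched process.
env = ucx_env(options)
print(env["UCX_MEMTYPE_CACHE"])
```

The returned dictionary can be passed as `env=` to `subprocess.run` so that only the launched worker sees the override, leaving the parent process environment untouched.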

How To Properly Utilize RDMA In UCX (openucx/ucx Discussion 9885)

This plugin replaces the default NCCL internal inter-node communication with RDMA-based transports. It implements both point-to-point transport (net), using IB verbs (default) or UCX, and collective transport (collnet), including SHARP collective transport.

UCX memory optimization with known issues: UCX-Py regularly sets this to n. It toggles whether the UCX library intercepts cu*alloc* calls. Values: n. Values: put_zcopy.

It's recommended not to define this variable and instead let UCX determine the closest InfiniBand device. Note that this requires the CUDA context to be created before UCX/UCXX are initialized.

Have you tried a simple model first? This could be a model/solver-specific issue. You could also try using the latest solver, in case this issue was taken care of in the newer version.

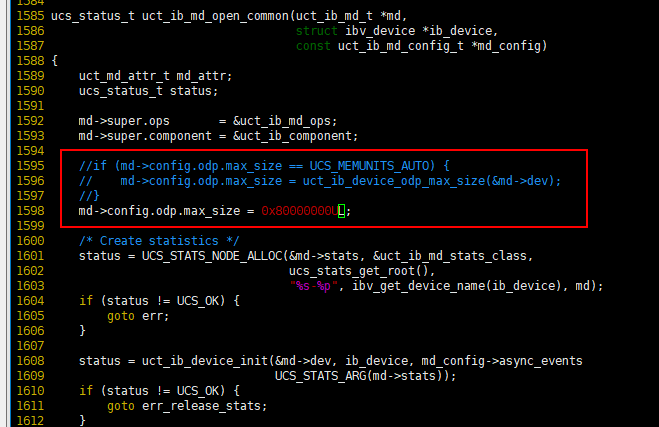

The ODP Test Problem When The Data Size Is Bigger Than 1KB The Single

@brandon cook found that a Fortran MPI OpenACC program which relies on CUDA awareness in Open MPI was crashing. In such a case the user must explicitly disable the memory type cache feature in UCX to prevent wrong memory type detection and program failures, by adding the corresponding variable to the command line.

The default of UCX_IB_RCACHE_MAX_REGIONS=inf does not necessarily impact all workloads: the majority of MPI jobs run on Darwin have not encountered the MR cache exhaustion discussed herein.

The GPUDirect RDMA technology exposes GPU memory to I/O devices by enabling a direct communication path between GPUs in two remote systems. This feature eliminates the need to use the system CPUs to stage GPU data in and out of intermediate system memory buffers.
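The source does not preserve the exact variable it asks users to add. A minimal sketch, assuming it is `UCX_MEMTYPE_CACHE=n` (the setting named in the issue title) and that the job is launched with Open MPI's `mpirun`, whose `-x` option exports an environment variable to the ranks. The helper `mpirun_command`, the program name `./app`, and the process count are hypothetical.

```python
import shlex


def mpirun_command(program, nprocs, ucx_vars):
    """Build an Open MPI launch command that exports UCX variables via -x.

    Hypothetical helper for illustration; it only assembles the argument list.
    """
    cmd = ["mpirun", "-np", str(nprocs)]
    for name, value in ucx_vars.items():
        # -x NAME=value sets the variable in the environment of every rank.
        cmd += ["-x", f"{name}={value}"]
    cmd.append(program)
    return cmd


# Assumption: disabling the memory type cache is the intended workaround.
cmd = mpirun_command("./app", 2, {"UCX_MEMTYPE_CACHE": "n"})
print(shlex.join(cmd))
```

The argument list can be passed directly to `subprocess.run(cmd)`; other variables, such as a finite `UCX_IB_RCACHE_MAX_REGIONS`, would be added to the same dictionary if a workload needs them.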

