Q: CUDA and memtype cache problems (Issue #4767, openucx/ucx, GitHub)

I have found a problem with the memtype cache which happens in 1.7.0 but seems to be gone in master. Since I have not found an issue reporting this, I wanted to ask whether it is known and has been solved, or whether there is another reason why I'm not seeing it in master.

The UCX-Py documentation demonstrates setting UCX_MEMTYPE_CACHE to n and checking the configuration. When configuring UCX-Py programmatically, the UCX_ prefix is not used. Novice users are advised to use the UCX-Py defaults.
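As a rough illustration of the two naming conventions mentioned above, the sketch below builds an environment with `UCX_MEMTYPE_CACHE=n` and then derives the prefix-free option name used for programmatic configuration. The helper names `ucx_env` and `ucxpy_options` are hypothetical and exist only for this example. The commented-out `ucp.init` / `ucp.get_config` calls are an assumption about how UCX-Py would consume the options and have not been verified against a specific release.

```python
import os

UCX_PREFIX = "UCX_"


def ucx_env(overrides, base=None):
    """Return a copy of an environment with UCX_-prefixed settings applied.

    Hypothetical helper: `overrides` uses prefix-free names such as
    "MEMTYPE_CACHE"; the result uses the environment-variable form.
    """
    env = dict(os.environ if base is None else base)
    for name, value in overrides.items():
        env[UCX_PREFIX + name] = value
    return env


def ucxpy_options(env):
    """Strip the UCX_ prefix, giving the names used for programmatic config."""
    return {
        key[len(UCX_PREFIX):]: value
        for key, value in env.items()
        if key.startswith(UCX_PREFIX)
    }


# Start from an empty base so the example does not depend on the caller's shell.
env = ucx_env({"MEMTYPE_CACHE": "n"}, base={})
options = ucxpy_options(env)

print(env)      # environment-variable form, with the UCX_ prefix
print(options)  # programmatic form, without the prefix

# Assumed usage with UCX-Py installed (not executed here):
#   import ucp
#   ucp.init(options=options)
#   ucp.get_config()["MEMTYPE_CACHE"]
```

The `env` dictionary can be passed to `subprocess.run(..., env=env)` to launch a process with the memtype cache disabled, while `options` is the shape expected when the prefix is dropped.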

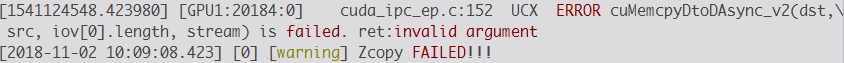

No cudaIpcCloseMemHandle called in CUDA IPC (Issue #3030, openucx/ucx)

Moreover, if the address cannot be found in the memtype cache, it may also be mistakenly classified as host memory. So, may I ask, what is the function of the UCX memtype cache? Thank you very much for your answer!

UCX_MEMTYPE_CACHE: a UCX memory optimization with known issues; it toggles whether the UCX library intercepts cu*alloc* calls. UCX-Py regularly sets this to n. Values: n. Values: put zcopy.

@brandon cook found that a Fortran MPI OpenACC program which relies on CUDA awareness in Open MPI was crashing.

Error handling enables UCX to handle errors that occur due to algorithms with fault recovery logic. To handle such errors, a new mode was added, guaranteeing an accurate status on every sent message.

The first appears to be about special optimizations for Open MPI using CUDA, which is an API for GPUs, just like you said. Some software included with QIIME 2 does use Open MPI, but I don't think anything uses CUDA.

UCX memory hooks are set during UCX initialization. CUDA memory allocations done before that are not intercepted and so are not recorded in the memory type cache. Enabling ACS (Access Control Services) on PLX PCIe switches might be a reason for this. Please refer to this for more details.

As a result, the memory type cache works incorrectly and can lead to segfaults or data corruption. This is the most common issue reported by GPU users. Proposed solution: disable the memtype cache by default (this will hurt micro-benchmarks) and validate the buffer ID in the memory registration cache. Open questions: how can we do this for ROCm? Better memory hooks?

With CUDA 6.5, you can build all versions of CUDA-aware Open MPI without doing anything special. However, with CUDA 7.0 and CUDA 7.5, you need to pass in some specific compiler flags for things to work correctly.
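The memtype cache failure mode described above (an address that is missing from the cache gets treated as host memory) can be sketched with a toy model. This is not UCX's implementation: the class `ToyMemtypeCache`, its methods, and the addresses are all hypothetical, and the "hooks" are simulated with a simple flag.

```python
HOST, CUDA = "host", "cuda"


class ToyMemtypeCache:
    """Toy model of a memory type cache filled by allocation hooks."""

    def __init__(self):
        self.hooks_installed = False
        self._regions = []  # list of (start, end, memtype) tuples

    def install_hooks(self):
        # Stands in for library initialization, when interception begins.
        self.hooks_installed = True

    def on_cuda_alloc(self, addr, size):
        # A simulated GPU allocation. It is only recorded if the hooks
        # were already installed when the allocation happened.
        if self.hooks_installed:
            self._regions.append((addr, addr + size, CUDA))

    def lookup(self, addr):
        for start, end, memtype in self._regions:
            if start <= addr < end:
                return memtype
        # A cache miss falls back to host memory, which is wrong for
        # GPU buffers that were allocated before the hooks existed.
        return HOST


cache = ToyMemtypeCache()
cache.on_cuda_alloc(0x1000, 256)  # allocated before initialization: missed
cache.install_hooks()
cache.on_cuda_alloc(0x2000, 256)  # allocated after initialization: recorded

early = cache.lookup(0x1000)
late = cache.lookup(0x2000)
print(early, late)
```

In this model the early buffer is reported as host memory even though it was a GPU allocation, which is the kind of misclassification that disabling the cache avoids, at the cost of querying the memory type directly on each lookup.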
