UCX GPUDirect RDMA Performance Degradation When Compared to CUDA Copy

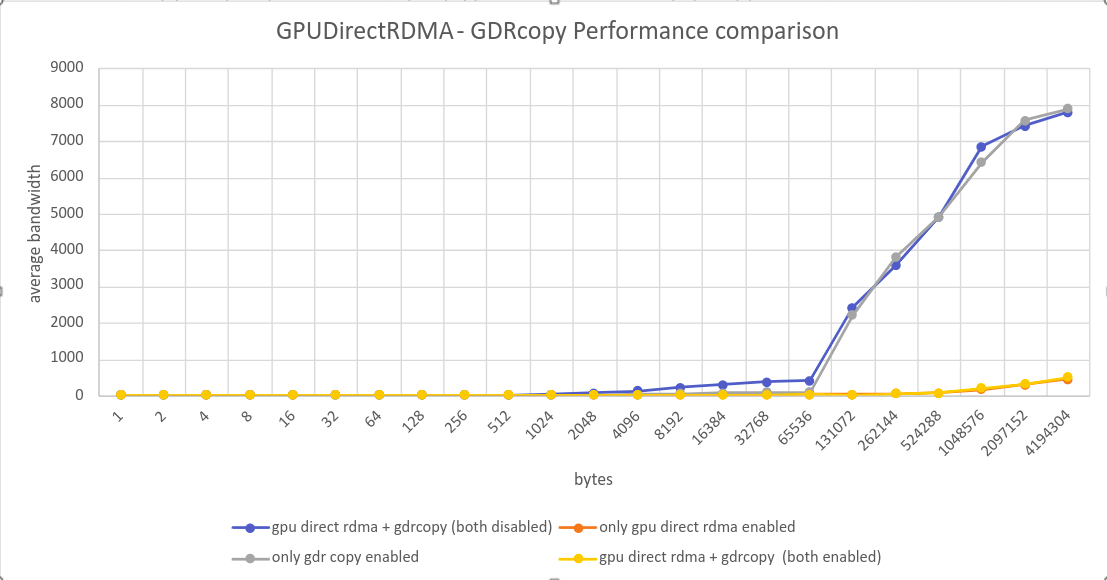

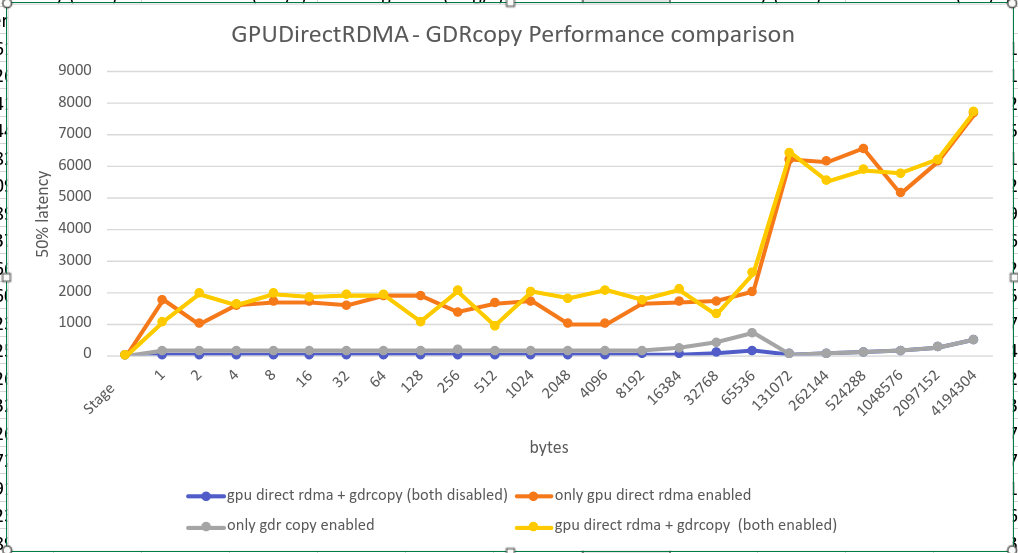

While comparing GPUDirect RDMA latency and bandwidth against the default CUDA copy path, performance degradation is observed with GPUDirect RDMA enabled, irrespective of the message size. To make it fast you need an RDMA-capable fabric (InfiniBand or RoCE), peer memory support in the NIC driver, and a transport (UCX, NCCL, libfabric) built with GPU support; for UCX, that means configuring with `--with-gdrcopy` and `--with-verbs`.
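One quick sanity check is whether the installed UCX was actually configured with the options named above. The following is a minimal sketch, assuming that `ucx_info -v` prints a line listing the configure options; the sample text, the helper name `missing_configure_flags`, and the addition of `--with-cuda` to the required list are illustrative assumptions, not output captured from a real system.

```python
import re

# Options this sketch looks for; --with-cuda is added as an assumption,
# since GPU memory support in UCX depends on it.
REQUIRED_FLAGS = ("--with-cuda", "--with-gdrcopy", "--with-verbs")


def missing_configure_flags(ucx_info_output, required=REQUIRED_FLAGS):
    """Return the required configure flags that do not appear in the text."""
    present = set()
    # Match whitespace-delimited tokens such as --with-cuda or --with-cuda=/path.
    for match in re.finditer(r"(?<!\S)(--[a-z0-9-]+)(?:=(\S*))?", ucx_info_output):
        flag, value = match.group(1), match.group(2)
        # Treat an explicit "=no" as if the flag were absent.
        if value != "no":
            present.add(flag)
    return [flag for flag in required if flag not in present]


# Hypothetical sample resembling a configure line; not real output.
SAMPLE = (
    "# Configured with: --prefix=/opt/ucx "
    "--with-cuda=/usr/local/cuda --with-verbs --without-knem\n"
)

if __name__ == "__main__":
    print(missing_configure_flags(SAMPLE))
```

In practice the text would come from running `ucx_info -v` on each node, for example through `subprocess.run(["ucx_info", "-v"], capture_output=True, text=True).stdout`. A flag listed as missing only indicates that the build should be inspected more closely; it does not by itself explain a slowdown.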

This post walks through the configuration and execution of the GPUDirect RDMA and GDRCopy features. Device-to-host and host-to-device transfers occur when the GPU stages the data to be sent through CPU memory in CUDA pinned buffers. With the GPUDirect RDMA driver installed, these staging transfers should be eliminated.

We are assuming that UCX has some logic to decide, based on the topology, whether to stage through host memory or use RDMA. But given that RDMA seems to work across NUMA nodes, we were wondering whether this can be configured or whether there is another problem. I have conducted some experiments and found that setting `UCX_IB_GPU_DIRECT_RDMA=0` does indeed stop the network card from accessing GPU memory directly; instead, the data is copied to the CPU through CUDA copy before the RDMA operation is executed.
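Because the topology question comes down to where the GPU and the NIC sit relative to each other, it can help to check their NUMA affinity before interpreting any benchmark. The sketch below assumes a Linux host where each PCI device exposes a `numa_node` file under `/sys/bus/pci/devices`; the function names and the PCI addresses in the usage note are hypothetical.

```python
from pathlib import Path

DEFAULT_SYSFS_ROOT = "/sys/bus/pci/devices"


def numa_node(pci_address, sysfs_root=DEFAULT_SYSFS_ROOT):
    """Read the NUMA node the kernel reports for one PCI device."""
    text = (Path(sysfs_root) / pci_address / "numa_node").read_text()
    return int(text.strip())


def same_numa_node(gpu_address, nic_address, sysfs_root=DEFAULT_SYSFS_ROOT):
    """Return True/False if both devices report a node, else None."""
    gpu = numa_node(gpu_address, sysfs_root)
    nic = numa_node(nic_address, sysfs_root)
    # A value of -1 means the kernel has no NUMA affinity for the device.
    if gpu < 0 or nic < 0:
        return None
    return gpu == nic
```

A call such as `same_numa_node("0000:3b:00.0", "0000:5e:00.0")` (both addresses are placeholders) gives a coarse answer only. Two devices on the same NUMA node can still be separated by the host bridge, so the output of `nvidia-smi topo -m` is worth reading alongside it.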

Open MPI offers two flavors of CUDA support; the preferred mechanism is via UCX. Since UCX will then be providing the CUDA support, it is important to ensure that UCX itself is built with CUDA support.

One downside of the GPU messaging API is that performance may degrade because of the delay in posting the receive for the incoming GPU data: the receiver does not know which UCX tag was used until the host-side message arrives.

Achieving maximum throughput and minimum latency involves transport protocol selection, memory management optimization, RDMA tuning, UCX configuration, and environment variable tuning.

Related reference material covers changes in CUDA 12.2; design considerations (lazy unpinning optimization, registration cache, unpin callback, supported systems, PCI BAR sizes, tokens usage, synchronization and memory ordering); and how to perform specific tasks, including displaying GPU BAR space, pinning GPU memory, and unpinning GPU memory.
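To compare the two paths under Open MPI, the same benchmark can be launched twice, once with the UCX default and once with `UCX_IB_GPU_DIRECT_RDMA=0`. The sketch below only builds the two command lines. It assumes Open MPI's `mpirun` with the UCX PML selected through `--mca pml ucx` and variables exported with `-x`; the host names and the benchmark invocation are placeholders, and leaving the variable unset for the "enabled" run relies on the UCX default rather than forcing the feature on.

```python
import shlex


def mpirun_command(benchmark, hosts, gpu_direct_rdma=True, extra_env=None):
    """Build an mpirun argument list for one side of an A/B comparison."""
    env = dict(extra_env or {})
    if not gpu_direct_rdma:
        # Value taken from the experiment described in the text.
        env["UCX_IB_GPU_DIRECT_RDMA"] = "0"
    cmd = ["mpirun", "-np", str(len(hosts)), "--host", ",".join(hosts),
           "--mca", "pml", "ucx"]
    # Export each variable to the remote ranks in a stable order.
    for name, value in sorted(env.items()):
        cmd += ["-x", f"{name}={value}"]
    return cmd + list(benchmark)


if __name__ == "__main__":
    # Placeholder hosts and benchmark; substitute the real ones.
    hosts = ["node1", "node2"]
    bench = ["./osu_bw", "D", "D"]
    for enabled in (True, False):
        print(shlex.join(mpirun_command(bench, hosts, gpu_direct_rdma=enabled)))
```

Keeping everything else identical between the two runs (hosts, message sizes, GPU and NIC selection) is what makes the comparison meaningful; any additional UCX variables can be passed through `extra_env` so that both runs share them.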
