NVIDIA GPUDirect | NVIDIA Developer

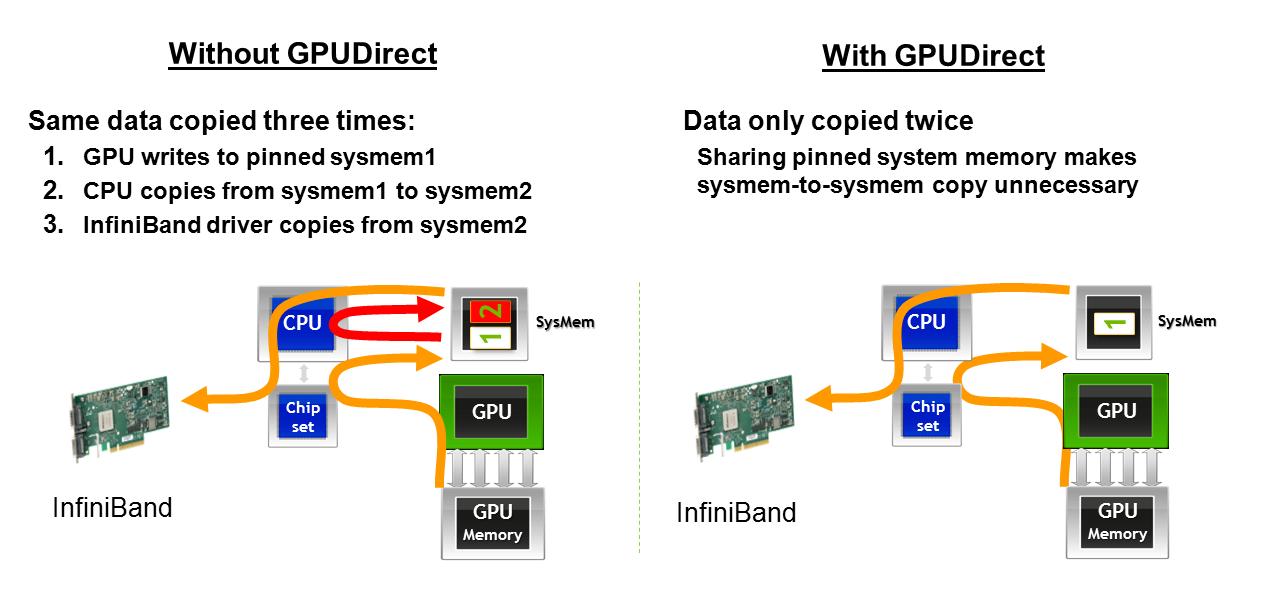

Designed specifically for the needs of GPU acceleration, GPUDirect RDMA provides direct communication between NVIDIA GPUs in remote systems. This bypasses the system CPUs and the buffer copies through system memory that would otherwise be required, which NVIDIA credits with 10x better performance.

GPUDirect for Video is available to application developers through third-party partner SDKs. It gives developers full control to stream video into and out of the GPU at sub-frame transfer times in OpenGL, DirectX, or CUDA, on Windows or Linux.

Earlier releases of GPUDirect required extra setup, which is only necessary when using NVIDIA drivers older than v270.41.19. That version was developed jointly by NVIDIA and Mellanox and consisted of new interfaces in the CUDA and Mellanox drivers plus a Linux kernel patch, with installation instructions on the NVIDIA developer site. It was supported for Tesla M and S datacenter products on RHEL only.

The NVIDIA GPU driver package provides a kernel module, nvidia-peermem, which gives NVIDIA InfiniBand-based HCAs (host channel adapters) direct peer-to-peer read and write access to the NVIDIA GPU's video memory.

A typical developer question shows how these pieces are used in practice: "My next goal is to directly send this data to GPU memory (Tesla K80) using GPUDirect RDMA. I have reviewed the following document and also learned that the same task can be accomplished using GPUDirect Async."

GPUDirect® Storage creates a direct data path between local or remote storage, such as NVMe or NVMe over Fabrics (NVMe-oF), and GPU memory. By enabling a direct memory access (DMA) engine near the network adapter or storage, it moves data into or out of GPU memory without burdening the CPU.
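The two checks implied above (is the driver older than v270.41.19, and is the peer-memory module loaded) can be sketched in a few lines of Python. This is a minimal illustration, not NVIDIA tooling: the function names are hypothetical, and it assumes the kernel lists the module as `nvidia_peermem` (hyphen normalized to an underscore) in `/proc/modules`-style text.

```python
import re
from typing import Tuple

# Cutoff taken from the text: the legacy setup applies to drivers older than v270.41.19.
LEGACY_CUTOFF = (270, 41, 19)


def parse_driver_version(version: str) -> Tuple[int, ...]:
    """Turn a string such as 'v270.41.19' into a tuple of ints, (270, 41, 19)."""
    parts = re.findall(r"\d+", version)
    if not parts:
        raise ValueError(f"no numeric components in {version!r}")
    return tuple(int(p) for p in parts)


def needs_legacy_setup(version: str) -> bool:
    """True if the driver version is strictly older than the cutoff."""
    return parse_driver_version(version) < LEGACY_CUTOFF


def peermem_loaded(proc_modules_text: str) -> bool:
    """True if a module named nvidia_peermem is listed.

    Expects text in the format of /proc/modules, where the module name is
    the first whitespace-separated field on each line. The underscore
    spelling is an assumption about how the kernel reports the module.
    """
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields and fields[0] == "nvidia_peermem":
            return True
    return False
```

On a Linux host, the second check could be fed the contents of `/proc/modules`, for example `peermem_loaded(open("/proc/modules").read())`. Only the first field is compared so that a line for another module that merely lists `nvidia_peermem` as a dependent does not count as a match.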

24 May 2022, Profesional Review

The purpose of the design guide is to show OEMs, CSPs, and ODMs how to design their servers to take advantage of GPUDirect Storage, and to help application developers understand where GPUDirect Storage can bring value to application performance.

GPUDirect® Storage (GDS) enables a direct data path for direct memory access (DMA) transfers between GPU memory and storage, which avoids a bounce buffer through the CPU. Using this direct path can relieve system bandwidth bottlenecks and decrease the latency and utilization load on the CPU. GPUDirect Storage is designed for workloads that need to move large amounts of data efficiently between storage and GPUs. By avoiding unnecessary CPU copies, it helps improve throughput, reduce latency, and free CPU resources for other work.

As a result of the NVIDIA co-development effort with Mellanox Technologies, Mellanox provides support for GPUDirect technology, which eliminates CPU bandwidth and latency bottlenecks by using direct memory access (DMA) between GPUs and Mellanox HCAs, significantly improving RDMA applications such as MPI.
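To make the bounce-buffer point concrete, here is a toy accounting model in Python. It is not a benchmark and calls no GPUDirect API. All names are hypothetical, and the model simply assumes that the conventional path stages every payload byte once in host memory before copying it to the GPU, while the direct path moves it once.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransferCost:
    system_memory_bytes: int  # bytes staged in host RAM along the way
    copies: int               # number of times each payload byte is moved


def bounce_buffer_path(payload_bytes: int) -> TransferCost:
    """Storage -> host bounce buffer, then host -> GPU memory (two moves)."""
    return TransferCost(system_memory_bytes=payload_bytes, copies=2)


def direct_path(payload_bytes: int) -> TransferCost:
    """Storage -> GPU memory in a single DMA transfer; nothing staged in host RAM."""
    return TransferCost(system_memory_bytes=0, copies=1)


def host_traffic_saved(payload_bytes: int) -> int:
    """Host-memory bytes avoided by taking the direct path, under this model."""
    staged = bounce_buffer_path(payload_bytes).system_memory_bytes
    return staged - direct_path(payload_bytes).system_memory_bytes
```

Under these assumptions, a 1 GiB read stages 1 GiB in system memory on the bounce-buffer path and none on the direct path. That is the bandwidth relief the text describes, although real savings depend on the platform.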
