Mujin Kwun, Carles Domingo-Enrich: Energy-Based Fine-Tuning of Language Models

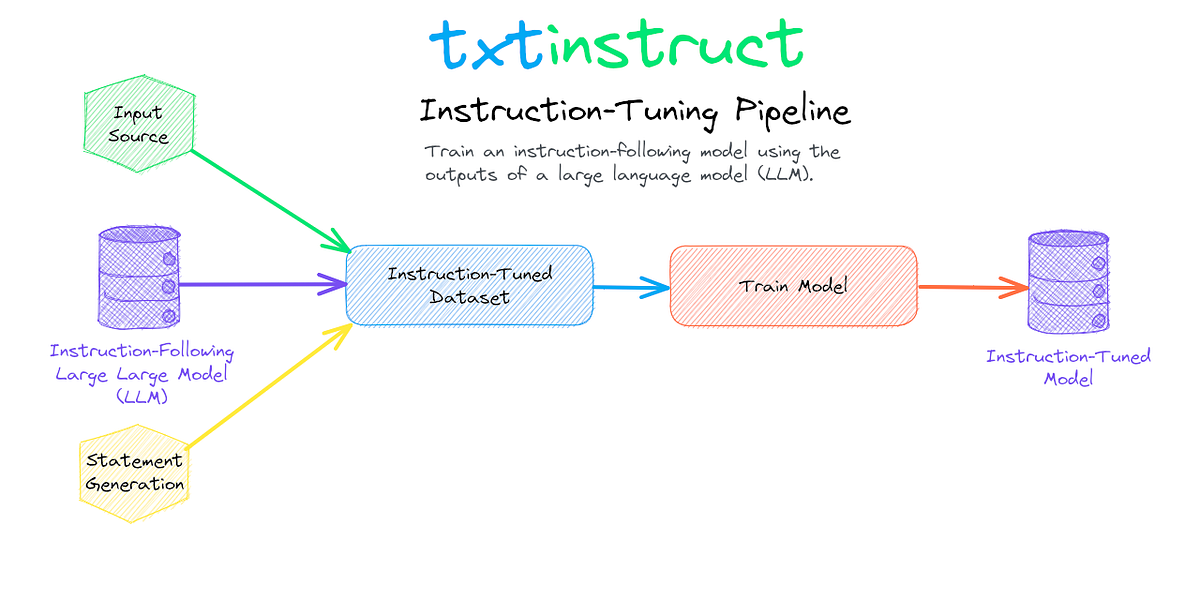

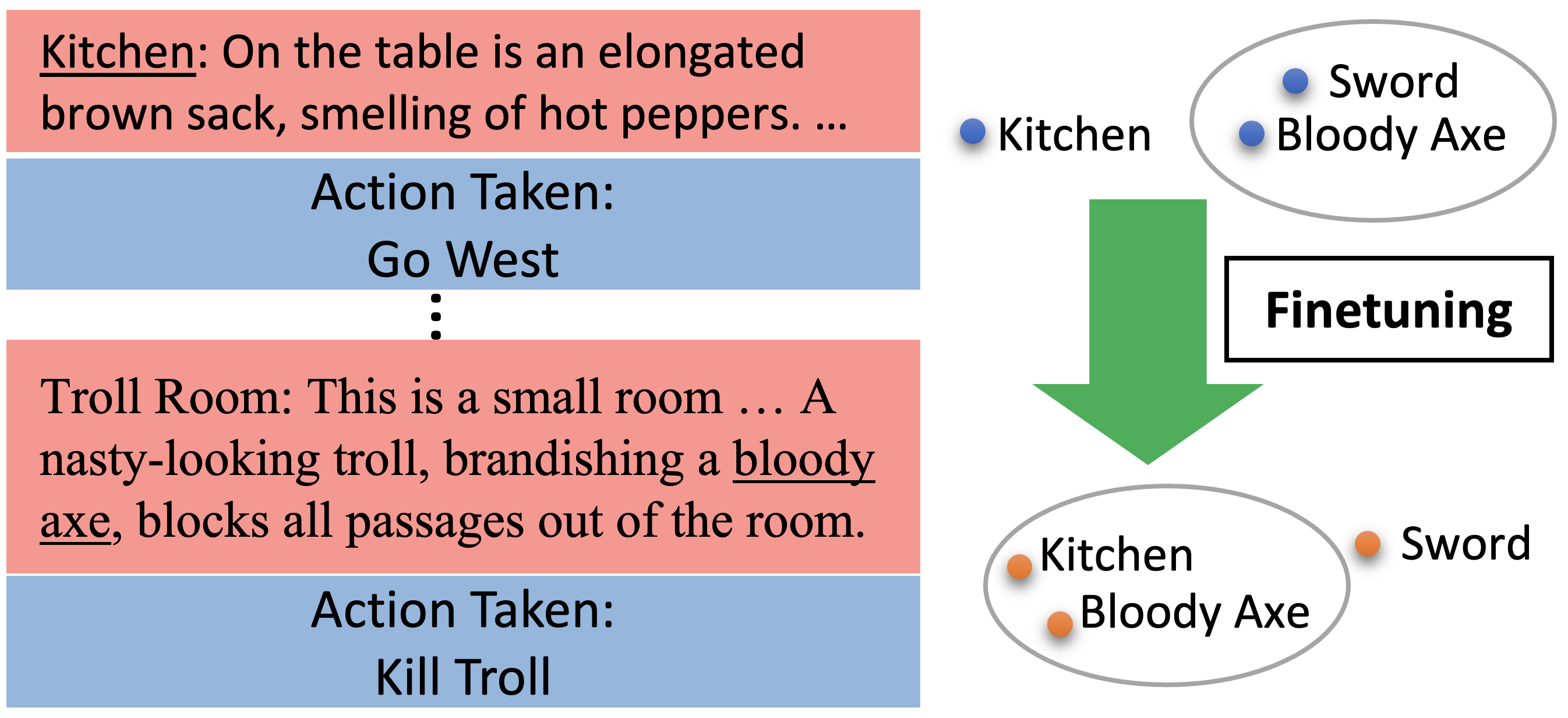

We introduce a feature-matching objective for language model fine-tuning that targets sequence-level statistics of the completion distribution, providing dense semantic feedback without requiring a task-specific verifier or preference model. Energy-based fine-tuning (EBFT) introduces a feature-matching objective that measures whether the statistics of model-generated completions match those of ground-truth completions in the activation space of a frozen pre-trained model.
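To make the idea of matching statistics in a frozen model's activation space concrete, here is a minimal sketch in plain Python. It is not the paper's implementation. The function `frozen_features` is a hypothetical stand-in for a frozen pre-trained model (it mean-pools a fixed pseudo-random vector per token), and the squared distance between mean features is an assumed choice of statistic; the actual EBFT objective may differ.

```python
import random

DIM = 8  # size of the toy feature vectors (arbitrary choice for this sketch)

def frozen_features(tokens):
    """Hypothetical stand-in for a frozen pre-trained model.

    Each token gets a fixed pseudo-random vector (seeded by the token string),
    and the sequence feature is the mean of those vectors.
    """
    vec = [0.0] * DIM
    for tok in tokens:
        rng = random.Random(tok)  # same token always yields the same vector
        for i in range(DIM):
            vec[i] += rng.gauss(0.0, 1.0)
    n = max(len(tokens), 1)
    return [v / n for v in vec]

def mean_feature(completions):
    """Average the sequence features over a set of completions."""
    feats = [frozen_features(c) for c in completions]
    return [sum(f[i] for f in feats) / len(feats) for i in range(DIM)]

def feature_matching_loss(generated, reference):
    """Squared distance between mean features of two completion sets.

    Assumed form of the statistic: only first moments are compared.
    """
    mu_gen = mean_feature(generated)
    mu_ref = mean_feature(reference)
    return sum((g - r) ** 2 for g, r in zip(mu_gen, mu_ref))

reference = [
    "def add(a, b): return a + b".split(),
    "def sub(a, b): return a - b".split(),
]
generated = [
    "def add(a, b): return a * b".split(),
    "print hello world".split(),
]

print(feature_matching_loss(generated, reference))
print(feature_matching_loss(reference, reference))  # identical sets give 0.0
```

The loss is computed from whole completions rather than token-by-token agreement, which is the sense in which the feedback is sequence-level. No verifier or preference model appears anywhere in the computation; only the reference completions and the frozen feature map are needed.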

In our experiments, EBFT matches RLVR on accuracy without needing any reward or verifier, while also improving perplexity and the quality of the model's output distribution. It works on any text data, scales consistently from 1.5B to 7B parameters, and generalizes better out of distribution.

This study proposes a novel fine-tuning approach for LLMs that is specifically designed for the translation task, eliminating the need for the abundant parallel data that traditional translation models usually depend on.

Accessible explanation of "Matching Features, Not Tokens: Energy-Based Fine-Tuning of Language Models" by Samy Jelassi, Mujin Kwun, Rosie Zhao, Yuanzhi Li, Nicolo Fusi, Yilun Du, Sham M. Kakade, Carles Domingo-Enrich. This paper introduces energy-based fine-tuning (EBFT), a novel method that optimi…

I am a senior researcher at Microsoft Research New England, based in Cambridge, MA. I work on generative AI models (diffusion and flow models, language models) and related topics at the intersection of machine learning, statistics, and AI for science.

These datasets and experimental steps highlight the comprehensive evaluation of the proposed energy-based fine-tuning (EBFT) method across various coding and translation tasks.

Energy-based modeling: an energy function assigns a scalar score to each possible output, where low energy means good and high energy means bad. The score does not need to be a proper probability (normalized over all sequences), which is what makes it tractable.
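The point about not needing normalization can be illustrated with a short sketch. It assumes the common energy-based convention that preference is proportional to exp(-E), which the text above does not state explicitly; the function names and the example energies are hypothetical. Comparing two outputs needs only their energy difference, while turning energies into probabilities needs a sum over every candidate, which is feasible here only because the candidate set is tiny.

```python
import math

def relative_preference(energy_a, energy_b):
    """How strongly output a is favored over output b, assuming p ∝ exp(-E).

    The normalizing constant cancels in the ratio, so it is never computed.
    """
    return math.exp(-(energy_a - energy_b))

def boltzmann_weights(energies):
    """Normalize exp(-E) over a small, explicit list of candidates.

    Over all possible sequences this sum would be intractable; the toy list
    here is an assumption made purely for illustration.
    """
    lowest = min(energies)  # shift by the minimum for numerical stability
    unnormalized = [math.exp(-(e - lowest)) for e in energies]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]

# Hypothetical energies for three candidate completions (lower is better).
energies = [0.0, 1.0, 2.0]

print(relative_preference(energies[0], energies[1]))  # ratio from the difference alone
print(boltzmann_weights(energies))                    # requires summing over all candidates
```

The ratio of the first two normalized weights equals the value returned by `relative_preference`, which shows that ranking outputs never depended on the normalizer.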

