Fine Tuning Language Models For Text Generation Peerdh

By following these steps, you can effectively fine-tune a language model for text generation. This process not only enhances the model's performance but also tailors it to your specific needs.

A thorough look at the best free open-source AI models available in 2025 across text, code, and image generation. Whether you want to run models locally, self-host on your own server, or try them instantly through a browser, this article breaks down which models actually deliver, what licenses let you use them commercially, and how to access them without spending a dollar.

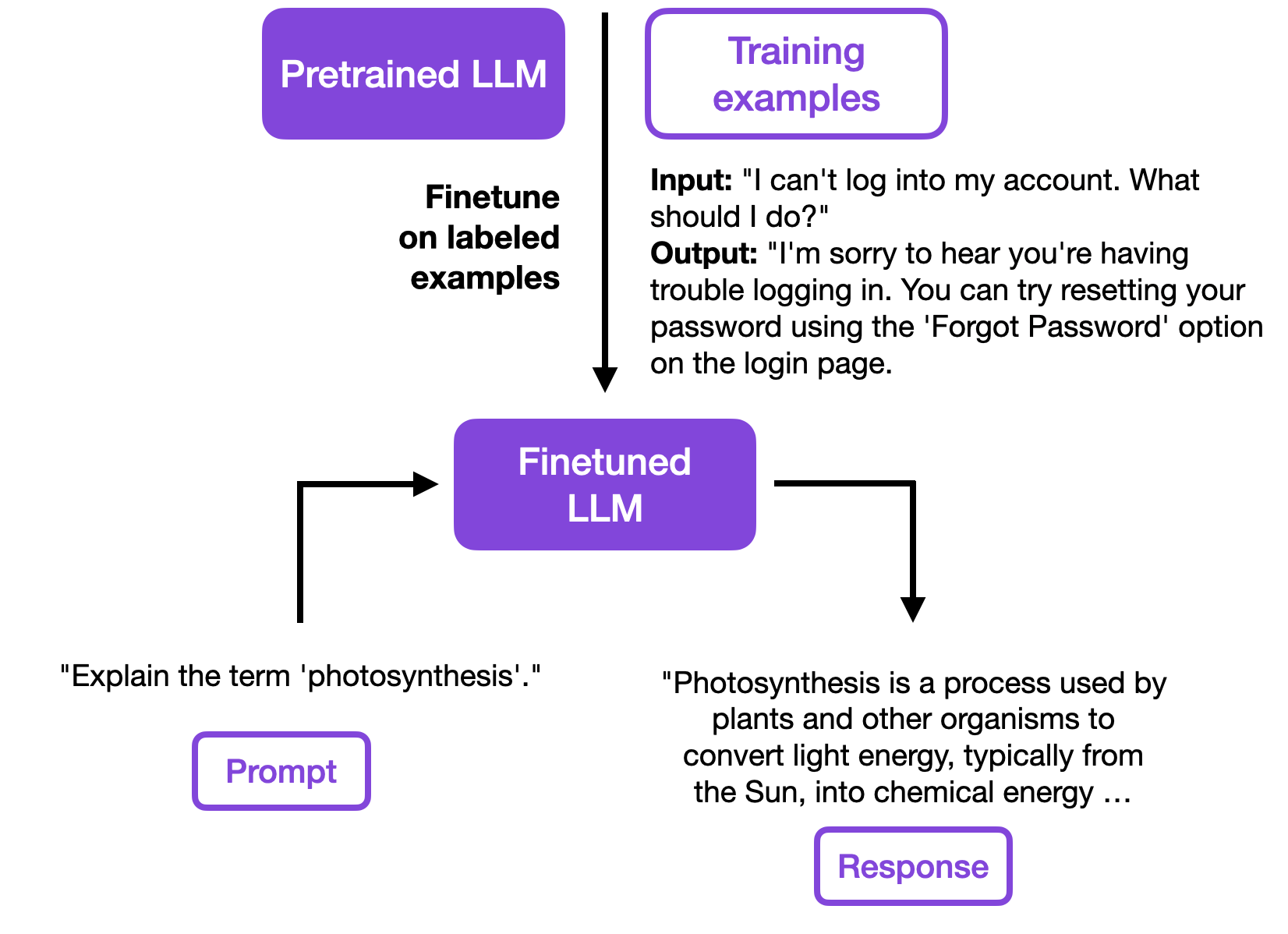

This technical report thoroughly examines the process of fine-tuning large language models (LLMs), integrating theoretical insights and practical applications. It begins by tracing the historical development of LLMs, emphasising their evolution from traditional natural language processing (NLP) models and their pivotal role in modern AI systems.

This study presents a comprehensive literature review of transformer-based models for text generation in multiple languages and application domains. The primary objective was to identify the techniques employed, the languages and domains represented, and the extent to which the proposed models qualify as foundation models.

Fine-tuning refers to the process of taking a pre-trained model and adapting it to a specific task by training it further on a smaller, domain-specific dataset. In this blog post, we will explore the fundamental concepts, usage methods, common practices, and best practices for fine-tuning GPT-2 for text generation using PyTorch.

Fine-Tuning Large Language Models

In this work, we develop and release Llama 2, a collection of pretrained and fine-tuned large language models (LLMs) ranging in scale from 7 billion to 70 billion parameters. Our fine-tuned LLMs, called Llama 2-Chat, are optimized for dialogue use cases. Our models outperform open-source chat models on most benchmarks we tested, and based on our human evaluations for helpfulness and safety, may be a suitable substitute for closed-source models.

Discover Llama 4's class-leading AI models, Scout and Maverick. Experience top performance, multimodality, low costs, and unparalleled efficiency.

This project focuses on fine-tuning state-of-the-art AI language models for text generation tasks using various techniques and libraries. The goal is to leverage pre-trained models and adapt them to specific text generation tasks, such as summarization, question answering, or dialogue generation.

This study aims to bridge this gap by developing a robust framework that utilizes fine-tuned language models, classification techniques, and contextual story generation to generate and classify children’s stories based on their suitability.
