MMLongBench-Doc: A Comprehensive Benchmark for Evaluating Long Context

This work presents MMLongBench-Doc, a long-context, multi-modal benchmark comprising 1,062 expert-annotated questions. Distinct from previous datasets, it is constructed upon 130 lengthy PDF-formatted documents with an average of 49.4 pages and 20,971 textual tokens. A second description gives different totals: MMLongBench-Doc comprises 135 documents and 1,091 questions, each accompanied by a short, deterministic reference answer and detailed meta-information.

The 135-document description reports an average of 47.5 pages and 21,214 textual tokens per document, with 1,091 expert-annotated questions (1,082 in one variant of the same description). To our best knowledge, MMLongBench-Doc is the first comprehensive, qualified, and easy-to-use benchmark on the long-context document understanding (DU) task. More detailed descriptions and comparisons are presented in Table 1.

Applying LMs directly to documents is efficient (no need for document parsing) and effective (thorough perception of layout structures and visualized contexts such as charts, tables, and diagrams), but there is no benchmark for evaluating the long-context document understanding capabilities of VLMs; MMLongBench-Doc is proposed to fill that gap.

Two related benchmarks appear alongside it. MMLongBench is introduced as the first benchmark covering a diverse set of long-context vision-language tasks, to evaluate LCVLMs effectively and thoroughly. LongBench is introduced as the first bilingual, multi-task benchmark for long-context understanding, enabling a more rigorous evaluation of the long-context understanding of large language models. MMLongBench-Doc, by contrast, is a comprehensive benchmark to evaluate large vision-language models' understanding of long, multi-modal documents.

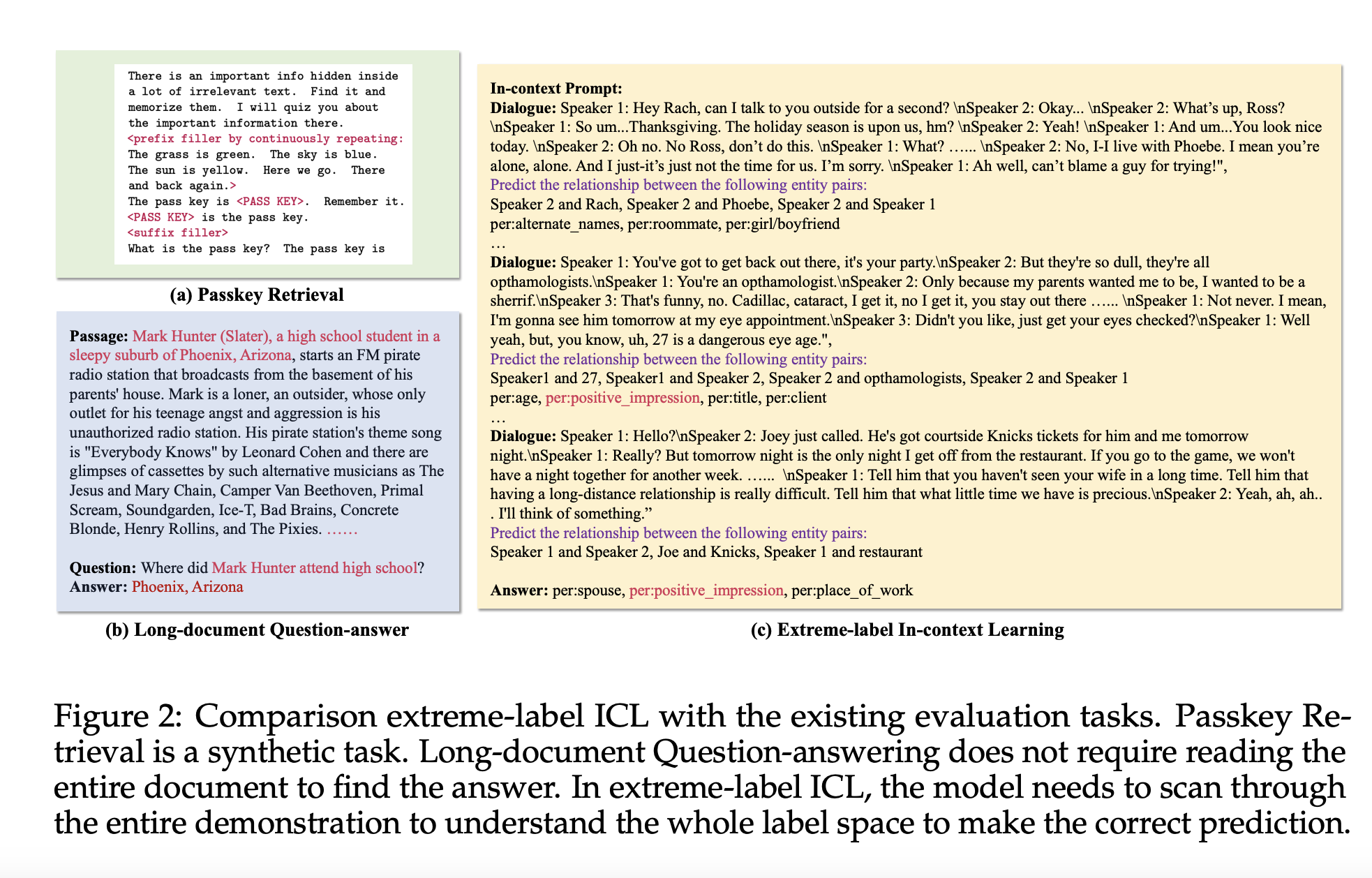
