GitHub mrconter1 The Long Multiplication Benchmark: Evaluating LLMs

GitHub mnismt LLMs Long Context Benchmark: A Visualization Website

This repository offers a scalable method to validate the capability of future LLMs not only to read long contexts but also to use them constructively. By using long multiplication as a benchmark, it provides a straightforward way to evaluate how well LLMs make meaningful use of long contexts. The repository describes itself as evaluating LLMs' context handling and text generation using long multiplication. See benchmark.py at main in mrconter1's The Long Multiplication Benchmark repository.
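The evaluation idea described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the repository's benchmark.py: the names `make_problem`, `build_prompt`, `extract_answer`, and `accuracy_by_length` are hypothetical, and `ask` stands in for whatever function sends a prompt to a model and returns its text reply.

```python
import random
import re
from typing import Callable, Dict, Iterable, Optional, Tuple


def make_problem(digits: int, rng: random.Random) -> Tuple[int, int]:
    """Return two random integers with exactly `digits` decimal digits each."""
    low, high = 10 ** (digits - 1), 10 ** digits - 1
    return rng.randint(low, high), rng.randint(low, high)


def build_prompt(a: int, b: int) -> str:
    """Hypothetical prompt wording; the real benchmark may phrase this differently."""
    return (
        f"Multiply {a} by {b} using long multiplication. "
        "Show every partial product, then give the final answer "
        "as a plain integer on the last line."
    )


def extract_answer(response: str) -> Optional[int]:
    """Treat the last run of digits (commas allowed) in the reply as the answer."""
    matches = re.findall(r"\d[\d,]*", response)
    return int(matches[-1].replace(",", "")) if matches else None


def accuracy_by_length(
    ask: Callable[[str], str],
    digit_lengths: Iterable[int],
    trials: int = 5,
    seed: int = 0,
) -> Dict[int, float]:
    """Fraction of exactly correct products for each operand length."""
    rng = random.Random(seed)
    results: Dict[int, float] = {}
    for digits in digit_lengths:
        correct = 0
        for _ in range(trials):
            a, b = make_problem(digits, rng)
            # Python integers are arbitrary precision, so a * b is exact.
            if extract_answer(ask(build_prompt(a, b))) == a * b:
                correct += 1
        results[digits] = correct / trials
    return results
```

Calling `accuracy_by_length(ask, [5, 10, 20, 50])` with a real model wrapper would give one accuracy figure per operand length, which is the kind of curve such a benchmark is meant to produce. Exact-match scoring is a design choice here: a product with a single wrong digit counts as a failure.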

GitHub Strivin0311 Long LLMs Learning: A Repository Sharing The

The scope of LLM reasoning for differential privacy: we present an initial benchmark for evaluating LLMs' ability to determine whether an algorithm satisfies a stated DP guarantee, a core capability underpinning future end-to-end systems for designing, validating, and deploying differentially private algorithms.

The long multiplication benchmark evaluates large language models (LLMs) on their ability to handle and utilize long contexts to solve multiplication problems.

LongBench v2 is designed to assess the ability of LLMs to handle long-context problems requiring deep understanding and reasoning across real-world multitasks. LongBench v2 has the following features: (1) Length: context length ranging from 8k to 2M words, with the majority under 128k.
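To see why long multiplication exercises context use, it helps to look at how much intermediate working the schoolbook method writes down. The sketch below is an illustration only, with hypothetical function names; it assumes non-negative integers and is not taken from any of the repositories mentioned here. Two n-digit operands produce n partial-product rows of up to 2n digits each, so the working grows roughly quadratically with n.

```python
from typing import List


def partial_products(a: int, b: int) -> List[int]:
    """Schoolbook rows: a times each digit of b, shifted by that digit's place value."""
    rows = []
    for place, digit in enumerate(reversed(str(b))):
        rows.append(a * int(digit) * 10 ** place)
    return rows


def working_digits(a: int, b: int) -> int:
    """Total number of digits written across all partial-product rows."""
    return sum(len(str(row)) for row in partial_products(a, b))
```

For 123 × 456 the rows are 738, 6150, and 49200, which sum to 56088. A model that solves the problem step by step has to produce every row and then add them correctly, so longer operands require both a longer output and reliable use of what was written earlier.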

Results from the long multiplication benchmark show that newer models can better handle longer contexts, emphasizing the need for continuous improvement.

However, existing real-task-based long-context evaluation benchmarks have a few major shortcomings. For instance, some needle-in-a-haystack-like benchmarks are too synthetic and therefore do not represent the real-world usage of LLMs.

In this paper, we introduce LongBench, the first bilingual, multi-task benchmark for long context understanding, enabling a more rigorous evaluation of long context understanding.

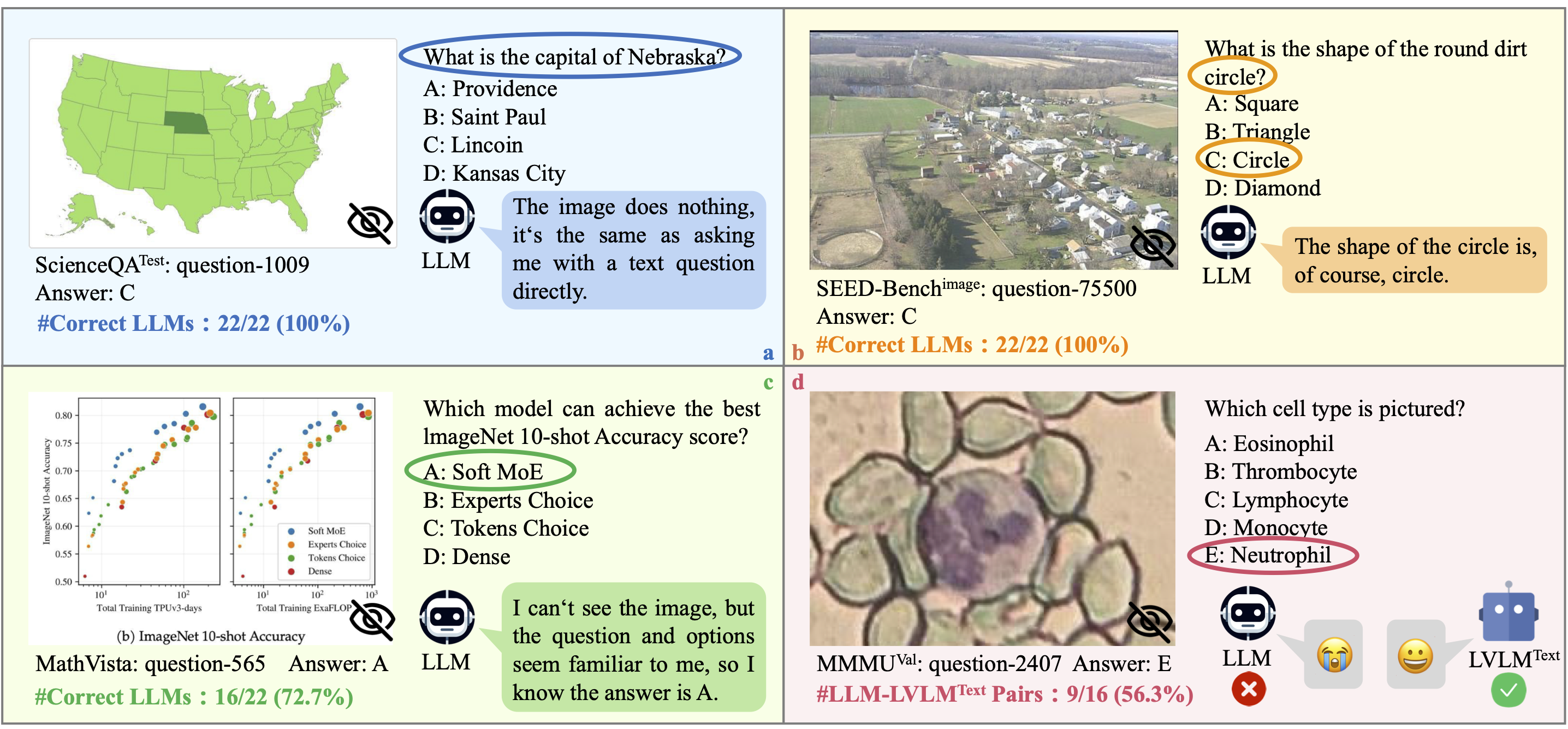
