Benchmark LLMs with LiteLLM

🤝 Schedule a 1-on-1 session: book a 1-on-1 session with Krrish and Ishaan, the founders, to discuss any issues, provide feedback, or explore how we can improve LiteLLM for you. Compare AI models in one AI leaderboard with rankings for top AI models, best AI models, and best LLMs by price, speed, and performance.

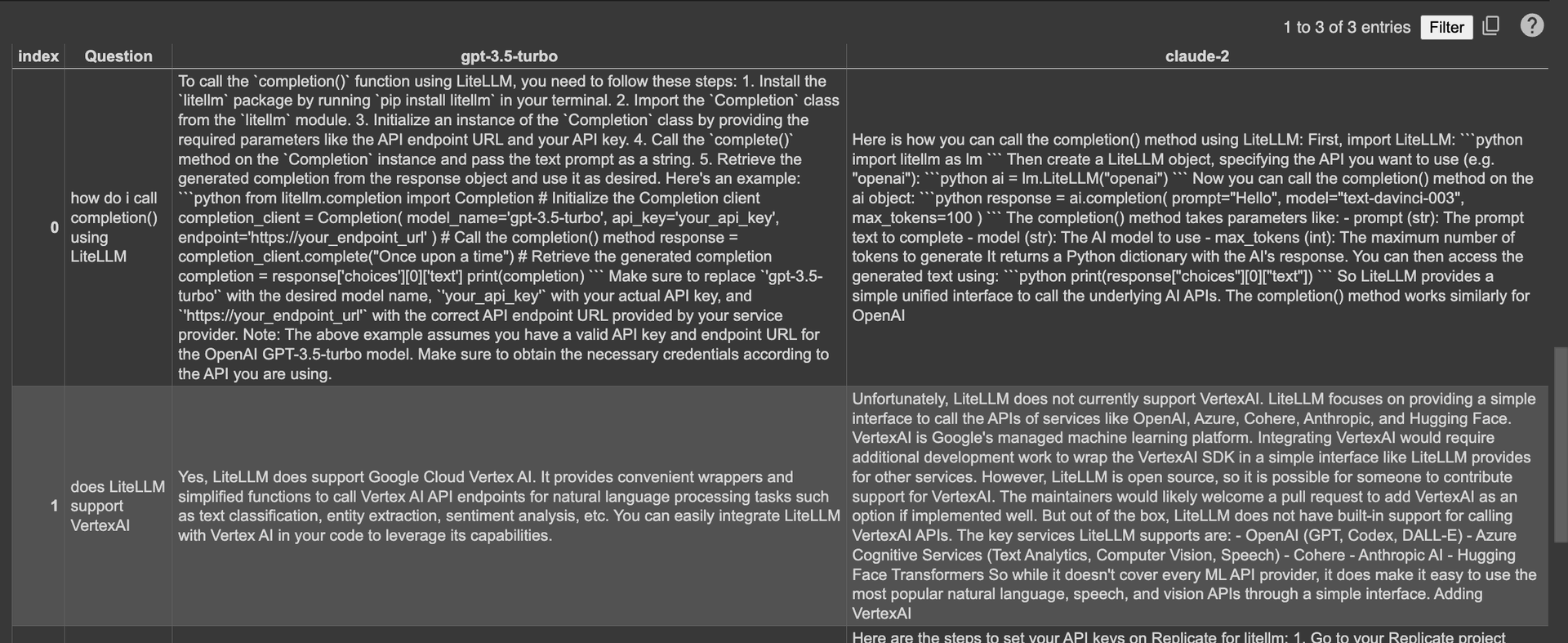

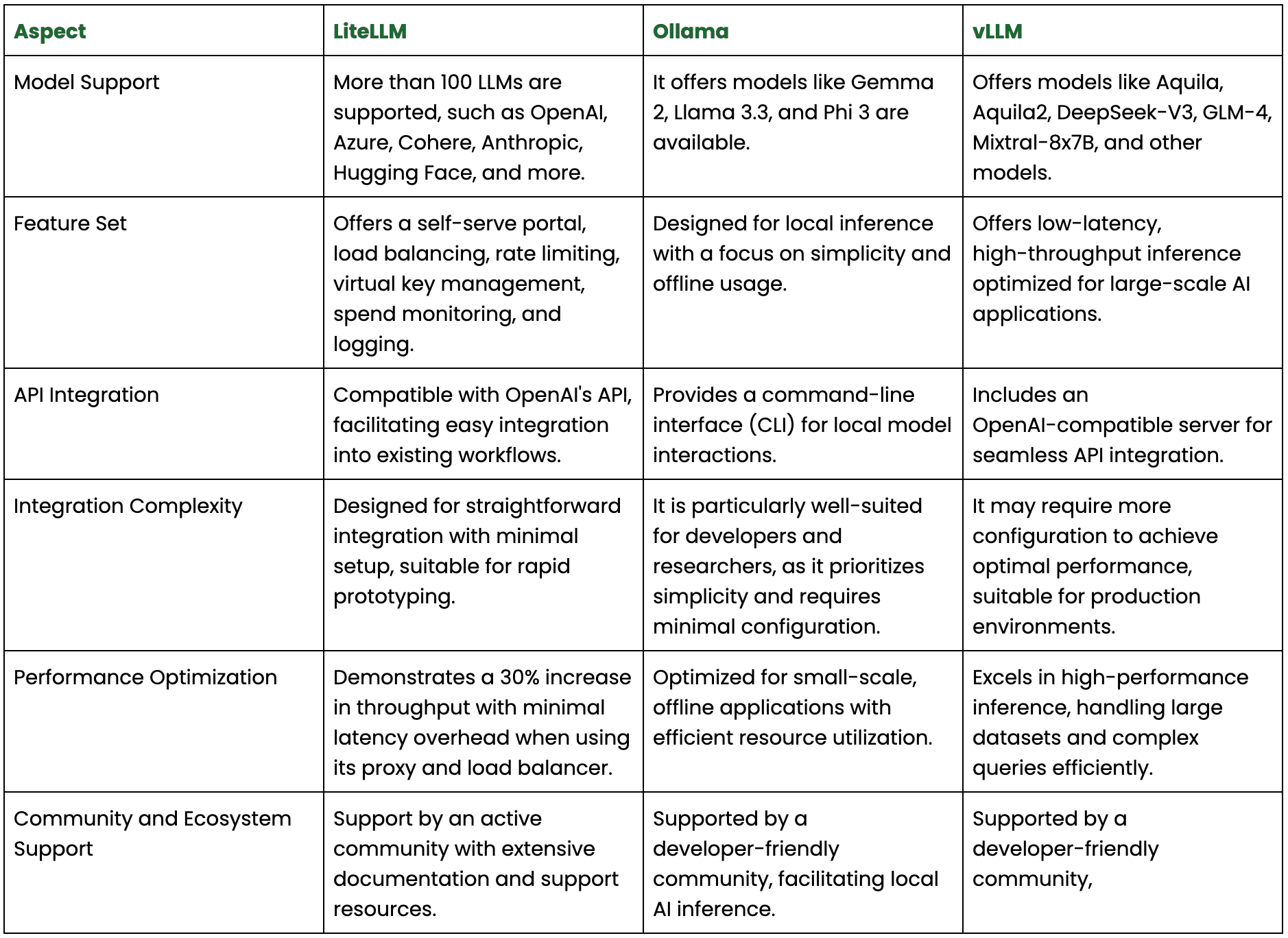

Comparing LLMs on a Test Set Using LiteLLM

Leaderboards compare and rank the performance of over 100 AI models (LLMs) across key metrics including intelligence, price, performance and speed (output speed in tokens per second and latency as time to first token, TTFT), context window, and others. One such leaderboard compares 109 ranked models and 194 tracked AI models across 152 benchmarks with BenchLM scoring, pricing, context window, and runtime tradeoffs, and offers rankings and head-to-head comparisons for GPT-5, Claude, Gemini, DeepSeek, Llama, and more.

Running those comparisons yourself normally means juggling a separate SDK per provider. LiteLLM removes that friction:

- Unified API: one interface for 100+ LLMs, no provider-specific SDK juggling
- Drop-in OpenAI compatibility: swap providers without rewriting your code
- Production-ready gateway: virtual keys, spend tracking, guardrails, load balancing, and an admin dashboard out of the box
- 8 ms p95 latency at 1k RPS (benchmarks)

LiteLLM is the fastest way to route Python calls across 100+ LLM providers without rewriting your integration layer, but "best" depends on what you're comparing.
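Because every provider is reached through the same call, comparing models on a small test set reduces to a loop. The sketch below is a minimal illustration, not LiteLLM's own benchmarking tool: `benchmark_models` and `litellm_call` are hypothetical helper names, and the harness takes the model call as a parameter so it can be exercised with a stub before spending real tokens. It assumes `litellm.completion(model=..., messages=...)` returns an OpenAI-style response object.

```python
import statistics
import time
from typing import Callable, Dict, List


def benchmark_models(
    models: List[str],
    prompts: List[str],
    call_model: Callable[[str, str], str],
    clock: Callable[[], float] = time.perf_counter,
) -> Dict[str, Dict[str, float]]:
    """Time call_model(model, prompt) for every model/prompt pair.

    Hypothetical helper: returns per-model call count, mean latency,
    and worst-case latency in seconds. Assumes prompts is non-empty.
    """
    results: Dict[str, Dict[str, float]] = {}
    for model in models:
        latencies = []
        for prompt in prompts:
            start = clock()
            call_model(model, prompt)
            latencies.append(clock() - start)
        results[model] = {
            "calls": len(latencies),
            "mean_s": statistics.mean(latencies),
            "max_s": max(latencies),
        }
    return results


def litellm_call(model: str, prompt: str) -> str:
    """Hypothetical adapter around litellm.completion.

    litellm is imported lazily so the harness above can be tested
    without the package or any provider API keys.
    """
    import litellm

    response = litellm.completion(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content
```

With provider keys set in the environment, a call such as `benchmark_models(["<model-a>", "<model-b>"], prompts, litellm_call)` would time each model on the same prompts; the model identifiers are placeholders to be replaced with names your providers accept. Latency is only one axis, so quality scoring of the returned text would need its own step.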

LiteLLM Configs: Reliably Call 100+ LLMs | HackerNoon

Compare the latest LLM benchmarks for GPT, Claude, Gemini, and more, with updated rankings across reasoning, coding, math, and multilingual tasks alongside pricing and speed data. Benchmarks for the LiteLLM gateway (proxy server) are run against a fake OpenAI endpoint. Compare the best LLMs in one LLM leaderboard with rankings, pricing, speed, context windows, and benchmark scores. Send your LLM requests, responses, costs, and performance data to Elasticsearch for analytics and monitoring using OpenTelemetry.
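Gateway benchmark figures such as a p95 latency are percentile summaries of per-request timings. As a rough sketch of how such a number can be derived from collected samples, the function below uses the nearest-rank method; `percentile_nearest_rank` is a hypothetical helper, and the published LiteLLM benchmarks may compute their percentiles differently.

```python
from typing import Sequence


def percentile_nearest_rank(samples: Sequence[float], p: int) -> float:
    """Return the p-th percentile (p an integer in 1..100) by nearest rank.

    Nearest rank: sort the samples, then take the value at position
    ceil(p / 100 * n), counting from 1.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    if not 1 <= p <= 100:
        raise ValueError("p must be between 1 and 100")
    ordered = sorted(samples)
    # Integer ceiling division avoids floating-point rounding surprises.
    rank = (p * len(ordered) + 99) // 100
    return ordered[rank - 1]


# Illustrative latencies in milliseconds (made-up values, not measurements).
latencies_ms = [5.0, 6.0, 6.5, 7.0, 7.2, 7.5, 7.8, 8.0, 9.0, 30.0]
p50 = percentile_nearest_rank(latencies_ms, 50)
p95 = percentile_nearest_rank(latencies_ms, 95)
```

The sample values are invented to show why tail percentiles matter: a single slow request barely moves the median but dominates the p95.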

Use LiteLLM to Benchmark 100+ LLMs 92% Faster: Try It Here With Lm

LiteLLM: Manage 100+ LLMs Seamlessly With Ease and Efficiency
