LongICLBench Benchmark Evaluating Large Language Models On Long In

The authors developed LongICLBench as a complement to earlier benchmarks, which concentrated on tasks such as long-document summarization, question answering (QA), or retrieval. LongICLBench focuses instead on long in-context learning, and was created to evaluate large language models (LLMs) comprehensively on extreme-label classification with in-context learning.

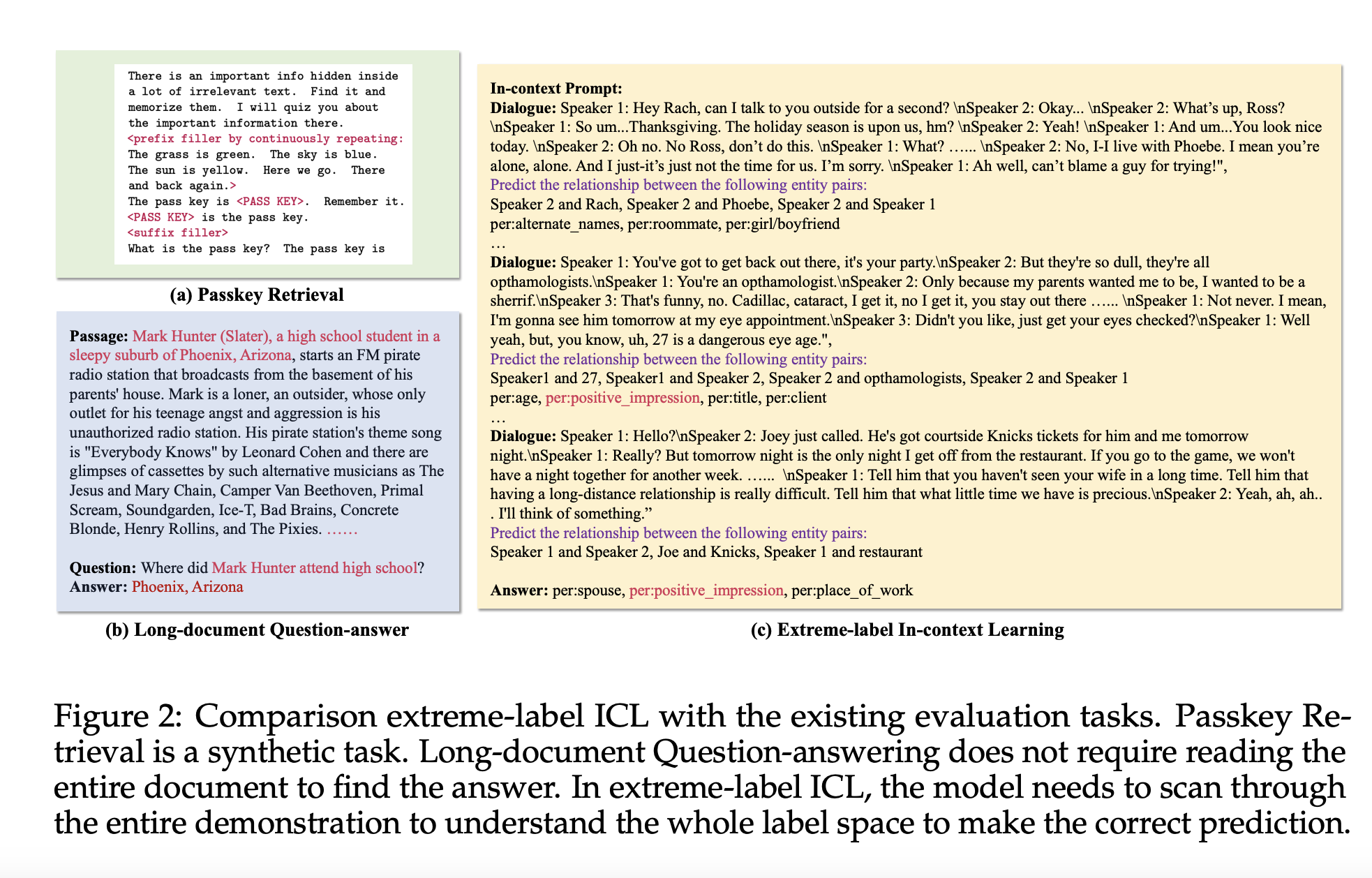

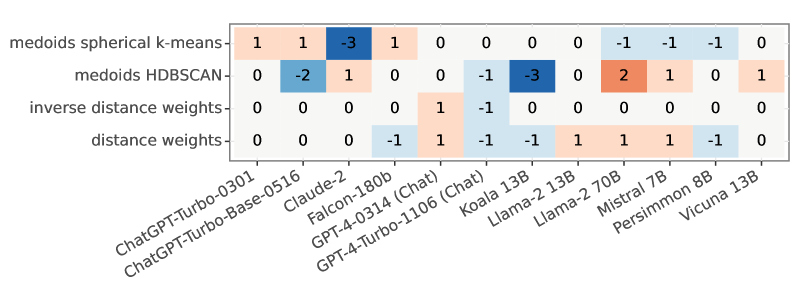

The paper proposes LongICLBench, a new benchmark for evaluating long-context LLMs. The core idea is to construct the benchmark from extreme-label classification datasets. Researchers from the University of Waterloo, Carnegie Mellon University, and the Vector Institute, Toronto, introduced it specifically to evaluate how LLMs process long context sequences in extreme-label classification tasks. The benchmark requires an LLM to comprehend the entire input and recognize the massive label space in order to make correct predictions, and the authors evaluate 13 long-context LLMs on it. In summary, testing across models and datasets showed that LLMs perform well on less complex tasks but still need improvement on longer, more complex sequences.
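To make the idea of building a long in-context prompt from an extreme-label classification dataset concrete, here is a minimal sketch. The function name `build_icl_prompt`, the `Text:`/`Label:` template, and the `shots_per_label` parameter are assumptions made for illustration; they are not taken from the benchmark's own code.

```python
from collections import Counter


def build_icl_prompt(demos, query, shots_per_label=1):
    """Concatenate labeled demonstrations, then append the unlabeled query.

    demos: list of (text, label) pairs (hypothetical format).
    shots_per_label: maximum demonstrations kept for each label.
    """
    seen = Counter()
    blocks = []
    for text, label in demos:
        # Skip extra demonstrations once a label has enough shots.
        if seen[label] >= shots_per_label:
            continue
        seen[label] += 1
        blocks.append(f"Text: {text}\nLabel: {label}")
    # The model is expected to continue the final, unlabeled entry.
    blocks.append(f"Text: {query}\nLabel:")
    return "\n\n".join(blocks)


demos = [
    ("book a flight", "travel"),
    ("play jazz", "music"),
    ("cheap flights", "travel"),
]
prompt = build_icl_prompt(demos, "turn up the volume")
```

With many labels, the prompt grows with the number of labels times the shots per label, which is how a classification dataset with a large label space produces a very long input.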

The long-context capabilities of LLMs have been a hot topic in recent years, and various benchmarks have emerged to evaluate them in different scenarios. LongICLBench targets long in-context learning in extreme-label classification, using six datasets with 28 to 174 classes and input lengths from 2K to 50K tokens. Descriptions of the benchmark report either 13 or 15 evaluated long-context LLMs, but agree on the findings: the models perform well on less challenging classification tasks with smaller label spaces and shorter demonstrations, and struggle as task complexity increases. The evaluation uncovers a distinct drop in accuracy and a bias towards labels presented toward the end of the sequence.
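The two findings mentioned above, an accuracy drop and a bias towards labels near the end of the sequence, can be checked with simple statistics. The sketch below is an illustration only, not the paper's own analysis; `accuracy`, `tail_prediction_rate`, and the `tail_fraction` parameter are hypothetical names.

```python
def accuracy(preds, golds):
    """Fraction of predictions that match the gold labels."""
    if not golds or len(preds) != len(golds):
        raise ValueError("preds and golds must be non-empty and equal in length")
    return sum(p == g for p, g in zip(preds, golds)) / len(golds)


def tail_prediction_rate(preds, label_order, tail_fraction=0.25):
    """Share of predictions naming a label from the end of the prompt.

    label_order: labels in the order their demonstrations appear in the prompt.
    tail_fraction: portion of the prompt, counted from the end, treated as the tail.
    """
    cutoff = int(len(label_order) * (1 - tail_fraction))
    tail_labels = set(label_order[cutoff:])
    return sum(p in tail_labels for p in preds) / len(preds)


label_order = ["a", "b", "c", "d"]  # "d" is the last label shown in the prompt
preds = ["d", "d", "a", "d"]
golds = ["a", "d", "a", "b"]

acc = accuracy(preds, golds)
tail_rate = tail_prediction_rate(preds, label_order)
```

If gold labels are spread evenly over the prompt positions, an unbiased model's tail rate should sit near `tail_fraction`; a much higher value suggests the model favors labels it saw last.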


