Maximum Likelihood Estimation And Bayesian Estimation Coding Ninjas

A course I instructed in spring 2026. Topics: estimation and hypothesis testing; maximum likelihood and method-of-moments estimation; efficiency, unbiasedness, and asymptotic distribution of estimators; the Neyman-Pearson lemma; goodness-of-fit tests; correlation and regression; experimental design and analysis of variance; nonparametric methods. This blog briefly explains and contrasts the two most widely used parameter estimation techniques, maximum likelihood estimation and Bayesian estimation, covering the optimal conditions for both techniques and their key differences.

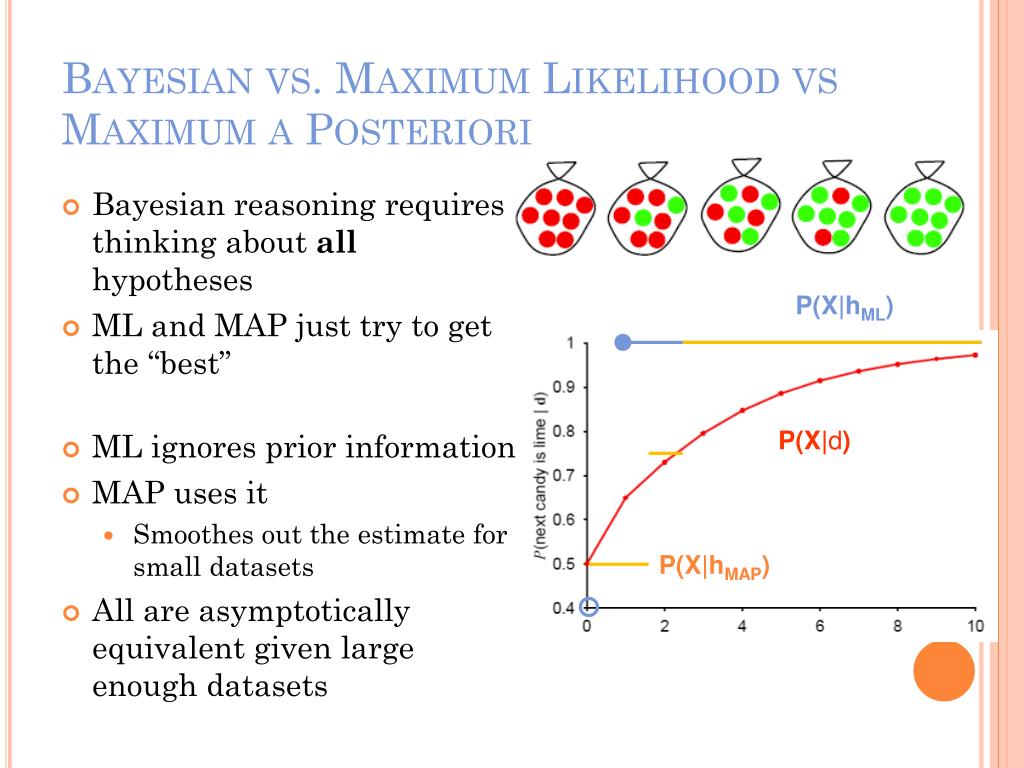

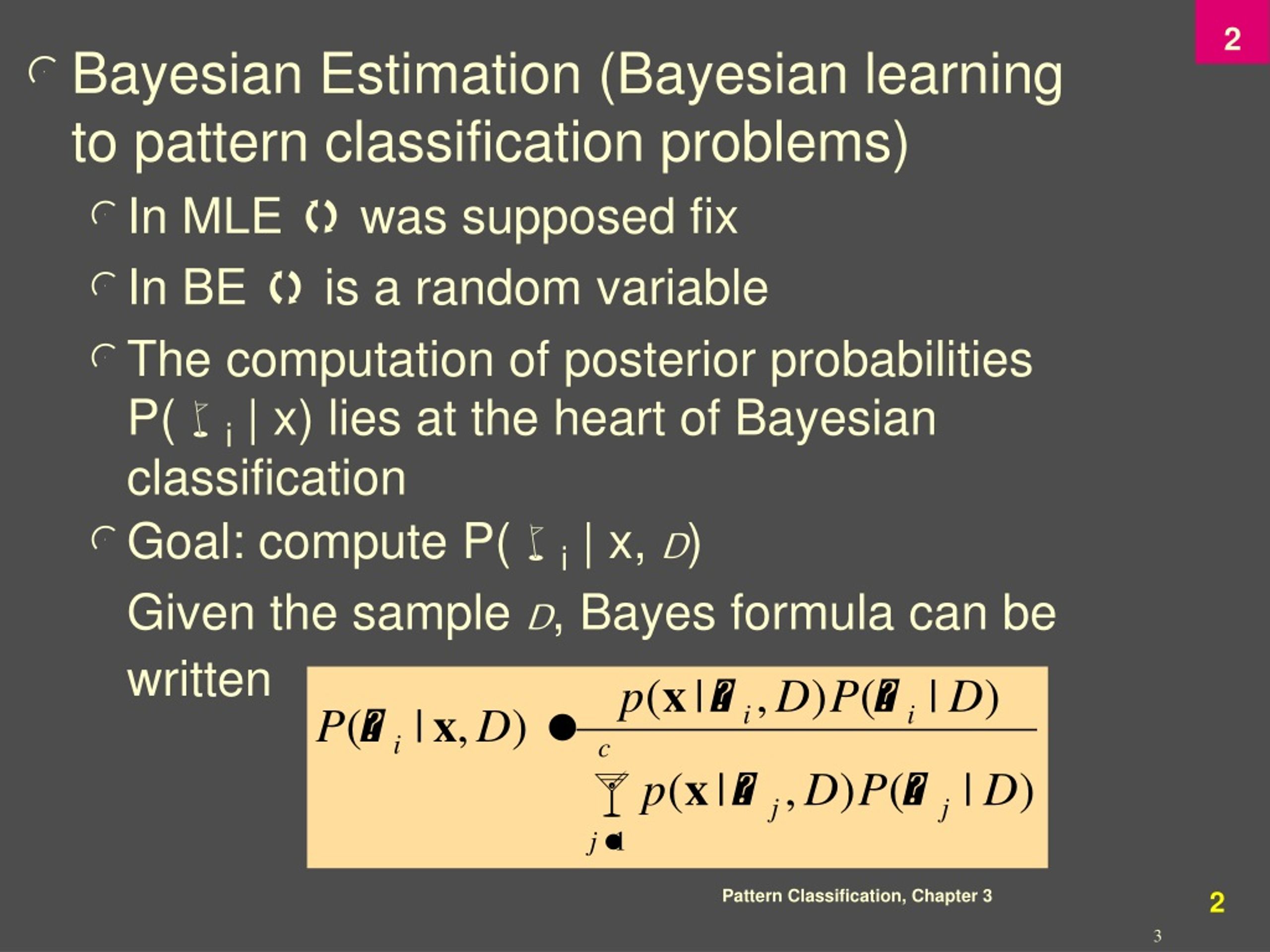

Maximum likelihood estimation (MLE), the frequentist view, and Bayesian estimation, the Bayesian view, are perhaps the two most widely used methods for parameter estimation: the process by which, given some data, we estimate the parameters of the model that produced that data. The parameter estimation story so far: if you are provided with a model and all the necessary probabilities, you can make predictions. But how do we infer the probabilities for a given model? MEGA is an integrated tool for conducting automatic and manual sequence alignment, inferring phylogenetic trees, mining web-based databases, estimating rates of molecular evolution, and testing evolutionary hypotheses. The key difference: MLE gives you a single point estimate, while Bayesian inference gives you an entire posterior distribution over parameter values.
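To make the point-estimate versus posterior-distribution contrast concrete, here is a minimal sketch for a coin-flip (Bernoulli) model. The data (7 heads in 10 flips), the uniform Beta(1, 1) prior, and the function names `bernoulli_mle` and `beta_posterior` are illustrative assumptions, not taken from any of the sources above. It relies on the standard fact that a Beta prior combined with Bernoulli data gives a Beta posterior.

```python
def bernoulli_mle(heads, flips):
    """MLE of the heads probability: the observed fraction of heads."""
    return heads / flips


def beta_posterior(heads, flips, prior_a=1.0, prior_b=1.0):
    """Posterior Beta(a, b) parameters, mean, and variance.

    Assumes a Beta(prior_a, prior_b) prior on the heads probability;
    the default Beta(1, 1) is a uniform prior (an illustrative choice).
    """
    a = prior_a + heads
    b = prior_b + (flips - heads)
    mean = a / (a + b)
    variance = a * b / ((a + b) ** 2 * (a + b + 1))
    return a, b, mean, variance


# Hypothetical data: 7 heads in 10 flips.
heads, flips = 7, 10
mle = bernoulli_mle(heads, flips)
post_a, post_b, post_mean, post_var = beta_posterior(heads, flips)

print(f"MLE point estimate: {mle:.3f}")
print(f"Posterior: Beta({post_a:.0f}, {post_b:.0f}), "
      f"mean {post_mean:.3f}, variance {post_var:.4f}")
```

The MLE returns one number, while the Bayesian result is a full distribution whose spread quantifies the remaining uncertainty. With more data the posterior mean moves toward the MLE and the variance shrinks.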

Classical multinomial logistic regression uses the maximum likelihood method to estimate its parameters, whereas in Bayesian multinomial logistic regression the prior distribution is combined with the likelihood of the data to obtain the posterior distribution. The maximum likelihood method seeks the parameter value that is best supported by the training data, i.e., the value that maximizes the probability of obtaining the samples actually observed. There are different methods to estimate these parameters, such as maximum likelihood estimation (MLE) and Bayesian inference. In this article, we'll break down what parameter estimation is, how it works, and why it matters. This paper discusses a Bayesian implementation of the widely popular targeted maximum likelihood estimation (TMLE) algorithm for uncertainty quantification of the causal effect, using a sample-based probability distribution as opposed to statistical confidence intervals.
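The idea that maximum likelihood picks the parameter value under which the observed samples are most probable can be sketched numerically. The example below assumes an exponential model with unknown rate, whose log-likelihood is n·log(rate) − rate·Σx. The sample values, the search interval, and the helper names `exp_log_likelihood` and `maximize_concave` are hypothetical choices for illustration; ternary search is used only because this log-likelihood is concave in the rate.

```python
import math


def exp_log_likelihood(rate, samples):
    """Log-likelihood of i.i.d. exponential samples at a given rate."""
    return len(samples) * math.log(rate) - rate * sum(samples)


def maximize_concave(f, lo, hi, tol=1e-8):
    """Ternary search for the maximizer of a concave function on [lo, hi]."""
    while hi - lo > tol:
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if f(m1) < f(m2):
            lo = m1  # the maximum lies to the right of m1
        else:
            hi = m2  # the maximum lies to the left of m2
    return (lo + hi) / 2


# Hypothetical observations, e.g. waiting times.
samples = [0.5, 1.2, 0.3, 2.0, 1.0]

# Numerical MLE: search the rate that maximizes the log-likelihood.
rate_numeric = maximize_concave(
    lambda r: exp_log_likelihood(r, samples), 1e-6, 10.0
)

# Closed-form MLE for the exponential rate: n / sum(x).
rate_closed = len(samples) / sum(samples)

print(f"numerical MLE: {rate_numeric:.6f}, closed form: {rate_closed:.6f}")
```

The two estimates should agree closely. For models without a closed-form maximizer, such as the logistic regression mentioned above, the same principle applies but a general-purpose optimizer is typically used instead.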

