Pattern Recognition 6 Bayesian Estimation And Maximum Likelihood

Maximum Likelihood Estimation And Bayesian Estimation (Coding Ninjas)

Bayesian learning of the mean of normal distributions in one and two dimensions. The posterior distribution estimates are labeled by the number of training samples used in the estimation. Using Bayes' formula, we obtain the Bayesian classification rule:

$$\max_j P(\omega_j \mid x, D) \equiv \max_j \, p(x \mid \omega_j, D_j)\, P(\omega_j)$$

3.5 Bayesian parameter estimation: general theory. The computation of $p(x \mid D)$ can be applied to any situation in which the unknown density can be parameterized. The basic assumptions are:

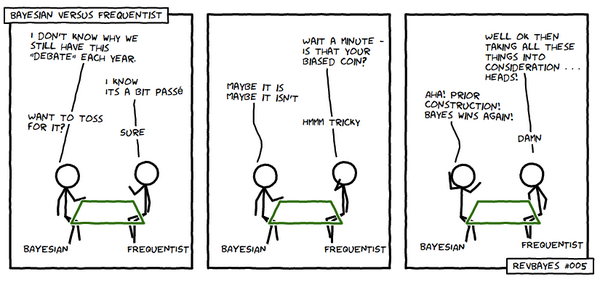
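As a minimal sketch of Bayesian learning of the mean of a one-dimensional normal distribution, the snippet below assumes the variance is known and places a Gaussian prior on the mean. The function name `posterior_of_mean` and the parameter names (`sigma2`, `mu0`, `sigma0_2`) are illustrative choices, not taken from the source. It shows how the posterior over the mean sharpens as more training samples are used.

```python
import random

def posterior_of_mean(samples, sigma2, mu0, sigma0_2):
    """Return (mu_n, sigma_n^2) of the Gaussian posterior over the mean.

    Assumes data ~ N(mu, sigma2) with sigma2 known, and prior mu ~ N(mu0, sigma0_2).
    """
    n = len(samples)
    if n == 0:
        return mu0, sigma0_2  # no data: the posterior is the prior
    xbar = sum(samples) / n
    denom = n * sigma0_2 + sigma2
    # Posterior mean is a weighted average of the sample mean and the prior mean.
    mu_n = (n * sigma0_2 / denom) * xbar + (sigma2 / denom) * mu0
    # Posterior variance shrinks roughly like sigma2 / n.
    sigma_n2 = sigma0_2 * sigma2 / denom
    return mu_n, sigma_n2

random.seed(0)
true_mu, sigma2 = 2.0, 1.0  # hypothetical ground truth for the demo
data = [random.gauss(true_mu, sigma2 ** 0.5) for _ in range(50)]
for n in (1, 5, 10, 50):
    mu_n, s2 = posterior_of_mean(data[:n], sigma2, mu0=0.0, sigma0_2=4.0)
    print(f"n={n:2d}  mu_n={mu_n:.3f}  sigma_n^2={s2:.4f}")
```

With a broad prior the posterior mean tracks the sample mean closely; a narrow prior would pull it toward `mu0` until enough data accumulates.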

Maximum Likelihood Estimation vs. Bayesian Estimation (by Jocelyn Guo)

Designers generally choose the model based on knowledge of the problem domain rather than on the subsequent estimation method, so the model error of the maximum likelihood and Bayes methods rarely differs. Maximum likelihood estimation (MLE), the frequentist view, and Bayesian estimation, the Bayesian view, are perhaps the two most widely used methods for parameter estimation: the process of using observed data to estimate the parameters of the model that produced it. Related topics include linear models for regression; parameter estimation by the maximum likelihood and maximum a posteriori methods; regularization, ridge regression, and lasso; the bias-variance decomposition; and Bayesian linear regression. The three most famous methods for optimal estimation of model parameters in a probabilistic framework are: (1) maximum likelihood (ML); (2) maximum a posteriori (MAP); and (3) Bayesian.
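To make the difference between the three methods concrete, here is a small sketch for a coin-flip (Bernoulli) model with a Beta(a, b) prior. The model, the prior, and the function name `bernoulli_estimates` are assumptions chosen for illustration; the source does not specify an example.

```python
def bernoulli_estimates(heads, n, a=2.0, b=2.0):
    """Estimate P(heads) three ways, assuming a Beta(a, b) prior with a, b > 1."""
    if n <= 0:
        raise ValueError("need at least one observation")
    ml = heads / n                             # maximizes the likelihood
    map_est = (heads + a - 1) / (n + a + b - 2)  # mode of the Beta posterior
    bayes = (heads + a) / (n + a + b)          # posterior mean (predictive probability)
    return ml, map_est, bayes

for heads, n in ((3, 3), (30, 40), (300, 400)):
    ml, map_est, bayes = bernoulli_estimates(heads, n)
    print(f"n={n:3d}  ML={ml:.3f}  MAP={map_est:.3f}  Bayes={bayes:.3f}")
```

With only three flips the ML estimate is an extreme 1.0 while the MAP and Bayesian estimates are pulled toward the prior; as n grows, all three converge.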

Topology Recovered By Bayesian Inference And Maximum Likelihood

This document discusses Bayesian inference, focusing on Bayesian models, maximum likelihood estimation (MLE), and maximum a posteriori (MAP) estimation. It includes examples and practice problems that illustrate these concepts in the context of pattern recognition and deep learning.

4.3.1 Maximum likelihood estimation with respect to observed data. In the context of parameter estimation, the likelihood is naturally viewed as a function of the parameters θ to be estimated, and is defined as in equation (2.29): the joint probability of a set.

Bayesian parameter estimation: general theory. The computation of p(x | D) can be applied to any situation in which the unknown density can be parameterized. p(x | θ) can be easily computed, since both the form and the parameters of the distribution are given (e.g. Gaussian). What remains is to estimate the parameter posterior density, p(θ | D), given the training set.
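The quantities named above can be written out as follows. This is a sketch under the usual assumptions, which the text does not state explicitly: the training set $D = \{x_1, \dots, x_n\}$ consists of independent samples, and $p(\theta)$ denotes a prior density over the parameters.

```latex
\begin{align}
  p(D \mid \theta) &= \prod_{k=1}^{n} p(x_k \mid \theta)
    && \text{(likelihood, viewed as a function of } \theta\text{)} \\
  \hat{\theta}_{\mathrm{ML}} &= \arg\max_{\theta} \, p(D \mid \theta),
  \qquad
  \hat{\theta}_{\mathrm{MAP}} = \arg\max_{\theta} \, p(D \mid \theta)\, p(\theta) \\
  p(\theta \mid D) &= \frac{p(D \mid \theta)\, p(\theta)}{\int p(D \mid \theta)\, p(\theta)\, d\theta}
    && \text{(parameter posterior)} \\
  p(x \mid D) &= \int p(x \mid \theta)\, p(\theta \mid D)\, d\theta
    && \text{(predictive density)}
\end{align}
```

ML and MAP each commit to a single value of $\theta$, whereas the Bayesian approach averages $p(x \mid \theta)$ over the whole posterior.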
