Chapter 3 Maximum-Likelihood and Bayesian Parameter Estimation: Introduction

If p(xk | ωj) (j = 1, 2, …, c) is assumed to be Gaussian in a d-dimensional feature space, then we can estimate the parameter vector θ = (θ1, θ2, …, θc)^t and perform an optimal classification. In ML estimation the parameters are fixed but unknown; the best parameters are obtained by maximizing the probability of obtaining the observed samples. Bayesian methods instead view the parameters as random variables having some known distribution.
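As a minimal sketch of the ML idea above, assuming a one-dimensional Gaussian (d = 1) and made-up sample values, the following estimates the mean and variance and checks that moving either parameter away from the estimate lowers the likelihood of the observed samples. The function names `fit_gaussian_ml` and `log_likelihood` are illustrative and do not come from the source.

```python
import math


def fit_gaussian_ml(samples):
    """Return the ML estimates (mean, variance) of a 1-D Gaussian."""
    n = len(samples)
    mean = sum(samples) / n
    # The ML variance divides by n (not n - 1), so it is slightly biased.
    variance = sum((x - mean) ** 2 for x in samples) / n
    return mean, variance


def log_likelihood(samples, mean, variance):
    """Log-probability of the samples under N(mean, variance)."""
    return sum(
        -0.5 * math.log(2 * math.pi * variance) - (x - mean) ** 2 / (2 * variance)
        for x in samples
    )


# Made-up observations for one class (an assumption for illustration).
samples = [4.2, 5.1, 4.8, 5.6, 5.3]
mu_hat, var_hat = fit_gaussian_ml(samples)

best = log_likelihood(samples, mu_hat, var_hat)
# Nudging either parameter away from the ML estimate lowers the likelihood.
assert best > log_likelihood(samples, mu_hat + 0.1, var_hat)
assert best > log_likelihood(samples, mu_hat, var_hat * 1.2)
```

In a c-class problem the same fit would be repeated once per class, using only the samples labeled with that class.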

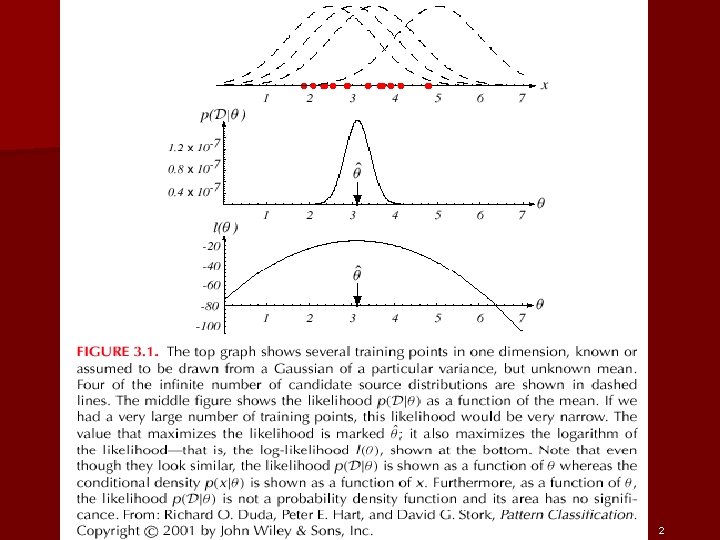

Estimation techniques: maximum-likelihood (ML) estimation and Bayesian estimation give nearly identical results, but the approaches differ. ML estimation treats the parameters as fixed but unknown and finds the values that maximize the probability of the observations. Bayesian estimation treats the parameters as random variables with a known prior distribution, which is sharpened by the observations. In either approach, we use P(ωi | x) for our classification rule. We can also use estimation for other…

The previous chapter assumed that the population parameters were known and showed how the population moments could be calculated. This chapter explores how to estimate the parameter values on the basis of observations on y. This chapter follows Chapter 5 in Hamilton.
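The claim that ML and Bayesian estimates end up nearly identical can be illustrated with a small sketch. It assumes the simplest textbook case, which the text above does not spell out: a one-dimensional Gaussian with known variance `sigma2` and a Gaussian prior N(`mu0`, `sigma0_2`) on the unknown mean. The function names, the prior values, and the synthetic data are all assumptions made for illustration.

```python
import random


def ml_mean(samples):
    """ML estimate of the mean: the sample average."""
    return sum(samples) / len(samples)


def bayes_mean_posterior(samples, sigma2, mu0, sigma0_2):
    """Posterior N(mu_n, sigma_n2) over the mean of a Gaussian.

    Assumes a known observation variance sigma2 and a Gaussian prior
    N(mu0, sigma0_2) on the mean (the standard conjugate case).
    """
    n = len(samples)
    xbar = sum(samples) / n
    denom = n * sigma0_2 + sigma2
    # Weighted blend of the sample mean and the prior mean.
    mu_n = (n * sigma0_2 / denom) * xbar + (sigma2 / denom) * mu0
    # The posterior variance shrinks as more samples arrive.
    sigma_n2 = sigma0_2 * sigma2 / denom
    return mu_n, sigma_n2


# Synthetic data drawn from N(3, 1); the prior is deliberately centered at 0.
rng = random.Random(0)
data = [rng.gauss(3.0, 1.0) for _ in range(1000)]

for n in (1, 10, 1000):
    subset = data[:n]
    mu_n, sigma_n2 = bayes_mean_posterior(subset, sigma2=1.0, mu0=0.0, sigma0_2=1.0)
    print(n, ml_mean(subset), mu_n, sigma_n2)
```

With few samples the prior pulls the Bayesian estimate toward `mu0`; as n grows, the weight on the sample mean approaches 1 and the posterior variance shrinks, which is one way to read "sharpened by the observations".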

Bayesian parameter estimation: to compute the posterior probability P(ωi | x), we need to know the prior probability P(ωi) and the class-conditional density p(x | ωi). One distribution (the description matches the Wishart distribution) is used to model the prior distribution of the precision matrix, that is, the inverse covariance matrix Λ = Σ⁻¹; T is the prior covariance.
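The posterior computation described above can be sketched with Bayes' rule. This is a hedged illustration only: it assumes two classes with one-dimensional Gaussian class-conditional densities, and the priors and (mean, variance) pairs are made-up values rather than anything taken from the source.

```python
import math


def gaussian_pdf(x, mean, variance):
    """Density of N(mean, variance) evaluated at x."""
    return math.exp(-(x - mean) ** 2 / (2 * variance)) / math.sqrt(2 * math.pi * variance)


def posteriors(x, priors, params):
    """Return P(w_i | x) for each class i via Bayes' rule.

    priors: list of P(w_i); params: list of (mean, variance) per class.
    """
    # Numerator of Bayes' rule: prior times class-conditional density.
    joint = [p * gaussian_pdf(x, m, v) for p, (m, v) in zip(priors, params)]
    # The evidence p(x) normalizes the posteriors so they sum to 1.
    evidence = sum(joint)
    return [j / evidence for j in joint]


# Hypothetical two-class problem with equal priors.
priors = [0.5, 0.5]
params = [(0.0, 1.0), (2.0, 1.0)]

post = posteriors(0.4, priors, params)
# Classification rule: pick the class with the largest posterior.
decision = max(range(len(post)), key=post.__getitem__)
print(post, decision)
```

In practice the (mean, variance) pairs would come from an ML or Bayesian estimate on training samples rather than being fixed by hand.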
