Using Runpod: The AI Cloud Platform for Running Full-Stack AI Apps

AI infrastructure with on-demand GPUs and serverless compute: run training, inference, and batch workloads in the cloud with Runpod. By providing both development environments and production infrastructure, Runpod lets teams build and deploy complete AI applications without switching between platforms.
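Batch-style workloads on serverless endpoints are typically submitted asynchronously and then polled until they finish. The sketch below assumes Runpod's serverless REST routes `POST https://api.runpod.ai/v2/{endpoint_id}/run` (which returns a job `id`) and `GET https://api.runpod.ai/v2/{endpoint_id}/status/{job_id}` (which reports a `status` such as `IN_QUEUE`, `IN_PROGRESS`, or `COMPLETED`), authenticated with a bearer API key. The helper names `fetch_json` and `run_and_wait` are hypothetical, and the HTTP call is passed in as a function so the polling loop can be tested offline; verify the routes and status values against the current API reference before relying on them.

```python
"""Submit a Runpod serverless job and poll until it finishes (sketch)."""
import json
import time
import urllib.request

API_BASE = "https://api.runpod.ai/v2"
# Assumed terminal status names; verify against the current Runpod API docs.
TERMINAL = {"COMPLETED", "FAILED", "CANCELLED", "TIMED_OUT"}


def fetch_json(url, api_key, body=None):
    # Hypothetical helper: sends a POST when a body is given, otherwise a GET.
    data = None if body is None else json.dumps(body).encode("utf-8")
    req = urllib.request.Request(
        url,
        data=data,
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


def run_and_wait(endpoint_id, api_key, payload, fetch=fetch_json,
                 poll_interval=2.0, timeout=600.0, sleep=time.sleep):
    # Queue the job; the payload is wrapped under "input".
    job = fetch(f"{API_BASE}/{endpoint_id}/run", api_key, {"input": payload})
    job_id = job["id"]
    deadline = time.monotonic() + timeout
    while True:
        # Ask for the job's current status until it reaches a terminal state.
        status = fetch(f"{API_BASE}/{endpoint_id}/status/{job_id}", api_key)
        if status.get("status") in TERMINAL:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"job {job_id} still {status.get('status')}")
        sleep(poll_interval)
```

Injecting `fetch` and `sleep` keeps the retry logic separate from the network, which makes it easy to swap in a different HTTP client or a shorter polling interval for quick jobs.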

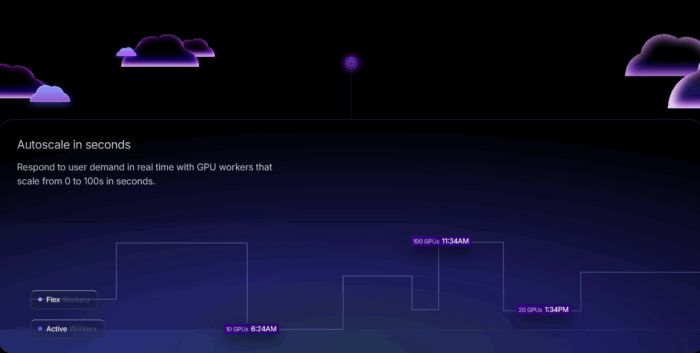

Ease of use and deployment: Runpod simplifies the entire AI lifecycle. Its serverless deployment lets you run AI applications without managing backend servers, so you can focus on your code.

To get started, the beginner's guide covers account setup, GPU selection, pod configuration, and cost management.

In this review, we look closely at Runpod's technology, pricing, features, and overall value for developers, researchers, and enterprises who need scalable, on-demand GPU compute without the overhead of traditional infrastructure management.

Overall, Runpod has been useful for us, especially for serving less mainstream models at a lower cost, but there are still gaps in support and serverless reliability.
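With serverless deployment, the code you ship is essentially a handler function. The sketch below follows the worker pattern of Runpod's Python SDK (the `runpod` package), where `runpod.serverless.start` is given a `handler` that receives a job dict whose `input` key holds the request payload. The `prompt` field, the error payload shape, and the uppercase "inference" are hypothetical placeholders for a real model call.

```python
"""Minimal Runpod serverless worker sketch (handler logic is a placeholder)."""


def handler(job):
    # Each request arrives as a dict; the client's payload sits under "input".
    job_input = job.get("input", {})
    prompt = job_input.get("prompt")  # hypothetical input field for this example

    if not isinstance(prompt, str) or not prompt:
        # Report bad input back to the caller as an error payload.
        return {"error": "input.prompt must be a non-empty string"}

    # Placeholder "inference": replace with a real model call.
    result = prompt.upper()
    return {"output": result, "length": len(result)}


if __name__ == "__main__":
    # Imported here so the handler can be unit-tested without the SDK installed.
    import runpod

    # Start the worker loop; queued jobs are passed to the handler one by one.
    runpod.serverless.start({"handler": handler})
```

Keeping the handler a plain function means you can test it locally with ordinary dicts before building the worker image.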

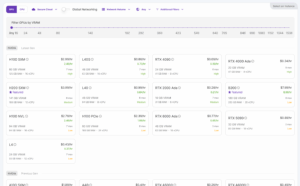

Runpod Hub is a marketplace of prepackaged AI models and applications that can be deployed with one click. The Hub includes popular open-source models such as Stable Diffusion XL, Llama variants, and Whisper, along with various LoRA adapters.

In this article, we look at Runpod as a provider of serverless GPU services and walk step by step through creating an account and launching a GPU instance. I tested Runpod for running Stable Diffusion, ComfyUI, and FLUX online, and this review covers the pros, cons, pricing, and alternatives.

I'll walk through what changed on Runpod this year, what it unlocks for real projects, and a few short recipes you can copy. I'll keep it conversational, because building with GPUs already has enough jargon.
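As one such recipe, here is a minimal sketch of calling a deployed serverless endpoint synchronously using only the Python standard library. It assumes the `POST https://api.runpod.ai/v2/{endpoint_id}/runsync` route, a bearer API key, and a JSON body that wraps the payload under `input`; the `prompt` field and the `ENDPOINT_ID` and `RUNPOD_API_KEY` environment variable names are placeholders you would replace with your own.

```python
"""Build and send a synchronous request to a Runpod serverless endpoint (sketch)."""
import json
import urllib.request

API_BASE = "https://api.runpod.ai/v2"  # assumed serverless endpoint base URL


def build_runsync_request(endpoint_id, api_key, payload):
    # The job payload is wrapped under an "input" key.
    body = json.dumps({"input": payload}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/{endpoint_id}/runsync",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def call_endpoint(endpoint_id, api_key, payload, timeout=120):
    # Send the request and decode the JSON response (requires network access).
    req = build_runsync_request(endpoint_id, api_key, payload)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    import os

    # Placeholder environment variables holding your endpoint ID and API key.
    print(call_endpoint(os.environ["ENDPOINT_ID"], os.environ["RUNPOD_API_KEY"],
                        {"prompt": "a watercolor fox"}))
```

A synchronous call keeps the client simple for short inference requests; for long-running jobs, submitting asynchronously and polling for status is the usual alternative. On the worker side, the payload arrives as the job's `input` field.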


