Runpod: The Cloud Platform for AI Development and Scaling - Creati AI

Runpod offers AI infrastructure with on-demand GPUs and serverless compute, so you can run training, inference, and batch workloads in the cloud. It is a globally distributed GPU cloud computing service designed for developing, training, and scaling AI models. It provides on-demand GPUs, serverless computing options, and a full software management stack for deploying AI applications.

Runpod is a cloud computing platform built for AI, machine learning, and general compute needs. Whether you're training or fine-tuning AI models or deploying cloud-based applications for inference, Runpod provides scalable, high-performance GPU and CPU resources to power your workloads. In a market dominated by trillion-dollar hyperscalers like AWS and Google Cloud, Runpod's developer-first, community-driven approach has earned it a significant position in AI model training and inference.

In this article, we'll explore how modern AI development workflows can benefit from Runpod's cloud tools, break down key features that simplify integration, and help you launch your own containerized projects with GPU support in minutes. Runpod is more than a hosting service: it's an ecosystem designed for modern AI development. From on-demand GPUs to collaborative container environments and scalable inference endpoints, it is aimed at researchers, developers, and production teams.

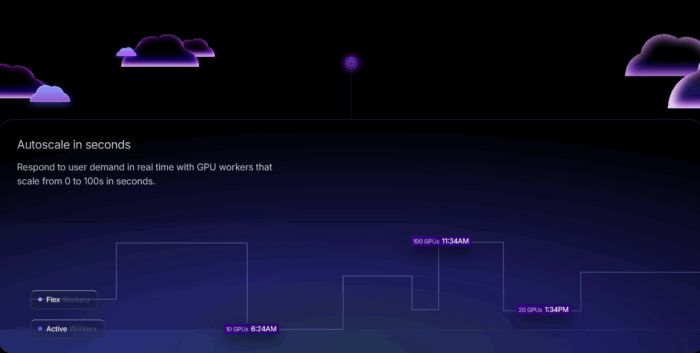

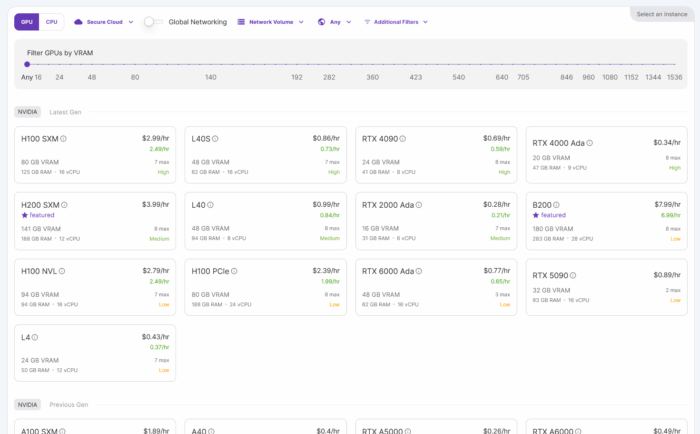

Think of Runpod as your personal, high-powered computer lab in the cloud, engineered specifically for AI. At its core, Runpod lets you create and rent "pods": virtual machines equipped with powerful graphics processing units (GPUs) that excel at the intensive mathematical computations AI requires.

Runpod integrates with webhooks, APIs, and custom event triggers, so AI/ML workloads can run in response to external events. You can set up GPU-powered functions that run automatically on demand and scale dynamically, without managing persistent instances.

In this review, we look at Runpod's technology, pricing, features, and overall value for developers, researchers, and enterprises who need scalable, on-demand GPU compute without the overhead of traditional infrastructure management. Runpod offers cloud GPU instances from RTX 4090s to H100s, with on-demand capacity and per-second billing.
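To make the event-driven idea above more concrete, here is a minimal sketch of the kind of function a serverless endpoint might run when a webhook or API call arrives. It assumes the request reaches the function as a dictionary with the caller's payload under an `input` key; the `prompt` field and the uppercase "inference" step are hypothetical placeholders rather than anything taken from Runpod's documentation. The SDK registration is shown only as a comment so the sketch runs without the SDK installed.

```python
from typing import Any, Dict


def handler(event: Dict[str, Any]) -> Dict[str, Any]:
    """Process one request; event["input"] is assumed to carry the caller's payload."""
    payload = event.get("input")
    if not isinstance(payload, dict):
        payload = {}

    # "prompt" is a hypothetical field name chosen for this illustration.
    prompt = payload.get("prompt")
    if not isinstance(prompt, str) or not prompt.strip():
        # Return a structured error instead of raising, so the caller gets a readable response.
        return {"error": "missing required field: prompt"}

    # Placeholder for the real GPU work (for example, model inference).
    result = prompt.strip().upper()
    return {"output": result}


# With Runpod's Python SDK installed, a handler is typically registered like this:
#   import runpod
#   runpod.serverless.start({"handler": handler})

if __name__ == "__main__":
    # Local smoke test that simulates the event an external trigger would send.
    print(handler({"input": {"prompt": "hello"}}))
```

Keeping the handler a plain function makes it easy to test locally before deploying it behind an endpoint, where the platform rather than your own code decides when and how many copies of it run.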

