The Memory Usage Of TensorRT Algorithm Model Is Different On Different Hardware

I used TensorRT's Python API to load a Swin-Tiny segmentation model on different hardware and found that the amount of host-side memory occupied by the model differed from machine to machine. The memory requirement is computed from the optimized TensorRT graph: the memory usage of a single optimization profile is computed using the maximum tensor shapes, and the memory requirement of the whole engine is the maximum across its profiles.
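
The "maximum shape per profile, maximum across profiles" rule can be illustrated with a short Python sketch. It is a deliberate simplification, not TensorRT's actual memory planner: the hypothetical `profile_bytes` helper sizes every listed tensor at the profile's maximum shape and assumes no buffer reuse, and the tensor names (`input`, `logits`) and the 150-class output are made-up values for illustration only.

```python
from math import prod

# Bytes per element for a few common tensor data types.
DTYPE_BYTES = {"float32": 4, "float16": 2, "int8": 1}


def profile_bytes(max_shapes, dtype="float32"):
    """Naive upper bound for one optimization profile (hypothetical helper).

    Every tensor is sized at the profile's maximum shape and nothing is
    reused; TensorRT's real planner reuses buffers, so actual usage is lower.
    """
    return sum(prod(shape) * DTYPE_BYTES[dtype] for shape in max_shapes.values())


def engine_bytes(profiles, dtype="float32"):
    """Engine requirement: the largest requirement among its profiles."""
    return max(profile_bytes(p, dtype) for p in profiles)


# Two hypothetical profiles for a segmentation network with a dynamic batch size.
profiles = [
    {"input": (1, 3, 512, 512), "logits": (1, 150, 512, 512)},
    {"input": (4, 3, 512, 512), "logits": (4, 150, 512, 512)},
]

print(engine_bytes(profiles))  # 641728512
```

Because the engine is sized for its largest profile, adding a profile with a bigger maximum batch raises the requirement even if inference only ever runs at batch size 1.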

TensorRT Model Memory Usage In nvinfer Vs nvinferserver Plugin

This article explores advanced troubleshooting for TensorRT issues, including precision-mismatch errors, memory bottlenecks, unsupported layer conversions, and deployment inconsistencies across different GPU architectures.

Performance tuning guide: Torch-TensorRT compiles PyTorch models to TensorRT engines, but getting the best performance requires understanding how TensorRT optimization works and measuring correctly. This guide covers why compiled models can appear slow and how to extract the maximum speedup.

This document provides an overview of the primary model optimization techniques available in the NVIDIA TensorRT Model Optimizer. These techniques can be applied individually or combined to achieve optimal model performance for deployment scenarios.

From the trtexec conversion logs, I noticed that running each model with TensorRT inference consumes approximately 1.6 GB of memory, so I assumed that allocating 2 GB per instance would be sufficient.
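
The per-instance budgeting described above is simple arithmetic, sketched below in Python. The `reserved_mib` parameter is a hypothetical allowance for memory that a per-model figure such as the trtexec estimate may not include (for example, per-process overhead like the CUDA context). Its value here is an illustrative assumption, not a measured number. Working in integer MiB avoids floating-point rounding surprises with values like 1.6.

```python
def instances_that_fit(gpu_total_mib: int, per_instance_mib: int, reserved_mib: int = 0) -> int:
    """Number of model instances that fit on one GPU (hypothetical helper).

    reserved_mib is memory set aside for anything the per-instance figure
    does not cover; the amount is an assumption to be replaced by a
    measurement on the target hardware.
    """
    usable = gpu_total_mib - reserved_mib
    if usable <= 0 or per_instance_mib <= 0:
        return 0
    return usable // per_instance_mib


# Hypothetical 16 GiB GPU with a 2 GiB (2048 MiB) allocation per instance.
print(instances_that_fit(16384, 2048))        # 8
print(instances_that_fit(16384, 2048, 1024))  # 7
```

Reserving even a modest amount of memory for overhead can reduce the number of instances that fit, which is one reason a 2 GB allocation based only on the logged 1.6 GB may turn out to be too tight.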

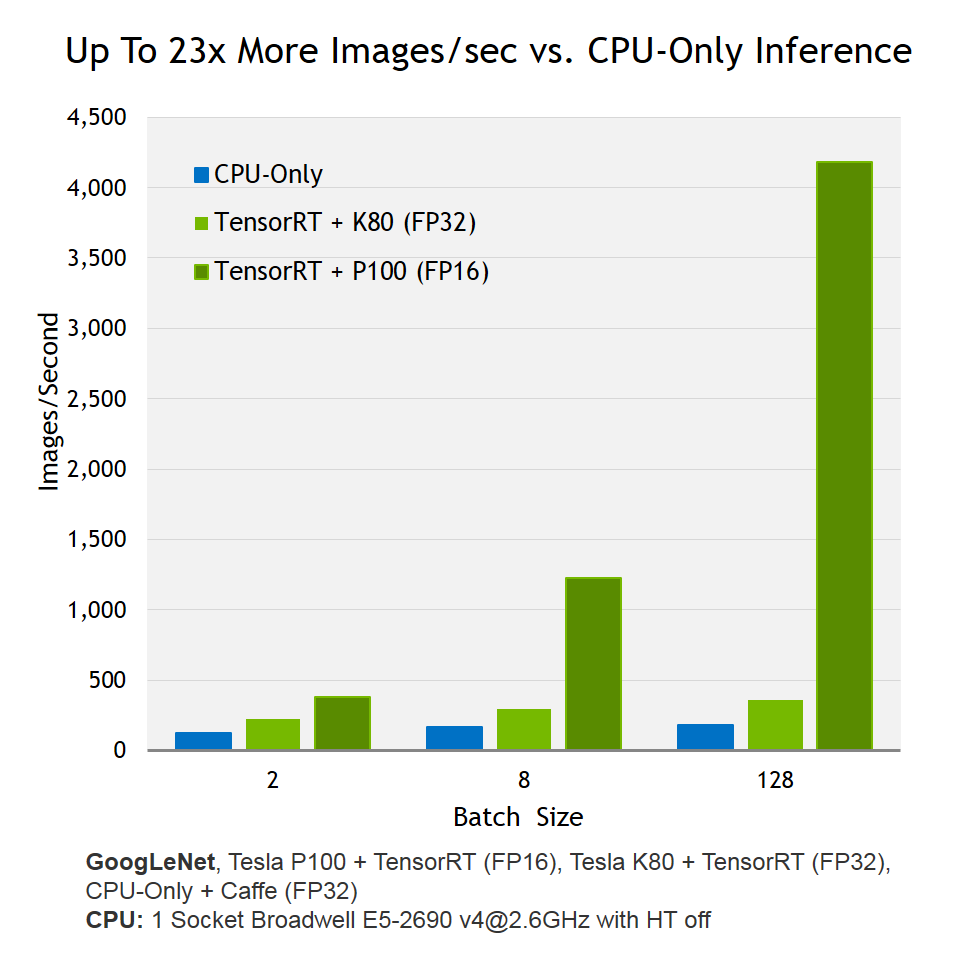

NVIDIA TensorRT | NVIDIA Developer

By understanding TensorRT's optimization techniques, from layer fusion and precision calibration to kernel auto-tuning and memory management, you can use it to achieve substantial performance improvements in your inference workloads. Applying these techniques can significantly improve memory efficiency in TensorRT-optimized deep learning models, leading to faster inference and better resource utilization. TensorRT achieves high performance through a combination of techniques such as kernel auto-tuning, layer fusion, precision calibration, and dynamic tensor memory management.

To achieve optimal performance with TensorRT-LLM, it is essential to understand its build options and runtime configurations. This tutorial explains the key optimization techniques and best practices for tuning the performance of TensorRT-LLM models.
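
The link between precision and memory can be made concrete with a small Python sketch. It only counts storage for the weights at a single uniform precision. Real engines can mix precisions per layer and also need activation and workspace memory. The parameter count is a hypothetical value, not a measurement of the Swin-Tiny segmentation model.

```python
# Bytes per stored weight for common engine precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_bytes(num_params: int, precision: str) -> int:
    """Storage for the weights alone at a uniform precision."""
    return num_params * BYTES_PER_PARAM[precision]


NUM_PARAMS = 50_000_000  # hypothetical parameter count, not a measured value

for precision in ("fp32", "fp16", "int8"):
    mib = weight_bytes(NUM_PARAMS, precision) / 2**20
    print(f"{precision}: {mib:.1f} MiB")
```

Halving the bytes per weight halves this part of the footprint. This is why FP16 or calibrated INT8 engines can be noticeably smaller, even though the total engine memory also depends on activations and workspace.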
