What Is TensorRT

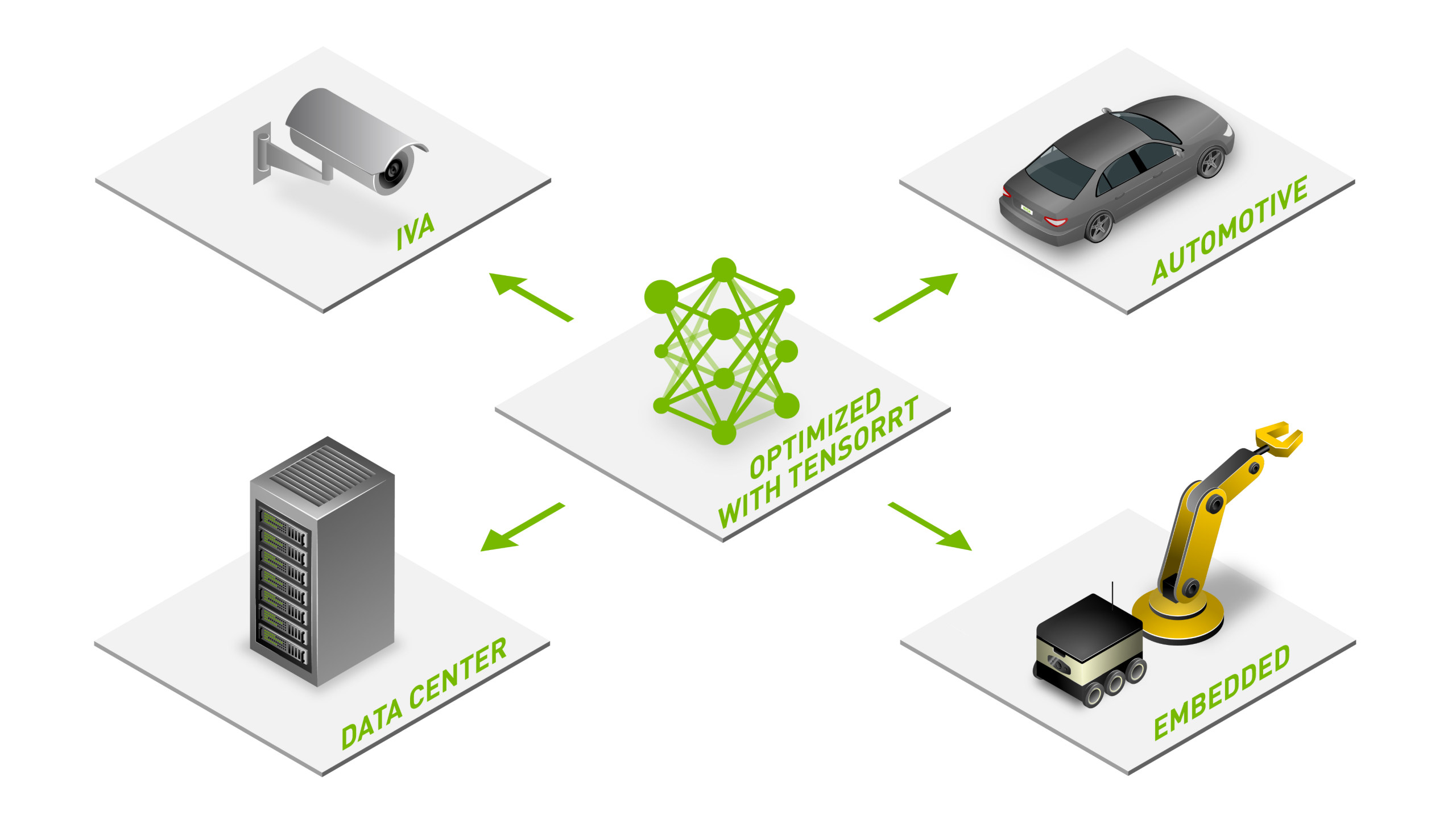

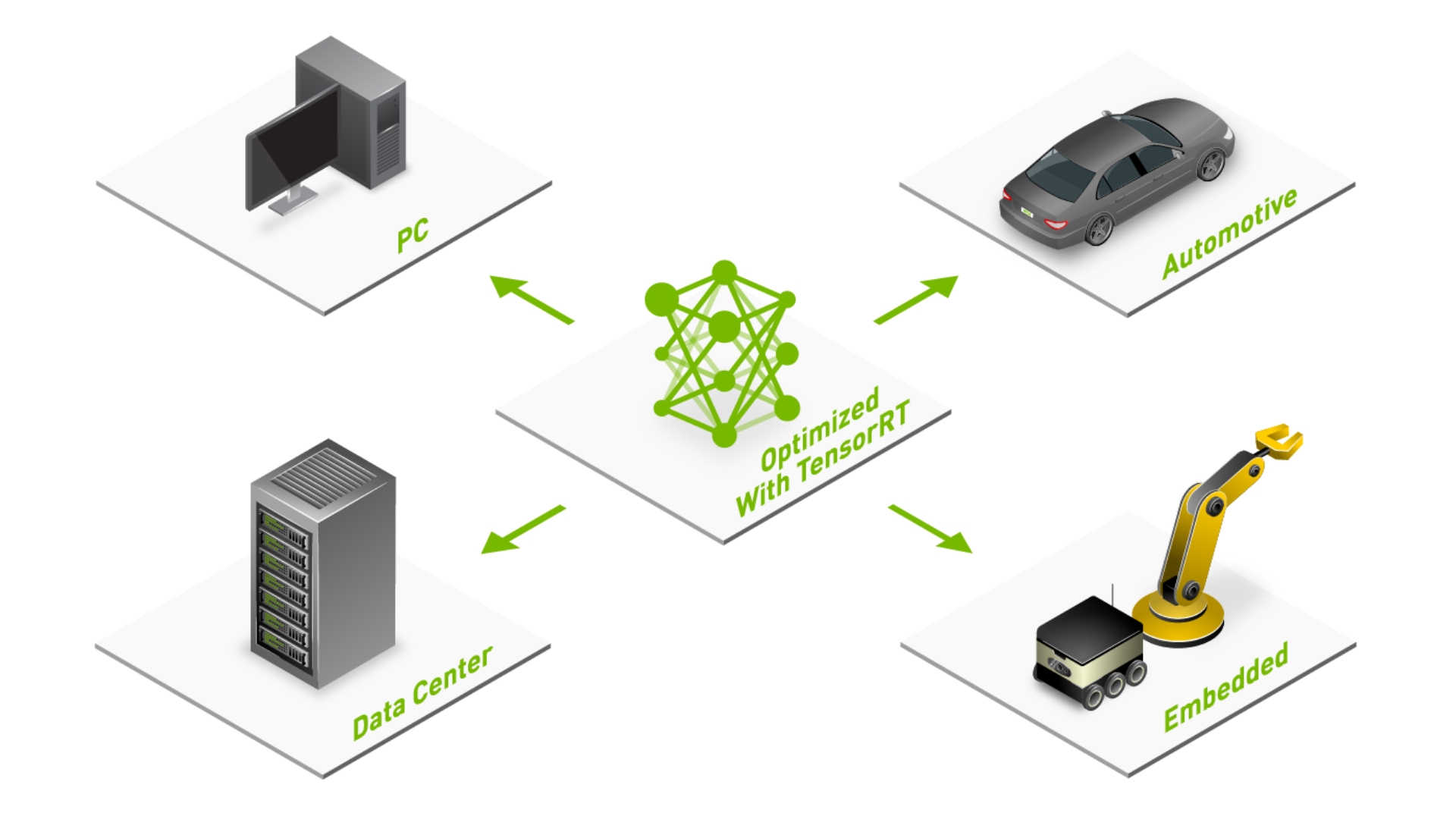

TensorRT is an ecosystem of APIs for building and deploying high-performance deep learning inference. It offers a variety of inference solutions for different developer requirements. At its core, TensorRT is an optimized inference library and toolkit developed by NVIDIA to maximize the performance (speed and efficiency) of deep learning models on NVIDIA GPUs.

NVIDIA TensorRT is a high-performance deep learning inference library and optimizer that plays a crucial role in accelerating AI workloads, particularly in vision tasks. TensorRT 11.0 is coming soon, in Q2 2026, with new capabilities designed to accelerate AI inference workflows. With this major version bump, TensorRT's API will be streamlined and a few legacy features will be removed. TensorRT is an SDK for optimizing trained deep learning models to enable high-performance inference. It contains a deep learning inference optimizer for trained deep learning models and an...
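The optimizer step described above, taking a trained model and producing an engine, can be sketched with the TensorRT Python API. This is a minimal sketch, not an official recipe. It assumes the model has already been exported to ONNX, that the `tensorrt` Python package from the 8.5 to 10.x range is installed, and that the model has static input shapes. The function name `build_engine_from_onnx` and the file paths are hypothetical. Because TensorRT 11.0 is expected to remove some legacy features, some of these calls may change.

```python
from pathlib import Path


def build_engine_from_onnx(onnx_path: str, engine_path: str, use_fp16: bool = True) -> None:
    """Parse an ONNX file and write a serialized TensorRT engine to disk."""
    # Read the model first so a bad path fails before any GPU work starts.
    model_bytes = Path(onnx_path).read_bytes()

    # Imported lazily: tensorrt is only available on machines with NVIDIA's stack.
    import tensorrt as trt

    logger = trt.Logger(trt.Logger.WARNING)
    builder = trt.Builder(logger)

    # Assumption: TensorRT 8.5 to 10.x. The ONNX parser needs an explicit-batch
    # network on 8.x; on 10.x networks are always explicit batch and this flag
    # is deprecated.
    flags = 1 << int(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH)
    network = builder.create_network(flags)

    parser = trt.OnnxParser(network, logger)
    if not parser.parse(model_bytes):
        errors = [str(parser.get_error(i)) for i in range(parser.num_errors)]
        raise RuntimeError("ONNX parse failed:\n" + "\n".join(errors))

    config = builder.create_builder_config()
    # Cap the scratch memory the optimizer may use while timing kernels (1 GiB).
    config.set_memory_pool_limit(trt.MemoryPoolType.WORKSPACE, 1 << 30)
    if use_fp16:
        # Allow reduced-precision kernels where the builder finds them faster.
        config.set_flag(trt.BuilderFlag.FP16)

    serialized = builder.build_serialized_network(network, config)
    if serialized is None:
        raise RuntimeError("TensorRT failed to build an engine")

    with open(engine_path, "wb") as f:
        f.write(serialized)
```

A call such as `build_engine_from_onnx("model.onnx", "model.engine")` would produce an engine file tied to the GPU and TensorRT version it was built with. Models with dynamic input shapes would additionally need an optimization profile, which this sketch leaves out.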

NVIDIA TensorRT is a high-performance deep learning inference optimizer and runtime that maximizes throughput and minimizes latency on NVIDIA GPUs. It optimizes models from frameworks like PyTorch and TensorFlow for production deployment on NVIDIA hardware, and TensorRT engines can be installed, converted, and deployed using various options and tools.

We ran vLLM, TensorRT-LLM, and SGLang on the same H100 GPU with the same model. The comparison covers the throughput, latency, and VRAM numbers you actually need to pick an engine. Note: throughput numbers for vLLM, SGLang, and TensorRT-LLM are total output tokens/sec under concurrent load on a single H100 80GB. Ollama's metric is requests/sec because it processes requests sequentially.
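The two throughput metrics in the note above measure different things, which is why they should not be compared directly. The sketch below shows one plausible way to compute each from per-request timing records. The `CompletedRequest` record and both function names are hypothetical, and the sample values are made up for illustration; they are not benchmark results.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class CompletedRequest:
    start_s: float      # wall-clock time the request was sent
    end_s: float        # wall-clock time the last token arrived
    output_tokens: int  # number of tokens generated for this request


def _window_seconds(requests: Sequence[CompletedRequest]) -> float:
    """Wall-clock span from the first request sent to the last one finished."""
    window = max(r.end_s for r in requests) - min(r.start_s for r in requests)
    if window <= 0:
        raise ValueError("requests must span a positive amount of time")
    return window


def output_tokens_per_second(requests: Sequence[CompletedRequest]) -> float:
    """Total output tokens divided by the shared wall-clock window."""
    return sum(r.output_tokens for r in requests) / _window_seconds(requests)


def requests_per_second(requests: Sequence[CompletedRequest]) -> float:
    """Completed requests divided by the wall-clock window."""
    return len(requests) / _window_seconds(requests)


# Three overlapping requests, as a concurrent-load engine would serve them.
concurrent = [
    CompletedRequest(0.0, 2.0, 100),
    CompletedRequest(0.0, 2.0, 100),
    CompletedRequest(0.0, 4.0, 200),
]

# Three back-to-back requests, as a sequential server would serve them.
sequential = [
    CompletedRequest(0.0, 1.0, 100),
    CompletedRequest(1.0, 2.0, 100),
    CompletedRequest(2.0, 4.0, 200),
]

concurrent_tps = output_tokens_per_second(concurrent)
sequential_rps = requests_per_second(sequential)
```

Under concurrent load the token counts of overlapping requests add up within the same window, so tokens/sec rewards batching. Requests/sec ignores response length entirely, so the two figures only make sense within their own column of a comparison.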

