Text-to-Image Diffusion Models, Part II

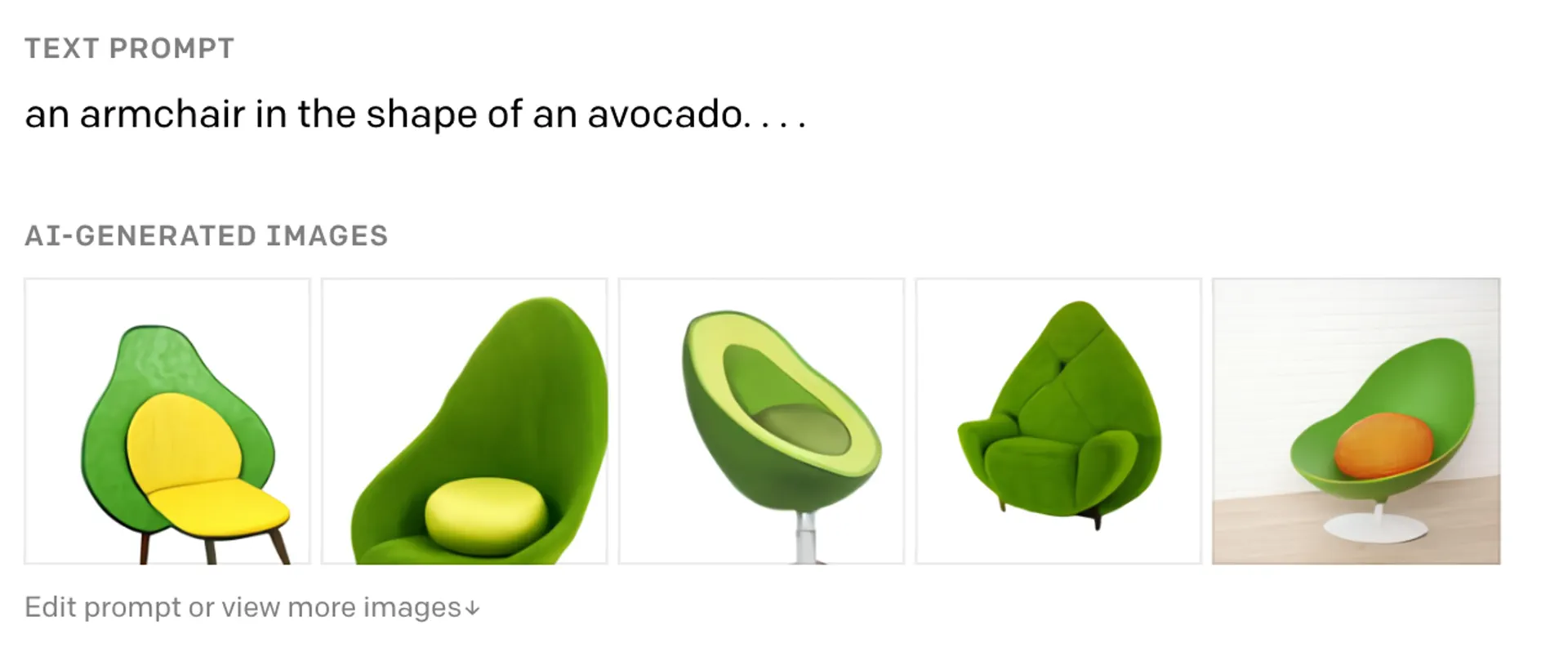

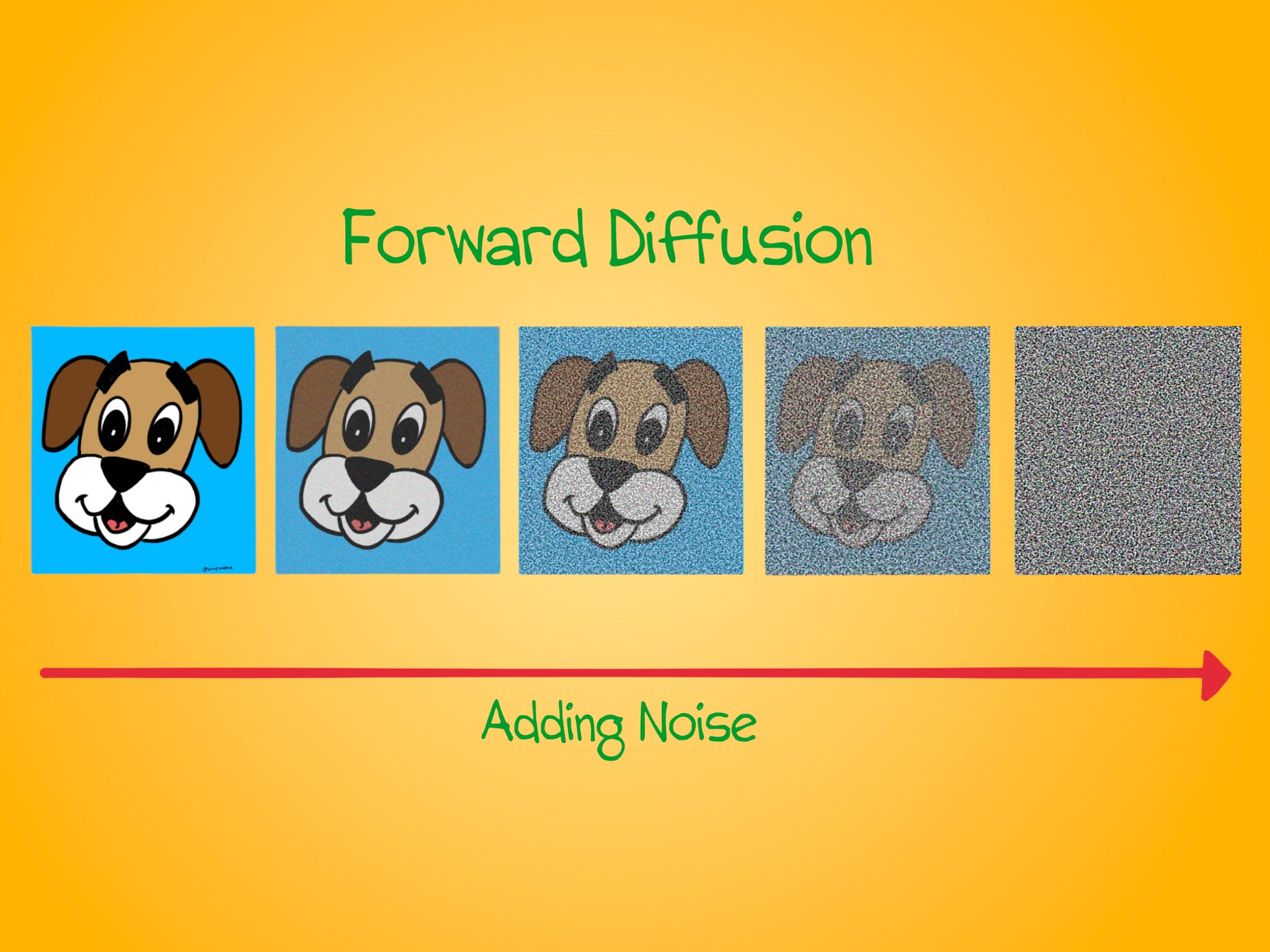

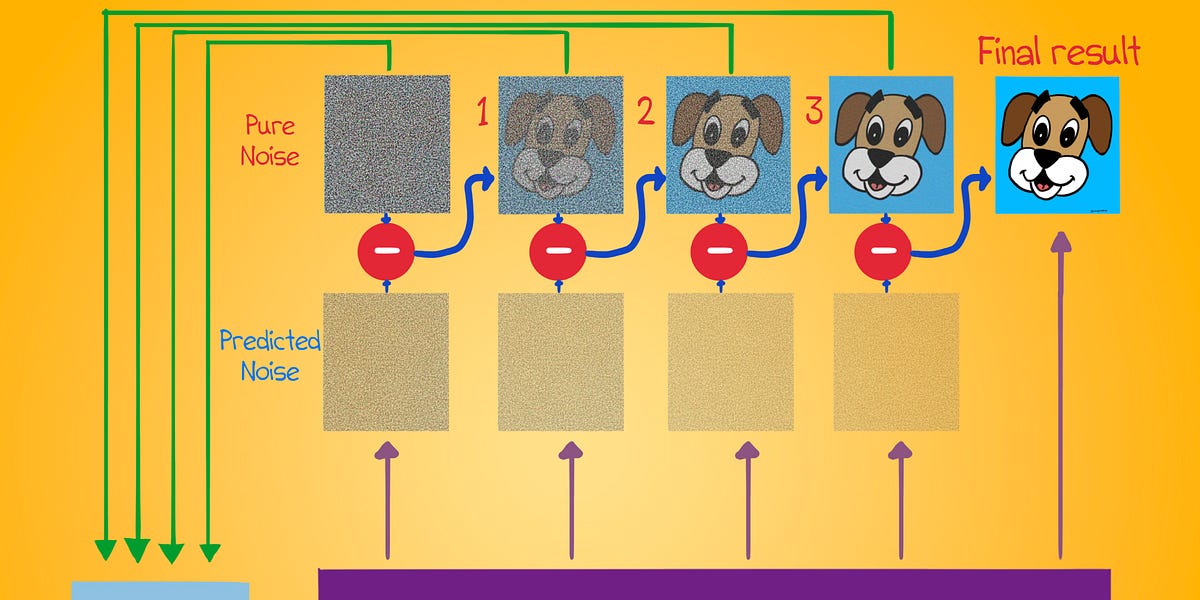

The authors propose a novel method to control image generation using diffusion models. Without any extra models or training, they show how their approach can move, resize, and replace objects with items from real images. As a self-contained work, this survey starts with a brief introduction to how diffusion models work for image synthesis, followed by background on text-conditioned image synthesis.

I focus on two ways of generating images from text prompts: vision transformers (ViT) and diffusion models. In the first method, the image is divided into multiple patches, and each patch is treated as an element in a sequence.

This paper surveys the field of personalizable text-to-image (T2I) diffusion models, covering both existing advancements and directions for future work. We begin with an overview of the theoretical basis of diffusion models and of methods for conditioning image generation on novel concepts.

Part 1 of this series, "Text to image in 5 minutes", covers Parti, DALL·E, and how image diffusion works. In this article, we explore diffusion models for image and art generation, covering models such as DALL·E 2, Imagen, Stable Diffusion, and Midjourney.
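
The patch-splitting step described above can be sketched with NumPy. This is a minimal sketch, assuming a 224×224 RGB input and 16×16 patches (the configuration of the original ViT-Base/16; any image size divisible by the patch size works). The function name `patchify` is hypothetical, and the random `W_embed` matrix only stands in for the learned linear patch embedding a real ViT would use (a real model also adds position embeddings).

```python
import numpy as np

def patchify(image: np.ndarray, patch_size: int) -> np.ndarray:
    """Split an (H, W, C) image into a row-major sequence of flattened patches.

    Returns an array of shape (num_patches, patch_size * patch_size * C).
    """
    h, w, c = image.shape
    if h % patch_size or w % patch_size:
        raise ValueError("height and width must be divisible by patch_size")
    gh, gw = h // patch_size, w // patch_size
    # (H, W, C) -> (gh, p, gw, p, C) -> (gh, gw, p, p, C) -> (gh*gw, p*p*C)
    patches = image.reshape(gh, patch_size, gw, patch_size, c)
    patches = patches.transpose(0, 2, 1, 3, 4)
    return patches.reshape(gh * gw, patch_size * patch_size * c)

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))
seq = patchify(image, patch_size=16)
print(seq.shape)  # (196, 768): a 14x14 grid of patches, each 16*16*3 values

# Hypothetical stand-in for the learned linear patch embedding (random weights).
W_embed = rng.standard_normal((seq.shape[1], 768)) * 0.02
tokens = seq @ W_embed
print(tokens.shape)  # (196, 768): one token per patch, ready for a transformer
```

Each row of `seq` is one "element in the sequence"; the transformer never sees the 2-D grid directly, which is why position embeddings are needed to recover spatial layout.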

We propose a pluggable interaction control model, called InteractDiffusion, that extends existing pre-trained T2I diffusion models so that they can be better conditioned on interactions. Specifically, we tokenize the human-object interaction (HOI) information and learn the relationships among these tokens via interaction embeddings.

DALL·E "1" was introduced by OpenAI in 2021: a transformer that generates image tokens directly from both text and image tokens (more or less). DALL·E "2", released in 2022, is more sophisticated and better in both quality and diversity.

The inverse problem, generating an image from a text caption, is also a common challenge in the computer vision community. Last month, OpenAI released its work "DALL·E 2", which can create original, realistic images and art from a text description.

Many text-to-image models, Stable Diffusion among them, are latent diffusion models: they perform the diffusion process in a compressed latent space rather than directly in pixel space. An autoencoder (often a variational autoencoder, or VAE) converts between pixel space and this latent representation.
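
To make the latent-space idea concrete, the sketch below encodes an image into a smaller latent and then applies the standard DDPM forward-noising step there instead of in pixel space. It is a toy under stated assumptions, not a real model: `toy_encode` is a hypothetical stand-in for the trained VAE encoder (average pooling plus a fixed random channel mix), and the linear β schedule (1e-4 to 0.02 over 1000 steps) is the one from the original DDPM paper, not necessarily what any particular text-to-image model uses. The 8× downsampling to a 4-channel latent mirrors Stable Diffusion v1, which maps 512×512 RGB images to 64×64×4 latents.

```python
import numpy as np

rng = np.random.default_rng(0)

def toy_encode(image: np.ndarray, factor: int = 8, latent_channels: int = 4) -> np.ndarray:
    """Hypothetical stand-in for a VAE encoder: average-pool by `factor`
    and mix RGB into `latent_channels` with a fixed random matrix.
    A real latent diffusion model uses a trained (variational) autoencoder."""
    h, w, c = image.shape
    pooled = image.reshape(h // factor, factor, w // factor, factor, c).mean(axis=(1, 3))
    mix = np.random.default_rng(42).standard_normal((c, latent_channels))
    return pooled @ mix  # (h/factor, w/factor, latent_channels)

# Linear beta schedule from the DDPM paper (1e-4 to 0.02 over 1000 steps).
T = 1000
betas = np.linspace(1e-4, 0.02, T)
alpha_bars = np.cumprod(1.0 - betas)

def q_sample(z0: np.ndarray, t: int, noise: np.ndarray) -> np.ndarray:
    """Forward diffusion in latent space: z_t = sqrt(a_bar_t) z0 + sqrt(1 - a_bar_t) eps."""
    return np.sqrt(alpha_bars[t]) * z0 + np.sqrt(1.0 - alpha_bars[t]) * noise

image = rng.random((512, 512, 3))
z0 = toy_encode(image)                 # (64, 64, 4) latent instead of (512, 512, 3) pixels
noise = rng.standard_normal(z0.shape)
z_t = q_sample(z0, t=500, noise=noise)  # noisy latent at step 500
print(image.size, "->", z0.size)        # 786432 -> 16384
```

In a full latent diffusion model, a text-conditioned denoiser (typically a U-Net) is trained to predict `noise` from `z_t`, `t`, and the prompt embedding; after sampling, the VAE decoder maps the final latent back to pixels. Working on 48× fewer values per image is what makes latent diffusion cheaper than pixel-space diffusion.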



