Contextualized Diffusion Models For Text Guided Image And Video

We generalize our contextualized diffusion to both DDPMs and DDIMs with theoretical derivations, and demonstrate the effectiveness of our model on two challenging tasks: text-to-image generation and text-to-video editing.

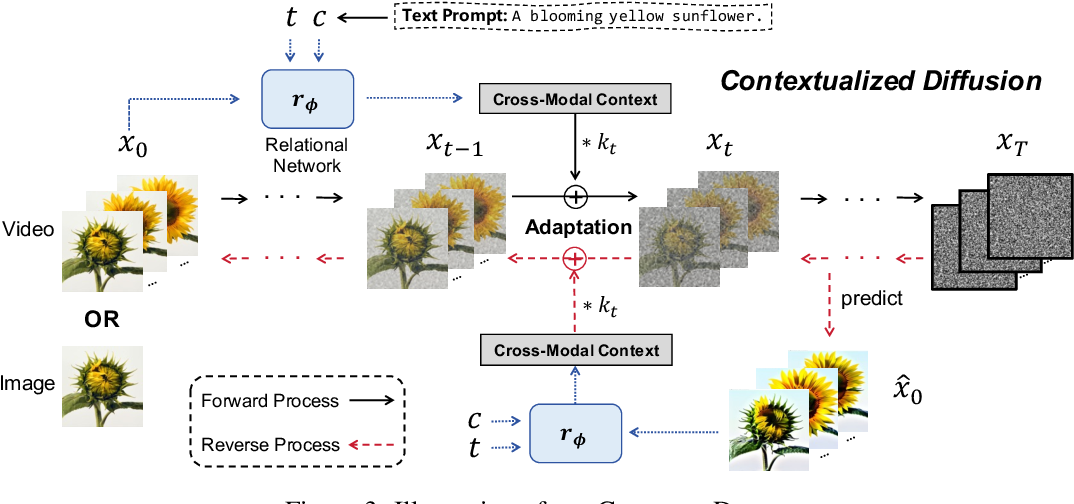

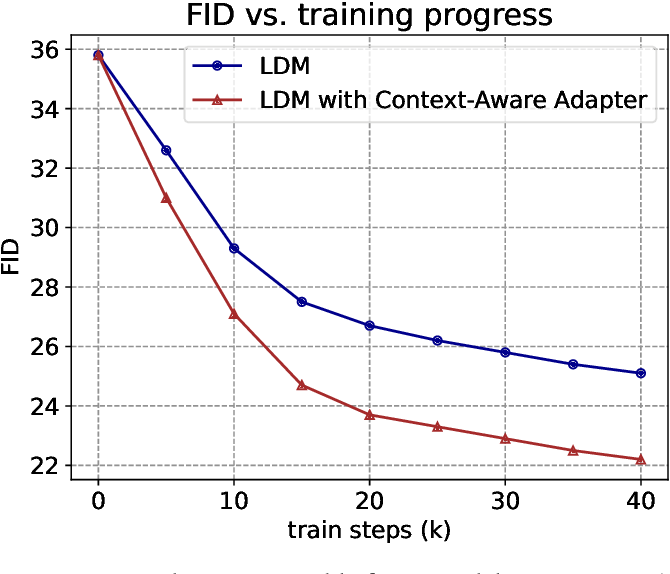

Figure 1 from the paper. We propose ContextDiff, a novel and general cross-modal contextualized diffusion model. It incorporates the cross-modal context, which encompasses the interactions and alignments between the text condition and the visual sample, into both the forward and reverse processes. This facilitates cross-modal conditional modeling and improves the learning capacity of cross-modal diffusion models for tasks such as text-to-image generation and text-guided video editing.
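To make the idea of a context-aware forward process concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the paper's implementation: the linear beta schedule, the `context_shift` function (a hypothetical stand-in for a learned cross-modal adapter), the scaling factor `k_t`, and all parameter names are assumptions introduced for this example.

```python
import math
import random


def alpha_bar_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule (assumed)."""
    alpha_bars = []
    running = 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / max(num_steps - 1, 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def context_shift(x0, context, weight):
    """Hypothetical stand-in for a learned cross-modal adapter.

    A real model would use a trained network over text and visual features;
    here the shift simply pulls the sample toward the context vector.
    """
    return [weight * (c - x) for x, c in zip(x0, context)]


def forward_sample(x0, context, t, alpha_bars, shift_weight=0.1, rng=random):
    """Draw x_t ~ N(sqrt(a_bar_t) * x0 + k_t * shift, (1 - a_bar_t) * I)."""
    a_bar = alpha_bars[t]
    # Assumed scaling: vanishes when a_bar = 1 (no noise) and when a_bar = 0 (pure noise).
    k_t = math.sqrt(a_bar) * (1.0 - math.sqrt(a_bar))
    shift = context_shift(x0, context, shift_weight)
    std = math.sqrt(1.0 - a_bar)
    return [
        math.sqrt(a_bar) * x + k_t * s + std * rng.gauss(0.0, 1.0)
        for x, s in zip(x0, shift)
    ]


if __name__ == "__main__":
    alpha_bars = alpha_bar_schedule(1000)
    x0 = [0.5, -0.2, 0.1]        # toy "visual sample"
    context = [1.0, 0.0, -1.0]   # toy "text condition" embedding
    print(forward_sample(x0, context, t=500, alpha_bars=alpha_bars))
```

Setting `shift_weight=0.0` removes the context term, and the function reduces to the standard DDPM forward noising step, which makes the contextual shift easy to compare against a baseline.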

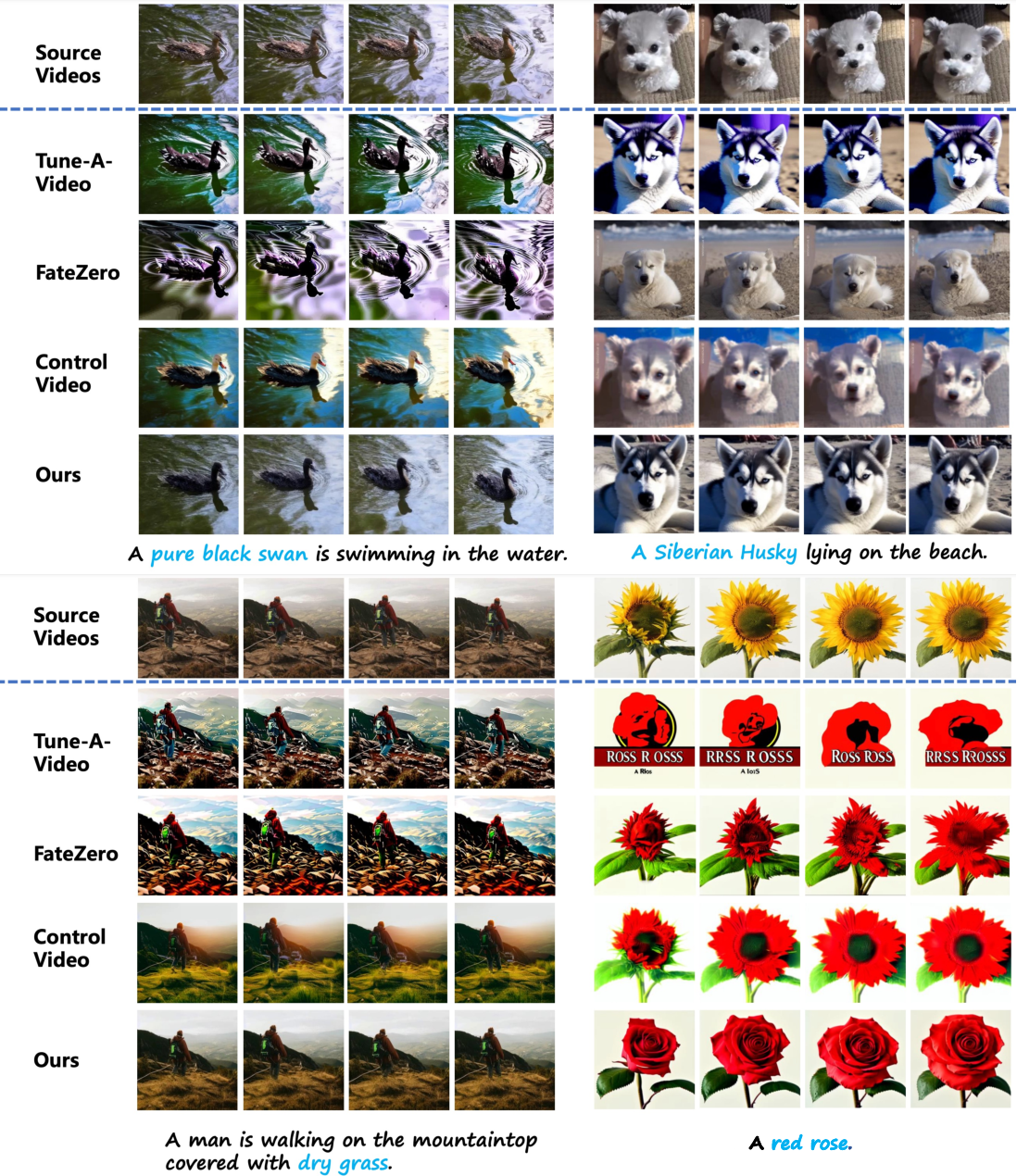

A related approach, LLM contextual guided diffusion (LCGD), integrates large language models (LLMs) into the noising and denoising phases to enhance semantic understanding, noise modulation, and feature selection. Text-guided generative diffusion models unlock powerful image creation and editing tools. Recent approaches that edit the content of footage while retaining its structure either require expensive retraining for every input or rely on error-prone propagation of image edits across frames.
