Cross-Modal Contextualized Diffusion Models for Text-Guided Visual Generation and Editing

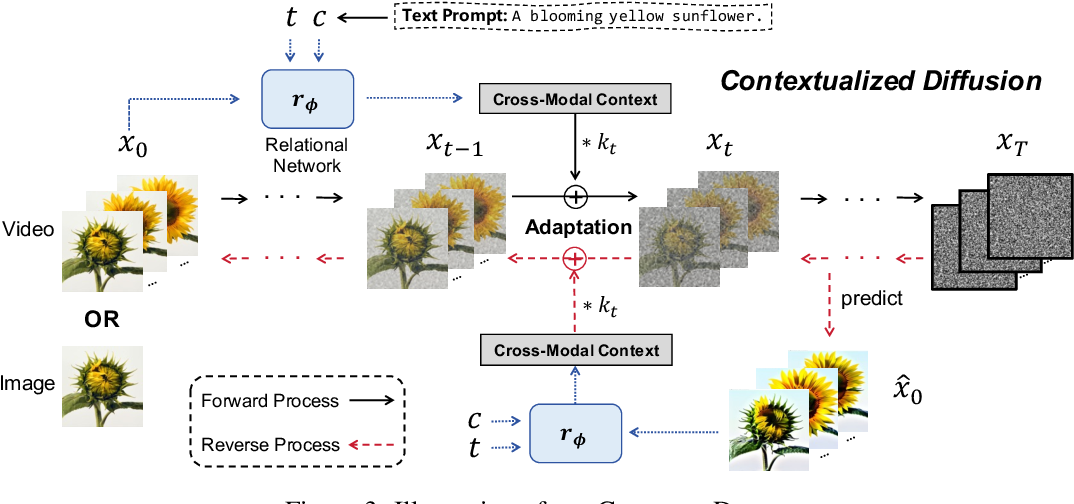

The paper "Cross-Modal Contextualized Diffusion Models for Text-Guided Visual Generation and Editing" is by Ling Yang and 6 other authors. The authors propose a novel and general contextualized diffusion model (ContextDiff) that incorporates cross-modal context, encompassing the interactions and alignments between the text condition and the visual sample, into both the forward and reverse processes.
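To make the idea of putting text-sample context into the forward process more concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the linear beta schedule, the `toy_context_shift` function (a hypothetical stand-in for a learned cross-modal network), and the `shift_scale` parameter are all assumptions chosen for illustration. The only point it shows is that the mean of the noised sample can depend on the text condition as well as on the clean sample.

```python
import math
import random


def alpha_bar(t, num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_s) for s = 1..t, linear schedule (assumed)."""
    prod = 1.0
    for s in range(1, t + 1):
        beta = beta_start + (beta_end - beta_start) * (s - 1) / (num_steps - 1)
        prod *= 1.0 - beta
    return prod


def toy_context_shift(x0, text_emb):
    """Hypothetical stand-in for a learned cross-modal context term.

    Scales the text embedding by its dot-product similarity with the sample.
    """
    sim = sum(a * b for a, b in zip(x0, text_emb))
    return [sim * c for c in text_emb]


def forward_sample(x0, text_emb, t, num_steps, shift_scale, rng):
    """Draw x_t with a context-dependent mean shift (illustrative only).

    With shift_scale = 0 this reduces to the standard DDPM forward step:
    x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * noise.
    """
    a_bar = alpha_bar(t, num_steps)
    shift = toy_context_shift(x0, text_emb)
    mean = [math.sqrt(a_bar) * x + shift_scale * s for x, s in zip(x0, shift)]
    std = math.sqrt(1.0 - a_bar)
    return [m + std * rng.gauss(0.0, 1.0) for m in mean]


if __name__ == "__main__":
    rng = random.Random(0)
    x0 = [1.0, 0.0]          # toy "visual sample"
    text_emb = [0.5, 0.5]    # toy "text condition"
    print(forward_sample(x0, text_emb, t=5, num_steps=10, shift_scale=0.1, rng=rng))
```

Setting `shift_scale` to zero recovers an ordinary text-agnostic forward step, which is a convenient sanity check when experimenting with a context term like this.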

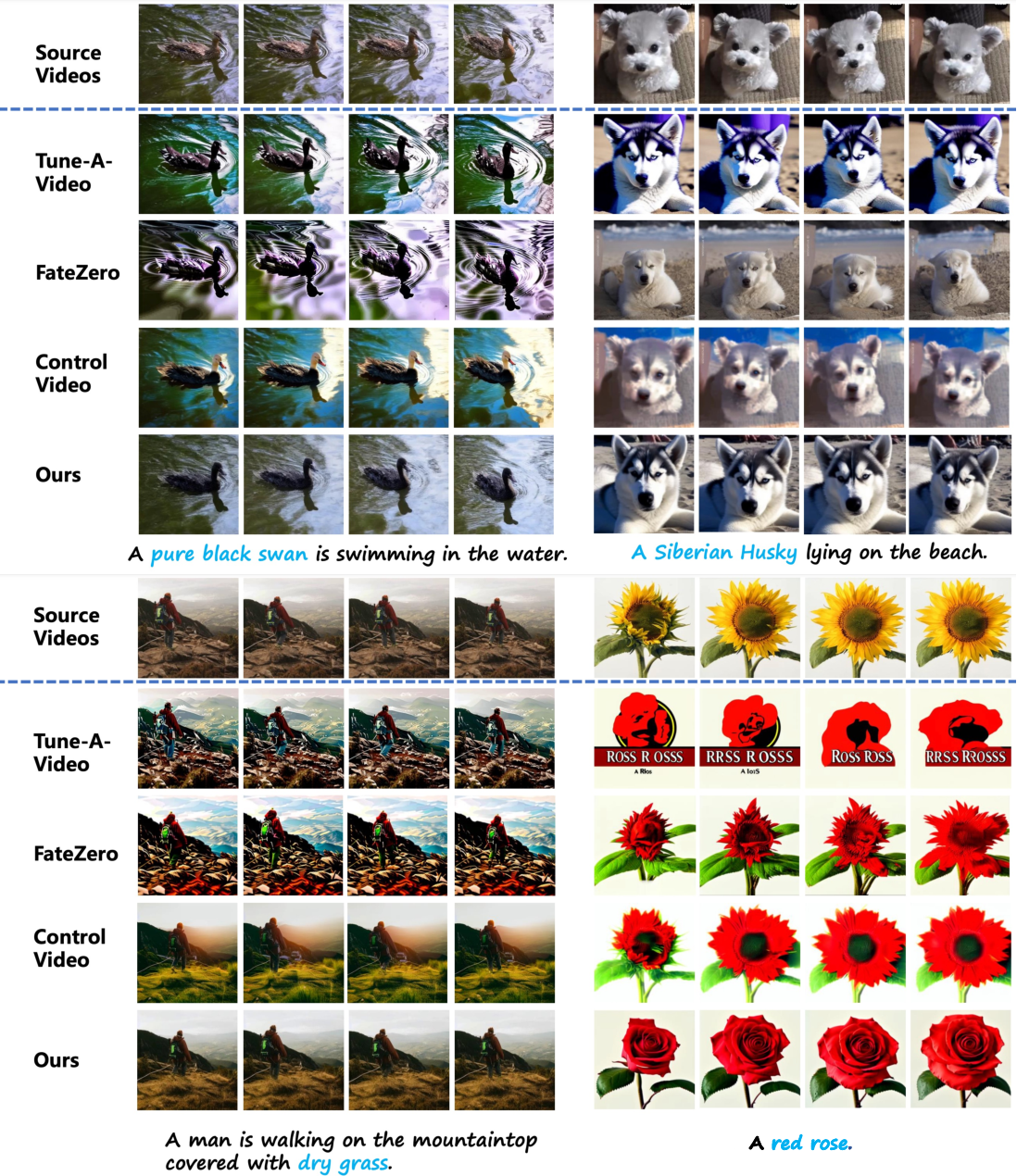

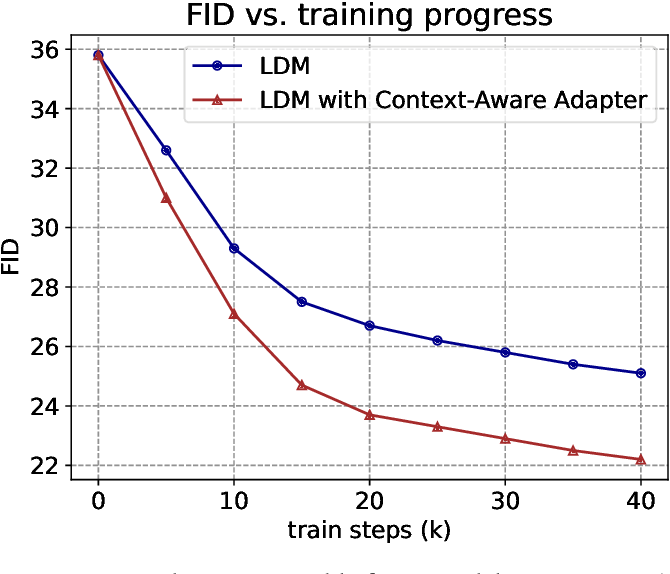

The authors generalize contextualized diffusion to both DDPMs and DDIMs with theoretical derivations, and demonstrate the effectiveness of the model in evaluations on two challenging tasks: text-to-image generation and text-guided video editing. ContextDiff harnesses cross-modal context to facilitate the learning capacity of cross-modal diffusion models. A related work, Imagen, is a text-to-image diffusion model with an unprecedented degree of photorealism and a deep level of language understanding; human raters prefer Imagen over other models in side-by-side comparisons, both in terms of sample quality and image-text alignment.

Conditional diffusion models have exhibited superior performance in high-fidelity text-guided visual generation and editing. Nevertheless, prevailin… Our work demonstrates that strategically reweighting cross-modal interactions leads to more semantically accurate and visually coherent image generation, offering a promising approach for diffusion model research and applications.
