Stage Level Scheduling On Waitingforcode Articles About Apache Spark

Stage level scheduling is a relatively new feature in Apache Spark, and it still has some room for improvement, especially in its support for the Dataset API. Despite that, it's an interesting evolution that adds some controlled flexibility to jobs. The idea of writing this blog post came to me when I was analyzing the Kubernetes changes in Apache Spark 3.1.1. Starting from this version, we can use stage level scheduling on Kubernetes; until then it was available only for YARN.

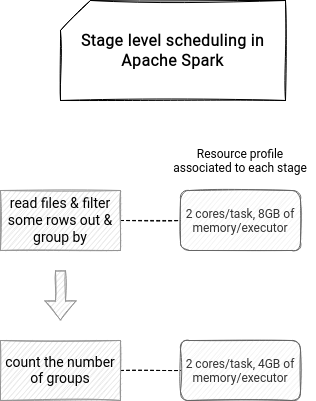

Performance And Cost Efficient Spark Job Scheduling Based On Deep

By the end of these articles, you will be able to effectively leverage declarative programming in your workflows and gain a deeper understanding of what happens under the hood when you do. Stage level scheduling allows users to specify task and executor resource requirements at the stage level. Spark's scheduler uses those requirements to acquire the necessary resources and executors and to schedule tasks based on the per-stage requirements. This paper first provides a brief introduction to the basics of Spark, introduces common Spark scheduling efficiency evaluation metrics, compares several common Spark scheduling strategies, and analyzes how scheduling affects program execution efficiency.
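To make the per-stage requirements more concrete, here is a minimal sketch in plain Python. It is a toy model, not Spark's API: `StageProfile`, `task_slots`, and `executors_needed` are hypothetical names invented for illustration, and the resource amounts are made up. It only shows the arithmetic behind the idea: the scarcest resource decides how many tasks one executor can run at once, and that in turn decides how many executors a stage needs.

```python
from dataclasses import dataclass


@dataclass
class StageProfile:
    """Hypothetical stand-in for a per-stage resource profile (not a Spark class)."""
    name: str
    executor: dict  # resources one executor provides, e.g. {"cores": 4}
    task: dict      # resources one task requires, e.g. {"cores": 1}


def task_slots(profile):
    """Tasks that can run concurrently on one executor.

    Each requested resource allows executor_amount // task_amount tasks;
    the smallest of those values is the limit.
    """
    return min(profile.executor.get(res, 0) // need
               for res, need in profile.task.items())


def executors_needed(profile, num_tasks):
    """Executors required to run every task of the stage in a single wave."""
    slots = task_slots(profile)
    if slots == 0:
        raise ValueError(f"profile {profile.name!r} cannot fit a single task")
    return -(-num_tasks // slots)  # ceiling division


# Assumed example values: a CPU-only ETL stage and a GPU-bound training stage.
etl = StageProfile("etl", {"cores": 4}, {"cores": 1})
train = StageProfile("train", {"cores": 8, "gpu": 2}, {"cores": 2, "gpu": 1})

for profile, num_tasks in [(etl, 100), (train, 10)]:
    print(profile.name, task_slots(profile), executors_needed(profile, num_tasks))
```

In this model the `train` stage is limited by its GPUs rather than its cores, which is the kind of difference between stages that a single application-wide executor configuration cannot express.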

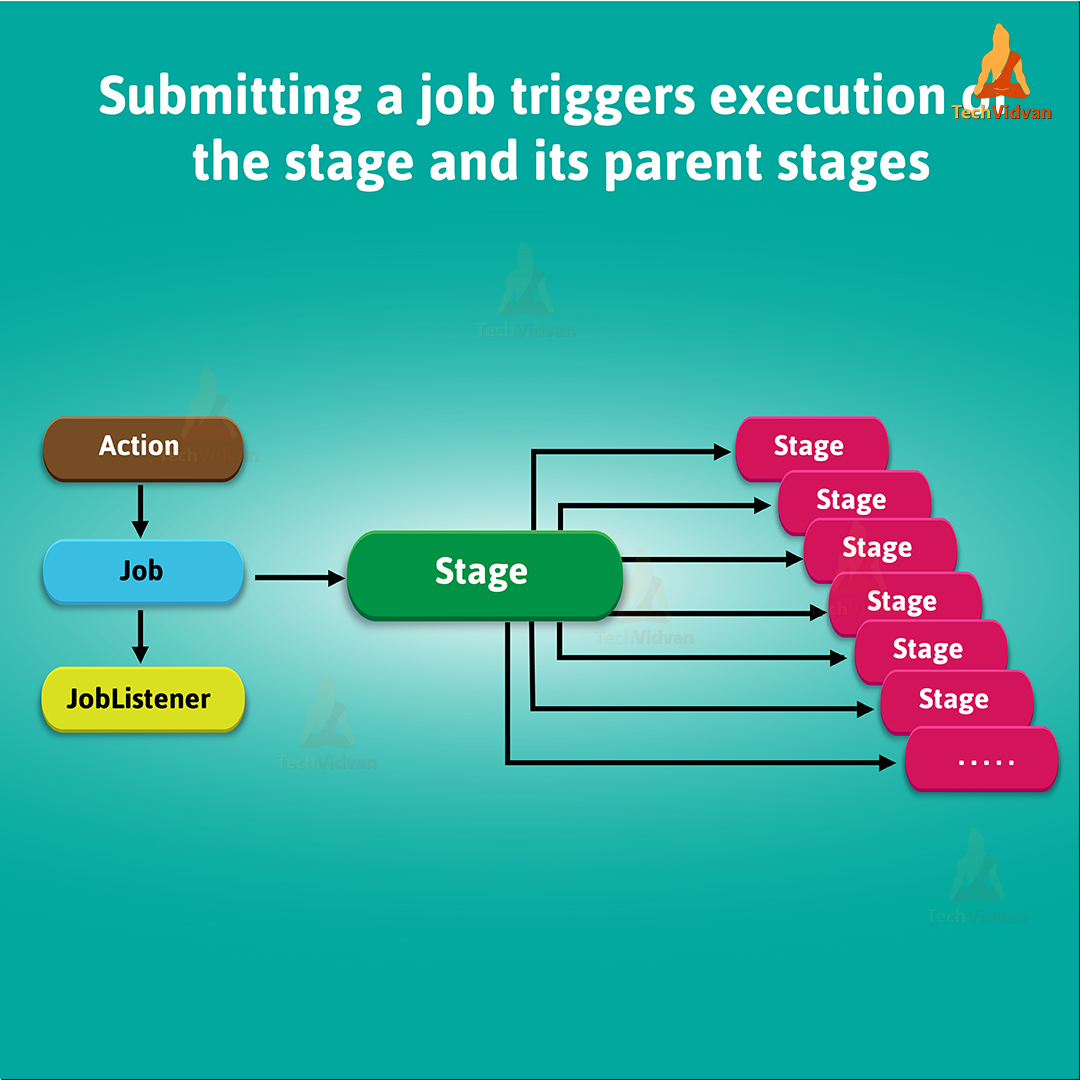

Apache Spark Stage Physical Unit Of Execution Techvidvan

A Spark application goes through a series of stages from its creation to completion. Understanding this life cycle is crucial for optimizing performance, debugging, and managing resources. Complex code is broken down into jobs, stages, and tasks through a DAG created before execution. To understand it all, we first need to understand a few key terms. In this article, we'll walk through Spark's execution hierarchy step by step: from jobs triggered by actions, to shuffle-driven stages, all the way down to tasks that run on individual cores.
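The job, stage, and task hierarchy can be sketched with a small toy model in plain Python. This is a simplification under stated assumptions, not how Spark's DAG scheduler is implemented: the lineage is a linear chain, each operation is flagged by hand as needing a shuffle or not, and `split_into_stages` and the partition counts are hypothetical. It illustrates two rules: a new stage starts at every shuffle, and each stage runs one task per partition.

```python
def split_into_stages(ops):
    """Group a linear chain of operations into stages.

    `ops` is a list of (name, needs_shuffle) pairs. A new stage starts
    whenever an operation needs a shuffle.
    """
    stages = [[]]
    for name, needs_shuffle in ops:
        if needs_shuffle and stages[-1]:
            stages.append([])
        stages[-1].append(name)
    return stages


# Assumed lineage of one job (the action that triggers it is not listed).
lineage = [
    ("textFile", False),
    ("flatMap", False),
    ("map", False),
    ("reduceByKey", True),   # shuffle: starts stage 1
    ("filter", False),
    ("repartition", True),   # shuffle: starts stage 2
    ("map", False),
]

stages = split_into_stages(lineage)

# Assumed partition counts per stage; each partition becomes one task.
partitions_per_stage = [8, 4, 2]
tasks_per_stage = list(partitions_per_stage)

for index, (ops, tasks) in enumerate(zip(stages, tasks_per_stage)):
    print(f"stage {index}: {' -> '.join(ops)} ({tasks} tasks)")
print("total tasks:", sum(tasks_per_stage))
```

Narrow operations such as `map` and `filter` stay in the same stage as the operation before them, which is why the seven operations above collapse into only three stages.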
