Apache Spark Stage Level Scheduling

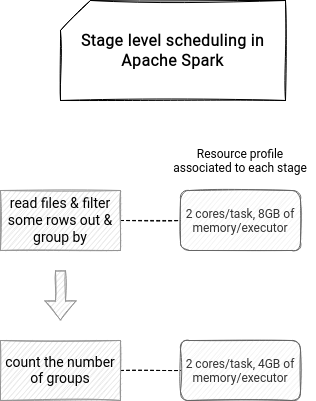

Spark’s scheduler is fully thread-safe, so several jobs can be submitted concurrently from separate threads. This lets an application serve multiple requests at once (e.g. queries for multiple users). By default, Spark’s scheduler runs jobs in FIFO order. Spark developers can also specify task and executor resource requirements at the stage level. The scheduler uses those requirements to acquire the necessary resources and executors, and it schedules tasks according to each stage's requirements.
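To make the per-stage requirements more concrete, here is a minimal Python sketch of how executor and task requests together bound the number of tasks that can run at once on one executor. It is a simplified model and not Spark's API: `StageProfile`, `task_slots_per_executor`, and the resource names and amounts are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class StageProfile:
    """Hypothetical stand-in for a per-stage resource profile (not Spark's API)."""
    executor_resources: Dict[str, float]  # what one executor offers
    task_resources: Dict[str, float]      # what one task needs


def task_slots_per_executor(profile: StageProfile) -> int:
    """Return how many tasks fit on one executor under this profile.

    Each requested resource limits concurrency independently, so the
    tightest one decides the number of slots.
    """
    slots = []
    for name, per_task in profile.task_resources.items():
        if per_task <= 0:
            continue  # a zero request places no limit
        available = profile.executor_resources.get(name, 0)
        slots.append(int(available // per_task))
    return min(slots) if slots else 0


# Assumed example: a CPU-only ETL stage and a GPU-backed training stage.
etl_stage = StageProfile({"cores": 8}, {"cores": 1})
ml_stage = StageProfile({"cores": 4, "gpu": 2}, {"cores": 1, "gpu": 1})

print(task_slots_per_executor(etl_stage))  # 8
print(task_slots_per_executor(ml_stage))   # 2
```

In this model the GPU-bound stage runs fewer concurrent tasks per executor than the CPU-only stage, which is the kind of difference that stage-level requirements let a single job express.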

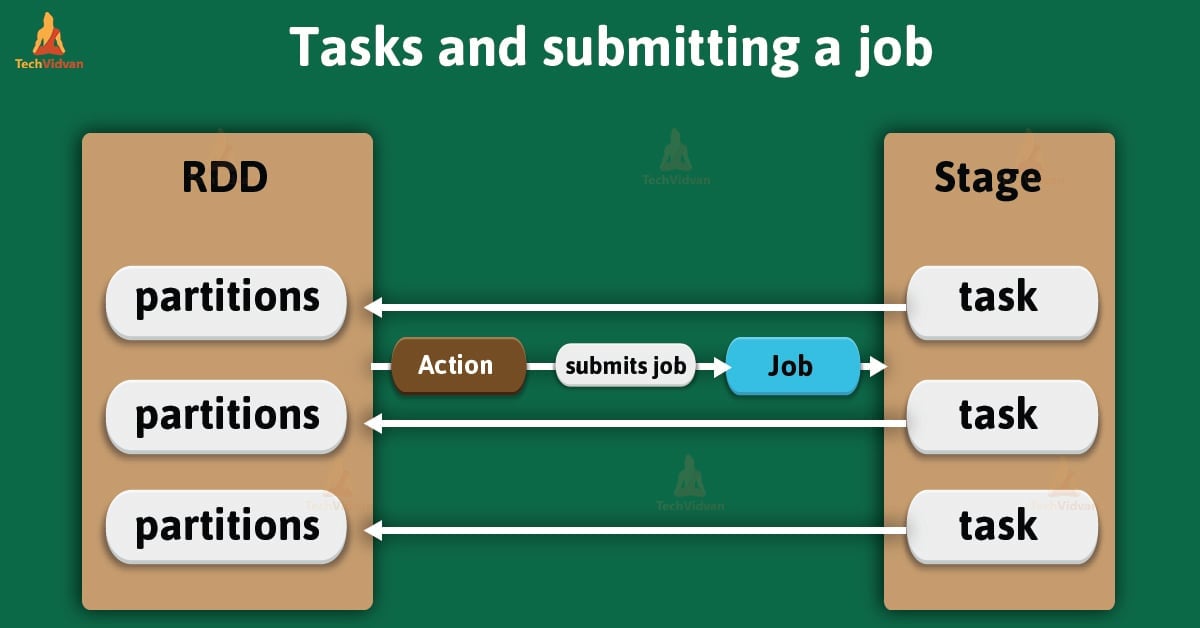

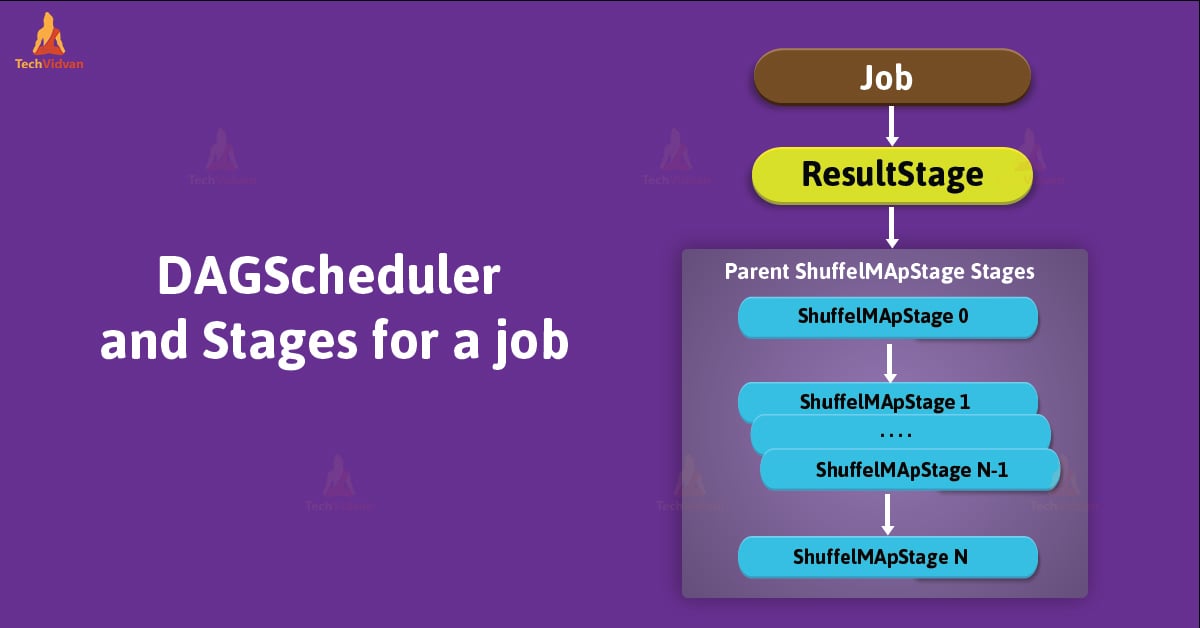

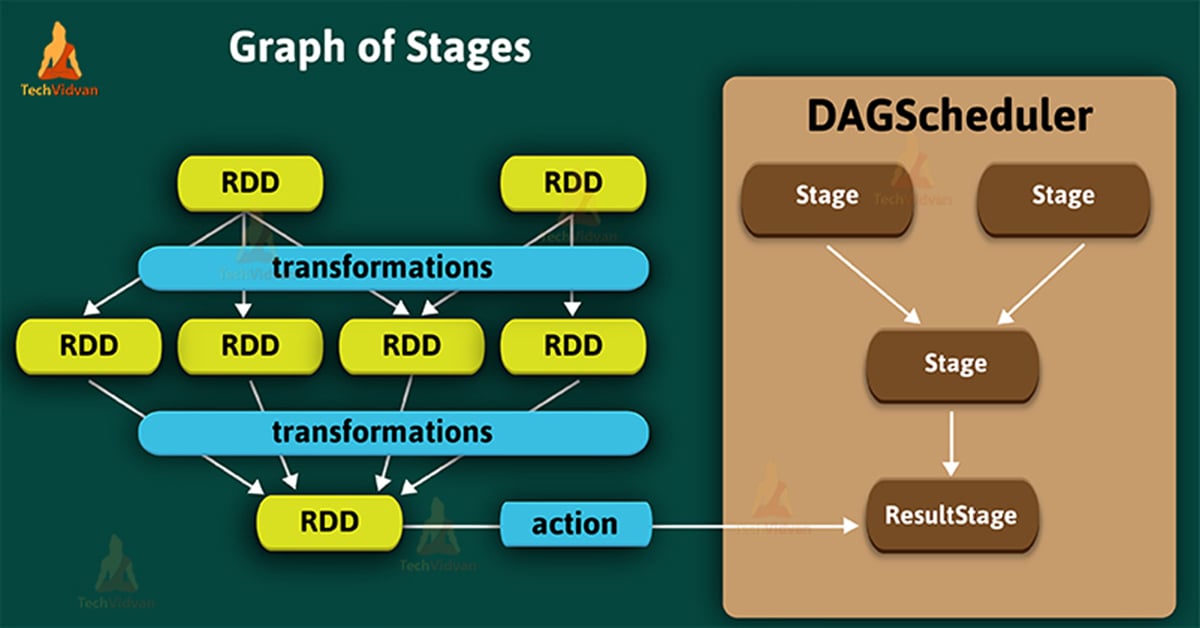

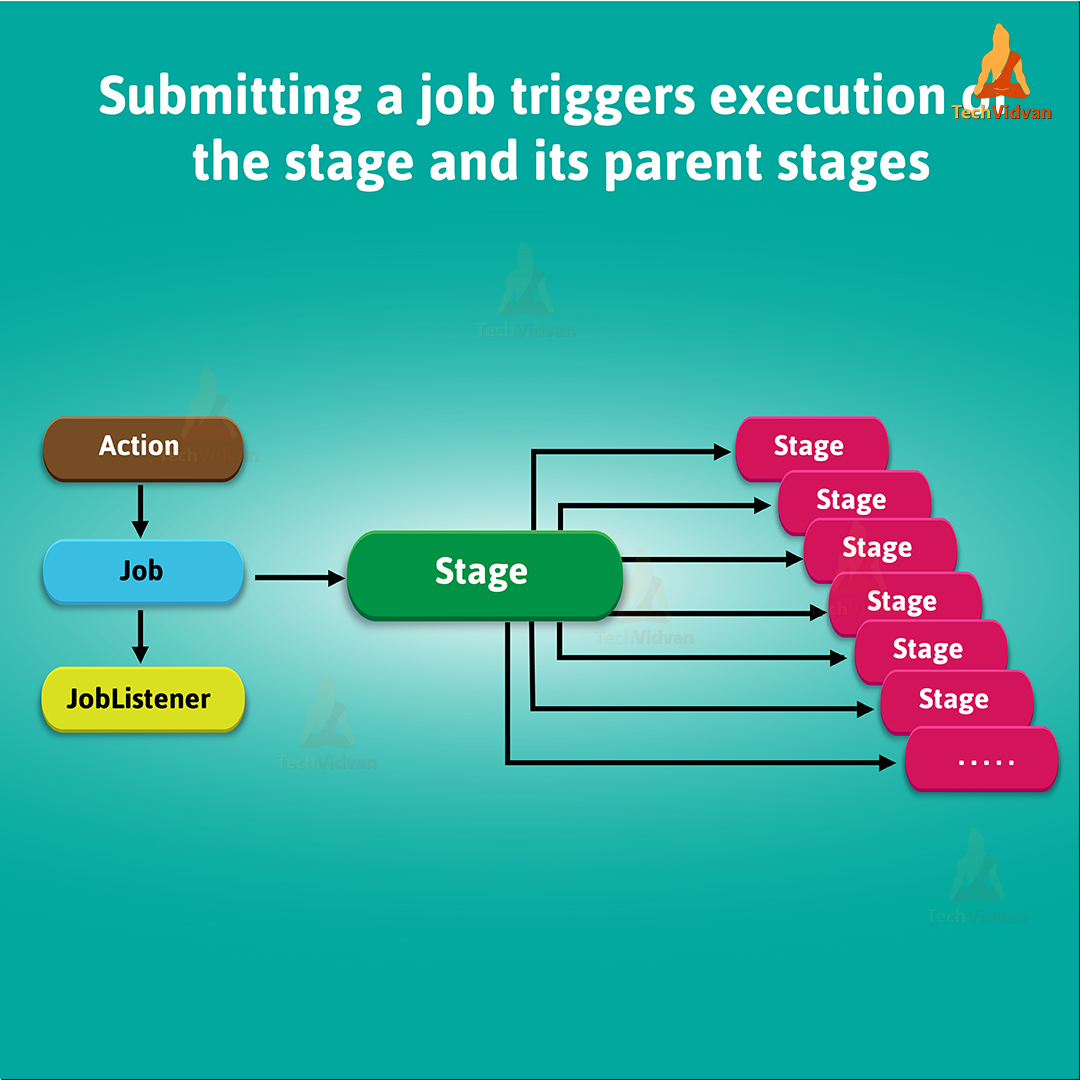

Stage-level scheduling defines the resources needed by each stage of an Apache Spark job. It is a relatively new feature: it was implemented only in version 3.1.1, and for two cluster managers, YARN and Kubernetes.

Spark also lets you set the number of threads it uses in several places. Prior to Spark 3.0, these thread configurations applied to all roles of Spark, such as driver, executor, worker, and master. From Spark 3.0, threads can be configured at a finer granularity, starting with the driver and executor.

Spark has a high-level scheduling layer called the DAG scheduler. Whenever an action is encountered, the DAG scheduler creates a collection of stages, keeps track of the RDDs involved in the job, and builds a plan based on the job's DAG.
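The stage-building step of the DAG scheduler mentioned above can be illustrated with a small Python sketch. It assumes a toy lineage model in which each dataset lists its parents together with a dependency kind: narrow dependencies stay in the same stage, while shuffle dependencies start a new parent stage. `Rdd`, `build_stages`, and the word-count lineage are hypothetical names used for illustration, and real Spark does considerably more than this.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Rdd:
    """Toy dataset node; each parent is (parent_rdd, "narrow" | "shuffle")."""
    name: str
    parents: List[Tuple["Rdd", str]] = field(default_factory=list)


def build_stages(final_rdd: Rdd) -> List[List[str]]:
    """Split a lineage into stages, listing parent stages before children."""
    stages: List[List[str]] = []
    built = set()  # stage roots already processed

    def visit(root: Rdd) -> None:
        if root.name in built:
            return
        built.add(root.name)
        members, shuffle_parents, seen = [], [], set()
        stack = [root]
        while stack:
            rdd = stack.pop()
            if rdd.name in seen:
                continue
            seen.add(rdd.name)
            members.append(rdd.name)
            for parent, kind in rdd.parents:
                if kind == "narrow":
                    stack.append(parent)            # same stage
                else:
                    shuffle_parents.append(parent)  # stage boundary
        for parent in shuffle_parents:
            visit(parent)          # parent stages must run first
        stages.append(members)

    visit(final_rdd)
    return stages


# Assumed word-count lineage: three narrow steps, then one shuffle.
lines = Rdd("textFile")
words = Rdd("flatMap", [(lines, "narrow")])
pairs = Rdd("map", [(words, "narrow")])
counts = Rdd("reduceByKey", [(pairs, "shuffle")])

stages = build_stages(counts)
print(stages)  # [['map', 'flatMap', 'textFile'], ['reduceByKey']]
```

The single shuffle in this lineage yields two stages, and the stage that produces the shuffle data is listed before the stage that consumes it.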

Stage-level scheduling and resource allocation in Apache Spark are also discussed as a way to enhance big data and AI integration. Resource requirements such as executors, memory, and accelerators can be stated per stage, with benefits like improved hardware utilization and simplified application pipelines.

The Spark scheduler is a core component of Apache Spark that is responsible for scheduling tasks for execution. It uses the high-level, stage-oriented DAGScheduler and the low-level, task-oriented TaskScheduler.

Delay scheduling: a simple technique for achieving locality and fairness in cluster scheduling. Note: if shuffle01, 02, and 03 are not on the same host as shuffle11, there is no need to retry. Note: if shuffle02 is on the same host as shuffle11, retry ShuffleMapTask2.


