Simplifying Distributed Deep Learning Model Inference Webinar

Join our webinar to learn how to simplify distributed deep learning model inference using Databricks, enhancing performance and scalability. For more information about Stanford's online artificial intelligence programs, visit stanford.io/ai. To learn more about enrolling in this course, visit:

Slide 14 Distributed Deep Learning Pdf Deep Learning Computer

The webinar Introduction to Anyscale and Ray AI Libraries is available on demand now. Going forward, each webinar will focus on real-world AI use cases and distributed computing challenges. In this session, we'll introduce llm-d, an open, Kubernetes-native framework for distributed inference. You'll learn how Red Hat is leading efforts across the community to shape llm-d into a scalable, operator-friendly platform for production GenAI. The release of Spark 3.4 introduces built-in APIs for distributed model training and model inference at scale, making it easier to integrate deep learning (DL) models into Spark data processing pipelines. Learn Kubernetes Weekly 180 just landed. In this edition you will find: 🔥 hidden infrastructure challenges in distributed LLM inference on Kubernetes 🎯 simplifying model serving with.
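The Spark 3.4 inference API mentioned above can be illustrated with a minimal sketch built on `predict_batch_udf` from `pyspark.ml.functions`. The linear "model" (`weight`, `bias`) and the helper name `build_inference_udf` are assumptions made for illustration only; a real pipeline would load an actual DL model inside `make_predict_fn`.

```python
def make_predict_fn():
    """Build the per-batch prediction function.

    Spark is expected to call this on the Python worker and cache the
    result, so an expensive model load would go here. The 'model' below
    is a hypothetical stand-in, not a real network.
    """
    weight, bias = 2.0, 1.0  # placeholder for loaded model parameters

    def predict(inputs):
        # With a single numeric input column, Spark passes one batch
        # as a 1-D NumPy array; the arithmetic broadcasts over it.
        return inputs * weight + bias

    return predict


def build_inference_udf(batch_size=64):
    """Wrap make_predict_fn in a Spark UDF (assumes pyspark >= 3.4)."""
    # Imported lazily so the prediction logic can be checked without Spark.
    from pyspark.ml.functions import predict_batch_udf
    from pyspark.sql.types import DoubleType

    return predict_batch_udf(
        make_predict_fn,
        return_type=DoubleType(),
        batch_size=batch_size,
    )
```

On a cluster, something like `df.withColumn("prediction", build_inference_udf()("x"))` would apply the model in batches, assuming `df` has a numeric column named `x`.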

Webinar Intel AI Presents Distributed Deep Learning Using Horovod

Throughout the tutorial, we include several hands-on exercises to enable attendees to gain first-hand experience of running distributed DL training and inference on a modern GPU cluster. He works on advanced parallelization techniques to accelerate deep neural network training and optimize HPC resource utilization, covering a range of DL applications, including supervised learning, semi-supervised learning, and hyperparameter optimization. Sequential learning, a distributed learning paradigm, is chosen to train the AI models instead, where data is assumed to be distributed across multiple nodes, and a single copy of the model is moved between the nodes for local training. In this workshop, we want to focus on the network side of such distributed setups, the unique challenges it presents, and novel solutions for optimizing collaborative and distributed learning tasks.
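The sequential learning paradigm described above, in which a single model copy visits each node in turn and trains on that node's local data, can be sketched in a few lines. This is a toy illustration under stated assumptions: the model is a one-dimensional linear fit, the "nodes" are plain Python lists, and all function names (`local_train`, `sequential_learning`, `make_shards`) are hypothetical rather than taken from any library.

```python
import random


def local_train(weights, shard, lr=0.05, epochs=20):
    """Run plain SGD for the model y = w*x + b on one node's local shard."""
    w, b = weights
    for _ in range(epochs):
        for x, y in shard:
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return (w, b)


def sequential_learning(shards, rounds=5):
    """Move one model copy from node to node; raw data never leaves a node."""
    weights = (0.0, 0.0)
    for _ in range(rounds):
        for shard in shards:
            # Only the weights travel; each node trains on its own data.
            weights = local_train(weights, shard)
    return weights


def make_shards(num_nodes=3, per_node=20, seed=0):
    """Create synthetic per-node data drawn from y = 3x + 1 (no noise)."""
    rng = random.Random(seed)
    shards = []
    for _ in range(num_nodes):
        shard = []
        for _ in range(per_node):
            x = rng.uniform(-1.0, 1.0)
            shard.append((x, 3.0 * x + 1.0))
        shards.append(shard)
    return shards


if __name__ == "__main__":
    w, b = sequential_learning(make_shards())
    print(f"learned w={w:.4f}, b={b:.4f}")
```

Because only the weights move between nodes, communication per hop is the size of the model rather than the size of the data, which is one reason the network side of such setups matters.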

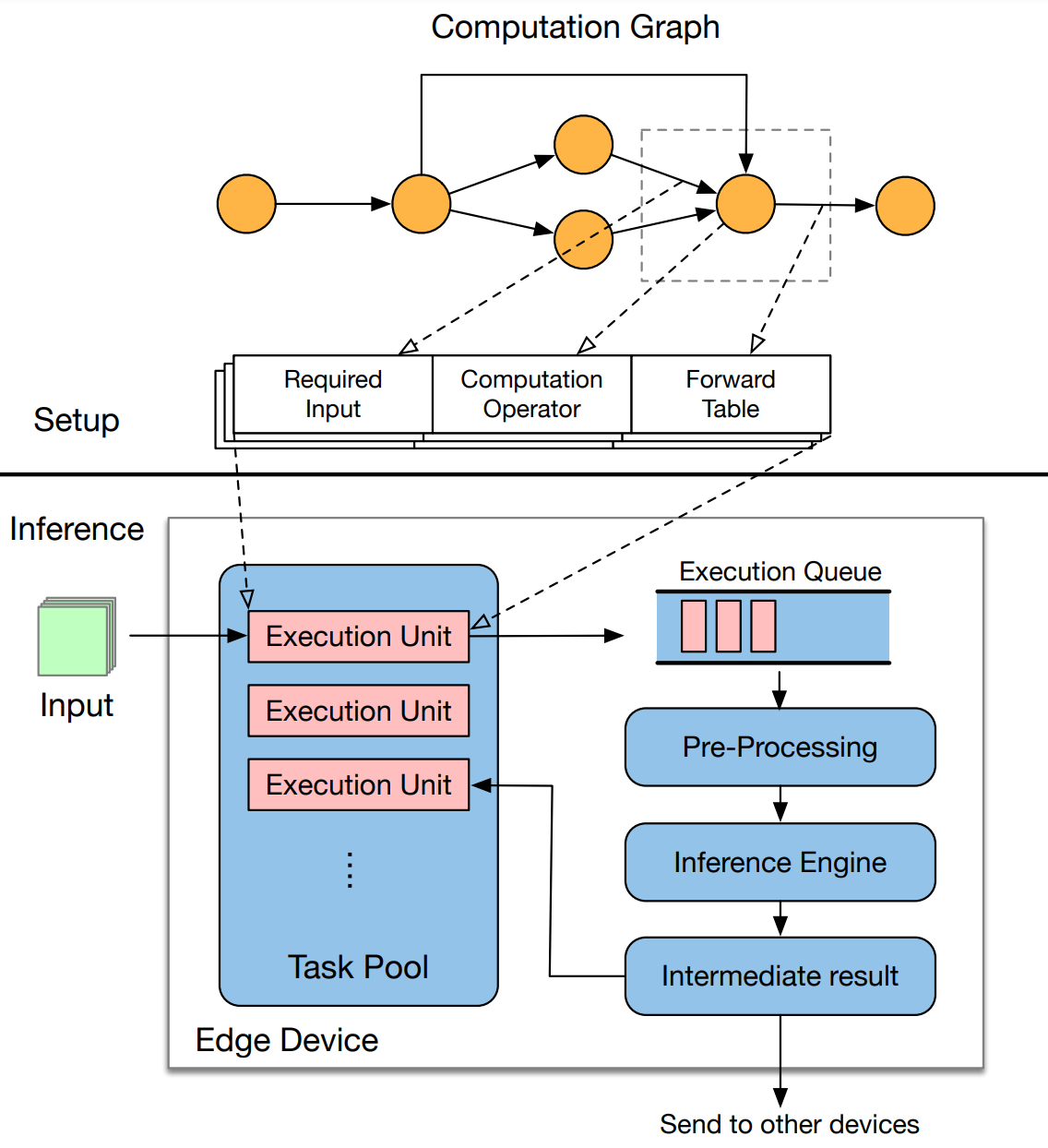

Distributed Inference Of Deep Learning Models Iqua
