Distributed Inference Of Deep Learning Models Iqua

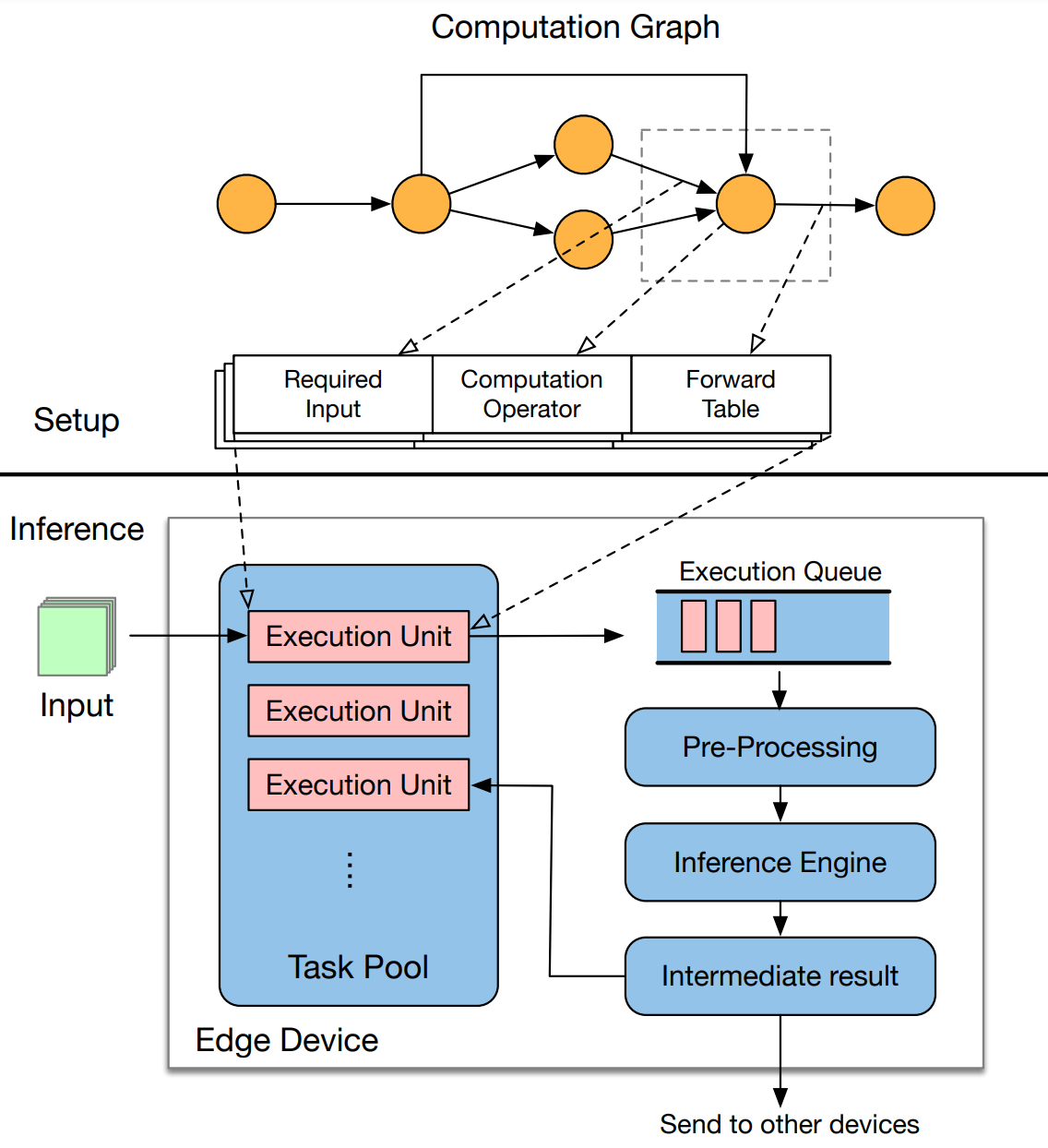

Distrifusion Distributed Parallel Inference For High Resolution

In this work, we propose EdgeFlow, a new distributed inference mechanism specifically designed for general DAG-structured deep learning models. In this section, we briefly introduce the structure of deep learning models and their inference process, and show how inference can be distributed among edge devices.
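To make the notion of a DAG-structured model concrete, here is a minimal sketch of inference over a small layer graph, where each layer runs only after the layers it depends on. The layer names (`conv1`, `branch_a`, `branch_b`, `add`) and the toy arithmetic "layers" are assumptions made for illustration; they do not come from EdgeFlow or any real model.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical toy model: maps each layer to the layers whose outputs it consumes.
deps = {
    "conv1": [],
    "branch_a": ["conv1"],
    "branch_b": ["conv1"],
    "add": ["branch_a", "branch_b"],
}

# Stand-in computations; a real model would run tensor operations here.
ops = {
    "conv1": lambda x: x * 2,
    "branch_a": lambda h: h + 1,
    "branch_b": lambda h: h * 3,
    "add": lambda a, b: a + b,
}


def run_dag(deps, ops, model_input):
    """Run every layer in an order that respects the dependency graph."""
    results = {}
    for name in TopologicalSorter(deps).static_order():
        preds = deps[name]
        # Layers without predecessors read the model input directly.
        args = [results[p] for p in preds] if preds else [model_input]
        results[name] = ops[name](*args)
    return results


outputs = run_dag(deps, ops, 5)
print(outputs["add"])
```

The two branches depend only on `conv1`, so in a distributed setting they could run on different devices as long as both results reach `add`.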

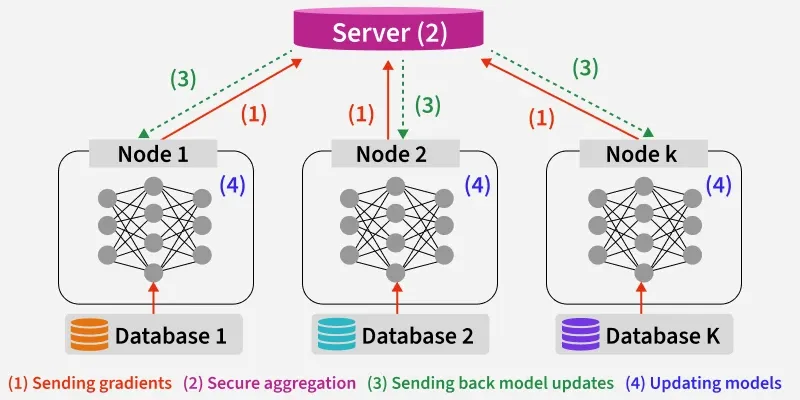

Embedded distributed inference of neural networks has emerged as a promising approach for deploying machine learning models on resource-constrained devices in an efficient and scalable manner.

‣ The model structure significantly affects the performance of existing distributed inference systems.
‣ EdgeFlow breaks each layer into execution units and maintains the complicated layer dependencies by controlling the flow of intermediate results.

“Distributed Inference with Deep Learning Models across Heterogeneous Edge Devices,” in the Proceedings of IEEE INFOCOM 2022, Virtual Conference, May 2–5, 2022.

Recent years have witnessed increasing research attention on deploying deep learning models on edge devices for inference. Due to limited capabilities and power c.
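The idea of breaking a layer into execution units can be sketched with a one-dimensional convolution whose output is split into contiguous ranges, one per device. This is an illustrative assumption, not EdgeFlow's partitioning algorithm: the function names, the `weights` parameter (a stand-in for relative device capability), and the proportional split rule are all hypothetical.

```python
def conv1d_valid(x, kernel):
    """Plain 1-D convolution without padding (cross-correlation form)."""
    k = len(kernel)
    return [sum(x[i + j] * kernel[j] for j in range(k))
            for i in range(len(x) - k + 1)]


def split_ranges(n_out, weights):
    """Split output indices [0, n_out) into contiguous ranges proportional to weights."""
    total = sum(weights)
    bounds = [0]
    acc = 0
    for w in weights:
        acc += w
        bounds.append(round(n_out * acc / total))
    return [(bounds[i], bounds[i + 1]) for i in range(len(weights))]


def run_units(x, kernel, weights):
    """Compute the layer as independent units, then concatenate their outputs."""
    k = len(kernel)
    n_out = len(x) - k + 1
    pieces = []
    for start, end in split_ranges(n_out, weights):
        # Outputs [start, end) only need inputs [start, end + k - 1),
        # so each unit receives just that slice of the intermediate result.
        x_slice = x[start:end + k - 1]
        pieces.extend(conv1d_valid(x_slice, kernel))
    return pieces


x = list(range(10))
kernel = [1, 0, -1]
weights = [1, 2, 1]  # hypothetical relative speeds of three devices

assert run_units(x, kernel, weights) == conv1d_valid(x, kernel)
```

Each unit needs a slice that overlaps its neighbour by `len(kernel) - 1` elements, which is why the flow of intermediate results between units has to be managed explicitly.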

Distributed Inference César A Uribe

This paper presents EdgeFlow, a new distributed inference mechanism designed for general DAG-structured deep learning models, which partitions model layers into independent execution units with a new progressive model partitioning algorithm.

In this work, we consider a novel multimodal DNN based on the convolutional neural network architecture and explore several different ways to optimize its performance when training is executed on an Apache Spark cluster.

In this paper, we focus on two key problems impacting deployment of distributed inference (DI) models on SC: resource allocation and cold start latency. To address the two problems, we propose a hybrid scheduler for identifying the optimal server resource allocation policy.

Abstract: Deep neural networks (DNNs) have established themselves as a fundamental tool in numerous computational modeling applications, having bypassed the critical step of feature extraction. Despite their capabilities in modeling, training large scale DNN models is a very.


Github Aydanpirani Distributed Ml Inference A Simple Distributed

Distributed Deep Learning Geeksforgeeks
