Explaining Explainability: Understanding Concept Activation Vectors

One popular method for finding concepts is concept activation vectors (CAVs), which are learnt using a probe dataset of concept exemplars. In this work, we investigate three properties of CAVs.
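As a rough illustration of how a CAV can be learnt from a probe dataset, the sketch below fits a linear classifier that separates activations of concept exemplars from activations of random examples, following the concept-versus-random setup of Kim et al. (2018), and takes the normalised weight vector as the CAV. The probe here is logistic regression trained with plain gradient descent in NumPy; that choice, the function name `learn_cav`, and its parameters are assumptions for illustration, not the paper's exact implementation. The activations are synthetic stand-ins for a real layer's outputs.

```python
import numpy as np


def learn_cav(concept_acts, random_acts, lr=0.1, epochs=500, seed=0):
    """Hypothetical helper: fit a logistic-regression probe that separates
    concept activations (label 1) from random activations (label 0) and
    return its unit-norm weight vector as the CAV."""
    x = np.vstack([concept_acts, random_acts])
    y = np.concatenate([np.ones(len(concept_acts)), np.zeros(len(random_acts))])
    rng = np.random.default_rng(seed)
    w = rng.normal(scale=0.01, size=x.shape[1])
    b = 0.0
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(x @ w + b)))  # predicted P(concept | activation)
        err = p - y                              # gradient of log-loss w.r.t. the logit
        w -= lr * (x.T @ err) / len(y)           # full-batch gradient descent step
        b -= lr * err.mean()
    return w / np.linalg.norm(w)                 # CAV = unit normal of the decision boundary


# Synthetic "layer activations": the concept examples are shifted along axis 0.
rng = np.random.default_rng(1)
concept = rng.normal(size=(100, 16))
concept[:, 0] += 3.0
random_examples = rng.normal(size=(100, 16))
cav = learn_cav(concept, random_examples)
print(cav[:3])
```

Because the synthetic concept differs from the random examples only along the first axis, the learnt CAV should point mostly along that axis. With real models, the activations would come from a chosen layer and the probe could equally be a linear SVM.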

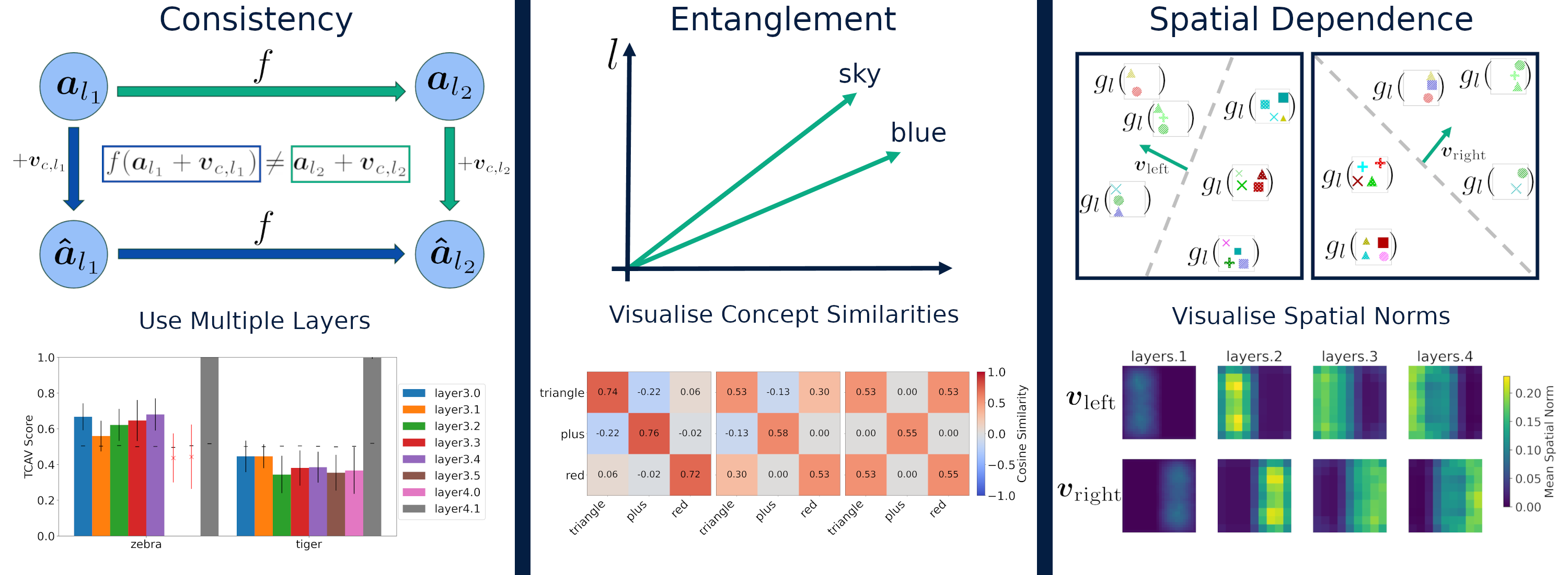

CAVs may be (1) inconsistent between layers, (2) entangled with different concepts, and (3) spatially dependent. Each property provides both challenges and opportunities in interpreting models. The paper explores the decision-making processes of deep learning models, focusing on CAVs to understand model behavior, consistency, entanglement, and spatial dependence.

Explainable AI: Testing with Concept Activation Vectors (TCAV). Let's start with the main goal of explainable artificial intelligence (XAI) to motivate TCAV: "interpretability," i.e., …
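Two of these properties can be probed with simple diagnostics, sketched below under stated assumptions. For entanglement, one assumed check is the cosine similarity between the CAVs of two different concepts: a high value suggests the vectors are not capturing the concepts independently. For spatial dependence, if a CAV is learnt on flattened convolutional activations of shape (C, H, W), its weights can be reshaped back to that shape and the norm at each spatial position shows where the CAV places its weight. The helper names, the C-then-H-then-W flattening order, and the toy vectors are hypothetical and not taken from the paper.

```python
import numpy as np


def cosine_similarity(u, v):
    """Cosine of the angle between two CAVs (1 = same direction, 0 = orthogonal)."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))


def spatial_norm_map(cav, channels, height, width):
    """Reshape a CAV learnt on activations flattened in (C, H, W) order and
    return the L2 norm of its weights at each spatial position, shape (H, W)."""
    return np.linalg.norm(cav.reshape(channels, height, width), axis=0)


# Hypothetical CAVs for two concepts in a 3-dimensional activation space.
cav_a = np.array([1.0, 0.0, 0.0])
cav_b = np.array([1.0, 1.0, 0.0])
print(cosine_similarity(cav_a, cav_b))  # about 0.7071

# Hypothetical CAV whose weight is concentrated in the top-left spatial cell.
c, h, w = 4, 3, 3
weights = np.zeros((c, h, w))
weights[:, 0, 0] = 1.0
m = spatial_norm_map(weights.reshape(-1), c, h, w)
print(m[0, 0], m[1, 1])  # 2.0 0.0
```

A map that is far from uniform indicates the CAV responds to where a concept appears in the input, not only to whether it appears.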

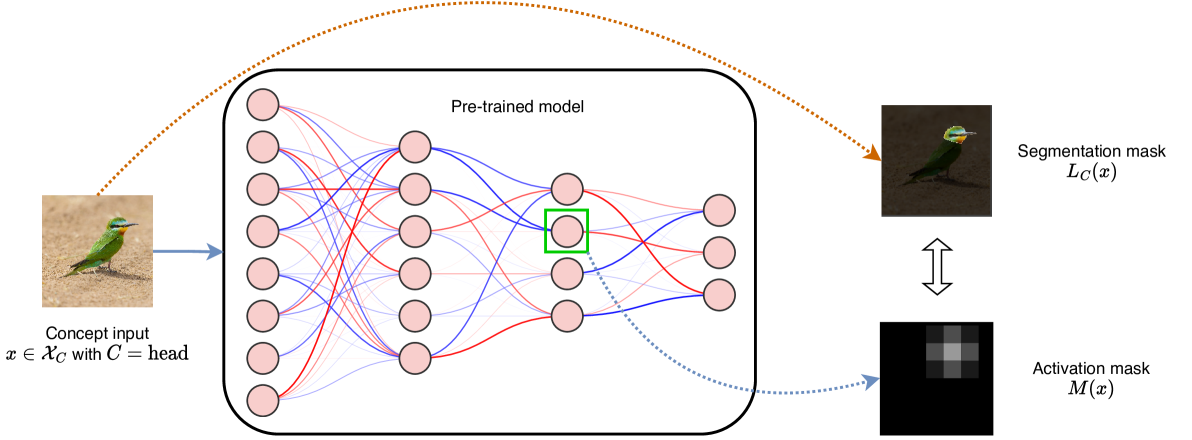

Concept-based explainability methods aim to study the representations learned by deep neural networks in relation to human-understandable concepts. Here, CAVs are an important tool and can be used to test whether a model has learned a concept. A CAV is a linear representation of a concept found in the activation space of a specific layer, learnt using a probe dataset of concept examples (Kim et al., 2018).
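In TCAV (Kim et al., 2018), a CAV is used to test a model by measuring conceptual sensitivity: the directional derivative of a class logit with respect to a layer's activations, taken along the CAV. The TCAV score is the fraction of inputs from that class whose sensitivity is positive. The sketch below computes this score for a hypothetical two-layer ReLU head standing in for the part of the network above the chosen layer, with the gradient written out by hand; a real model would use automatic differentiation instead. It also omits the statistical testing against random CAVs that TCAV performs.

```python
import numpy as np


def logit_grad(acts, w1, w2, k):
    """Gradient of the class-k logit w.r.t. layer activations for a toy head
    logits = w2 @ relu(w1 @ a). acts has shape (n, d); returns shape (n, d)."""
    pre = acts @ w1.T                     # hidden pre-activations, shape (n, hidden)
    relu_grad = (pre > 0).astype(float)   # derivative of ReLU at each hidden unit
    return (relu_grad * w2[k]) @ w1       # chain rule back to the activations


def tcav_score(acts, cav, w1, w2, k):
    """Fraction of class-k inputs whose directional derivative along the CAV is positive."""
    sensitivities = logit_grad(acts, w1, w2, k) @ cav
    return float(np.mean(sensitivities > 0))


# Hypothetical weights and activations, chosen so the result is easy to check by hand.
w1 = np.eye(2)
w2 = np.eye(2)
acts = np.array([[1.0, 1.0], [2.0, 1.0], [-1.0, 1.0], [3.0, 0.5]])
print(tcav_score(acts, np.array([1.0, 0.0]), w1, w2, k=0))  # 0.75
```

Here the third input has a negative first activation, so the ReLU blocks the gradient and its sensitivity is zero, which is why three of the four inputs count as positive.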

