GitHub - giovannimaffei: Concept Activation Vectors, a Simple Implementation

Simple implementation of the explainability method presented in "Interpretability Beyond Feature Attribution: Quantitative Testing with Concept Activation Vectors (TCAV)", Been Kim et al., 2017.

Concept activation vectors (CAVs) are computed from hidden-layer activations of inputs that belong either to a concept class or to non-concept examples. Adopting a probabilistic perspective, the distribution of the (non-)concept inputs induces a distribution over the CAV, making it a random vector in the latent space.
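
As a rough illustration of both ideas, the sketch below trains a CAV as the unit-normalized weight vector of a linear classifier separating concept activations from non-concept activations, then repeats the fit with fresh non-concept draws to show the CAV varying like a random vector. It assumes plain logistic regression fitted by full-batch gradient descent (a common choice for the linear probe, not necessarily what the repository uses), and the function `train_cav` and the synthetic Gaussian "activations" are hypothetical stand-ins for real hidden-layer outputs.

```python
import numpy as np


def train_cav(concept_acts, random_acts, lr=0.1, epochs=500):
    """Hypothetical helper: fit a logistic-regression probe that separates
    concept activations (label 1) from non-concept activations (label 0)
    and return its weight vector, normalized to unit length, as the CAV."""
    X = np.vstack([concept_acts, random_acts])
    y = np.concatenate([np.ones(len(concept_acts)), np.zeros(len(random_acts))])
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        # Numerically stable sigmoid: 1 / (1 + exp(-z)) == 0.5 * (1 + tanh(z / 2)).
        p = 0.5 * (1.0 + np.tanh(0.5 * (X @ w + b)))
        # Full-batch gradient step on the mean binary cross-entropy loss.
        w -= lr * (X.T @ (p - y)) / len(y)
        b -= lr * np.mean(p - y)
    # The CAV is the normal to the decision boundary, pointing toward the concept.
    return w / np.linalg.norm(w)


# Hypothetical synthetic activations: the concept shifts dimension 0 by 3.
rng = np.random.default_rng(0)
dim = 16
concept_dir = np.zeros(dim)
concept_dir[0] = 1.0
concept_acts = rng.normal(size=(100, dim)) + 3.0 * concept_dir

# Each fresh draw of non-concept examples yields a slightly different CAV,
# so the CAV behaves like a random vector in the latent space.
cavs = np.array([
    train_cav(concept_acts, rng.normal(size=(100, dim)))
    for _ in range(10)
])
mean_cav = cavs.mean(axis=0)
alignment = cavs @ concept_dir  # cosine of each unit-norm CAV with the true shift
```

The spread of `cavs` (for example, their pairwise cosines) is one simple way to look at the CAV distribution; `mean_cav` has norm below 1 whenever the individual CAVs disagree.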

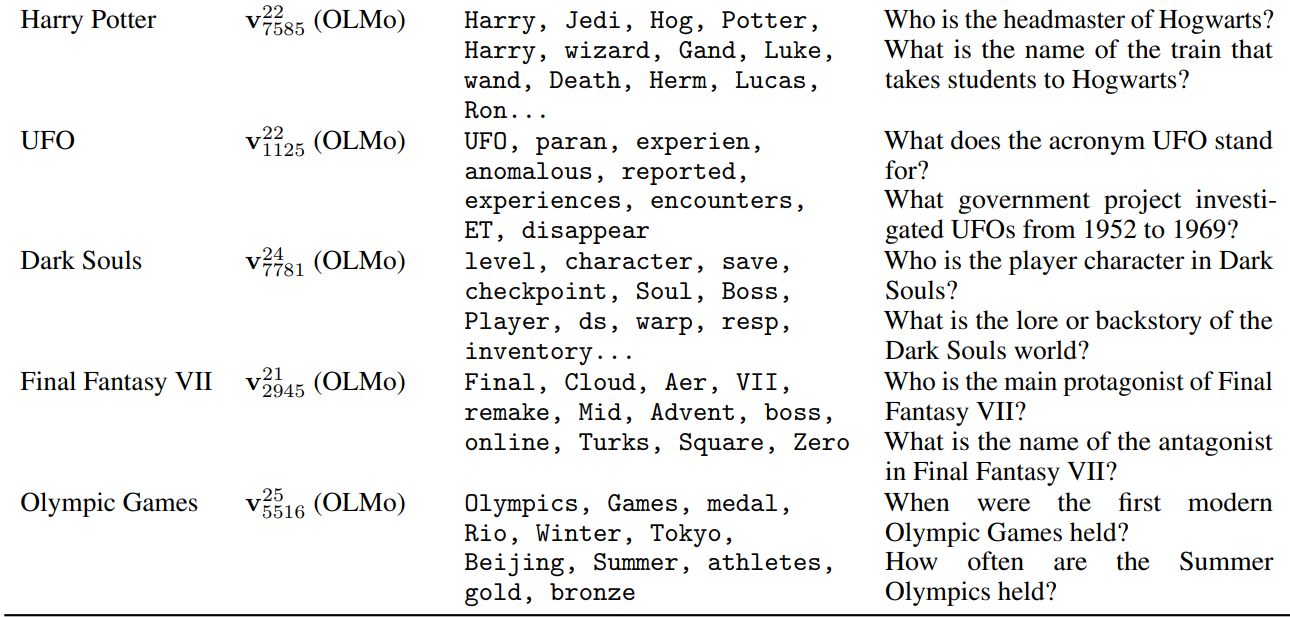

Concept activation vectors (CAVs) are an important tool for identifying whether a model has learned a concept. However, the computational cost and time required by existing CAV computation methods pose a significant challenge, particularly in large-scale, high-dimensional architectures.

Concepts are formatted and represented as input tensors and do not need to be part of the training or test datasets; they are incorporated into the importance-score computations through CAVs. CAVs enable interpretability of a model with respect to human concepts, although generating them requires the costly step of curating positive and negative examples for each concept one wishes to encode.

Testing with Concept Activation Vectors (TCAV) is an interpretability method for understanding which signals a neural network model uses for its predictions. What's special about TCAV compared to other methods?
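
The importance score that CAVs feed into can be sketched as follows. Following the definition in Kim et al. (2017), the conceptual sensitivity of class $k$ to concept $C$ at layer $l$ is the directional derivative $S_{C,k,l}(x) = \nabla h_{l,k}(f_l(x)) \cdot v_C^l$, and the TCAV score is the fraction of class-$k$ inputs with $S_{C,k,l}(x) > 0$. To stay self-contained, this sketch assumes a hypothetical two-layer head `h(a) = W2 @ relu(W1 @ a)` whose gradient is written out by hand; with a real network the gradient would come from an autodiff framework, and the helper names `logit_grad` and `tcav_score` are illustrative, not the repository's API.

```python
import numpy as np


def logit_grad(a, W1, W2, k):
    """Gradient of logit k of a hypothetical head h(a) = W2 @ relu(W1 @ a)
    with respect to the layer activation a (computed analytically)."""
    active = (W1 @ a > 0).astype(float)  # ReLU derivative: 1 where a unit is on
    return W1.T @ (W2[k] * active)


def tcav_score(acts, cav, W1, W2, k):
    """Fraction of class-k inputs whose directional derivative of logit k
    along the CAV is positive, i.e. moving toward the concept raises logit k."""
    derivs = np.array([logit_grad(a, W1, W2, k) @ cav for a in acts])
    return float(np.mean(derivs > 0))


# Hypothetical random head and activations, only to exercise the functions.
rng = np.random.default_rng(1)
dim, hidden, n_classes = 8, 32, 3
W1 = rng.normal(size=(hidden, dim))
W2 = rng.normal(size=(n_classes, hidden))
acts = rng.normal(size=(40, dim))  # stand-ins for f_l(x) with x in class k
cav = rng.normal(size=dim)
cav /= np.linalg.norm(cav)
score = tcav_score(acts, cav, W1, W2, k=0)
```

Because the score only counts signs, it ignores how strongly each input responds; flipping the CAV's sign turns every strictly positive derivative negative, so the two scores sum to at most 1.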
